6 Nonparametric tests

This chapter overviews some well-known nonparametric hypothesis tests.195 The reviewed tests are intended for different purposes, mostly related to: (i) the evaluation of the goodness-of-fit of a distribution model to a dataset; and (ii) the assessment of the relation between two random variables.

A nonparametric test evaluates a null hypothesis \(H_0\) against an alternative \(H_1\) without assuming any parametric model, on neither \(H_0\) nor \(H_1.\) Consequently, a nonparametric test is free from the overhead of evaluating a parametric assumption that one needs to conduct before applying a parametric test.196 More importantly, it is quite likely that the inspection of these parametric assumptions has a negative outcome that forbids the subsequent application of a parametric test. The direct applicability and generality of nonparametric tests are the reasons for their usefulness in real-data applications.

Nonparametric tests have lower efficiency with respect to optimal parametric tests for specific parametric problems.197 Statistical inference is full of instances of such parametric tests, especially within the context of normal populations.198 For example, given two iid samples \(X_{11},\ldots,X_{1n_1}\) and \(X_{21},\ldots,X_{2n_2}\) from two normal populations \(X_1\sim\mathcal{N}(\mu_1,\sigma^2)\) and \(X_2\sim\mathcal{N}(\mu_2,\sigma^2),\) the test for the equality of the means,

\[\begin{align*} H_0:\mu_1=\mu_2\quad\text{vs.}\quad H_1:\mu_1\neq\mu_2, \end{align*}\]

is optimally carried out using the test statistic \(T_n:=\frac{\bar{X}_1-\bar{X}_2}{S\sqrt{1/n_1+1/n_2}},\) where \(S^2:=\frac{1}{n_1+n_2-2}\left(\sum_{i=1}^{n_1}(X_{1i}-\bar{X}_1)^2+\sum_{i=1}^{n_2}(X_{2i}-\bar{X}_2)^2\right)\) is the pooled sample variance. The distribution of \(T_n\) under \(H_0\) is \(t_{n_1+n_2-2},\) which is compactly denoted by \(T_n\stackrel{H_0}{\sim}t_{n_1+n_2-2}.\) For this result to hold, it is key that the two populations are indeed normally distributed, an assumption that may be unrealistic in practice. Recall that, under \(H_0,\) this test states the equality of distributions of \(X_1\) and \(X_2.\) A nonparametric alternative therefore is the Kolmogorov–Smirnov test for two samples, to be seen in Section 6.2. It evaluates if the distributions of \(X_1\sim F_1\) and \(X_2\sim F_2\) are equal:

\[\begin{align*} H_0:F_1=F_2\quad\text{vs.}\quad H_1:F_1\neq F_2. \end{align*}\]

Finally, the term goodness-of-fit refers to the statistical tests that check the adequacy of a model for explaining a sample. For example, a goodness-of-fit test allows answering if a normal model is “acceptable” to describe a given sample \(X_1,\ldots,X_n.\) Initially, the concept of goodness-of-fit test was proposed for distribution models, but it was later extended to regression199 and other statistical models,200 although such extensions are not addressed in this book.

6.1 Goodness-of-fit tests for distribution models

Assume that an iid sample \(X_1,\ldots,X_n\) from an arbitrary distribution \(F\) is given.201 We next address the one-sample problem of testing a statement about the unknown distribution \(F.\)

6.1.1 Simple hypothesis tests

We first address tests for the simple null hypothesis

\[\begin{align} H_0:F=F_0 \tag{6.1} \end{align}\]

against the most general alternative202

\[\begin{align*} H_1:F\neq F_0, \end{align*}\]

where here and henceforth “\(F\neq F_0\)” means that there exists at least one \(x\in\mathbb{R}\) such that \(F(x)\neq F_0(x),\) and \(F_0\) is a pre-specified, non-data-dependent distribution model. This latter aspect is very important:

If some parameters of \(F_0\) are estimated from the sample, the presented tests for (6.1) will not respect the significance level \(\alpha\) for which they are constructed, and as a consequence they will be highly conservative.203

Recall that the ecdf (1.1) of \(X_1,\ldots,X_n,\) \(F_n(x)=\frac{1}{n}\sum_{i=1}^n1_{\{X_i\leq x\}},\) is a nonparametric estimator of \(F,\) as seen in Section 1.6. Therefore, a measure of proximity of \(F_n\) (driven by the sample) and \(F_0\) (specified by \(H_0\)) will be indicative of the veracity of (6.1): a “large” distance between \(F_n\) and \(F_0\) evidences that \(H_0\) is likely to be false.204 \(\!\!^,\)205

The following three well-known goodness-of-fit tests arise from the same principle: considering as the test statistic a particular type of distance between the functions \(F_n\) and \(F_0.\)

6.1.1.1 Kolmogorov–Smirnov test

Test purpose. Given \(X_1,\ldots,X_n\sim F,\) it tests \(H_0: F=F_0\) vs. \(H_1: F\neq F_0\) consistently against all the alternatives in \(H_1.\)

-

Statistic definition. The test statistic uses the supremum distance206 \(\!\!^,\)207 between \(F_n\) and \(F_0\):

\[\begin{align*} D_n:=\sqrt{n}\sup_{x\in\mathbb{R}} \left|F_n(x)-F_0(x)\right|. \end{align*}\]

If \(H_0:F=F_0\) holds, then \(D_n\) tends to be small. Conversely, when \(F\neq F_0,\) larger values of \(D_n\) are expected, and the test rejects \(H_0\) when \(D_n\) is large.

-

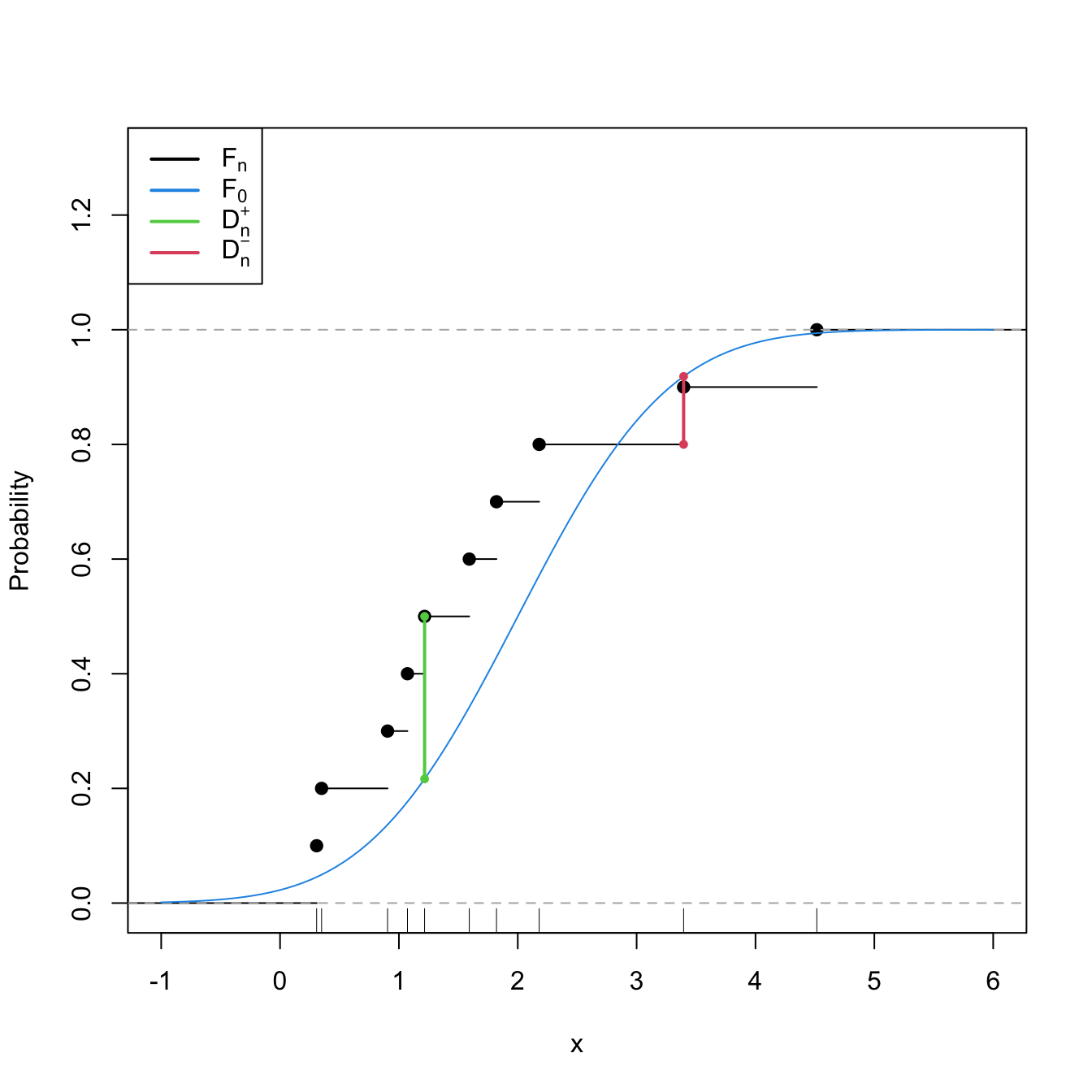

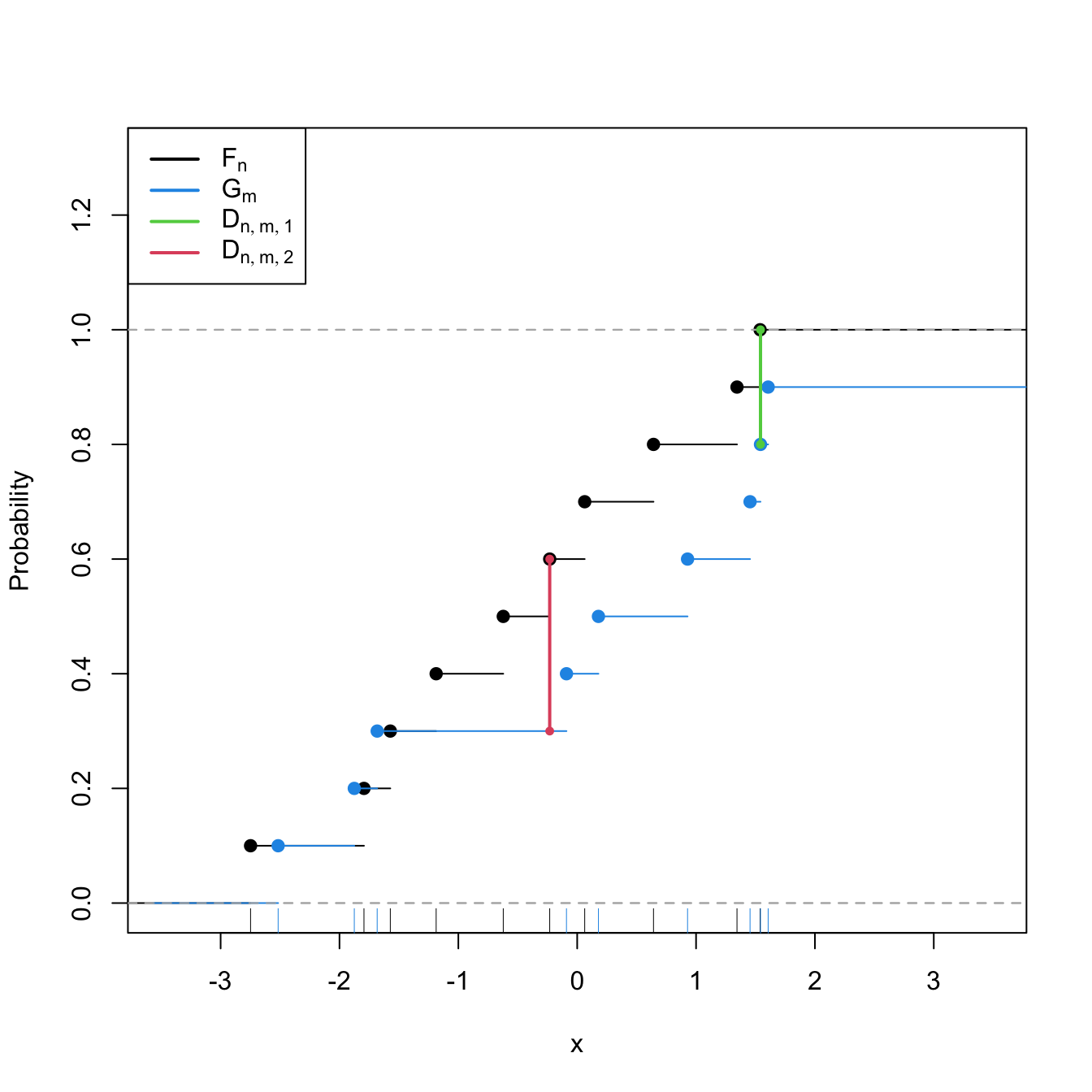

Statistic computation. The computation of \(D_n\) can be efficiently achieved by realizing that the maximum difference between \(F_n\) and \(F_0\) happens at \(x=X_i,\) for a certain \(X_i\) (observe Figure 6.1). From here, sorting the sample and applying the probability transformation \(F_0\) gives208

\[\begin{align} D_n=&\,\max(D_n^+,D_n^-),\tag{6.2}\\ D_n^+:=&\,\sqrt{n}\max_{1\leq i\leq n}\left\{\frac{i}{n}-U_{(i)}\right\},\nonumber\\ D_n^-:=&\,\sqrt{n}\max_{1\leq i\leq n}\left\{U_{(i)}-\frac{i-1}{n}\right\},\nonumber \end{align}\]

where \(U_{(j)}\) stands for the \(j\)-th sorted \(U_i:=F_0(X_i),\) \(i=1,\ldots,n.\)

-

Distribution under \(H_0.\) If \(H_0\) holds and \(F_0\) is continuous, then \(D_n\) has an asymptotic209 cdf given by the Kolmogorov–Smirnov’s \(K\)210 function:

\[\begin{align} \lim_{n\to\infty}\mathbb{P}[D_n\leq x]=K(x):=1-2\sum_{j=1}^\infty (-1)^{j-1}e^{-2j^2x^2}.\tag{6.3} \end{align}\]

Highlights and caveats. The Kolmogorov–Smirnov test is a distribution-free test because its distribution under \(H_0\) does not depend on \(F_0.\) However, this is the case only if \(F_0\) is continuous and the sample \(X_1,\ldots,X_n\) is also continuous, i.e., if the sample has no ties.211 If these assumptions are met, then the iid sample \(X_1,\ldots,X_n\stackrel{H_0}{\sim} F_0\) generates the iid sample \(U_1,\ldots,U_n\stackrel{H_0}{\sim} \mathcal{U}(0,1),\) with \(U_i:=F_0(X_i),\) \(i=1,\ldots,n.\) As a consequence, the distribution of (6.2) does not depend on \(F_0\).212 \(\!\!^,\)213 If \(F_0\) is not continuous or there are ties on the sample, the \(K\) function is not the true asymptotic cdf. An alternative for discrete \(F_0\) is given below. In case there are ties on the sample, a possibility is to slightly perturb the sample in order to remove such ties.214

Implementation in R. For continuous data and continuous \(F_0,\) the test statistic \(D_n\) and the asymptotic \(p\)-value215 are readily available through the

ks.test()function. The asymptotic cdf \(K\,\) is internally coded as thepkolmogorov1x()function within the source code ofks.test(). For discrete \(F_0,\) seedgof::ks.test().

The construction of the Kolmogorov–Smirnov test statistic is illustrated in the following chunk of code.

# Sample data

n <- 10

mu0 <- 2

sd0 <- 1

set.seed(54321)

samp <- rnorm(n = n, mean = mu0, sd = sd0)

# Fn vs. F0

plot(ecdf(samp), main = "", ylab = "Probability", xlim = c(-1, 6),

ylim = c(0, 1.3))

curve(pnorm(x, mean = mu0, sd = sd0), add = TRUE, col = 4)

# Add Dn+ and Dn-

samp_sorted <- sort(samp)

Ui <- pnorm(samp_sorted, mean = mu0, sd = sd0)

Dn_plus <- (1:n) / n - Ui

Dn_minus <- Ui - (1:n - 1) / n

i_plus <- which.max(Dn_plus)

i_minus <- which.max(Dn_minus)

lines(rep(samp_sorted[i_plus], 2),

c(i_plus / n, pnorm(samp_sorted[i_plus], mean = mu0, sd = sd0)),

col = 3, lwd = 2, pch = 16, type = "o", cex = 0.75)

lines(rep(samp_sorted[i_minus], 2),

c((i_minus - 1) / n, pnorm(samp_sorted[i_minus], mean = mu0, sd = sd0)),

col = 2, lwd = 2, pch = 16, type = "o", cex = 0.75)

rug(samp)

legend("topleft", lwd = 2, col = c(1, 4, 3, 2),

legend = latex2exp::TeX(c("$F_n$", "$F_0$", "$D_n^+$", "$D_n^-$")))

Figure 6.1: Computation of the Kolmogorov–Smirnov statistic \(D_n=\max(D_n^+,D_n^-)\) for a sample of size \(n=10\) coming from \(F_0(\cdot)=\Phi\left(\frac{\cdot-\mu_0}{\sigma_0}\right),\) where \(\mu_0=2\) and \(\sigma_0=1.\) In the example shown, \(D_n=D_n^+.\)

Exercise 6.1 Modify the parameters mu0 and sd0 in the previous code in order to have \(F\neq F_0.\) What happens with \(\sup_{x\in\mathbb{R}} \left|F_n(x)-F_0(x)\right|\)?

Let’s see an example of the use of ks.test() (also available as stats::ks.test()), the function in base R.

# Sample data from a N(0, 1)

n <- 50

set.seed(3245678)

x <- rnorm(n = n)

# Kolmogorov-Smirnov test for H_0: F = N(0, 1). Does not reject

(ks <- ks.test(x = x, y = "pnorm")) # In "y" we specify F0 as a function

##

## Exact one-sample Kolmogorov-Smirnov test

##

## data: x

## D = 0.050298, p-value = 0.9989

## alternative hypothesis: two-sided

# Structure of "htest" class

str(ks)

## List of 7

## $ statistic : Named num 0.0503

## ..- attr(*, "names")= chr "D"

## $ p.value : num 0.999

## $ alternative: chr "two-sided"

## $ method : chr "Exact one-sample Kolmogorov-Smirnov test"

## $ data.name : chr "x"

## $ data :List of 2

## ..$ x: num [1:50] 0.01911 0.0099 -0.01005 -0.02856 0.00282 ...

## ..$ y:function (q, mean = 0, sd = 1, lower.tail = TRUE, log.p = FALSE)

## $ exact : logi TRUE

## - attr(*, "class")= chr [1:2] "ks.test" "htest"

# Kolmogorov-Smirnov test for H_0: F = N(0.5, 1). Rejects

ks.test(x = x, y = "pnorm", mean = 0.5)

##

## Exact one-sample Kolmogorov-Smirnov test

##

## data: x

## D = 0.24708, p-value = 0.003565

## alternative hypothesis: two-sided

# Kolmogorov-Smirnov test for H_0: F = Exp(2). Strongly rejects

ks.test(x = x, y = "pexp", rate = 1/2)

##

## Exact one-sample Kolmogorov-Smirnov test

##

## data: x

## D = 0.53495, p-value = 6.85e-14

## alternative hypothesis: two-sidedThe following chunk of code shows how to call dgof::ks.test() to perform a correct Kolmogorov–Smirnov when \(F_0\) is discrete.

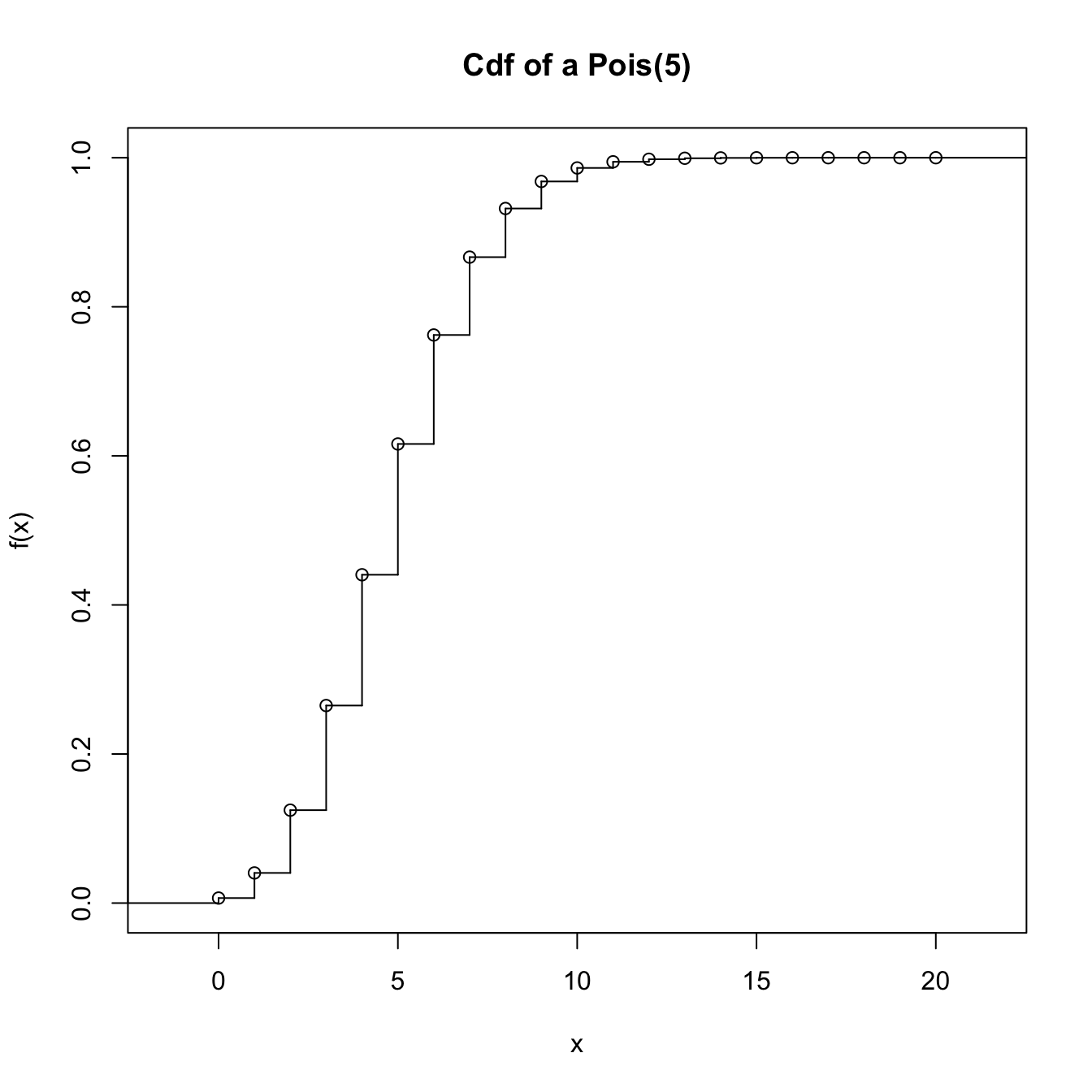

# Sample data from a Pois(5)

n <- 100

set.seed(3245678)

x <- rpois(n = n, lambda = 5)

# Kolmogorov-Smirnov test for H_0: F = Pois(5) without specifying that the

# distribution is discrete. Rejects (!?) giving a warning message

ks.test(x = x, y = "ppois", lambda = 5)

##

## Asymptotic one-sample Kolmogorov-Smirnov test

##

## data: x

## D = 0.20596, p-value = 0.0004135

## alternative hypothesis: two-sided

# We rely on dgof::ks.test(), which works as stats::ks.test() if the "y" argument

# is not marked as a "stepfun" object, the way the dgof package codifies

# discrete distribution functions

# Step function containing the cdf of the Pois(5). The "x" stands for the

# location of the steps and "y" for the value of the steps. "y" needs to have

# an extra point for the initial value of the function before the first step

x_eval <- 0:20

ppois_stepfun <- stepfun(x = x_eval, y = c(0, ppois(q = x_eval, lambda = 5)))

plot(ppois_stepfun, main = "Cdf of a Pois(5)")

# Kolmogorov-Smirnov test for H_0: F = Pois(5) adapted for discrete data,

# with data coming from a Pois(5)

dgof::ks.test(x = x, y = ppois_stepfun)

##

## One-sample Kolmogorov-Smirnov test

##

## data: x

## D = 0.032183, p-value = 0.9999

## alternative hypothesis: two-sided

# If data is normally distributed, the test rejects H_0

dgof::ks.test(x = rnorm(n = n, mean = 5), y = ppois_stepfun)

##

## One-sample Kolmogorov-Smirnov test

##

## data: rnorm(n = n, mean = 5)

## D = 0.38049, p-value = 5.321e-13

## alternative hypothesis: two-sidedExercise 6.2 Implement the Kolmogorov–Smirnov test by:

- Coding a function to compute the test statistic (6.2) from a sample \(X_1,\ldots,X_n\) and a cdf \(F_0.\)

- Implementing the \(K\) function (6.3).

- Calling the previous functions from a routine that returns the asymptotic \(p\)-value of the test.

Check that the implementations coincide with the ones of the ks.test() function when exact = FALSE for data simulated from a \(\mathcal{U}(0,1)\) and any \(n.\) Note: ks.test() computes \(D_n/\sqrt{n}\) instead of \(D_n.\)

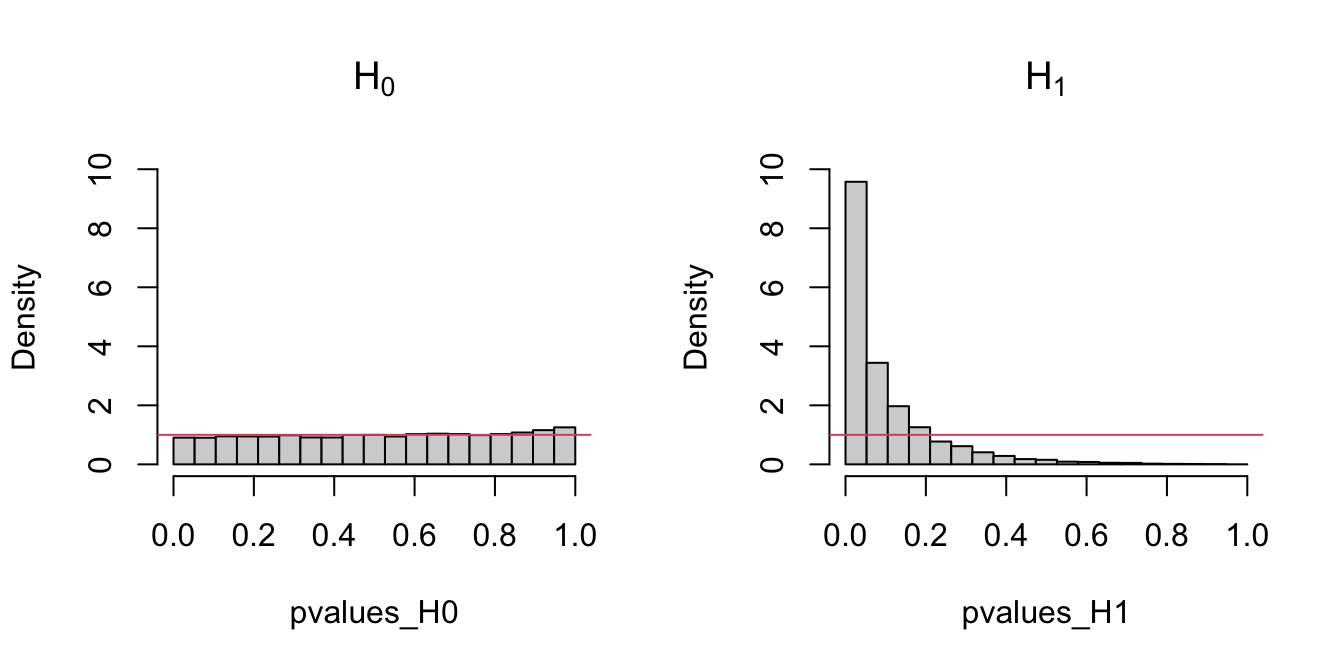

The following code chunk exemplifies with a small simulation study how the distribution of the \(p\)-values216 is uniform if \(H_0\) holds, and how it becomes more concentrated about \(0\) when \(H_1\) holds.

# Simulation of p-values when H_0 is true

set.seed(131231)

n <- 100

M <- 1e4

pvalues_H0 <- sapply(1:M, function(i) {

x <- rnorm(n) # N(0, 1)

ks.test(x, "pnorm")$p.value

})

# Simulation of p-values when H_0 is false -- the data does not

# come from a N(0, 1) but from a N(0, 2)

pvalues_H1 <- sapply(1:M, function(i) {

x <- rnorm(n, mean = 0, sd = sqrt(2)) # N(0, 2)

ks.test(x, "pnorm")$p.value

})

# Comparison of p-values

par(mfrow = 1:2)

hist(pvalues_H0, breaks = seq(0, 1, l = 20), probability = TRUE,

main = latex2exp::TeX("$H_0$"), ylim = c(0, 10))

abline(h = 1, col = 2)

hist(pvalues_H1, breaks = seq(0, 1, l = 20), probability = TRUE,

main = latex2exp::TeX("$H_1$"), ylim = c(0, 10))

abline(h = 1, col = 2)

Figure 6.2: Comparison of the distribution of \(p\)-values under \(H_0\) and \(H_1\) for the Kolmogorov–Smirnov test. Observe that the frequency of low \(p\)-values, associated with the rejection of \(H_0,\) increases when \(H_0\) does not hold. Under \(H_0,\) the distribution of the \(p\)-values is uniform.

Exercise 6.3 Modify the parameters of the normal distribution used to sample under \(H_1\) in order to increase and decrease the deviation from \(H_0.\) What do you observe in the resulting distributions of the \(p\)-values?

6.1.1.2 Cramér–von Mises test

Test purpose. Given \(X_1,\ldots,X_n\sim F,\) it tests \(H_0: F=F_0\) vs. \(H_1: F\neq F_0\) consistently against all the alternatives in \(H_1.\)

-

Statistic definition. The test statistic uses a quadratic distance217 between \(F_n\) and \(F_0\):

\[\begin{align} W_n^2:=n\int(F_n(x)-F_0(x))^2\,\mathrm{d}F_0(x).\tag{6.4} \end{align}\]

If \(H_0: F=F_0\) holds, then \(W_n^2\) tends to be small. Hence, rejection happens for large values of \(W_n^2.\)

-

Statistic computation. The computation of \(W_n^2\) can be significantly simplified by expanding the square in the integrand of (6.4) and then applying the change of variables \(u=F_0(x)\):

\[\begin{align} W_n^2=\sum_{i=1}^n\left\{U_{(i)}-\frac{2i-1}{2n}\right\}^2+\frac{1}{12n},\tag{6.5} \end{align}\]

where again \(U_{(j)}\) stands for the \(j\)-th sorted \(U_i=F_0(X_i),\) \(i=1,\ldots,n.\)

-

Distribution under \(H_0.\) If \(H_0\) holds and \(F_0\) is continuous, then \(W_n^2\) has an asymptotic cdf given by218

\[\begin{align} \lim_{n\to\infty}\mathbb{P}[W_n^2\leq x]=&\,1-\frac{1}{\pi}\sum_{j=1}^\infty (-1)^{j-1}W_j(x),\tag{6.6}\\ W_j(x):=&\,\int_{(2j-1)^2\pi^2}^{4j^2\pi^2}\sqrt{\frac{-\sqrt{y}}{\sin\sqrt{y}}}\frac{e^{-\frac{xy}{2}}}{y}\,\mathrm{d}y.\nonumber \end{align}\]

Highlights and caveats. By a reasoning analogous to the one done in the Kolmogorov–Smirnov test, the Cramér–von Mises test can be seen to be distribution-free if \(F_0\) is continuous and the sample has no ties. Otherwise, (6.6) is not the true asymptotic distribution. Although the Kolmogorov–Smirnov test is the most popular ecdf-based test, empirical evidence suggests that the Cramér–von Mises test is often more powerful than the Kolmogorov–Smirnov test for a broad class of alternative hypotheses.219

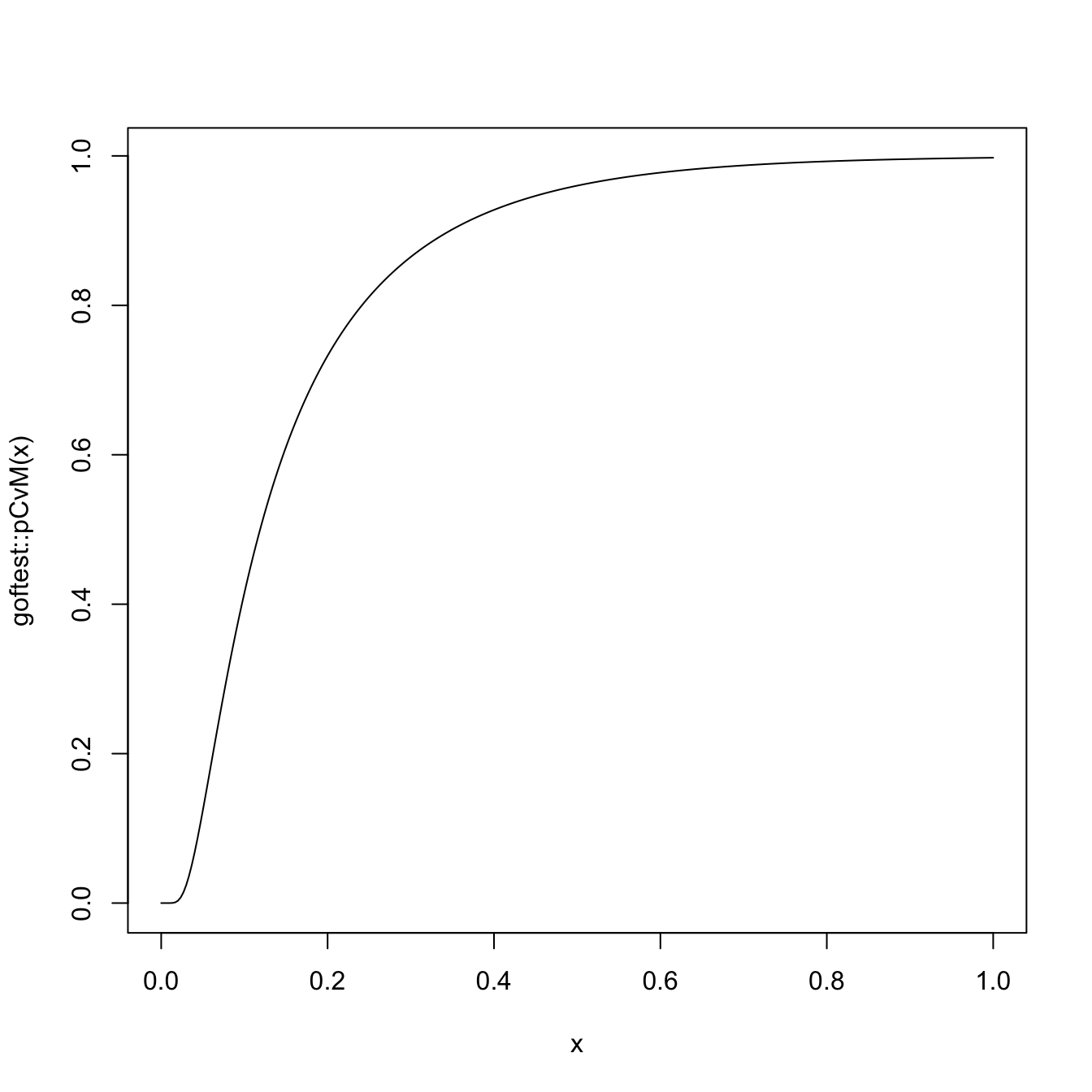

Implementation in R. For continuous data, the test statistic \(W_n^2\) and the asymptotic \(p\)-value are implemented in the

goftest::cvm.test()function. The asymptotic cdf (6.6) is given ingoftest::pCvM()(goftest::qCvM()computes its inverse). For discrete \(F_0,\) seedgof::cvm.test().

The following chunk of code points to the implementation of the Cramér–von Mises test.

# Sample data from a N(0, 1)

set.seed(3245678)

n <- 50

x <- rnorm(n = n)

# Cramér-von Mises test for H_0: F = N(0, 1). Does not reject

goftest::cvm.test(x = x, null = "pnorm")

##

## Cramer-von Mises test of goodness-of-fit

## Null hypothesis: Normal distribution

## Parameters assumed to be fixed

##

## data: x

## omega2 = 0.022294, p-value = 0.9948

# Comparison with Kolmogorov-Smirnov

ks.test(x = x, y = "pnorm")

##

## Exact one-sample Kolmogorov-Smirnov test

##

## data: x

## D = 0.050298, p-value = 0.9989

## alternative hypothesis: two-sided

# Sample data from a Pois(5)

set.seed(3245678)

n <- 100

x <- rpois(n = n, lambda = 5)

# Cramér-von Mises test for H_0: F = Pois(5) without specifying that the

# distribution is discrete. Rejects (!?) without giving a warning message

goftest::cvm.test(x = x, null = "ppois", lambda = 5)

##

## Cramer-von Mises test of goodness-of-fit

## Null hypothesis: Poisson distribution

## with parameter lambda = 5

## Parameters assumed to be fixed

##

## data: x

## omega2 = 0.74735, p-value = 0.009631

# We rely on dgof::cvm.test() and run a Cramér-von Mises test for H_0: F = Pois(5)

# adapted for discrete data, with data coming from a Pois(5)

x_eval <- 0:20

ppois_stepfun <- stepfun(x = x_eval, y = c(0, ppois(q = x_eval, lambda = 5)))

dgof::cvm.test(x = x, y = ppois_stepfun)

##

## Cramer-von Mises - W2

##

## data: x

## W2 = 0.038256, p-value = 0.9082

## alternative hypothesis: Two.sided

# Plot the asymptotic null distribution function

curve(goftest::pCvM(x), from = 0, to = 1, n = 300)

Exercise 6.4 Implement the Cramér–von Mises test by:

- Coding the Cramér–von Mises statistic (6.5) from a sample \(X_1,\ldots,X_n\) and a cdf \(F_0.\) Check that the implementation coincides with the one of the

goftest::cvm.test()function. - Computing the asymptotic \(p\)-value using

goftest::pCvM(). Compare this asymptotic \(p\)-value with the exact \(p\)-value given bygoftest::cvm.test(), observing that for a large \(n\) the difference is inappreciable.

Exercise 6.5 Verify the correctness of the asymptotic null distribution of \(W_n^2\) that was given in (6.6). To do so, numerically implement \(W_j(x)\) and compute (6.6). Validate your implementation by:

- Simulating \(M=1,000\) samples of size \(n=200\) under \(H_0,\) obtaining \(M\) statistics \(W_{n;1}^{2},\ldots, W_{n;M}^{2},\) and plotting their ecdf.

- Overlaying your asymptotic cdf and the one provided by

goftest::pCvM(). - Checking that the three curves approximately coincide.

6.1.1.3 Anderson–Darling test

Test purpose. Given \(X_1,\ldots,X_n\sim F,\) it tests \(H_0: F=F_0\) vs. \(H_1: F\neq F_0\) consistently against all the alternatives in \(H_1.\)

-

Statistic definition. The test statistic uses a quadratic distance between \(F_n\) and \(F_0\) weighted by \(w(x)=(F_0(x)(1-F_0(x)))^{-1}\):

\[\begin{align*} A_n^2:=n\int\frac{(F_n(x)-F_0(x))^2}{F_0(x)(1-F_0(x))}\,\mathrm{d}F_0(x). \end{align*}\]

If \(H_0\) holds, then \(A_n^2\) tends to be small (because of the denominator). Hence, rejection happens for large values of \(A_n^2.\) Note that, compared with \(W_n^2,\) \(A_n^2\) places more weight on the deviations between \(F_n(x)\) and \(F_0(x)\) that happen on the tails, that is, when \(F_0(x)\approx 0\) or \(F_0(x)\approx 1.\)220 \(\!\!^,\)221

-

Statistic computation. The computation of \(A_n^2\) can be significantly simplified:

\[\begin{align} A_n^2=-n-\frac{1}{n}\sum_{i=1}^n&\Big\{(2i-1)\log(U_{(i)})\nonumber\\ &+(2n+1-2i)\log(1-U_{(i)})\Big\}.\tag{6.7} \end{align}\]

-

Distribution under \(H_0.\) If \(H_0\) holds and \(F_0\) is continuous, then the asymptotic cdf of \(A_n^2\) is the cdf of the random variable

\[\begin{align} \sum_{j=1}^\infty\frac{Y_j}{j(j+1)},\quad \text{where } Y_j\sim\chi^2_1,\,j\geq 1,\text{ are iid}.\tag{6.8} \end{align}\]

Unfortunately, the cdf of (6.8) does not admit a simple analytical expression. It can, however, be approximated by Monte Carlo by sampling from the random variable (6.8).

Highlights and caveats. As with the previous tests, the Anderson–Darling test is also distribution-free if \(F_0\) is continuous and there are no ties in the sample. Otherwise, the null asymptotic distribution is different from the one of (6.8). As for the Cramér–von Mises test, there is also empirical evidence indicating that the Anderson–Darling test is more powerful than the Kolmogorov–Smirnov test for a broad class of alternative hypotheses. In addition, due to its construction, the Anderson–Darling test is able to detect better the situations in which \(F_0\) and \(F\) differ on the tails (that is, for extreme data).

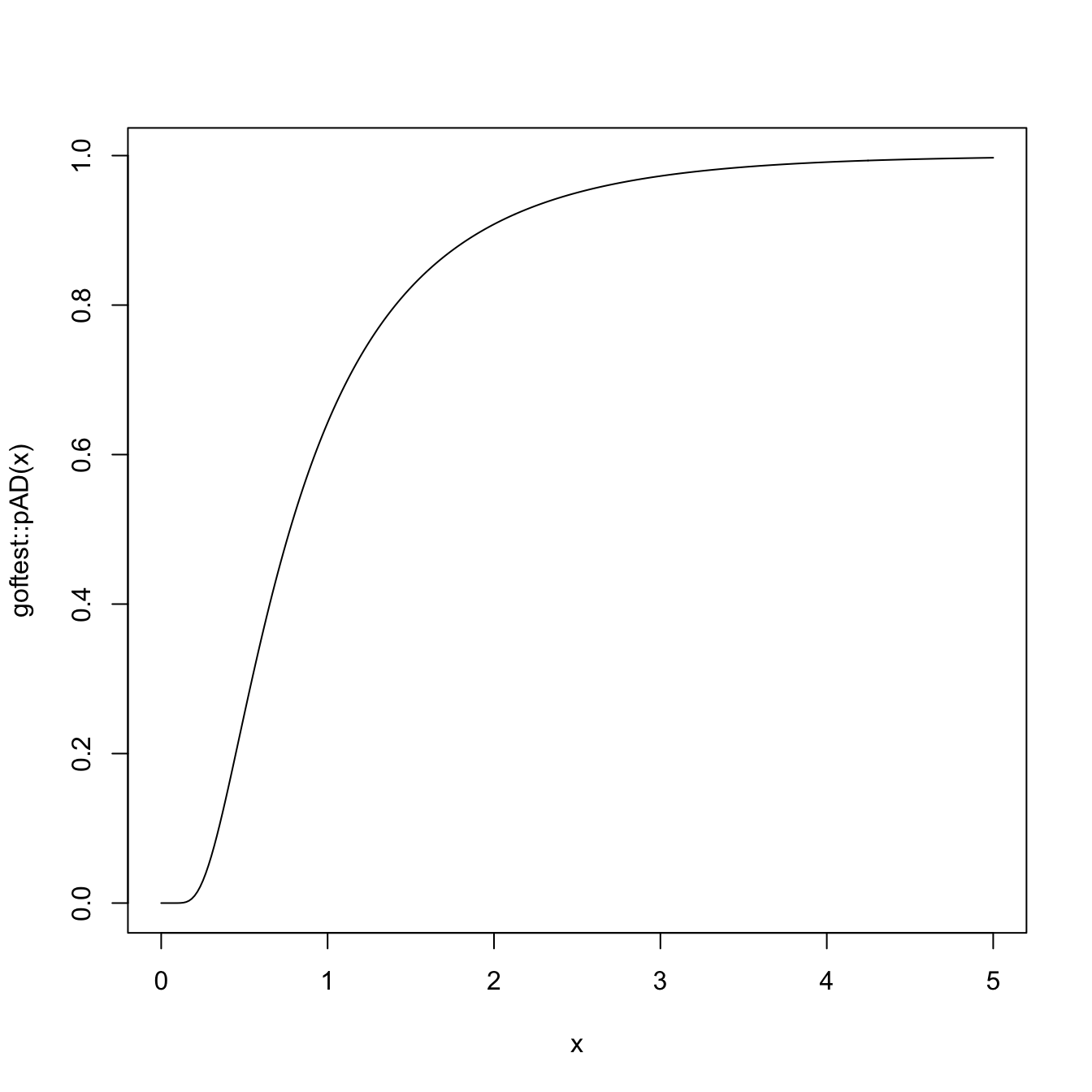

Implementation in R. For continuous data, the test statistic \(A_n^2\) and the asymptotic \(p\)-value are implemented in the

goftest::ad.test()function. The asymptotic cdf of (6.8) is given ingoftest::pAD()(goftest::qAD()computes its inverse). For discrete \(F_0,\) seedgof::cvm.test()withtype = "A2".

The following code chunk illustrates the implementation of the Anderson–Darling test.

# Sample data from a N(0, 1)

set.seed(3245678)

n <- 50

x <- rnorm(n = n)

# Anderson-Darling test for H_0: F = N(0, 1). Does not reject

goftest::ad.test(x = x, null = "pnorm")

##

## Anderson-Darling test of goodness-of-fit

## Null hypothesis: Normal distribution

## Parameters assumed to be fixed

##

## data: x

## An = 0.18502, p-value = 0.994

# Sample data from a Pois(5)

set.seed(3245678)

n <- 100

x <- rpois(n = n, lambda = 5)

# Anderson-Darling test for H_0: F = Pois(5) without specifying that the

# distribution is discrete. Rejects (!?) without giving a warning message

goftest::ad.test(x = x, null = "ppois", lambda = 5)

##

## Anderson-Darling test of goodness-of-fit

## Null hypothesis: Poisson distribution

## with parameter lambda = 5

## Parameters assumed to be fixed

##

## data: x

## An = 3.7279, p-value = 0.01191

# We rely on dgof::cvm.test() and run an Anderson-Darling test for H_0: F = Pois(5)

# adapted for discrete data, with data coming from a Pois(5)

x_eval <- 0:20

ppois_stepfun <- stepfun(x = x_eval, y = c(0, ppois(q = x_eval, lambda = 5)))

dgof::cvm.test(x = x, y = ppois_stepfun, type = "A2")

##

## Cramer-von Mises - A2

##

## data: x

## A2 = 0.3128, p-value = 0.9057

## alternative hypothesis: Two.sided

# Plot the asymptotic null distribution function

curve(goftest::pAD(x), from = 0, to = 5, n = 300)

Exercise 6.6 Implement the Anderson–Darling test by:

- Coding the Anderson–Darling statistic (6.7) from a sample \(X_1,\ldots,X_n\) and a cdf \(F_0.\) Check that the implementation coincides with the one of the

goftest::ad.test()function. - Computing the asymptotic \(p\)-value using

goftest::pAD(). Compare this asymptotic \(p\)-value with the exact \(p\)-value given bygoftest::ad.test(), observing that for large \(n\) the difference is inappreciable.

Exercise 6.7 Verify the correctness of the asymptotic representation of \(A_n^2\) that was given in (6.8). To do so:

- Simulate \(M=1,000\) samples of size \(n=200\) under \(H_0,\) obtain \(M\) statistics \(A_{n;1}^{2},\ldots, A_{n;M}^{2},\) and draw its histogram.

- Simulate \(M\) samples from the random variable (6.8) and draw its histogram.

- Check that the two histograms approximately coincide.

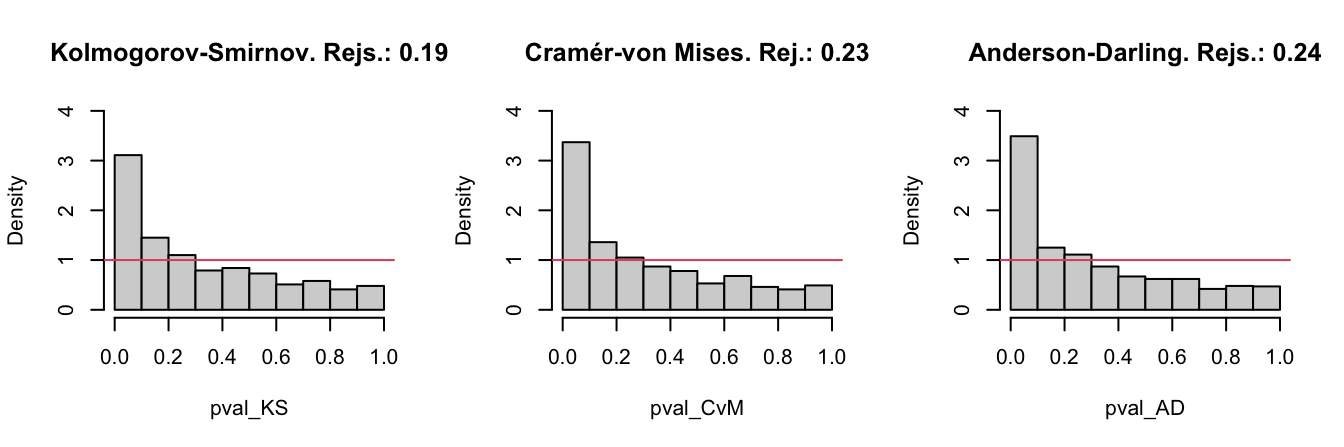

Exercise 6.8 Let’s investigate empirically the performance of the Kolmogorov–Smirnov, Cramér–von Mises, and Anderson–Darling tests for a specific scenario. We consider \(H_0:X\sim \mathcal{N}(0,1)\) and the following simulation study:

- Generate \(M=1,000\) samples of size \(n\) from \(\mathcal{N}(\mu,1).\)

- For each sample, test \(H_0\) with the three tests.

- Obtain the relative frequency of rejections at level \(\alpha\) for each test.

- Draw three histograms for the \(p\)-values in the spirit of Figure 6.3.

In order to cleanly perform the previous steps for several choices of \((n,\mu,\alpha),\) code a function that performs the simulation study from those arguments and gives something similar to Figure 6.3 as output. Then, use the following settings and accurately comment on the outcome of each of them:

-

\(H_0\) holds.

- Take \(n=25,\) \(\mu=0,\) and \(\alpha=0.05.\) Check that the relative frequency of rejections is about \(\alpha.\)

- Take \(n=25,\) \(\mu=0,\) and \(\alpha=0.10.\)

- Take \(n=100,\) \(\mu=0,\) and \(\alpha=0.10.\)

-

\(H_0\) does not hold.

- \(n=25,\) \(\mu=0.25,\) \(\alpha=0.05.\) Check that the relative frequency of rejections is above \(\alpha.\)

- \(n=50,\) \(\mu=0.25,\) \(\alpha=0.05.\)

- \(n=25,\) \(\mu=0.50,\) \(\alpha=0.05.\)

- Replace \(\mathcal{N}(\mu,1)\) with \(t_{10}.\) Take \(n=50\) and \(\alpha=0.05.\) Which test is clearly better? Why?

Figure 6.3: The histograms of the \(p\)-values and the relative rejection frequencies for the Kolmogorov–Smirnov, Cramér–von Mises, and Anderson–Darling tests. The null hypothesis is \(H_0:X\sim \mathcal{N}(0,1)\) and the sample of size \(n=25\) is generated from a \(\mathcal{N}(0.25,1).\) The significance level is \(\alpha=0.05\).

6.1.2 Normality tests

The tests seen in the previous section have a very notable practical limitation: they require complete knowledge of \(F_0,\) the hypothesized distribution for \(X.\) In practice, such a precise knowledge about \(X\) is unrealistic. Practitioners are more interested in answering more general questions, one of them being:

Is the data “normally distributed”?

With the statement “\(X\) is normally distributed” we refer to the fact that \(X\sim \mathcal{N}(\mu,\sigma^2)\) for certain parameters \(\mu\in\mathbb{R}\) and \(\sigma\in\mathbb{R^+},\) possibly unknown. This statement is notably more general than “\(X\) is distributed as a \(\mathcal{N}(0,1)\)” or “\(X\) is distributed as a \(\mathcal{N}(0.1,1.3)\)”.

The test for normality is formalized as follows. Given an iid sample \(X_1,\ldots,X_n\) from the distribution \(F,\) we test the null hypothesis

\[\begin{align} H_0:F\in\mathcal{F}_{\mathcal{N}}:=\left\{\Phi\left(\frac{\cdot-\mu}{\sigma}\right):\mu\in\mathbb{R},\sigma\in\mathbb{R}^+\right\},\tag{6.9} \end{align}\]

where \(\mathcal{F}_{\mathcal{N}}\) is the class of normal distributions, against the most general alternative

\[\begin{align*} H_1:F\not\in\mathcal{F}_{\mathcal{N}}. \end{align*}\]

Comparing (6.9) with (6.1), it is evident that the former is more general, as a range of possible values for \(F\) is included in \(H_0.\) This is the reason why \(H_0\) is called a composite null hypothesis, rather than a simple null hypothesis, as (6.1) was.

The testing of (6.9) requires the estimation of the unknown parameters \((\mu,\sigma).\) Their maximum likelihood estimators are \((\bar{X},S),\) where \(S:=\sqrt{\frac{1}{n}\sum_{i=1}^n(X_i-\bar{X})^2}\) is the sample standard deviation. Therefore, a tempting possibility is to apply the tests seen in Section 6.1.1 to

\[\begin{align*} H_0:F=\Phi\left(\frac{\cdot-\bar{X}}{S}\right). \end{align*}\]

However, as warned at the beginning of Section 6.1, this naive approach would result in seriously conservative tests that would fail to reject \(H_0\) adequately. The intuitive explanation is simple: when estimating \((\mu,\sigma)\) from the sample we are obtaining the closest normal cdf to \(F,\) that is, \(\Phi\left(\frac{\cdot-\bar{X}}{S}\right).\) Therefore, setting \(F_0=\Phi\left(\frac{\cdot-\bar{X}}{S}\right)\) and then running one of the ecdf-based tests on \(H_0:F=F_0\) will ignore this estimation step, which will bias the test decision towards \(H_0\).

Adequately accounting for the estimation of \((\mu,\sigma)\) amounts to study the asymptotic distributions under \(H_0\) of the test statistics that are computed with the data-dependent cdf \(\Phi\left(\frac{\cdot-\bar{X}}{S}\right)\) in the place of \(F_0.\) This is what is precisely done by the tests that adapt the ecdf-based tests seen in Section 6.1.1 by

- replacing \(F_0\) with \(\Phi\left(\frac{\cdot-\bar{X}}{S}\right)\) in the formulation of the test statistics;

- replacing \(U_i\) with \(P_i:=\Phi\left(\frac{X_i-\bar{X}}{\hat{S}}\right),\) \(i=1,\ldots,n,\) in the computational forms of the statistics;222

- obtaining a different null asymptotic distribution that is usually more convoluted and is approximated by tabulation or simulation.

6.1.2.1 Lilliefors test (Kolmogorov–Smirnov test for normality)

Test purpose. Given \(X_1,\ldots,X_n,\) it tests \(H_0: F\in\mathcal{F}_\mathcal{N}\) vs. \(H_1: F\not\in\mathcal{F}_\mathcal{N}\) consistently against all the alternatives in \(H_1.\)

-

Statistic definition and computation. The test statistic uses the supremum distance between \(F_n\) and \(\Phi\left(\frac{\cdot-\bar{X}}{S}\right)\):

\[\begin{align*} D_n=&\,\sqrt{n}\sup_{x\in\mathbb{R}} \left|F_n(x)-\Phi\left(\frac{x-\bar{X}}{S}\right)\right|=\max(D_n^+,D_n^-),\\ D_n^+=&\,\sqrt{n}\max_{1\leq i\leq n}\left\{\frac{i}{n}-P_{(i)}\right\},\\ D_n^-=&\,\sqrt{n}\max_{1\leq i\leq n}\left\{P_{(i)}-\frac{i-1}{n}\right\}, \end{align*}\]

where \(P_{(j)}\) stands for the \(j\)-th sorted \(P_i=\Phi\left(\frac{X_i-\bar{X}}{S}\right),\) \(i=1,\ldots,n.\) Clearly, if \(H_0\) holds, then \(D_n\) tends to be small. Hence, rejection happens when \(D_n\) is large.

Distribution under \(H_0.\) If \(H_0\) holds, the critical values at level of significance \(\alpha\) can be obtained from Lilliefors (1967).223 Dallal and Wilkinson (1986) provides an analytical approximation to the null distribution.

Highlights and caveats. The Lilliefors test is not distribution-free, in the sense that the null distribution of the test statistic clearly depends on the normality assumption. However, it is parameter-free, since the distribution does not depend on the actual parameters \((\mu,\sigma)\) for which \(F=\Phi\left(\frac{\cdot-\mu}{\sigma}\right)\) is satisfied. This result comes from the iid sample \(X_1,\ldots,X_n\stackrel{H_0}{\sim} \mathcal{N}(\mu,\sigma^2)\) generating the id sample \(\frac{X_1-\bar{X}}{S},\ldots,\frac{X_n-\bar{X}}{S}\stackrel{H_0}{\sim} \tilde{f}_0,\) where \(\tilde{f}_0\) is a certain pdf that does not depend on \((\mu,\sigma).\)224 Therefore, \(P_1,\ldots,P_n\) is an id sample that does not depend on \((\mu,\sigma).\)

Implementation in R. The

nortest::lillie.test()function implements the test.

6.1.2.2 Cramér–von Mises normality test

Test purpose. Given \(X_1,\ldots,X_n,\) it tests \(H_0: F\in\mathcal{F}_\mathcal{N}\) vs. \(H_1: F\not\in\mathcal{F}_\mathcal{N}\) consistently against all the alternatives in \(H_1.\)

-

Statistic definition and computation. The test statistic uses the quadratic distance between \(F_n\) and \(\Phi\left(\frac{\cdot-\bar{X}}{S}\right)\):

\[\begin{align*} W_n^2=&\,n\int\left(F_n(x)-\Phi\left(\frac{x-\bar{X}}{S}\right)\right)^2\,\mathrm{d}\Phi\left(\frac{x-\bar{X}}{S}\right)\\ =&\,\sum_{i=1}^n\left\{P_{(i)}-\frac{2i-1}{2n}\right\}^2+\frac{1}{12n}, \end{align*}\]

where \(P_{(j)}\) stands for the \(j\)-th sorted \(P_i=\Phi\left(\frac{X_i-\bar{X}}{S}\right),\) \(i=1,\ldots,n.\) Rejection happens when \(W_n^2\) is large.

Distribution under \(H_0.\) If \(H_0\) holds, the \(p\)-values of the test can be approximated using Table 4.9 in D’Agostino and Stephens (1986).

Highlights and caveats. Like the Lilliefors test, the Cramér–von Mises test is also parameter-free. Its usual power superiority over the Kolmogorov–Smirnov also extends to the testing of normality.

Implementation in R. The

nortest::cvm.test()function implements the test.

6.1.2.3 Anderson–Darling normality test

Test purpose. Given \(X_1,\ldots,X_n,\) it tests \(H_0: F\in\mathcal{F}_\mathcal{N}\) vs. \(H_1: F\not\in\mathcal{F}_\mathcal{N}\) consistently against all the alternatives in \(H_1.\)

-

Statistic definition and computation. The test statistic uses a weighted quadratic distance between \(F_n\) and \(\Phi\left(\frac{\cdot-\bar{X}}{S}\right)\):

\[\begin{align*} A_n^2=&\,n\int\frac{\left(F_n(x)-\Phi\left(\frac{x-\bar{X}}{S}\right)\right)^2}{\Phi\left(\frac{x-\bar{X}}{S}\right)\left(1-\Phi\left(\frac{x-\bar{X}}{S}\right)\right)}\,\mathrm{d}\Phi\left(\frac{x-\bar{X}}{S}\right)\\ =&\,-n-\frac{1}{n}\sum_{i=1}^n\left\{(2i-1)\log(P_{(i)})+(2n+1-2i)\log(1-P_{(i)})\right\}. \end{align*}\]

Rejection happens when \(A_n^2\) is large.

Distribution under \(H_0.\) If \(H_0\) holds, the \(p\)-values of the test can be approximated using Table 4.9 in D’Agostino and Stephens (1986).

Highlights and caveats. The test is also parameter-free. Since the test statistic places more weight on the tails than the Cramér–von Mises, it is better suited for detecting heavy-tailed deviations from normality.

Implementation in R. The

nortest::ad.test()function implements the test.

The following code chunk gives the implementation of these normality tests.

# Sample data from a N(10, 1)

set.seed(981589472)

n <- 200

x <- rnorm(n = n, mean = 10)

# Normality tests -- do not reject H0

nortest::lillie.test(x = x)

##

## Lilliefors (Kolmogorov-Smirnov) normality test

##

## data: x

## D = 0.037754, p-value = 0.6951

nortest::cvm.test(x = x)

##

## Cramer-von Mises normality test

##

## data: x

## W = 0.052993, p-value = 0.4662

nortest::ad.test(x = x)

##

## Anderson-Darling normality test

##

## data: x

## A = 0.38898, p-value = 0.3816

# Sample data from a Student's t with 3 degrees of freedom

x <- rt(n = n, df = 3)

# Normality tests -- reject H0

nortest::lillie.test(x = x)

##

## Lilliefors (Kolmogorov-Smirnov) normality test

##

## data: x

## D = 0.06958, p-value = 0.01979

nortest::cvm.test(x = x)

##

## Cramer-von Mises normality test

##

## data: x

## W = 0.24584, p-value = 0.001432

nortest::ad.test(x = x)

##

## Anderson-Darling normality test

##

## data: x

## A = 1.5929, p-value = 0.000415

# Flawed normality tests -- do not reject because the null distribution

# that is employed is wrong!

ks.test(x = x, y = "pnorm", mean = mean(x), sd = sd(x))

##

## Asymptotic one-sample Kolmogorov-Smirnov test

##

## data: x

## D = 0.06958, p-value = 0.2876

## alternative hypothesis: two-sided

goftest::cvm.test(x = x, null = "pnorm", mean = mean(x), sd = sd(x))

##

## Cramer-von Mises test of goodness-of-fit

## Null hypothesis: Normal distribution

## with parameters mean = 0.0238254313803778, sd = 1.6388504082364

## Parameters assumed to be fixed

##

## data: x

## omega2 = 0.24584, p-value = 0.1938

goftest::ad.test(x = x, null = "pnorm", mean = mean(x), sd = sd(x))

##

## Anderson-Darling test of goodness-of-fit

## Null hypothesis: Normal distribution

## with parameters mean = 0.0238254313803778, sd = 1.6388504082364

## Parameters assumed to be fixed

##

## data: x

## An = 1.5929, p-value = 0.15586.1.2.4 Shapiro–Francia normality test

We now consider a different normality test that is not based on the ecdf, the Shapiro–Francia normality test. This test is a modification of the wildly popular Shapiro–Wilk test, but the Shapiro–Francia test is easier to interpret and explain.225

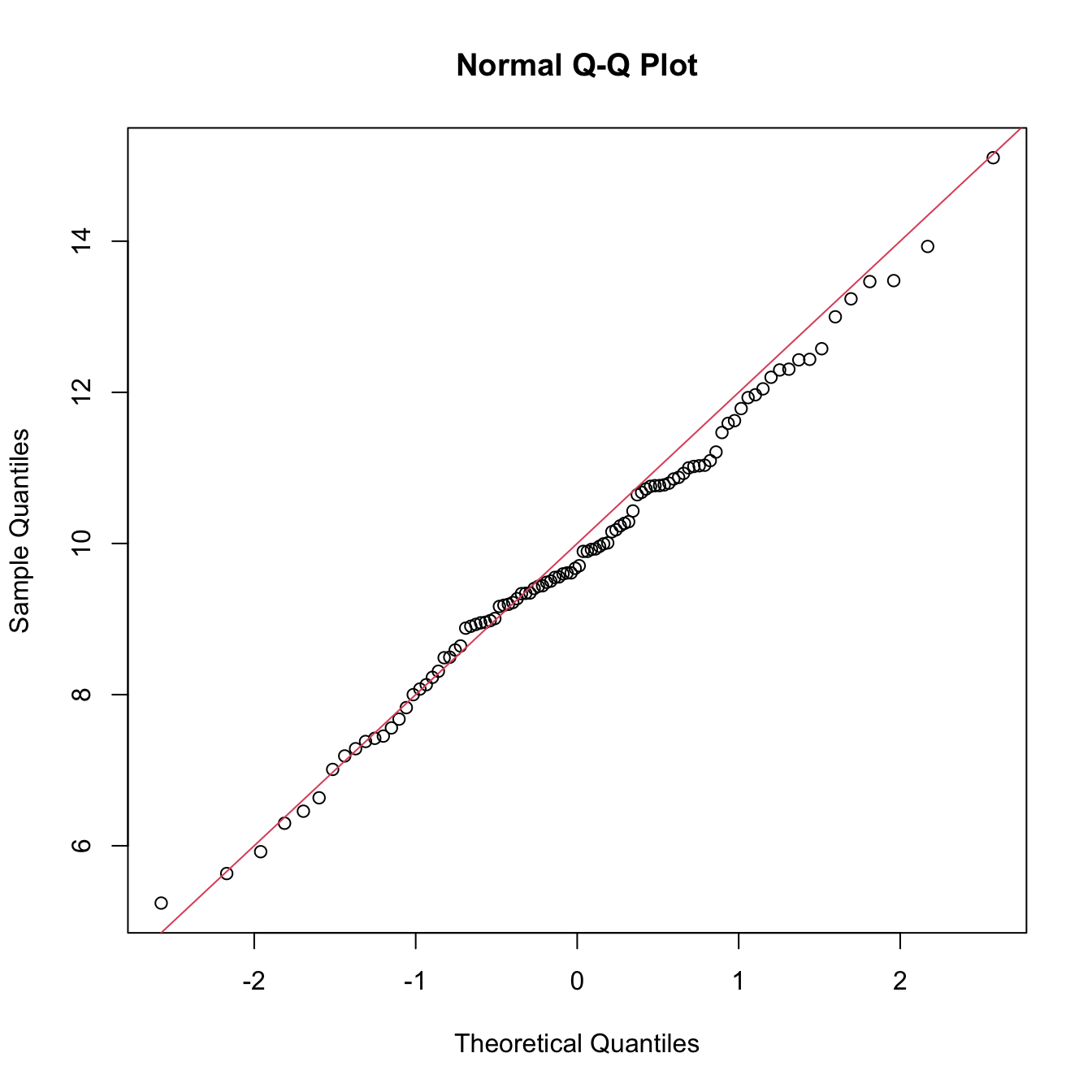

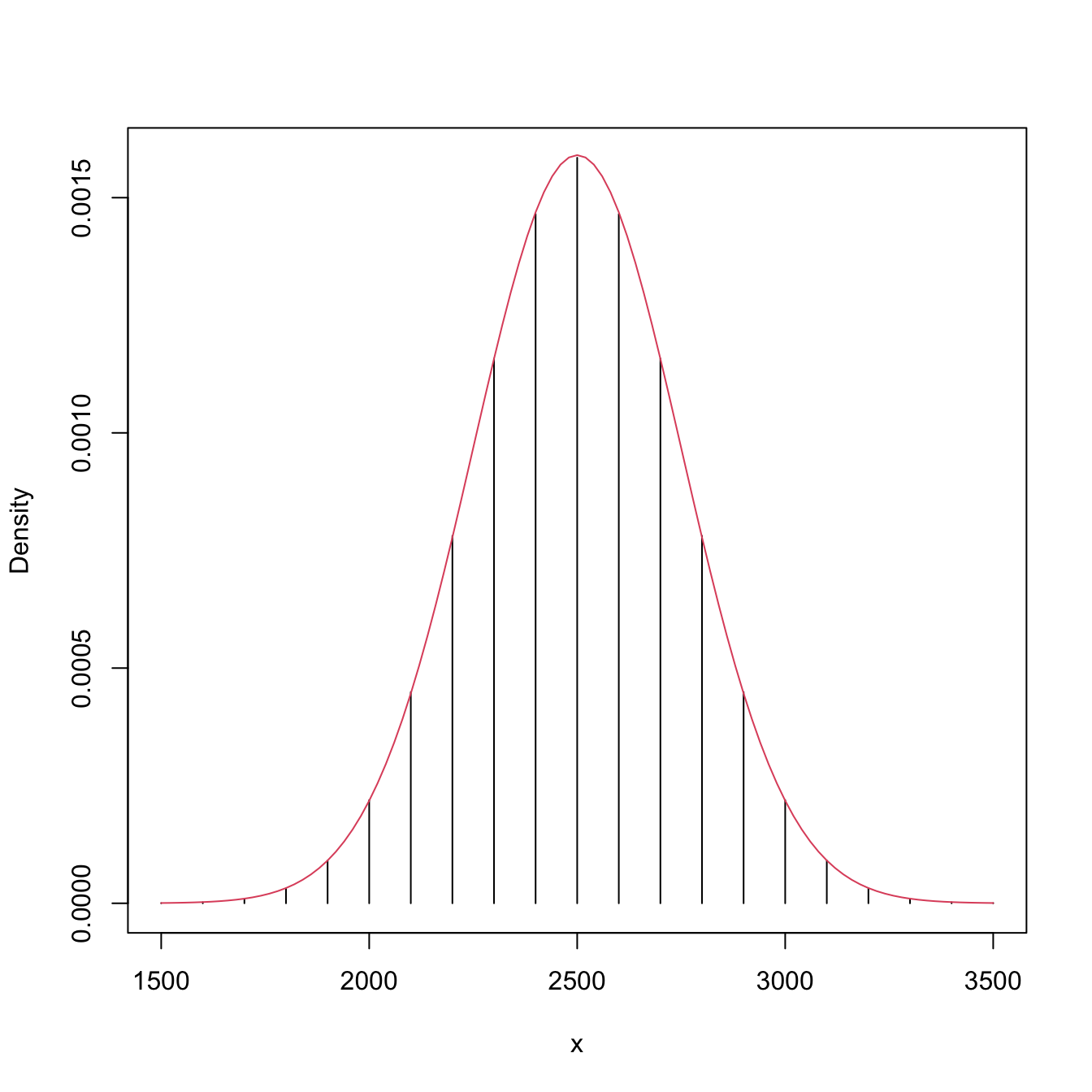

The test is based on the QQ-plot of the sample \(X_1,\ldots,X_n.\) The plot is composed of the pairs \(\left(\Phi^{-1}\left(\frac{i}{n+1}\right),X_{(i)}\right)\,,\) for \(i=1,\ldots,n.\)226 That is, the QQ-plot plots \(\mathcal{N}(0,1)\)-quantiles against sample quantiles.

Since the \(\alpha\)-lower quantile of a \(\mathcal{N}(\mu,\sigma^2),\) \(z_{\alpha;\mu,\sigma},\) verifies that

\[\begin{align} z_{\alpha;\mu,\sigma}=\mu+\sigma z_{\alpha;0,1},\tag{6.10} \end{align}\]

then, if the sample is distributed as a \(\mathcal{N}(\mu,\sigma^2),\) the points of the QQ-plot are expected to align about the straight line (6.10). This is illustrated in the code below.

n <- 100

mu <- 10

sigma <- 2

set.seed(12345678)

x <- rnorm(n, mean = mu, sd = sigma)

qqnorm(x)

abline(a = mu, b = sigma, col = 2)

Figure 6.4: QQ-plot (qqnorm()) for data simulated from a \(\mathcal{N}(\mu,\sigma^2)\) and theoretical line \(y=\mu+\sigma x\) (red).

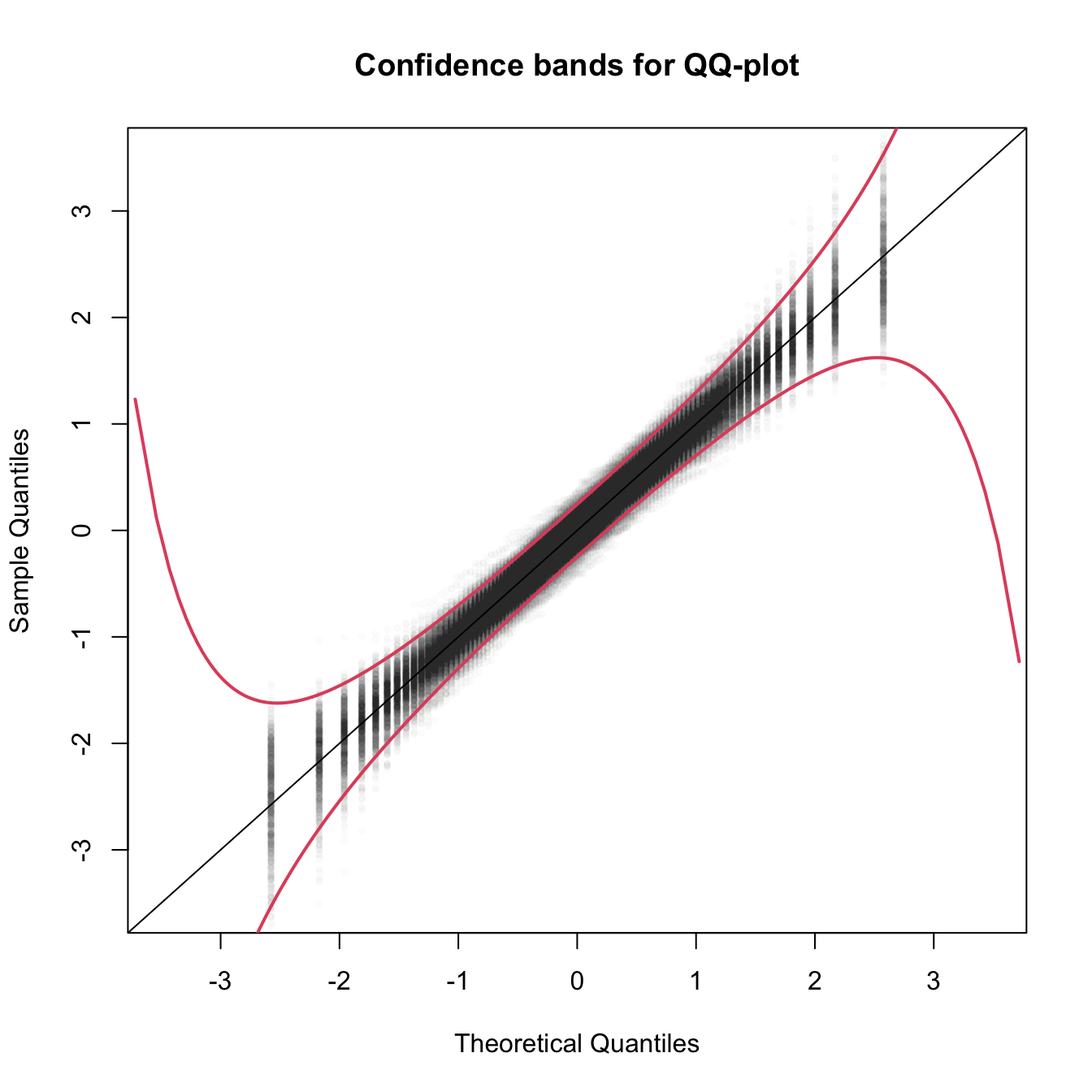

The QQ-plot can therefore be used as a graphical check for normality: if the data is distributed about some straight line, then it likely is normally distributed. However, this graphical check is subjective and, to complicate things further, it is usual for departures from the diagonal to be larger in the extremes than in the center, even under normality, although these departures are more evident if the data is non-normal.227 Figure 6.5, generated with the code below, depicts the uncertainty behind the QQ-plot.

M <- 1e3

n <- 100

plot(0, 0, xlim = c(-3.5, 3.5), ylim = c(-3.5, 3.5), type = "n",

xlab = "Theoretical Quantiles", ylab = "Sample Quantiles",

main = "Confidence bands for QQ-plot")

x <- matrix(rnorm(M * n), nrow = n, ncol = M)

matpoints(qnorm(ppoints(n)), apply(x, 2, sort), pch = 19, cex = 0.5,

col = gray(0, alpha = 0.01))

abline(a = 0, b = 1)

p <- seq(0, 1, l = 1e4)

xi <- qnorm(p)

lines(xi, xi - qnorm(0.975) / sqrt(n) * sqrt(p * (1 - p)) / dnorm(xi),

col = 2, lwd = 2)

lines(xi, xi + qnorm(0.975) / sqrt(n) * sqrt(p * (1 - p)) / dnorm(xi),

col = 2, lwd = 2)

Figure 6.5: The uncertainty behind the QQ-plot. The figure aggregates \(M=1,000\) different QQ-plots of \(\mathcal{N}(0,1)\) data with \(n=100,\) displaying for each of them the pairs \((x_p,\hat x_p)\) evaluated at \(p=\frac{i-1/2}{n},\) \(i=1,\ldots,n\) (as they result from ppoints(n)). The uncertainty is measured by the asymptotic \(100(1-\alpha)\%\) CIs for \(\hat x_p,\) given by \(\left(x_p\pm\frac{z_{\alpha/2}}{\sqrt{n}}\frac{\sqrt{p(1-p)}}{\phi(x_p)}\right).\) These curves are displayed in red for \(\alpha=0.05.\) Observe that the vertical strips arise because the \(x_p\) coordinate is deterministic.

The Shapiro–Francia test evaluates how tightly distributed the QQ plot is about the linear trend that must arise if the data is normally distributed.

Test purpose. Given \(X_1,\ldots,X_n,\) it tests \(H_0: F\in\mathcal{F}_\mathcal{N}\) vs. \(H_1: F\not\in\mathcal{F}_\mathcal{N}\) consistently against all the alternatives in \(H_1.\)

-

Statistic definition and computation. The test statistic, \(W_n',\) is simply the squared correlation coefficient for the sample228

\[\begin{align*} \left(\Phi^{-1}\left(\frac{i - 3/8}{n-1/4}\right),X_{(i)}\right),\quad i=1,\ldots,n. \end{align*}\]

Distribution under \(H_0.\) Royston (1993) derived a simple analytical formula that serves to approximate the null distribution of \(W_n'\) for \(5\leq n\leq 5,000.\)

Highlights and caveats. The test is also parameter-free, since its distribution does not depend on the actual parameters \((\mu,\sigma)\) for which the equality \(H_0\) holds.

Implementation in R. The

nortest::sf.test()function implements the test. The condition \(5\leq n\leq 5,000\) is enforced by the function.229

The use of the function is described below.

# Does not reject H0

set.seed(123456)

n <- 100

x <- rnorm(n = n, mean = 10)

nortest::sf.test(x)

##

## Shapiro-Francia normality test

##

## data: x

## W = 0.99336, p-value = 0.8401

# Rejects H0

x <- rt(n = n, df = 3)

nortest::sf.test(x)

##

## Shapiro-Francia normality test

##

## data: x

## W = 0.91545, p-value = 2.754e-05

# Test statistic

cor(x = sort(x), y = qnorm(ppoints(n, a = 3/8)))^2

## [1] 0.9154466Exercise 6.9 The gamma distribution can be approximated by a normal distribution. Precisely, \(\Gamma(\lambda,n)\approx\mathcal{N}\left(\frac{n}{\lambda},\frac{n}{\lambda^2}\right)\) when \(n\to\infty.\) The following simulation study is designed to evaluate the accuracy of the approximation with \(n\to\infty\) using goodness-of-fit tests. For that purpose, we generate \(X_1,\ldots,X_m\sim\Gamma(\lambda,n)\) and run the following tests:

- The Kolmogorov–Smirnov, Cramér–von Mises, and Anderson–Darling tests, where \(F_0\) stands for the cdf of \(\mathcal{N}\left(\frac{n}{\lambda},\frac{n}{\lambda^2}\right).\)

- The normality adaptations of the previous tests.

Do:

- Code a function that simulates \(X_1,\ldots,X_m\sim\Gamma(\lambda,n)\) and returns the \(p\)-values of the aforementioned tests. The function must take as input \(\lambda,\) \(n,\) and \(m.\) Call this function

simulation(). - Call the

simulation()function \(M=500\) times for \(\lambda=1,\) \(n=20,\) and \(m=100,\) and represent in a \(2\times 3\) plot the histograms of the \(p\)-values of the six tests. Comment on the output. - Repeat the experiment for: (i) \(n=50,200\) with \(m=100;\) and (ii) \(m=50,200\) with \(n=50.\) Explain the interpretations of the effects of \(n\) and \(m\) in the results.

6.1.3 Bootstrap-based approach to goodness-of-fit testing

Each normality test discussed in the previous section is an instance of a goodness-of-fit test for a parametric distribution model. Precisely, the normality tests are goodness-of-fit tests for the normal distribution model. Therefore, they address a specific instance of the test of the null composite hypothesis

\[\begin{align*} H_0:F\in\mathcal{F}_{\Theta}:=\left\{F_\theta:\theta\in\Theta\subset\mathbb{R}^q\right\}, \end{align*}\]

where \(\mathcal{F}_{\Theta}\) denotes a certain parametric family of distributions indexed by the (possibly multidimensional) parameter \(\theta\in\Theta,\) versus the most general alternative

\[\begin{align*} H_1:F\not\in\mathcal{F}_{\Theta}. \end{align*}\]

The normality case demonstrated that the goodness-of-fit tests for the simple hypothesis were not readily applicable, and that the null distributions were affected by the estimation of the unknown parameters \(\theta=(\mu,\sigma).\) In addition, these null distributions tend to be cumbersome, requiring analytical approximations that have to be done on a test-by-test basis in order to obtain computable \(p\)-values.

To make things worse, the derivations that were done to obtain the asymptotic distributions of the normality test are not reusable if \(\mathcal{F}_\Theta\) is different. For example, if we wanted to test exponentiality, that is,

\[\begin{align} H_0:F\in\mathcal{F}_{\mathbb{R}^+}=\left\{F_\theta(x)=(1-e^{-\theta x})1_{\{x>0\}}:\theta\in\mathbb{R}^+\right\},\tag{6.11} \end{align}\]

then new asymptotic distributions with their corresponding new analytical approximations would need to be derived. Clearly, this is not a very practical approach if we are to evaluate the goodness-of-fit of several parametric models. A practical computational-based solution is to rely on bootstrap, specifically, on parametric bootstrap.

Since the main problem is to establish the distribution of the test statistic under \(H_0,\) then a possibility is to approximate this distribution by the distribution of the bootstrapped statistic. Precisely, let \(T_n\) be the statistic computed from the sample

\[\begin{align*} X_1,\ldots,X_n\stackrel{H_0}{\sim}F_\theta \end{align*}\]

and let \(T_n^*\) be its bootstrapped version, that is, the statistic computed from the simulated sample230

\[\begin{align} X^*_1,\ldots,X^*_n \sim F_{\hat\theta}.\tag{6.12} \end{align}\]

Then, if \(H_0\) holds, the distribution of \(T_n\) is approximated231 by the one of \(T_n^*\). In addition, since the sampling in (6.12) is completely under our control, we can approximate arbitrarily well the distribution of \(T_n^*\) by Monte Carlo. For example, the computation of the upper tail probability \(\mathbb{P}^*[T_n^*>x],\) required to obtain \(p\)-values in all the tests we have seen, can be done by

\[\begin{align*} \mathbb{P}^*[T_n^* > x]\approx\frac{1}{B}\sum_{b=1}^B 1_{\{T_n^{*b}> x\}}, \end{align*}\]

where \(T_n^{*1},\ldots,T_n^{*B}\) is a sample from \(T_n^*\) obtained by simulating \(B\) bootstrap samples from (6.12).

The whole approach can be summarized in the following bootstrap-based procedure for performing a goodness-of-fit test for a parametric distribution model:

Estimate \(\theta\) from the sample \(X_1,\ldots,X_n,\) obtaining \(\hat\theta\) (for example, use maximum likelihood).

Compute the statistic \(T_n\) from \(X_1,\ldots,X_n\) and \(\hat\theta.\)

-

Enter the “bootstrap world”. For \(b=1,\ldots,B\):

- Simulate a bootstrap sample \(X_1^{*b},\ldots,X_n^{*b}\) from \(F_{\hat\theta}.\)

- Compute \(\hat\theta^{*b}\) from \(X_1^{*b},\ldots,X_n^{*b}\) exactly in the same form that \(\hat\theta\) was computed from \(X_1,\ldots,X_n.\)

- Compute \(T_n^{*b}\) from \(X_1^{*b},\ldots,X_n^{*b}\) and \(\hat\theta^{*b}.\)

-

Obtain the \(p\)-value approximation

\[\begin{align*} p\text{-value}\approx\frac{1}{B}\sum_{b=1}^B 1_{\{T_n^{*b}> T_n\}} \end{align*}\]

and emit a test decision from it. Modify it accordingly if rejection of \(H_0\) does not happen for large values of \(T_n.\)

The following chunk of code provides a template function for implementing the previous algorithm. The template is initialized with the specifics for testing (6.11), for which \(\hat\theta_{\mathrm{ML}}=1/{\bar X}.\) The function uses the boot::boot() function for carrying out the parametric bootstrap.

# A goodness-of-fit test of the exponential distribution using the

# Cramér-von Mises statistic

cvm_exp_gof <- function(x, B = 5e3, plot_boot = TRUE) {

# Test statistic function (depends on the data only)

Tn <- function(data) {

# Maximum likelihood estimator -- MODIFY DEPENDING ON THE PROBLEM

theta_hat <- 1 / mean(data)

# Test statistic -- MODIFY DEPENDING ON THE PROBLEM

goftest::cvm.test(x = data, null = "pexp", rate = theta_hat)$statistic

}

# Function to simulate bootstrap samples X_1^*, ..., X_n^*. Requires TWO

# arguments, one being the data X_1, ..., X_n and the other containing

# the parameter theta

r_mod <- function(data, theta) {

# Simulate from an exponential. In this case, the function only uses

# the sample size from the data argument to estimate theta -- MODIFY

# DEPENDING ON THE PROBLEM

rexp(n = length(data), rate = 1 / theta)

}

# Estimate of theta -- MODIFY DEPENDING ON THE PROBLEM

theta_hat <- 1 / mean(x)

# Perform bootstrap resampling with the aid of boot::boot()

Tn_star <- boot::boot(data = x, statistic = Tn, sim = "parametric",

ran.gen = r_mod, mle = theta_hat, R = B)

# Test information -- MODIFY DEPENDING ON THE PROBLEM

method <- "Bootstrap-based Cramér-von Mises test for exponentiality"

alternative <- "any alternative to exponentiality"

# p-value: modify if rejection does not happen for large values of the

# test statistic. $t0 is the original statistic and $t has the bootstrapped

# ones.

pvalue <- mean(Tn_star$t > Tn_star$t0)

# Construct an "htest" result

result <- list(statistic = c("stat" = Tn_star$t0), p.value = pvalue,

theta_hat = theta_hat, statistic_boot = drop(Tn_star$t),

B = B, alternative = alternative, method = method,

data.name = deparse(substitute(x)))

class(result) <- "htest"

# Plot the position of the original statistic with respect to the

# bootstrap replicates?

if (plot_boot) {

hist(result$statistic_boot, probability = TRUE,

main = paste("p-value:", result$p.value),

xlab = latex2exp::TeX("$T_n^*$"))

rug(result$statistic_boot)

abline(v = result$statistic, col = 2, lwd = 2)

text(x = result$statistic, y = 1.5 * mean(par()$usr[3:4]),

labels = latex2exp::TeX("$T_n$"), col = 2, pos = 4)

}

# Return "htest"

return(result)

}

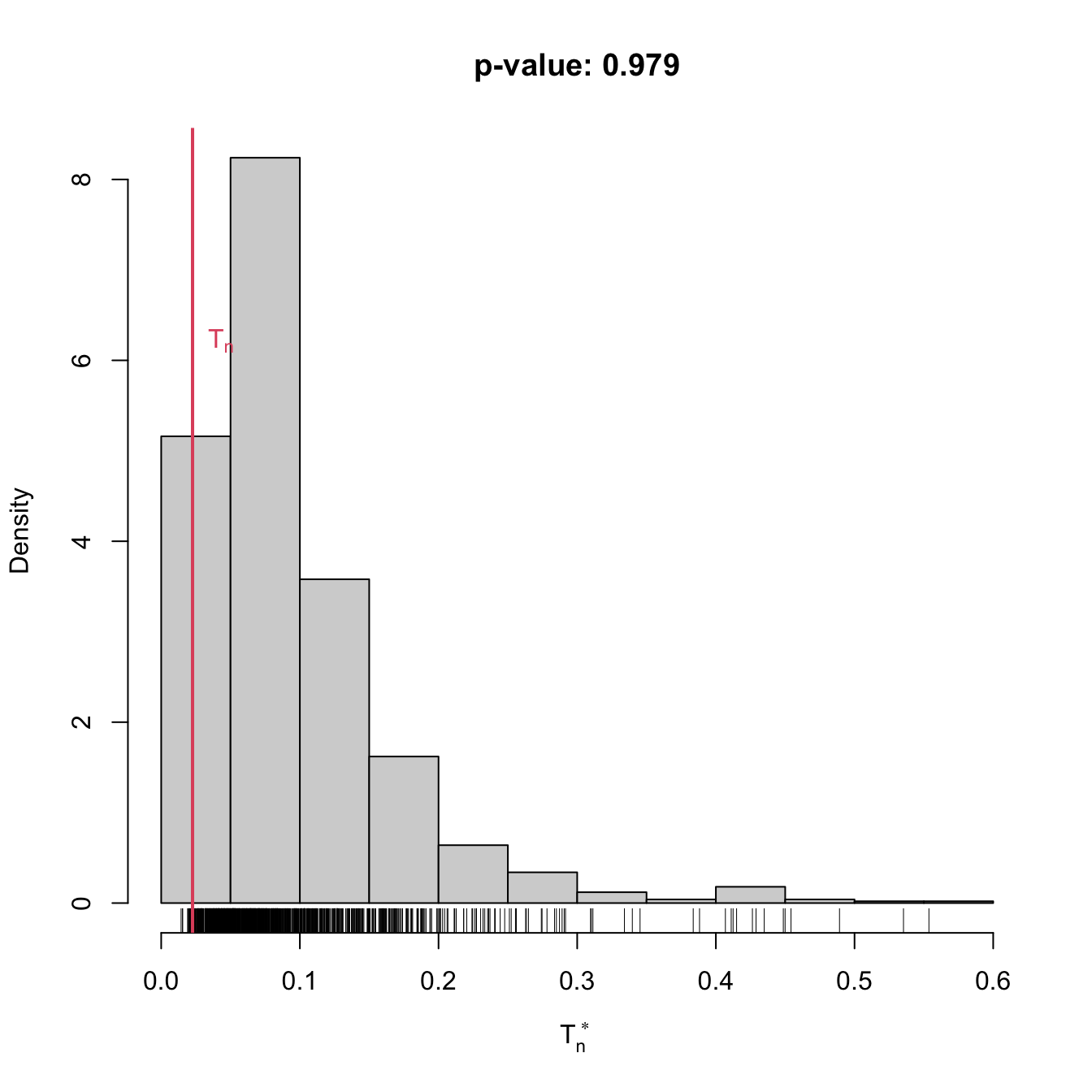

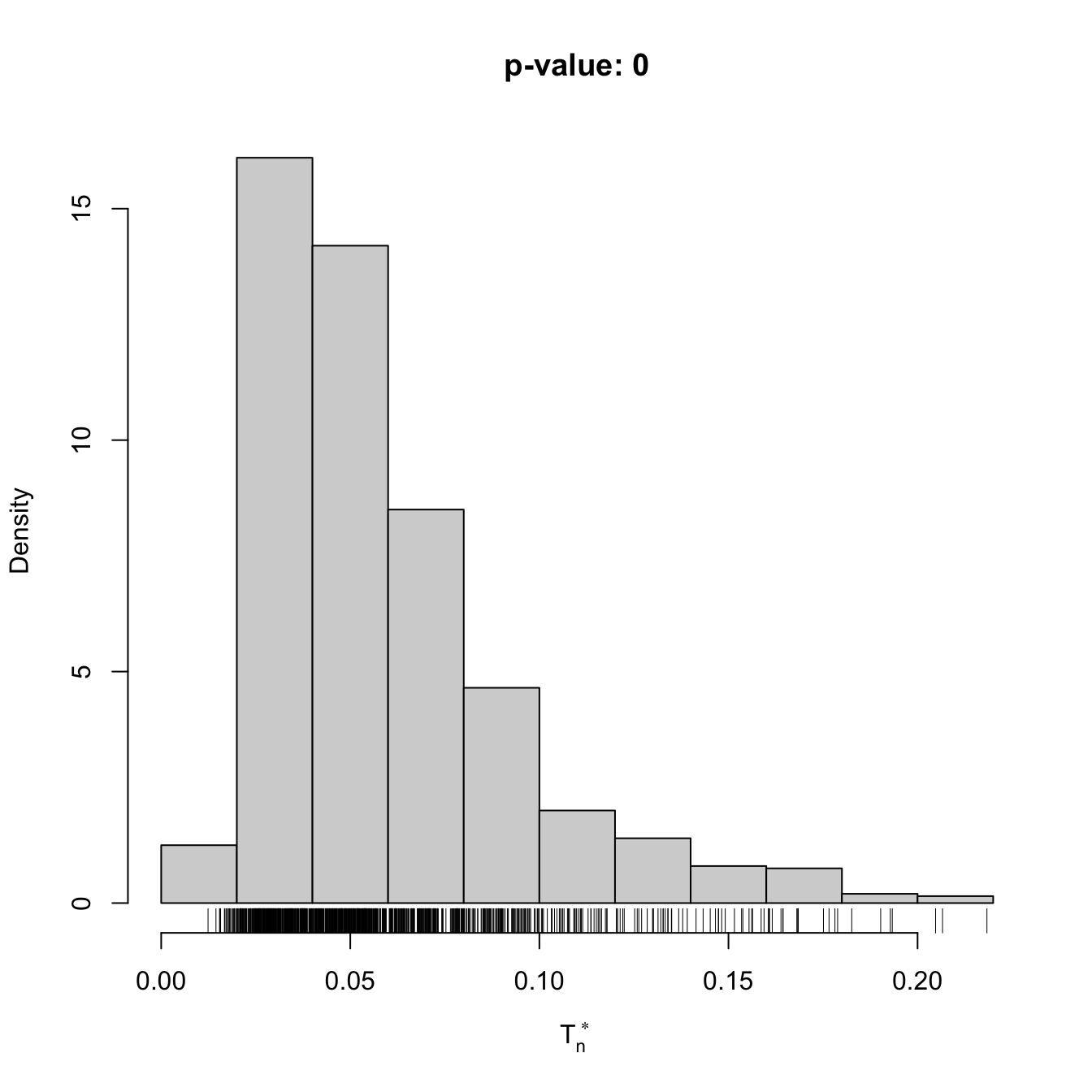

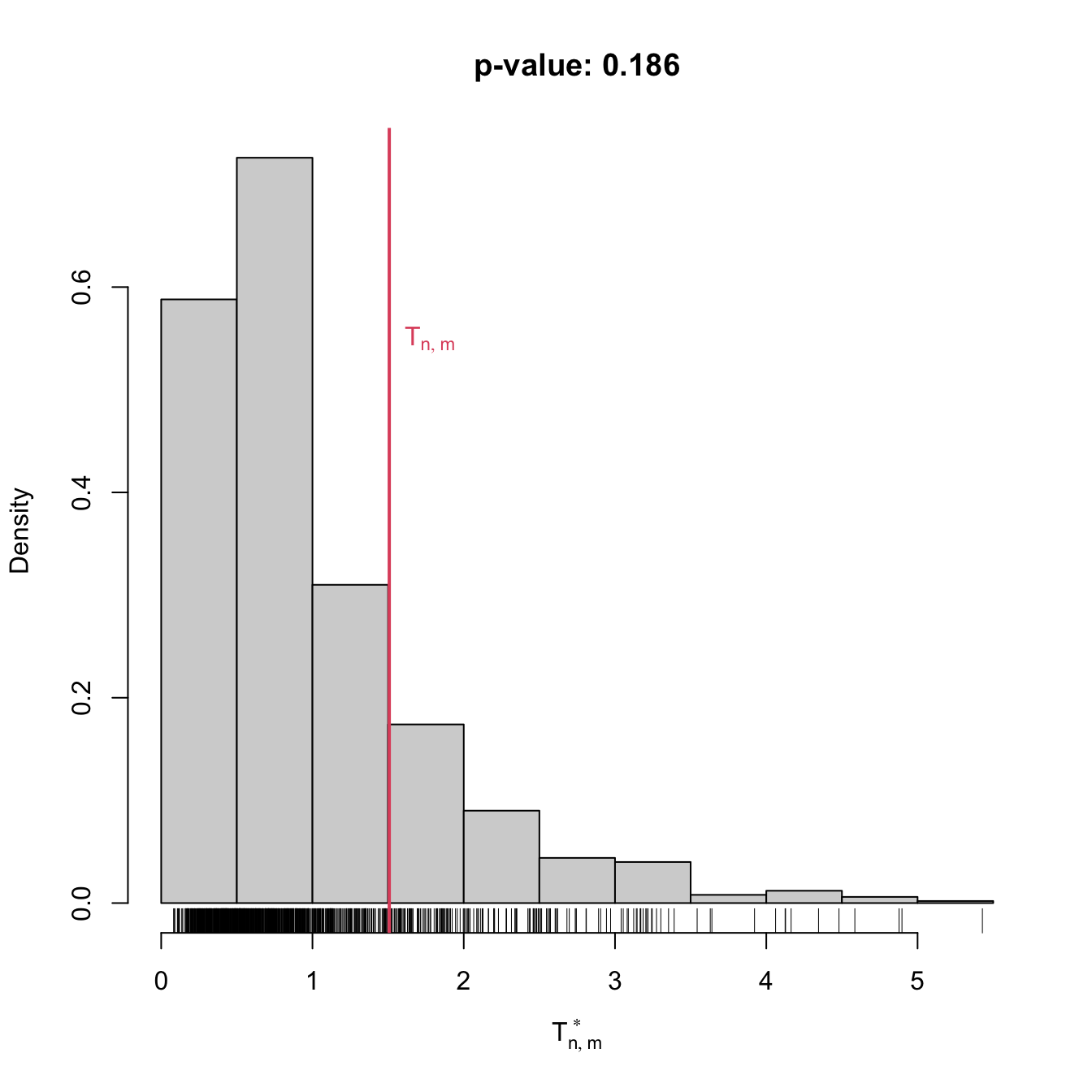

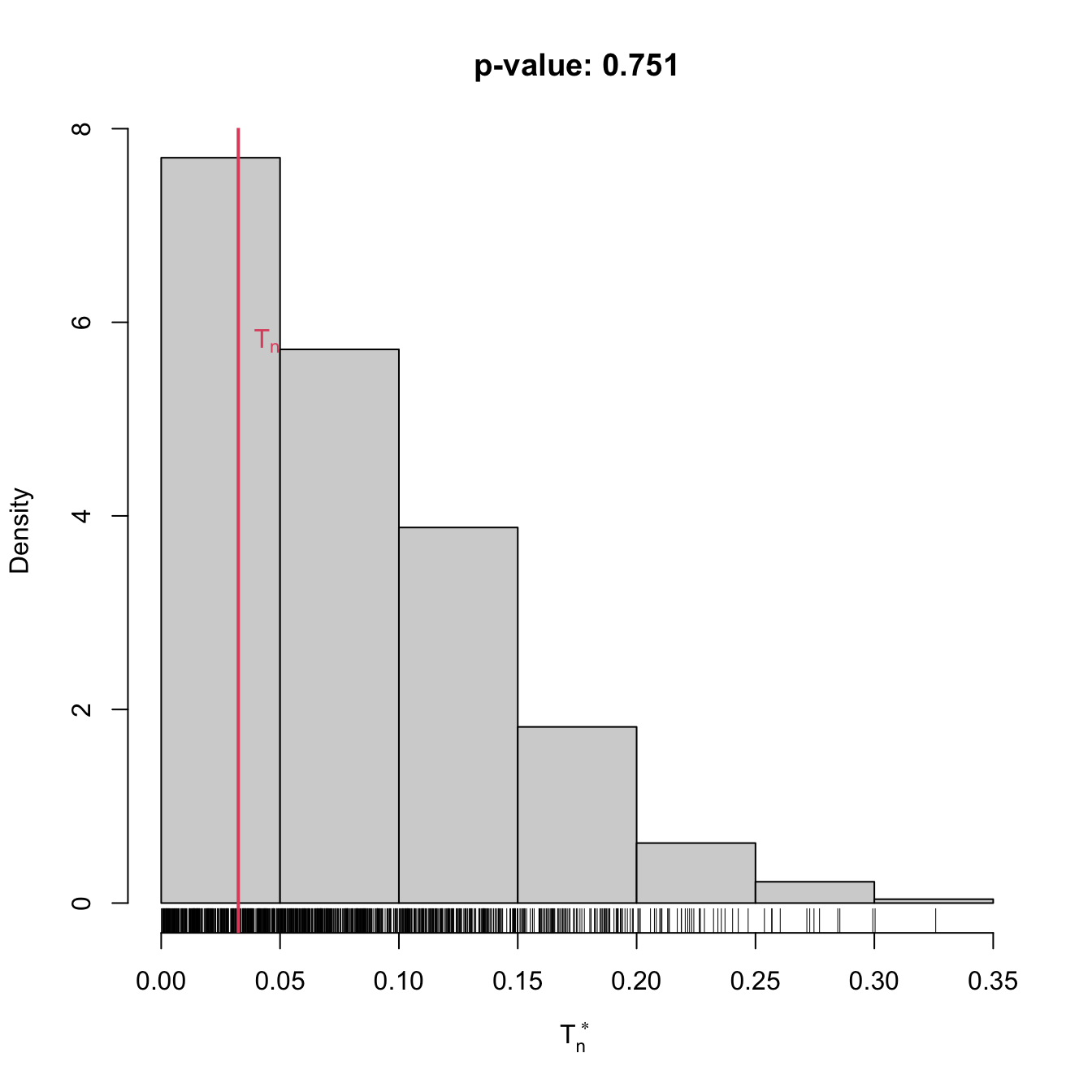

# Check the test for H0 true

set.seed(123456)

x <- rgamma(n = 100, shape = 1, scale = 1)

gof0 <- cvm_exp_gof(x = x, B = 1e3)

gof0

##

## Bootstrap-based Cramér-von Mises test for exponentiality

##

## data: x

## stat.omega2 = 0.022601, p-value = 0.979

## alternative hypothesis: any alternative to exponentiality

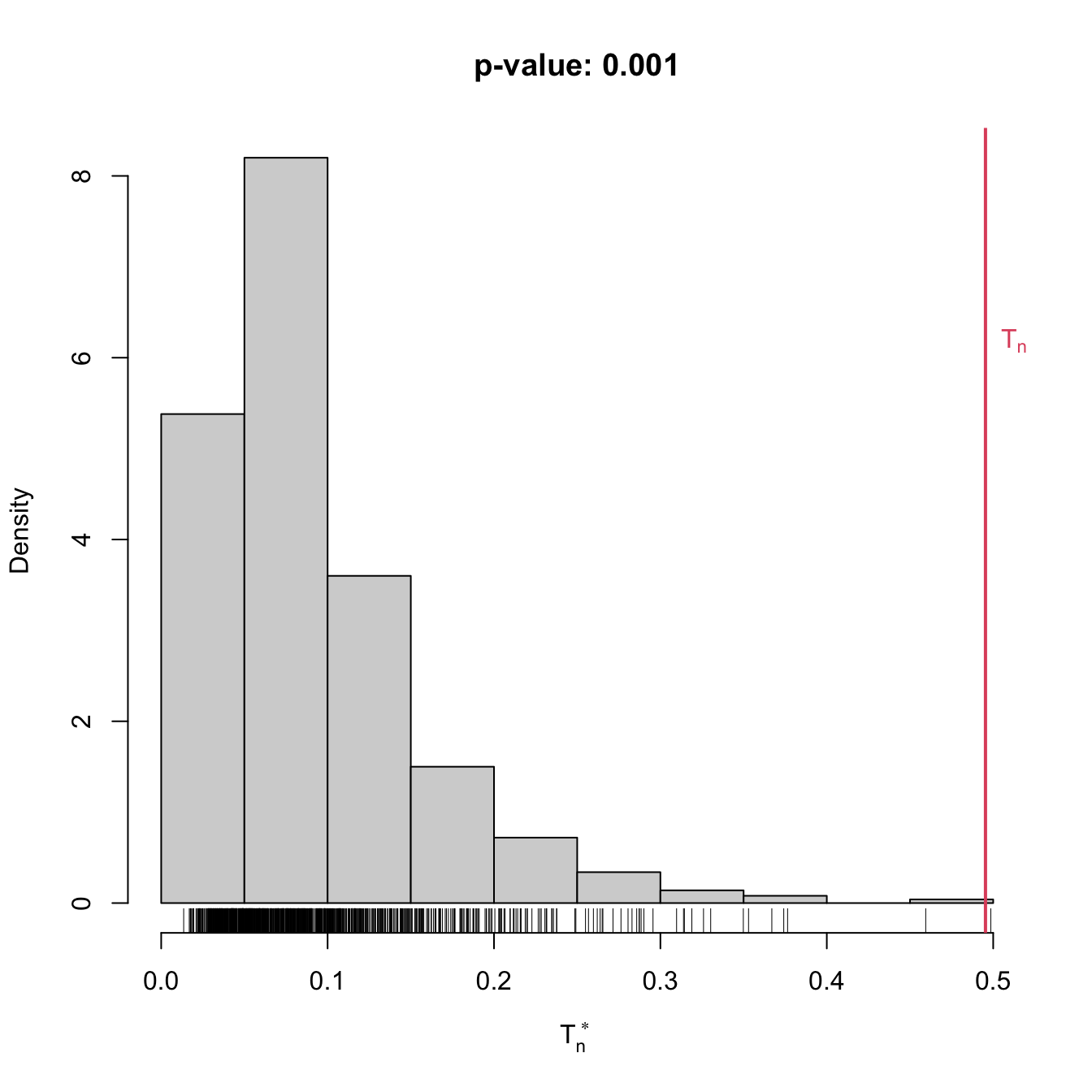

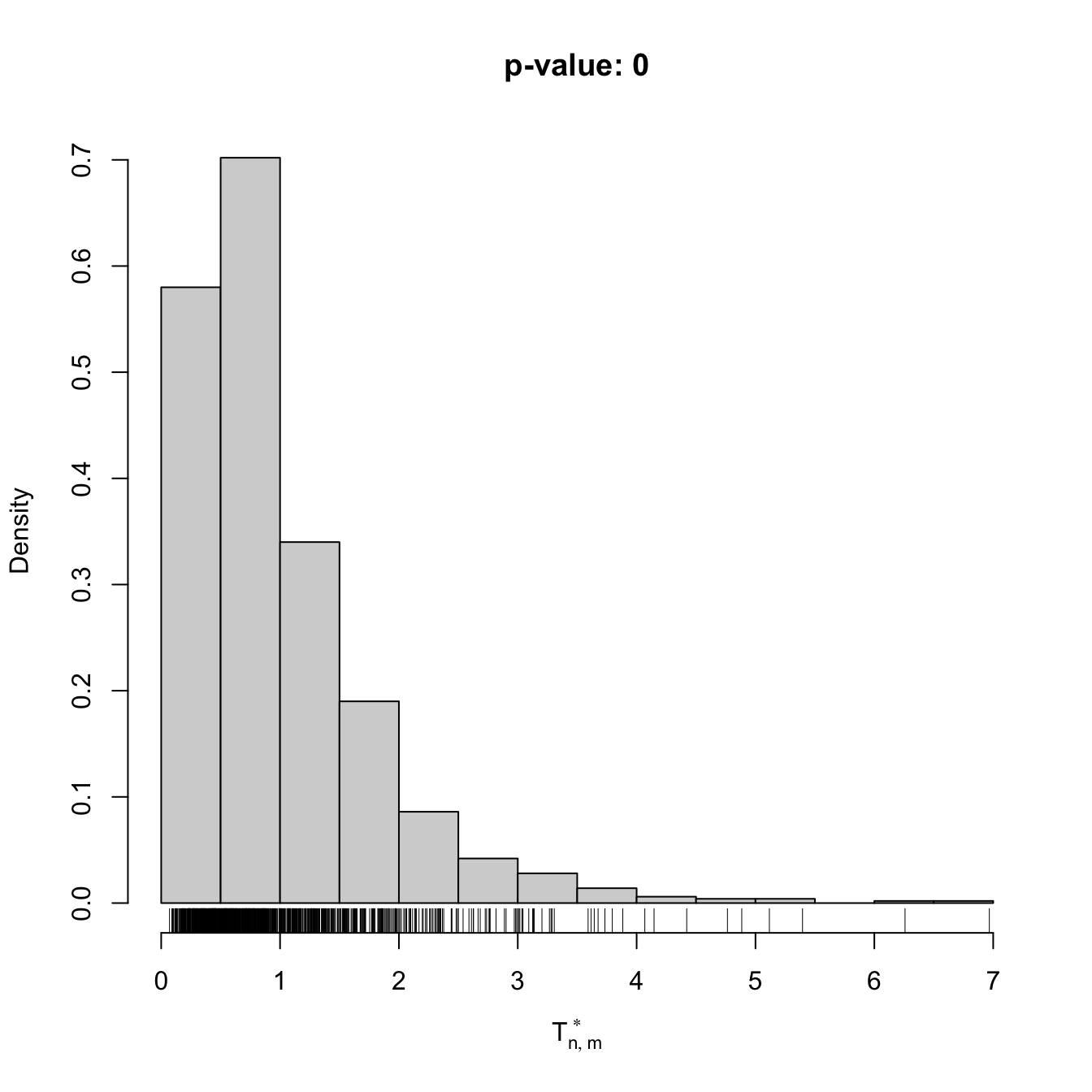

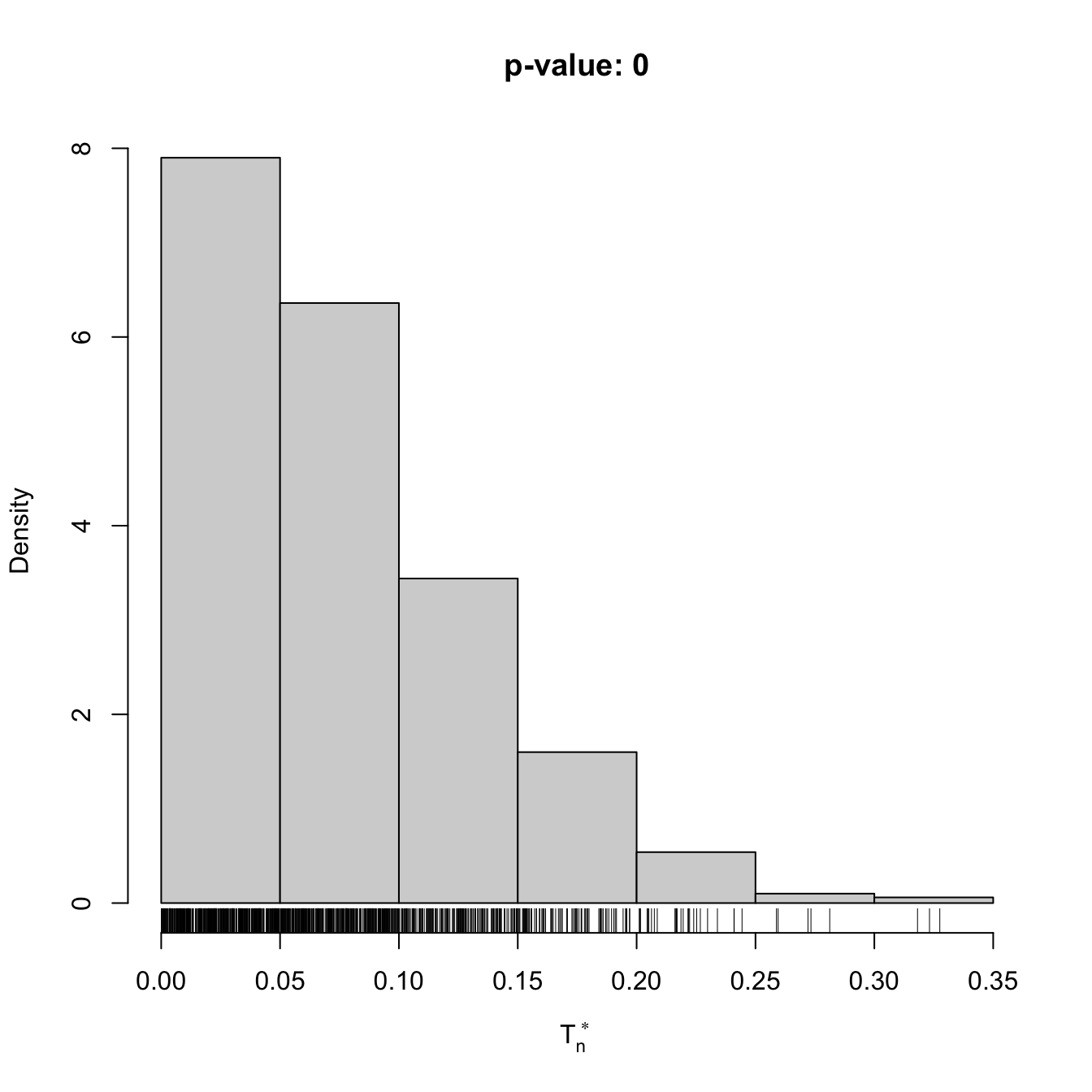

# Check the test for H0 false

x <- rgamma(n = 100, shape = 2, scale = 1)

gof1 <- cvm_exp_gof(x = x, B = 1e3)

gof1

##

## Bootstrap-based Cramér-von Mises test for exponentiality

##

## data: x

## stat.omega2 = 0.49536, p-value = 0.001

## alternative hypothesis: any alternative to exponentialityAnother example is given below. It adapts the previous template for the flexible class of mixtures of normal distributions given in (2.32).

# A goodness-of-fit test of a mixture of m normals using the

# Cramér-von Mises statistic

cvm_nm_gof <- function(x, m, B = 1e3, plot_boot = TRUE) {

# Test statistic function (depends on the data only)

Tn <- function(data) {

# EM algorithm for fitting normal mixtures. With trace = 0 we disable the

# default convergence messages or otherwise they will saturate the screen

# with the bootstrap loop. Be aware that this is a potentially dangerous

# practice, as we may lose important information about the convergence of

# the EM algorithm

theta_hat <- nor1mix::norMixEM(x = data, m = m, trace = 0)

# Test statistic

goftest::cvm.test(x = data, null = nor1mix::pnorMix,

obj = theta_hat)$statistic

}

# Function to simulate bootstrap samples X_1^*, ..., X_n^*. Requires TWO

# arguments, one being the data X_1, ..., X_n and the other containing

# the parameter theta

r_mod <- function(data, theta) {

nor1mix::rnorMix(n = length(data), obj = theta)

}

# Estimate of theta

theta_hat <- nor1mix::norMixEM(x = x, m = m, trace = 0)

# Perform bootstrap resampling with the aid of boot::boot()

Tn_star <- boot::boot(data = x, statistic = Tn, sim = "parametric",

ran.gen = r_mod, mle = theta_hat, R = B)

# Test information

method <- "Bootstrap-based Cramér-von Mises test for normal mixtures"

alternative <- paste("any alternative to a", m, "normal mixture")

# p-value: modify if rejection does not happen for large values of the

# test statistic. $t0 is the original statistic and $t has the bootstrapped

# ones.

pvalue <- mean(Tn_star$t > Tn_star$t0)

# Construct an "htest" result

result <- list(statistic = c("stat" = Tn_star$t0), p.value = pvalue,

theta_hat = theta_hat, statistic_boot = drop(Tn_star$t),

B = B, alternative = alternative, method = method,

data.name = deparse(substitute(x)))

class(result) <- "htest"

# Plot the position of the original statistic with respect to the

# bootstrap replicates?

if (plot_boot) {

hist(result$statistic_boot, probability = TRUE,

main = paste("p-value:", result$p.value),

xlab = latex2exp::TeX("$T_n^*$"))

rug(result$statistic_boot)

abline(v = result$statistic, col = 2, lwd = 2)

text(x = result$statistic, y = 1.5 * mean(par()$usr[3:4]),

labels = latex2exp::TeX("$T_n$"), col = 2, pos = 4)

}

# Return "htest"

return(result)

}

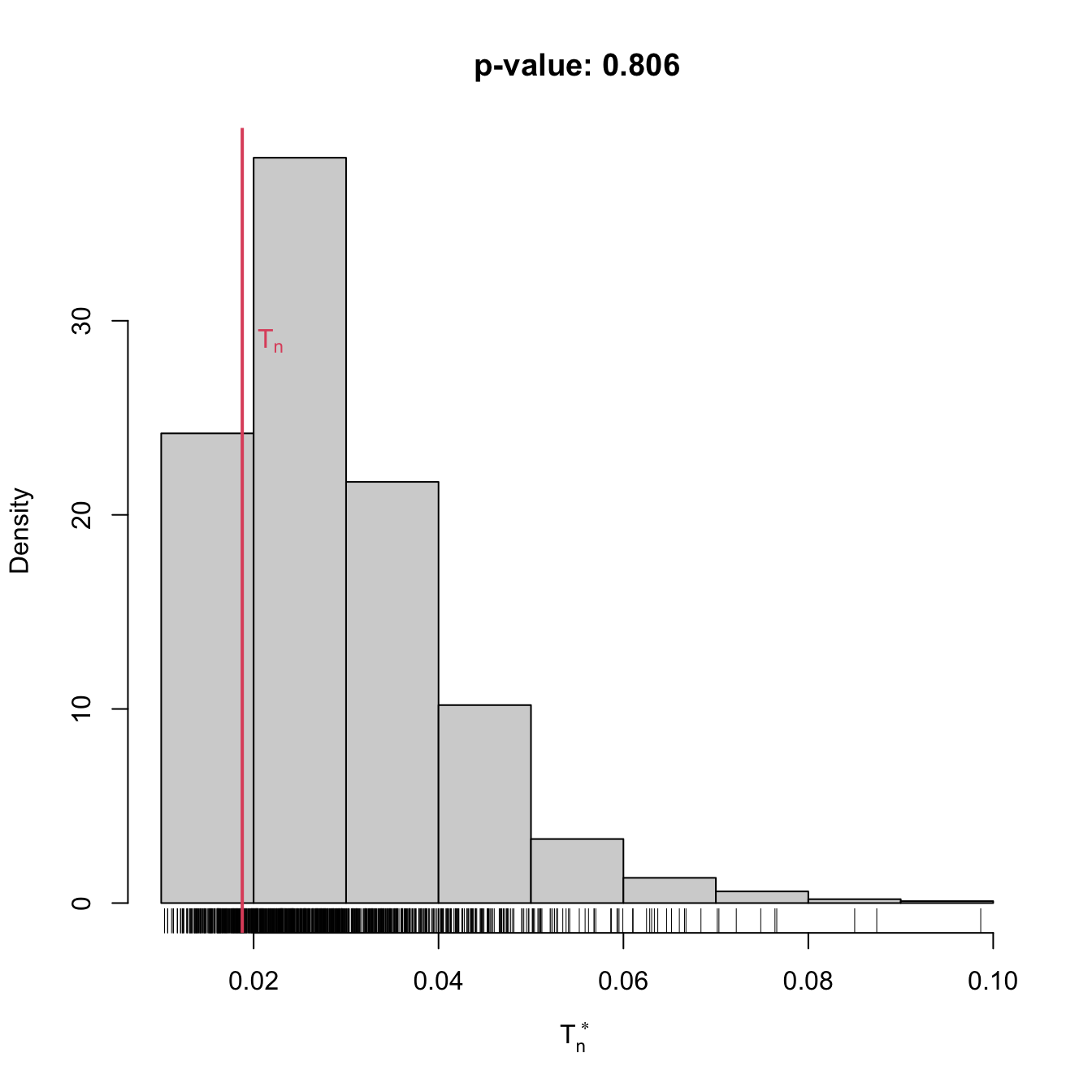

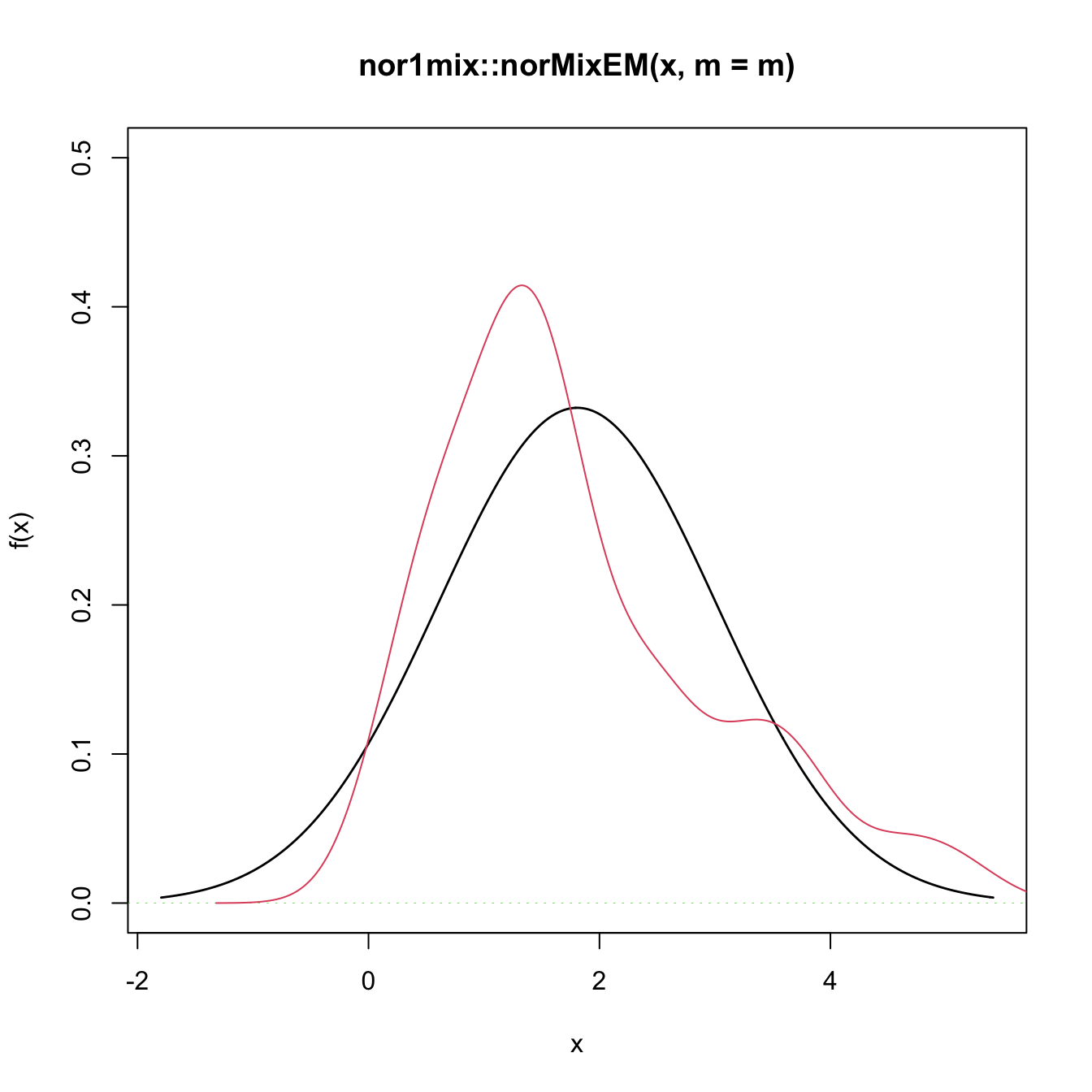

# Check the test for H0 true

set.seed(123456)

x <- c(rnorm(n = 100, mean = 2, sd = 0.5), rnorm(n = 100, mean = -2))

gof0 <- cvm_nm_gof(x = x, m = 2, B = 1e3)

gof0

##

## Bootstrap-based Cramér-von Mises test for normal mixtures

##

## data: x

## stat.omega2 = 0.018769, p-value = 0.806

## alternative hypothesis: any alternative to a 2 normal mixture

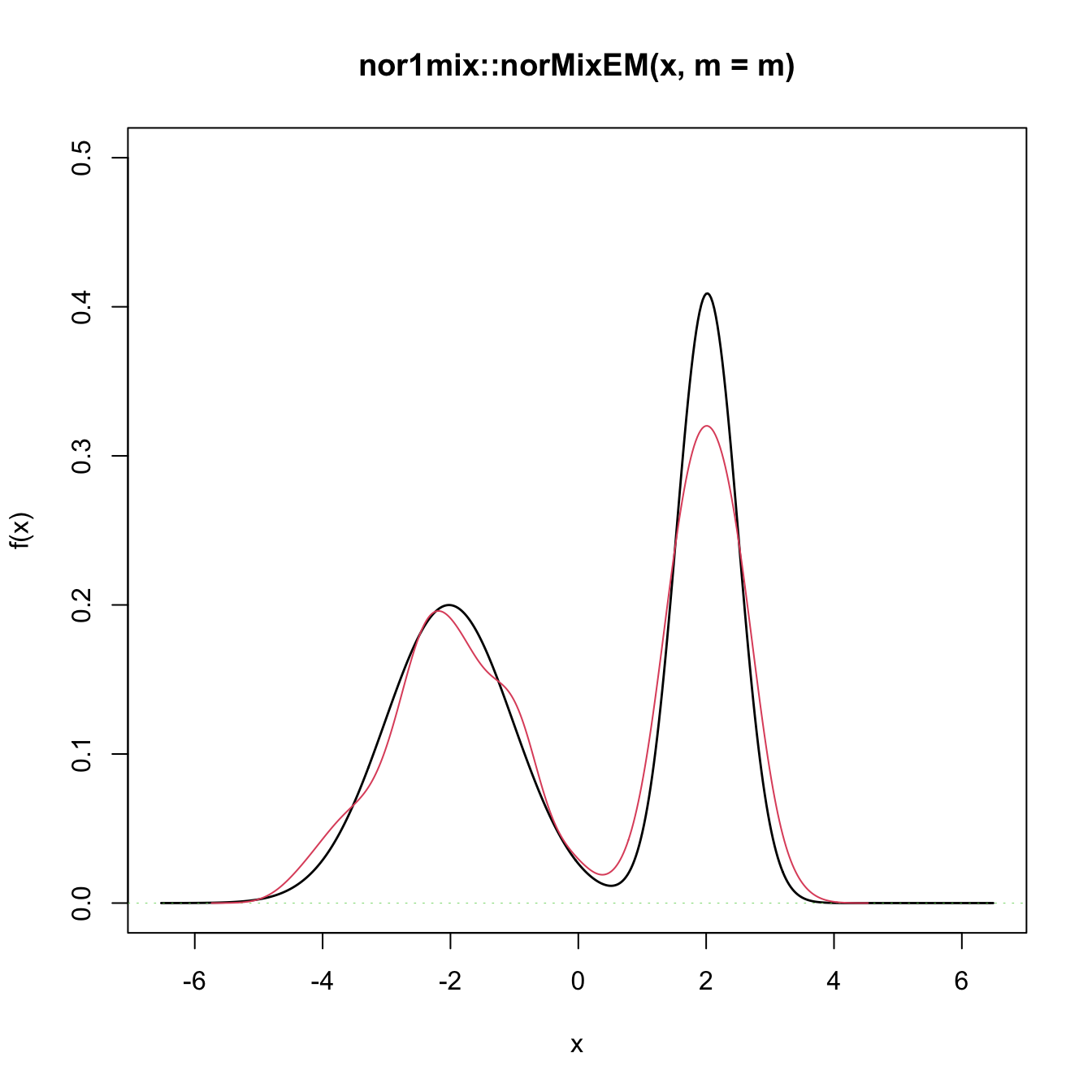

# Graphical assessment that H0 (parametric fit) and data (kde) match

plot(gof0$theta_hat, p.norm = FALSE, ylim = c(0, 0.5))

plot(ks::kde(x), col = 2, add = TRUE)

# Check the test for H0 false

x <- rgamma(n = 100, shape = 2, scale = 1)

gof1 <- cvm_nm_gof(x = x, m = 1, B = 1e3)

gof1

##

## Bootstrap-based Cramér-von Mises test for normal mixtures

##

## data: x

## stat.omega2 = 0.44954, p-value < 2.2e-16

## alternative hypothesis: any alternative to a 1 normal mixture

# Graphical assessment that H0 (parametric fit) and data (kde) do not match

plot(gof1$theta_hat, p.norm = FALSE, ylim = c(0, 0.5))

plot(ks::kde(x), col = 2, add = TRUE)

Exercise 6.10 Change in the previous example the number of mixture components to \(m=3\) and \(m=10,\) using the same sample as in the example. Do you reject the null hypothesis? Why? What about \(m=1\)? Try with new simulated datasets.

Exercise 6.11 Adapt the previous template to perform the following tasks:

- Test the goodness-of-fit of the Weibull distribution using the Anderson–Darling statistic. Check with a small simulation study that the test is correctly implemented (such that the significance level \(\alpha\) is respected if \(H_0\) holds).

- Test the goodness-of-fit of the lognormal distribution using the Anderson–Darling statistic. Check with a small simulation study that the test is correctly implemented.

- Apply the previous two tests to inspect if

av_gray_onefrom Exercise 2.25 and if temps-other.txt are distributed according to a Weibull or lognormal. Explain the results.

6.2 Comparison of two distributions

Assume that two iid samples \(X_1,\ldots,X_n\) and \(Y_1,\ldots,Y_m\) arbitrary distributions \(F\) and \(G\) are given. We next address the two-sample problem of comparing the unknown distributions \(F\) and \(G.\)

6.2.1 Homogeneity tests

The comparison of \(F\) and \(G\) can be done by testing their equality, which is known as the testing for homogeneity of the samples \(X_1,\ldots,X_n\) and \(Y_1,\ldots,Y_m.\) We can therefore address the two-sided hypothesis test

\[\begin{align} H_0:F=G\quad\text{vs.}\quad H_1:F\neq G.\tag{6.13} \end{align}\]

Recall that, differently from the simple and composite hypothesis that appears in goodness-of-fit problems, in the homogeneity problem the null hypothesis \(H_0:F=G\) does not specify any form for the distributions \(F\) and \(G,\) only their equality.

The one-sided hypothesis in which \(H_1:F<G\) (or \(H_1:F>G\)) is also very relevant. Here and henceforth “\(F<G\)” has a special meaning:

“\(F<G\)”: there exists at least one \(x\in\mathbb{R}\) such that \(F(x)<G(x).\)232

Obviously, the alternative \(H_1:F<G\) is implied if, for all \(x\in\mathbb{R},\)

\[\begin{align} F(x)<G(x)&\iff \mathbb{P}[X\leq x]<\mathbb{P}[Y\leq x]\nonumber\\ &\iff \mathbb{P}[X>x]>\mathbb{P}[Y>x].\tag{6.14} \end{align}\]

Consequently, (6.14) means that \(X\) produces observations above any fixed threshold \(x\in\mathbb{R}\) more likely than \(Y\). This concept is known as (strict)233 stochastic dominance and, precisely, it is said that \(X\) is stochastically greater than \(Y\) if (6.14) holds.234

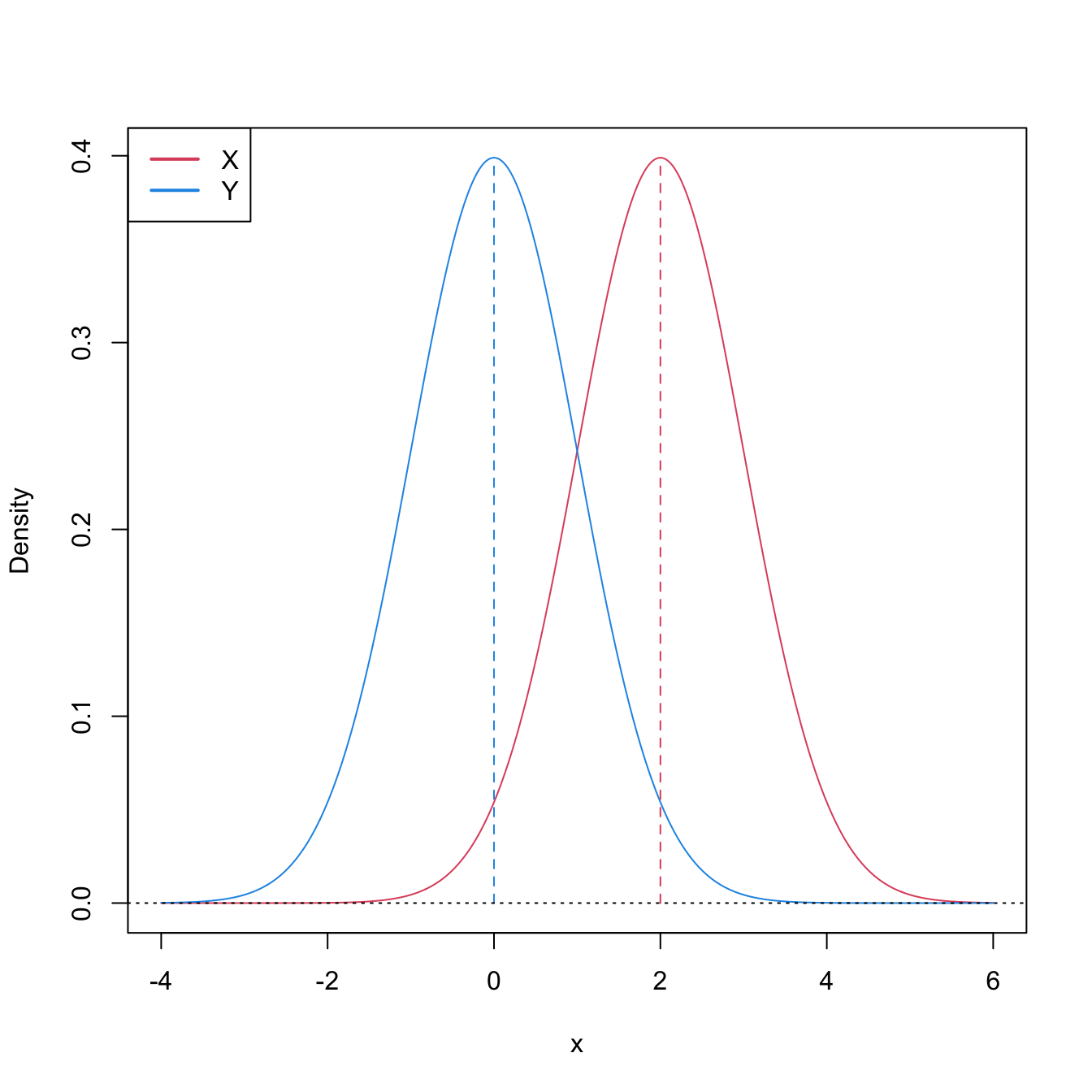

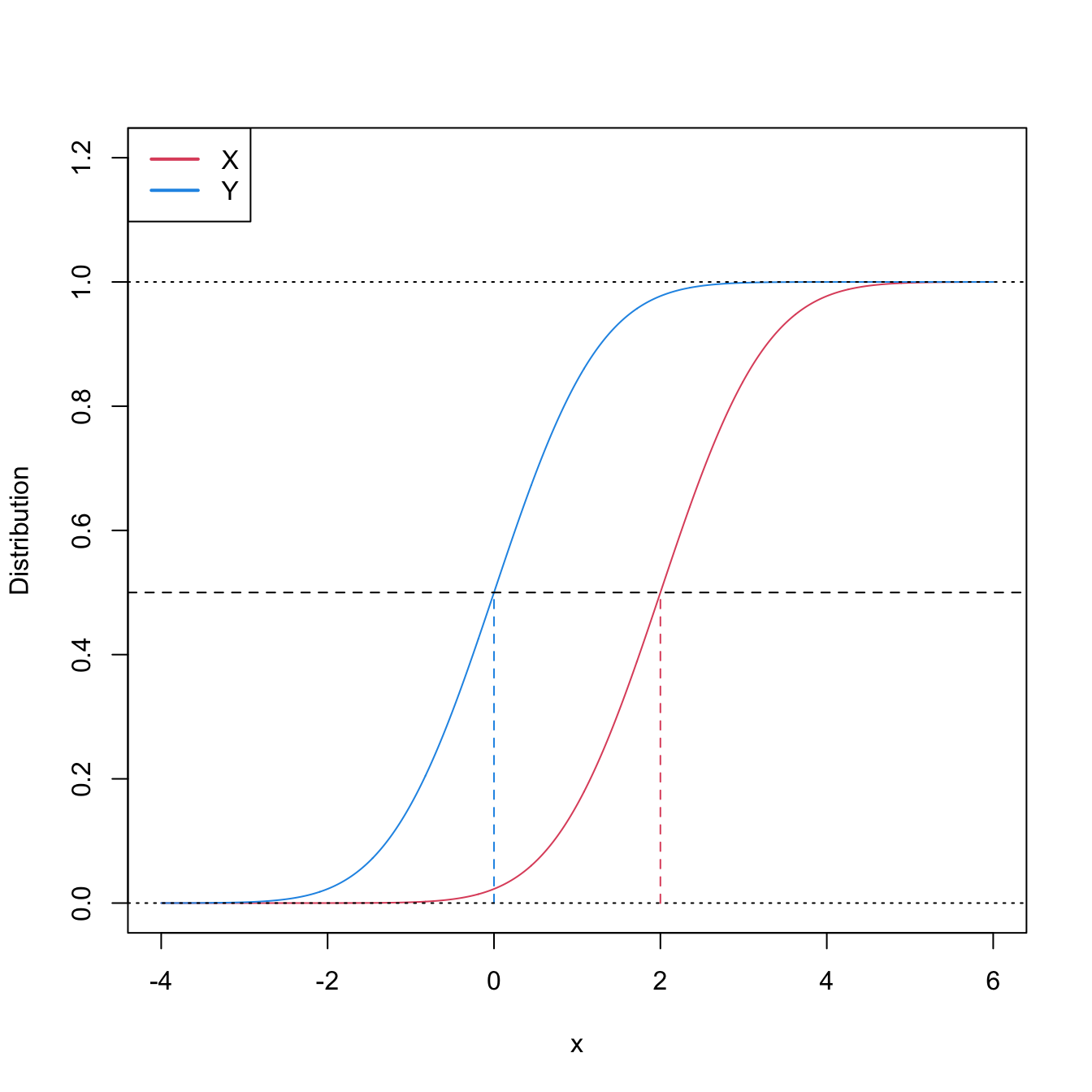

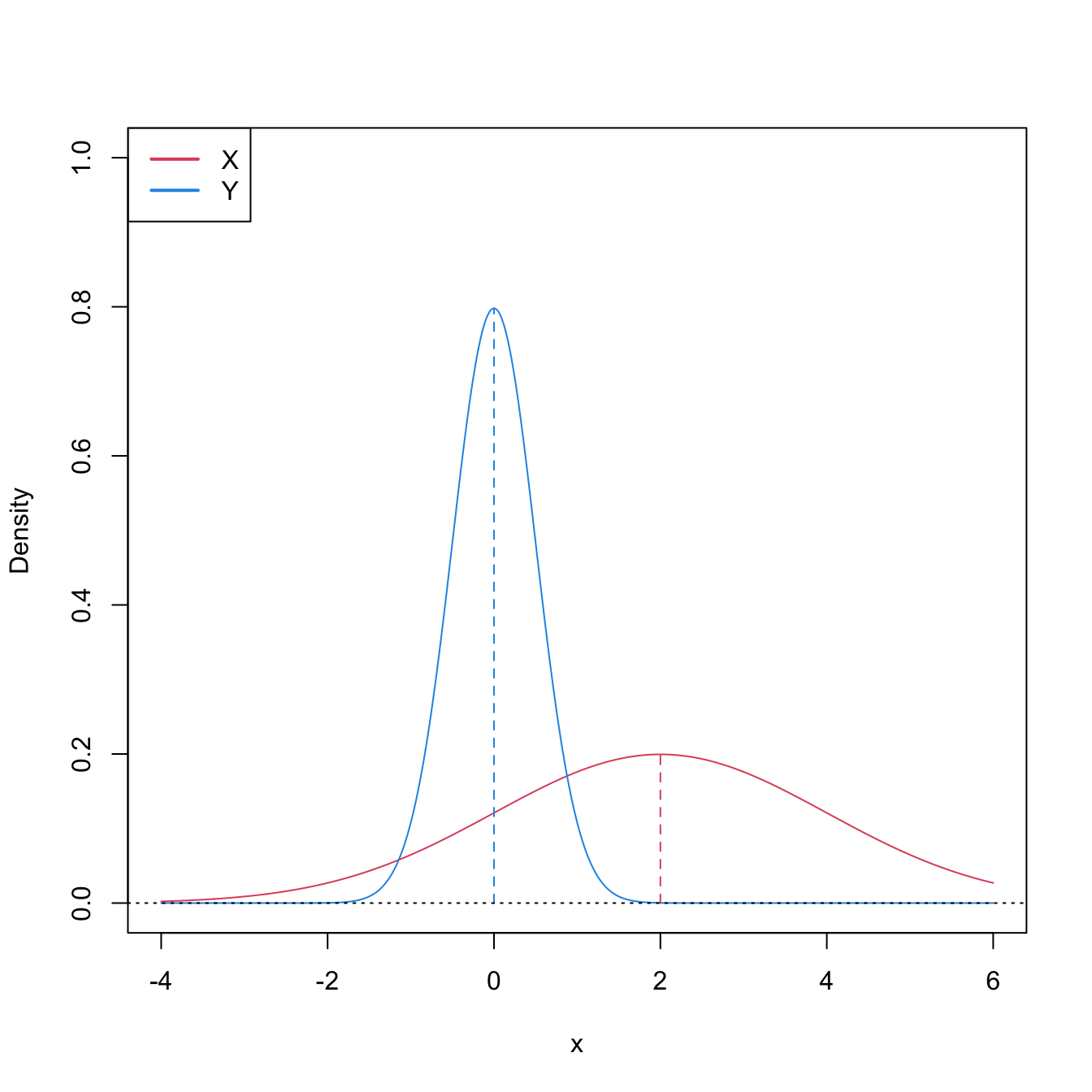

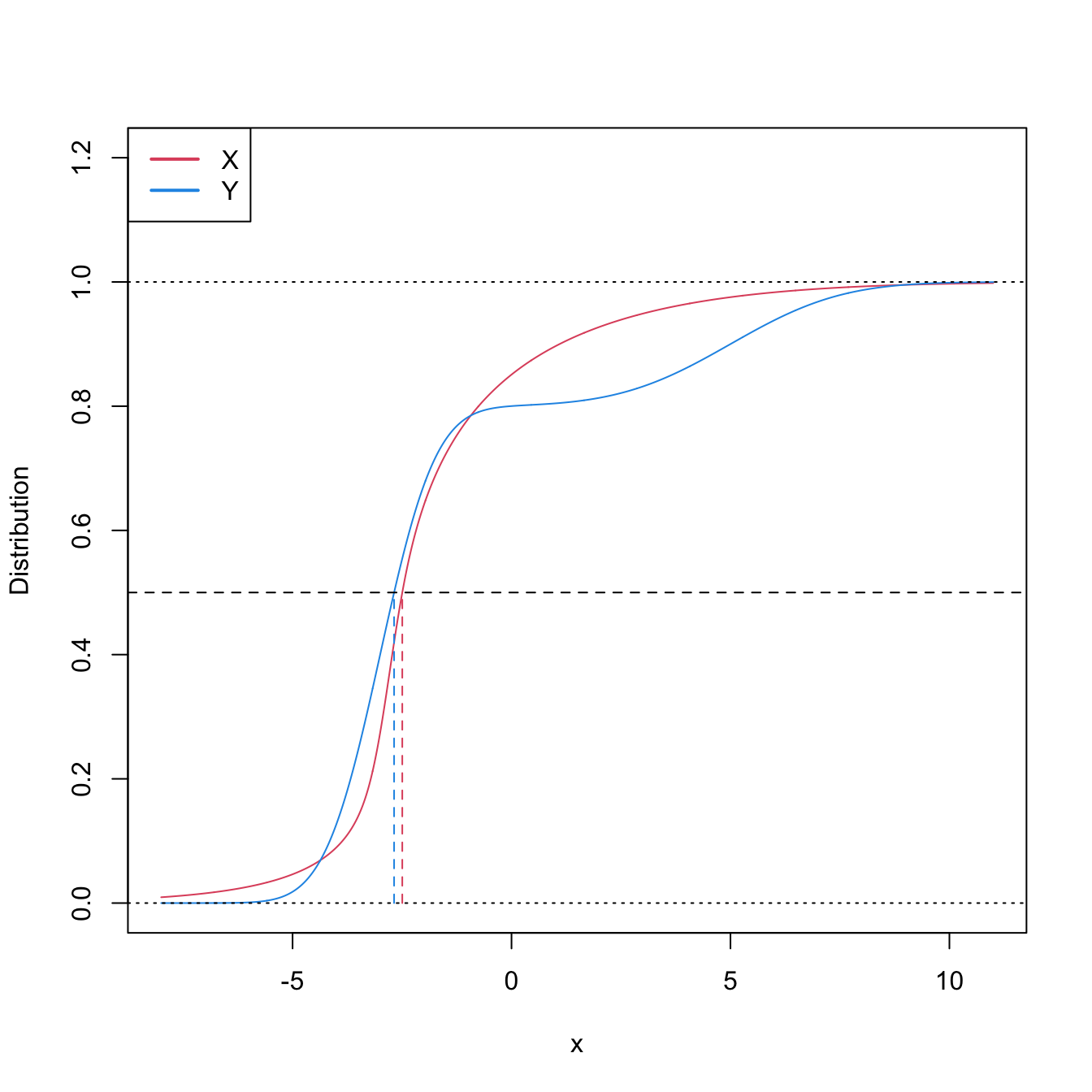

Stochastic dominance is an intuitive concept to interpret \(H_1:F<G,\) although it is a stronger statement on the relation of \(F\) and \(G.\) A more accurate, yet still intuitive, way of regarding \(H_1:F<G\) is as a local stochastic dominance: \(X\) is stochastically greater than \(Y\) only for some specific thresholds \(x\in\mathbb{R}.\)235 Figures 6.6 and 6.8 give two examples of presence/absence of stochastic dominance where \(H_1:F<G\) holds.

Figure 6.6: Pdfs and cdfs of \(X\sim\mathcal{N}(2,1)\) and \(Y\sim\mathcal{N}(0,1).\) \(X\) is stochastically greater than \(Y,\) which is visualized in terms of the pdfs (shift in the mean) and cdfs (domination of \(Y\)’s cdf). The means are shown in solid vertical lines. Note that the variances of \(X\) and \(Y\) are common; compare this situation with Figure 6.8.

The ecdf-based goodness-of-fit tests seen in Section 6.1.1 can be adapted to the homogeneity problem, with varying difficulty and versatility. The two-sample Kolmogorov–Smirnov test offers the highest simplicity and versatility, yet its power is inferior to that of the two-sample Cramér–von Mises and Anderson–Darling tests.

6.2.1.1 Two-sample Kolmogorov–Smirnov test (two-sided)

Test purpose. Given \(X_1,\ldots,X_n\sim F\) and \(Y_1,\ldots,Y_m\sim G,\) it tests \(H_0: F=G\) vs. \(H_1: F\neq G\) consistently against all the alternatives in \(H_1.\)

-

Statistic definition. The test statistic uses the supremum distance between \(F_n\) and \(G_m\):236

\[\begin{align*} D_{n,m}:=\sqrt{\frac{nm}{n+m}}\sup_{x\in\mathbb{R}} \left|F_n(x)-G_m(x)\right|. \end{align*}\]

If \(H_0:F=G\) holds, then \(D_{n,m}\) tends to be small. Conversely, when \(F\neq G,\) larger values of \(D_{n,m}\) are expected, and the test rejects \(H_0\) when \(D_{n,m}\) is large.

-

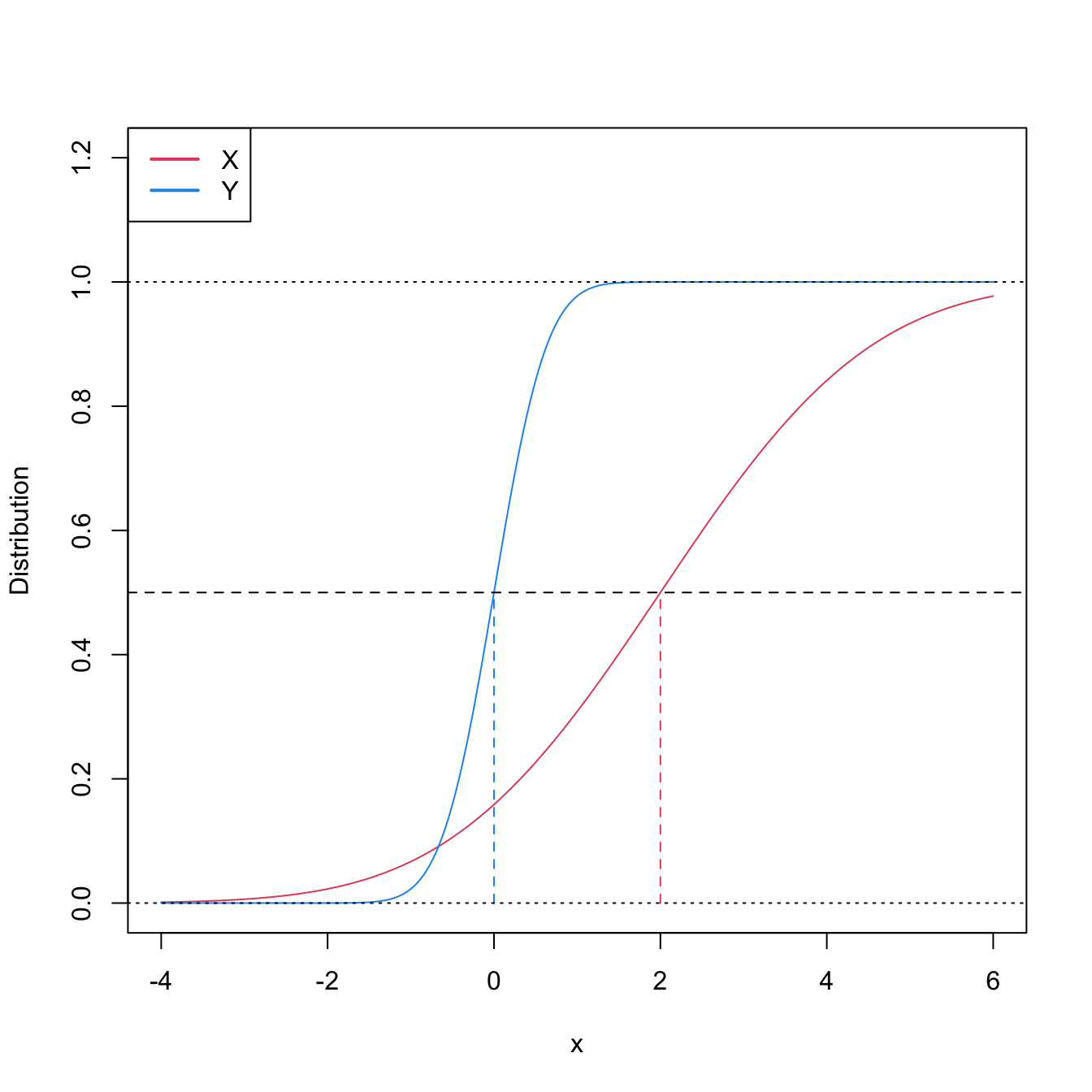

Statistic computation. The computation of \(D_{n,m}\) can be efficiently achieved by realizing that the maximum difference between \(F_n\) and \(G_m\) happens at \(x=X_i\) or \(x=Y_j,\) for a certain \(X_i\) or \(Y_j\) (observe Figure 6.7). Therefore,

\[\begin{align} D_{n,m}=&\,\max(D_{n,m,1},D_{n,m,2}),\tag{6.15}\\ D_{n,m,1}:=&\,\sqrt{\frac{nm}{n+m}}\max_{1\leq i\leq n}\left|\frac{i}{n}-G_m(X_{(i)})\right|,\nonumber\\ D_{n,m,2}:=&\,\sqrt{\frac{nm}{n+m}}\max_{1\leq j\leq m}\left|F_n(Y_{(j)})-\frac{j}{m}\right|.\nonumber \end{align}\]

-

Distribution under \(H_0.\) If \(H_0\) holds and \(F=G\) is continuous, then \(D_{n,m}\) has the same asymptotic cdf as \(D_n\) (check (6.3)).237 That is,

\[\begin{align*} \lim_{n,m\to\infty}\mathbb{P}[D_{n,m}\leq x]=K(x). \end{align*}\]

Highlights and caveats. The two-sample Kolmogorov–Smirnov test is distribution-free if \(F=G\) is continuous and the samples \(X_1,\ldots,X_n\) and \(Y_1,\ldots,Y_m\) have no ties. If these assumptions are met, then the iid sample \(X_1,\ldots,X_n,Y_1,\ldots,Y_m\stackrel{H_0}{\sim} F=G\) generates the iid sample \(U_1,\ldots,U_{n+m}\stackrel{H_0}{\sim} \mathcal{U}(0,1),\) where \(U_i:=F(X_i)\) and \(U_{n+j}:=G(Y_j),\) for \(i=1,\ldots,n,\) \(j=1,\ldots,m.\) As a consequence, the distribution of (6.2) does not depend on \(F=G.\) If \(F=G\) is not continuous or there are ties on the sample, the \(K\) function is not the true asymptotic distribution.

Implementation in R. For continuous data and continuous \(F=G,\) the test statistic \(D_{n,m}\) and the asymptotic \(p\)-value are readily available through the

ks.test()function (with its defaultalternative = "two-sided"). For discrete \(F=G,\) a test implementation can be achieved through the permutation approach to be seen in Section 6.2.3.

The construction of the two-sample Kolmogorov–Smirnov test statistic is illustrated in the following chunk of code.

# Sample data

n <- 10; m <- 10

mu1 <- 0; sd1 <- 1

mu2 <- 0; sd2 <- 2

set.seed(1998)

samp1 <- rnorm(n = n, mean = mu1, sd = sd1)

samp2 <- rnorm(n = m, mean = mu2, sd = sd2)

# Fn vs. Gm

plot(ecdf(samp1), main = "", ylab = "Probability", xlim = c(-3.5, 3.5),

ylim = c(0, 1.3))

lines(ecdf(samp2), main = "", col = 4)

# Add Dnm1

samp1_sorted <- sort(samp1)

samp2_sorted <- sort(samp2)

Dnm_1 <- abs((1:n) / n - ecdf(samp2)(samp1_sorted))

i1 <- which.max(Dnm_1)

lines(rep(samp2_sorted[i1], 2),

c(i1 / m, ecdf(samp1_sorted)(samp2_sorted[i1])),

col = 3, lwd = 2, type = "o", pch = 16, cex = 0.75)

rug(samp1, col = 1)

# Add Dnm2

Dnm_2 <- abs(ecdf(samp1)(samp2_sorted) - (1:m) / m)

i2 <- which.max(Dnm_2)

lines(rep(samp1_sorted[i2], 2),

c(i2 / n, ecdf(samp2_sorted)(samp1_sorted[i2])),

col = 2, lwd = 2, type = "o", pch = 16, cex = 0.75)

rug(samp2, col = 4)

legend("topleft", lwd = 2, col = c(1, 4, 3, 2), legend =

latex2exp::TeX(c("$F_n$", "$G_m$", "$D_{n,m,1}$", "$D_{n,m,2}$")))

Figure 6.7: Kolmogorov–Smirnov distance \(D_{n,m}=\max(D_{n,m,1},D_{n,m,2})\) for two samples of sizes \(n=m=10\) coming from \(F(\cdot)=\Phi\left(\frac{\cdot-\mu_1}{\sigma_1}\right)\) and \(G(\cdot)=\Phi\left(\frac{\cdot-\mu_2}{\sigma_2}\right),\) where \(\mu_1=\mu_2=0,\) and \(\sigma_1=1\) and \(\sigma_2=2.\)

An instance of the use of the ks.test() function for the two-sided homogeneity test is given below.

# Check the test for H0 true

set.seed(123456)

x0 <- rgamma(n = 50, shape = 1, scale = 1)

y0 <- rgamma(n = 100, shape = 1, scale = 1)

ks.test(x = x0, y = y0)

##

## Exact two-sample Kolmogorov-Smirnov test

##

## data: x0 and y0

## D = 0.14, p-value = 0.5185

## alternative hypothesis: two-sided

# Check the test for H0 false

x1 <- rgamma(n = 50, shape = 2, scale = 1)

y1 <- rgamma(n = 75, shape = 1, scale = 1)

ks.test(x = x1, y = y1)

##

## Exact two-sample Kolmogorov-Smirnov test

##

## data: x1 and y1

## D = 0.35333, p-value = 0.0008513

## alternative hypothesis: two-sided6.2.1.2 Two-sample Kolmogorov–Smirnov test (one-sided)

Test purpose. Given \(X_1,\ldots,X_n\sim F\) and \(Y_1,\ldots,Y_m\sim G,\) it tests \(H_0: F=G\) vs. \(H_1: F<G.\)238 Rejection of \(H_0\) in favor of \(H_1\) gives evidence for the local stochastic dominance of \(X\) over \(Y\) (which may or may not be global).

-

Statistic definition. The test statistic uses the maximum signed distance between \(F_n\) and \(G_m\):

\[\begin{align*} D_{n,m}^-:=\sqrt{\frac{nm}{n+m}}\sup_{x\in\mathbb{R}} (G_m(x)-F_n(x)). \end{align*}\]

If \(H_1:F<G\) holds, then \(D_{n,m}^-\) tends to have large positive values. Conversely, when \(H_0:F=G\) or \(F>G\) holds, smaller values of \(D_{n,m}^-\) are expected, possibly negative. The test rejects \(H_0\) when \(D_{n,m}^-\) is large.

-

Statistic computation. The computation of \(D_{n,m}^-\) is analogous to that of \(D_{n,m}\) given in (6.15), but removing absolute values and changing its sign:239

\[\begin{align*} D_{n,m}^-=&\,\max(D_{n,m,1}^-,D_{n,m,2}^-),\\ D_{n,m,1}^-:=&\,\sqrt{\frac{nm}{n+m}}\max_{1\leq i\leq n}\left(G_m(X_{(i)})-\frac{i}{n}\right),\\ D_{n,m,2}^-:=&\,\sqrt{\frac{nm}{n+m}}\max_{1\leq j\leq m}\left(\frac{j}{m}-F_n(Y_{(j)})\right). \end{align*}\]

Distribution under \(H_0.\) If \(H_0\) holds and \(F=G\) is continuous, then \(D_{n,m}^-\) has the same asymptotic cdf as \(D_{n,m}\).

Highlights and caveats. The one-sided two-sample Kolmogorov–Smirnov test is also distribution-free if \(F=G\) is continuous and the samples \(X_1,\ldots,X_n\) and \(Y_1,\ldots,Y_m\) have no ties.

Implementation in R. For continuous data and continuous \(F=G,\) the test statistic \(D_{n,m}^-\) and the asymptotic \(p\)-value are readily available through the

ks.test()function. The one-sided test is carried out ifalternative = "less"oralternative = "greater"(not the defaults). The argumentalternativemeans the direction of cdf dominance:"less"\(\equiv F\boldsymbol{<}G;\)"greater"\(\equiv F\boldsymbol{>}G.\)240 \(\!\!^,\)241 Permutations (see Section 6.2.3) can be used for obtaining non-asymptotic \(p\)-values and dealing with discrete samples.

# Check the test for H0 true

set.seed(123456)

x0 <- rgamma(n = 50, shape = 1, scale = 1)

y0 <- rgamma(n = 100, shape = 1, scale = 1)

ks.test(x = x0, y = y0, alternative = "less") # H1: F < G

##

## Exact two-sample Kolmogorov-Smirnov test

##

## data: x0 and y0

## D^- = 0.08, p-value = 0.643

## alternative hypothesis: the CDF of x lies below that of y

ks.test(x = x0, y = y0, alternative = "greater") # H1: F > G

##

## Exact two-sample Kolmogorov-Smirnov test

##

## data: x0 and y0

## D^+ = 0.14, p-value = 0.2638

## alternative hypothesis: the CDF of x lies above that of y

# Check the test for H0 false

x1 <- rnorm(n = 50, mean = 1, sd = 1)

y1 <- rnorm(n = 75, mean = 0, sd = 1)

ks.test(x = x1, y = y1, alternative = "less") # H1: F < G

##

## Exact two-sample Kolmogorov-Smirnov test

##

## data: x1 and y1

## D^- = 0.52667, p-value = 2.205e-08

## alternative hypothesis: the CDF of x lies below that of y

ks.test(x = x1, y = y1, alternative = "greater") # H1: F > G

##

## Exact two-sample Kolmogorov-Smirnov test

##

## data: x1 and y1

## D^+ = 1.4502e-15, p-value = 1

## alternative hypothesis: the CDF of x lies above that of y

# Interpretations:

# 1. Strong rejection of H0: F = G in favor of H1: F < G when

# alternative = "less", as in reality x1 is stochastically greater than y1.

# This outcome allows stating that "there is strong evidence supporting that

# x1 is locally stochastically greater than y1"

# 2. No rejection ("strong acceptance") of H0: F = G versus H1: F > G when

# alternative = "greater". Even if in reality x1 is stochastically greater than

# y1 (so H0 is false), the alternative to which H0 is confronted is even less

# plausible. A p-value ~ 1 indicates that one is probably conducting the test

# in the uninteresting direction alternative!Exercise 6.12 Consider the two populations described in Figure 6.8. \(X\sim F\) is not stochastically greater than \(Y\sim G.\) However, \(Y\) is locally stochastically greater than \(X\) in \((-\infty,-0.75).\) Therefore, although hard to detect, the two-sample Kolmogorov–Smirnov test should eventually reject \(H_0:F=G\) in favor of \(H_1:F>G.\) Conduct a simulation study to verify how fast this rejection takes place:

- Simulate \(X_1,\ldots,X_n\sim F\) and \(Y_1,\ldots,Y_n\sim G.\)

- Apply

ks.test()with the correspondingalternativeand evaluate if it rejects \(H_0\) at a significance level \(\alpha.\) - Repeat Steps 1–2 \(M=100\) times and approximate the rejection probability by Monte Carlo.

- Perform Steps 1–3 for a suitably increasing sequence of sample sizes \(n,\) then plot the estimated power as a function of \(n.\) Consider a sequence of sample sizes that shows the power curve converging to one.

- Compare the previous curve against the rejection probability curves for \(H_1:F<G\) and \(H_1:F\neq G.\)

- Once you have a working solution, increase \(M\) to \(M=1,000.\)

- Summarize your conclusions.

6.2.1.3 Two-sample Cramér–von Mises test

Test purpose. Given \(X_1,\ldots,X_n\sim F,\) it tests \(H_0: F=G\) vs. \(H_1: F\neq G\) consistently against all the alternatives in \(H_1.\)

-

Statistic definition. The test statistic proposed by Anderson (1962) uses a quadratic distance between \(F_n\) and \(G_m\):

\[\begin{align} W_{n,m}^2:=\frac{nm}{n+m}\int(F_n(z)-G_m(z))^2\,\mathrm{d}H_{n+m}(z),\tag{6.16} \end{align}\]

where \(H_{n+m}\) is the ecdf of the pooled sample \(Z_1,\ldots,Z_{n+m},\) where \(Z_i:=X_i\) and \(Z_{j+n}:=Y_j,\) \(i=1,\ldots,n\) and \(j=1,\ldots,m.\)242 Therefore, \(H_{n+m}(z)=\frac{n}{n+m}F_n(z)+\frac{m}{n+m}G_m(z),\) \(z\in\mathbb{R},\) and (6.16) equals243

\[\begin{align} W_{n,m}^2=\frac{nm}{(n+m)^2}\sum_{k=1}^{n+m}(F_n(Z_k)-G_m(Z_k))^2.\tag{6.17} \end{align}\]

Statistic computation. The formula (6.17) is reasonably direct. Better computational efficiency may be obtained with ranks from the pooled sample.

Distribution under \(H_0.\) If \(H_0\) holds and \(F=G\) is continuous, then \(W_{n,m}^2\) has the same asymptotic cdf as \(W_n^2\) (see (6.6)).

Highlights and caveats. The two-sample Cramér–von Mises test is also distribution-free if \(F=G\) is continuous and the sample has no ties. Otherwise, (6.6) is not the true asymptotic distribution. As in the one-sample case, empirical evidence suggests that the Cramér–von Mises test is often more powerful than the Kolmogorov–Smirnov test. However, the Cramér–von Mises is less versatile, since it does not admit simple modifications to test against one-sided alternatives.

Implementation in R. See below for the statistic implementation. Asymptotic \(p\)-values can be obtained using

goftest::pCvM(). Permutations (see Section 6.2.3) can be used for obtaining non-asymptotic \(p\)-values and dealing with discrete samples.

# Two-sample Cramér-von Mises statistic

cvm2_stat <- function(x, y) {

# Sample sizes

n <- length(x)

m <- length(y)

# Pooled sample

z <- c(x, y)

# Statistic computation via ecdf()

(n * m / (n + m)^2) * sum((ecdf(x)(z) - ecdf(y)(z))^2)

}

# Check the test for H0 true

set.seed(123456)

x0 <- rgamma(n = 50, shape = 1, scale = 1)

y0 <- rgamma(n = 100, shape = 1, scale = 1)

cvm0 <- cvm2_stat(x = x0, y = y0)

pval0 <- 1 - goftest::pCvM(q = cvm0)

c("statistic" = cvm0, "p-value"= pval0)

## statistic p-value

## 0.1294889 0.4585971

# Check the test for H0 false

x1 <- rgamma(n = 50, shape = 2, scale = 1)

y1 <- rgamma(n = 75, shape = 1, scale = 1)

cvm1 <- cvm2_stat(x = x1, y = y1)

pval1 <- 1 - goftest::pCvM(q = cvm1)

c("statistic" = cvm1, "p-value"= pval1)

## statistic p-value

## 1.3283733333 0.00042645986.2.1.4 Two-sample Anderson–Darling test

Test purpose. Given \(X_1,\ldots,X_n\sim F,\) it tests \(H_0: F=G\) vs. \(H_1: F\neq G\) consistently against all the alternatives in \(H_1.\)

-

Statistic definition. The test statistic proposed by Pettitt (1976) uses a weighted quadratic distance between \(F_n\) and \(G_m\):

\[\begin{align} A_{n,m}^2:=\frac{nm}{n+m}\int\frac{(F_n(z)-G_m(z))^2}{H_{n+m}(z)(1-H_{n+m}(z))}\,\mathrm{d}H_{n+m}(z),\tag{6.18} \end{align}\]

where \(H_{n+m}\) is the ecdf of \(Z_1,\ldots,Z_{n+m}.\) Therefore, (6.18) equals

\[\begin{align} A_{n,m}^2=\frac{nm}{(n+m)^2}\sum_{k=1}^{n+m}\frac{(F_n(Z_k)-G_m(Z_k))^2}{H_{n+m}(Z_k)(1-H_{n+m}(Z_k))}.\tag{6.19} \end{align}\]

In (6.19), it is implicitly assumed that the largest observation of the pooled sample, \(Z_{(n+m)},\) is excluded from the sum, since \(H_{n+m}(Z_{(n+m)})=1.\)244

-

Statistic computation. The formula (6.19) is reasonably direct for computation. Better computational efficiency may be obtained with ranks from the pooled sample. The implicit exclusion of \(Z_{(n+m)}\) from the sum in (6.19) can be made explicit with

\[\begin{align*} A_{n,m}^2=\frac{nm}{(n+m)^2}\sum_{k=1}^{n+m-1}\frac{(F_n(Z_{(k)})-G_m(Z_{(k)}))^2}{H_{n+m}(Z_{(k)})(1-H_{n+m}(Z_{(k)}))}. \end{align*}\]

Distribution under \(H_0.\) If \(H_0\) holds and \(F=G\) is continuous, then \(A_{n,m}^2\) has the same asymptotic cdf as \(A_n^2\) (see (6.8)).

Highlights and caveats. The two-sample Anderson–Darling test is also distribution-free if \(F=G\) is continuous and the sample has no ties. Otherwise, (6.8) is not the true asymptotic distribution. As in the one-sample case, empirical evidence suggests that the Anderson–Darling test is often more powerful than the Kolmogorov–Smirnov and Cramér–von Mises tests. The two-sample Anderson–Darling test is also less versatile, since it does not admit simple modifications to test against one-sided alternatives.

Implementation in R. See below for the statistic implementation. Asymptotic \(p\)-values can be obtained using

goftest::pAD(). Permutations (see Section 6.2.3) can be used for obtaining non-asymptotic \(p\)-values and dealing with discrete samples.

# Two-sample Anderson-Darling statistic

ad2_stat <- function(x, y) {

# Sample sizes

n <- length(x)

m <- length(y)

# Pooled sample and pooled ecdf

z <- c(x, y)

z <- z[-which.max(z)] # Exclude the largest point

H <- rank(z) / (n + m)

# Statistic computation via ecdf()

(n * m / (n + m)^2) * sum((ecdf(x)(z) - ecdf(y)(z))^2 / ((1 - H) * H))

}

# Check the test for H0 true

set.seed(123456)

x0 <- rgamma(n = 50, shape = 1, scale = 1)

y0 <- rgamma(n = 100, shape = 1, scale = 1)

ad0 <- ad2_stat(x = x0, y = y0)

pval0 <- 1 - goftest::pAD(q = ad0)

c("statistic" = ad0, "p-value"= pval0)

## statistic p-value

## 0.8603617 0.4394751

# Check the test for H0 false

x1 <- rgamma(n = 50, shape = 2, scale = 1)

y1 <- rgamma(n = 75, shape = 1, scale = 1)

ad1 <- ad2_stat(x = x1, y = y1)

pval1 <- 1 - goftest::pAD(q = ad1)

c("statistic" = ad1, "p-value"= pval1)

## statistic p-value

## 6.7978295422 0.0004109238Exercise 6.13 Verify the correctness of the asymptotic null distributions of \(W_{n,m}^2\) and \(A_{n,m}^2.\) To do so:

- Simulate \(M=1,000\) pairs of samples of sizes \(n=200\) and \(m=150\) under \(H_0.\)

- Obtain the statistics \(W_{n,m;1}^2,\ldots, W_{n,m;M}^2\) and plot their ecdf.

- Overlay the asymptotic cdf of \(W_{n,m},\) provided by

goftest::pCvM()for the one-sample test. - Repeat Steps 2–3 for \(A_{n,m}^2.\)

Exercise 6.14 Implement in R a single function to properly conduct either the two-sample Cramér–von Mises test or the two-sample Anderson–Darling test. The function must return a tidied "htest" object. Is your implementation of \(W_{n,m}^2\) and \(A_{n,m}^2\) able to improve cvm2_stat() and ad2_stat() in speed? Measure the running times for \(n=300\) and \(m=200\) with the microbenchmark::microbenchmark() function.

6.2.2 Specific tests for distribution shifts

Among the previous ecdf-based tests of homogeneity, only the two-sample Kolmogorov–Smirnov test was able to readily deal with one-sided alternatives. However, this test is often more conservative than the Cramér–von Mises and the Anderson–Darling, so it is apparent that there is room for improvement. We next see two nonparametric tests, the Wilcoxon–Mann–Whitney test245 and the Wilcoxon signed-rank test,246 which are designed to detect one-sided alternatives related to \(X\) being stochastically greater than \(Y\). Both tests are more powerful than the one-sided Kolmogorov–Smirnov.

In a very vague and imprecise form, these tests can be interpreted as “nonparametric \(t\)-tests” for unpaired and paired data.247 The rationale is that, very often, the aforementioned one-sided alternatives are related to differences in the distributions produced by a shift in their main masses of probability. However, as we will see, there are many subtleties and sides to the previous interpretation. Unfortunately, these nuances are usually ignored in the literature and, as a consequence, the Wilcoxon–Mann–Whitney and Wilcoxon signed-rank tests are massively misunderstood and misused.

6.2.2.1 Wilcoxon–Mann–Whitney test

The Wilcoxon–Mann–Whitney test addresses the hypothesis testing of

\[\begin{align*} H_0:F=G\quad\text{vs.}\quad H_1:\mathbb{P}[X\geq Y]>0.5. \end{align*}\]

The alternative hypothesis \(H_1\) requires special care:

That \(\mathbb{P}[X\geq Y]>0.5\) intuitively means that the main mass of probability of \(X\) is above that of \(Y\): it is more likely to observe \(X\geq Y\) than \(X<Y.\)

-

\(H_1\) is related to \(X\) being stochastically greater than \(Y.\) Precisely, \(H_1\) is essentially248 implied by the latter:

\[\begin{align*} F(x)<G(x) \text{ for all } x\in\mathbb{R} \implies \mathbb{P}[X\geq Y]\geq 0.5. \end{align*}\]

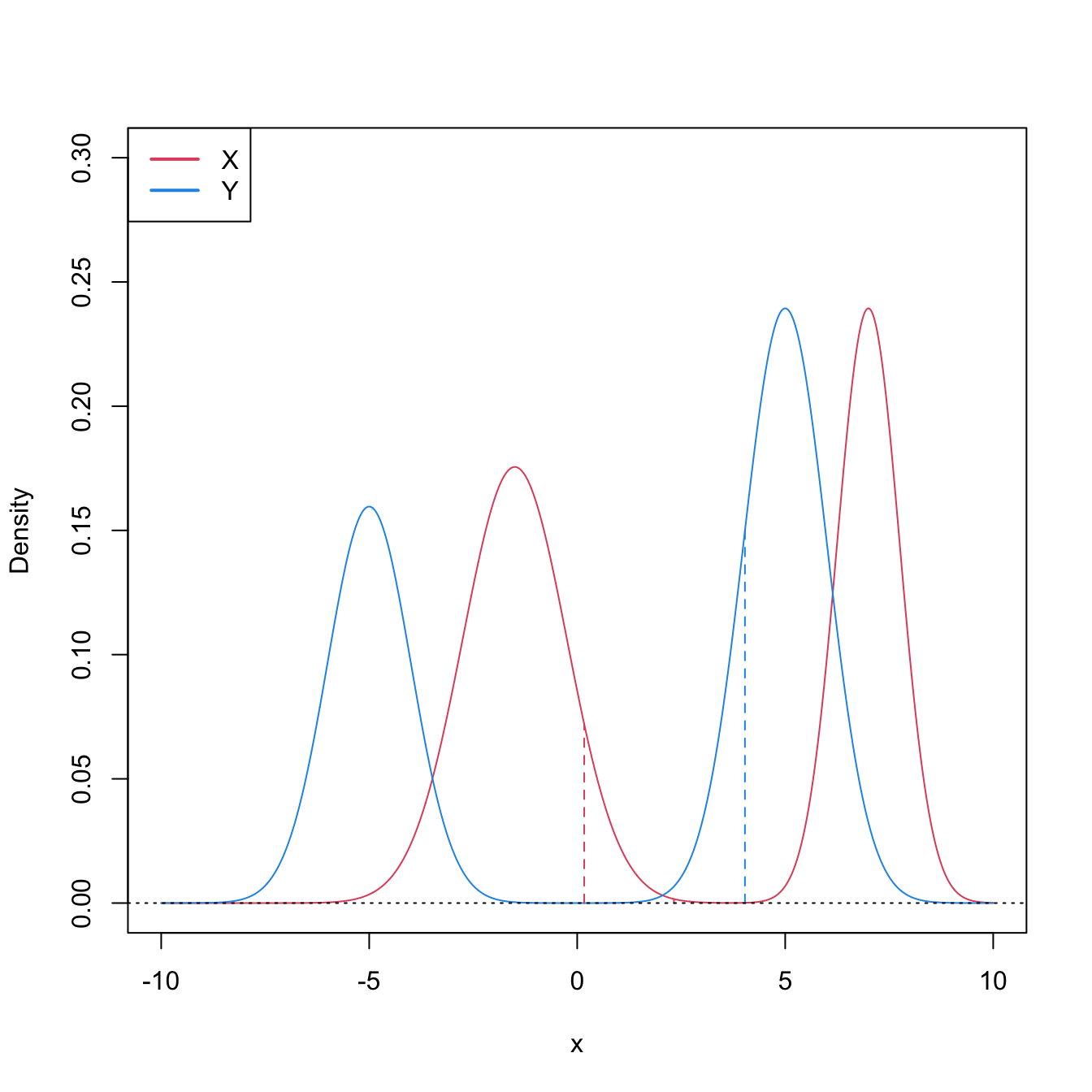

The converse implication is false (see Figure 6.8). Hence, \(H_1:\mathbb{P}[X\geq Y]>0.5\) is more specific than “\(X\) is stochastically greater than \(Y\)”. \(H_1\) neither implies nor is implied by the two-sample Kolmogorov–Smirnov’s alternative \(H_1':F<G\) (which can be regarded as local stochastic dominance).249

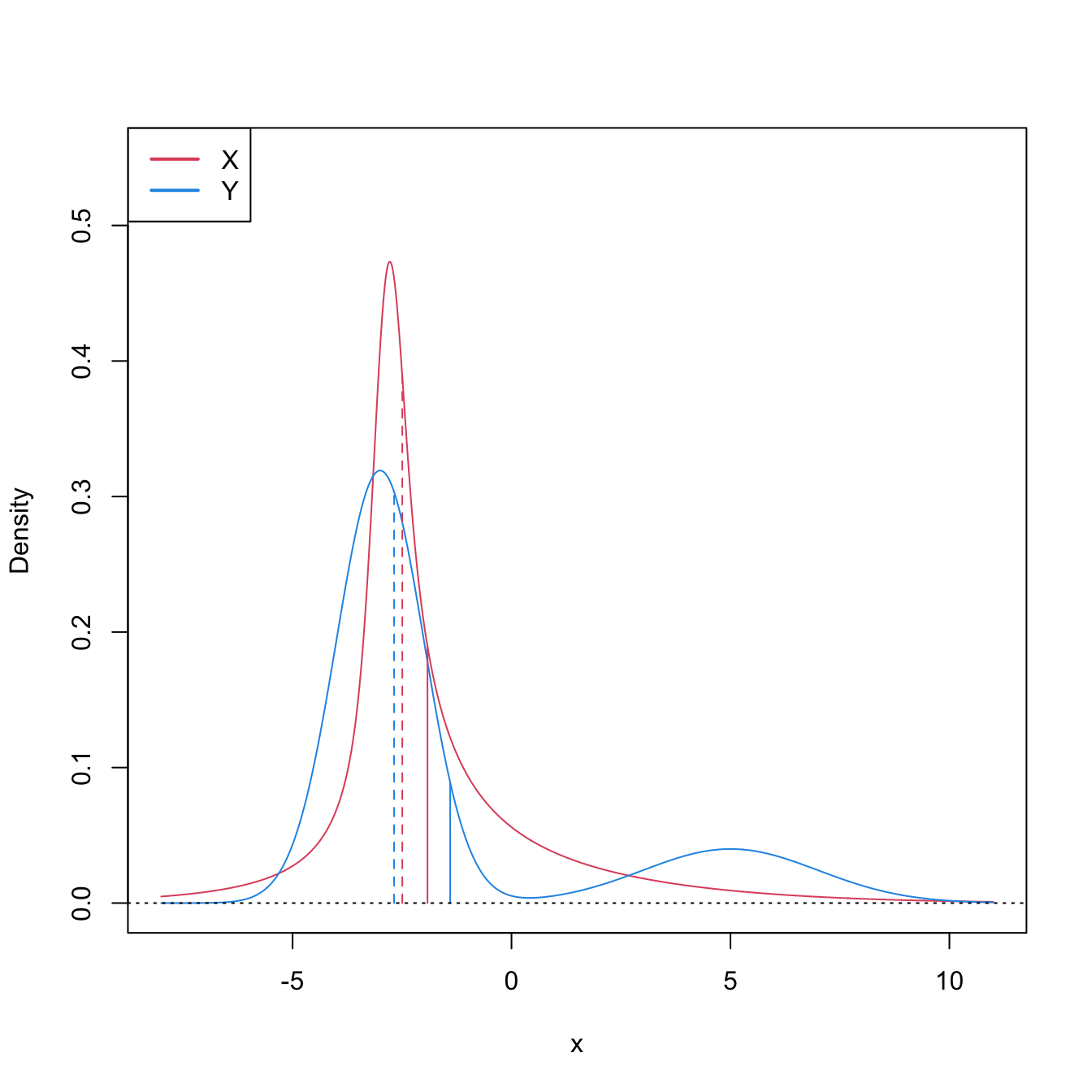

Figure 6.8: Pdfs and cdfs of \(X\sim\mathcal{N}(2,4)\) and \(Y\sim\mathcal{N}(0,0.25).\) \(X\) is not stochastically greater than \(Y,\) as the cdfs cross, but \(\mathbb{P}\lbrack X\geq Y\rbrack=0.834.\) \(Y\) is locally stochastically greater than \(X\) in \((-\infty,-0.75).\) The means are shown in vertical lines. Note that the variances of \(X\) and \(Y\) are not common; recall the difference with respect to the situation in Figure 6.6.

-

In general, \(H_1\) does not directly inform on the medians/means of \(X\) and \(Y.\) Thus, in general, the Wilcoxon–Mann–Whitney test does not compare medians/means. The probability in \(H_1\) is, in general, exogenous to medians/means:250

\[\begin{align} \mathbb{P}[X\geq Y]=\int F_Y(x)\,\mathrm{d}F_X(x).\tag{6.20} \end{align}\]

Only in very specific circumstances \(H_1\) is related to medians/means. If \(X\sim F\) and \(Y\sim G\) are symmetric random variables with medians \(m_X\) and \(m_Y\) (if the means exist, they equal the medians), then

\[\begin{align} \mathbb{P}[X\geq Y]>0.5 \iff m_X>m_Y.\tag{6.21} \end{align}\]

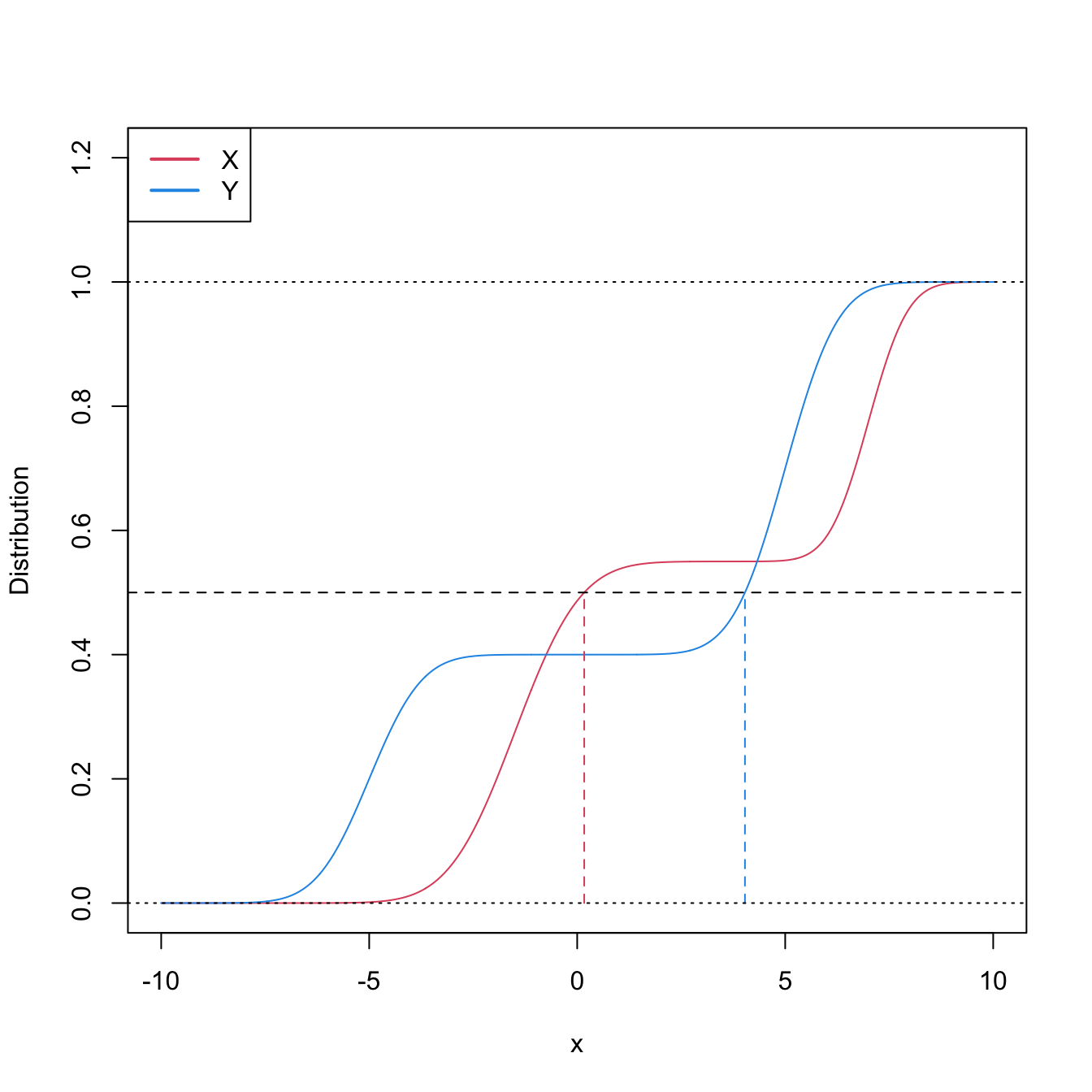

This characterization informs on the closest the Wilcoxon–Mann–Whitney test gets to a nonparametric version of the \(t\)-test.251 However, as the two counterexamples in Figures 6.9 and 6.10 respectively illustrate, (6.21) is not true in general, neither for means nor for medians. It may happen that: (i) \(\mathbb{P}[X\geq Y]>0.5\) but \(\mu_X<\mu_Y;\) or (ii) \(\mathbb{P}[X\geq Y]>0.5\) but \(m_X<m_Y.\)

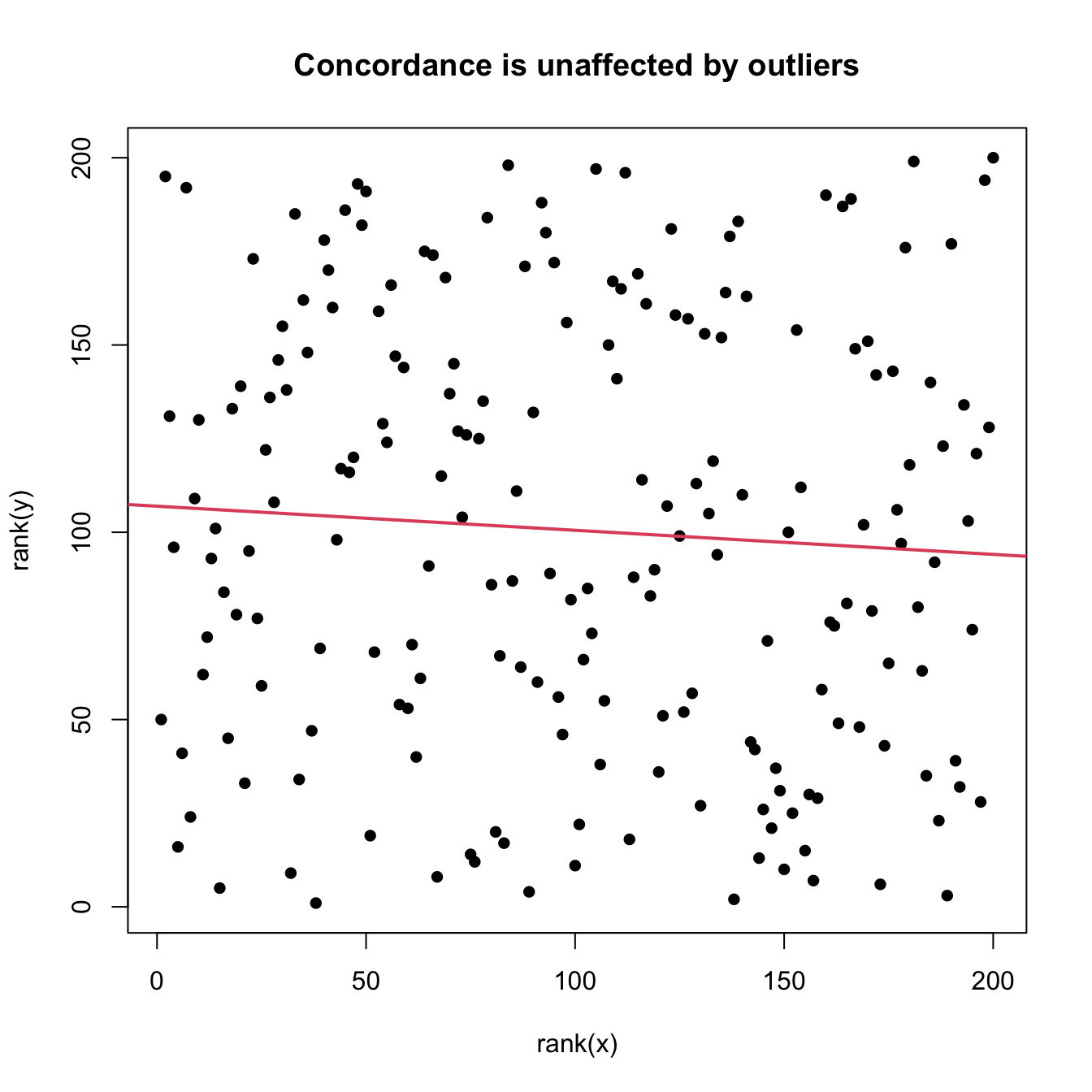

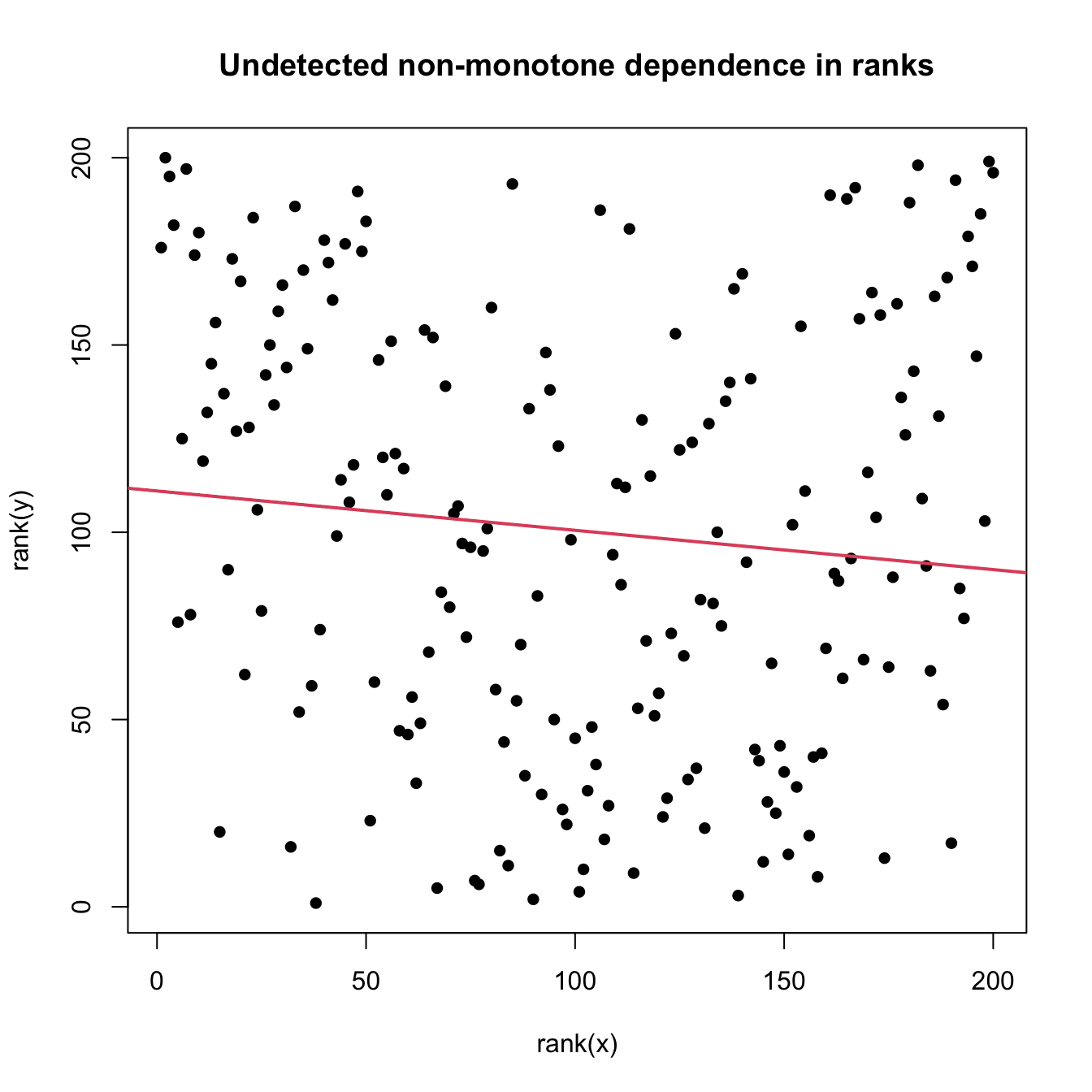

Figure 6.9: Pdfs and cdfs of \(X\) and \(Y\sim0.8\mathcal{N}(-3,1)+0.2\mathcal{N}(5,4),\) where \(X\) is distributed as the mixture nor1mix::MW.nm3 but with its standard deviations multiplied by \(5\). \(\mathbb{P}\lbrack X\geq Y\rbrack=0.5356\) but \(\mu_X<\mu_Y,\) since \(\mu_X=-1.9189\) and \(\mu_Y=-1.4.\) The means are shown in solid vertical lines; the dashed vertical lines stand for the medians \(m_X=-2.4944\) and \(m_Y=-2.6814.\)