5 Generalized linear models

As we saw in Chapter 2, linear regression assumes that the response variable \(Y\) is such that

\[\begin{align*} Y \mid (X_1=x_1,\ldots,X_p=x_p)\sim \mathcal{N}(\beta_0+\beta_1x_1+\cdots+\beta_px_p,\sigma^2) \end{align*}\]

and hence

\[\begin{align*} \mathbb{E}[Y \mid X_1=x_1,\ldots,X_p=x_p]=\beta_0+\beta_1x_1+\cdots+\beta_px_p. \end{align*}\]

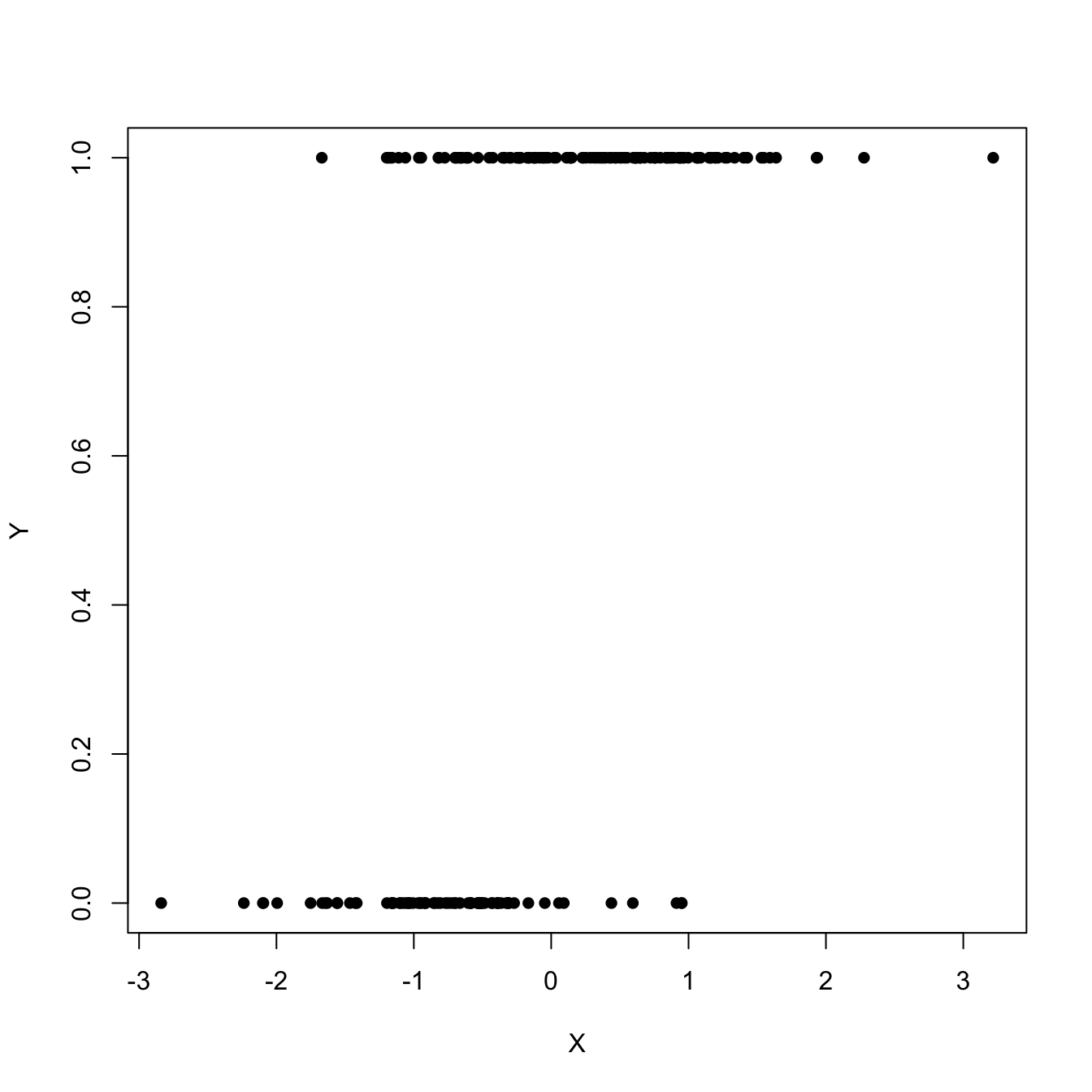

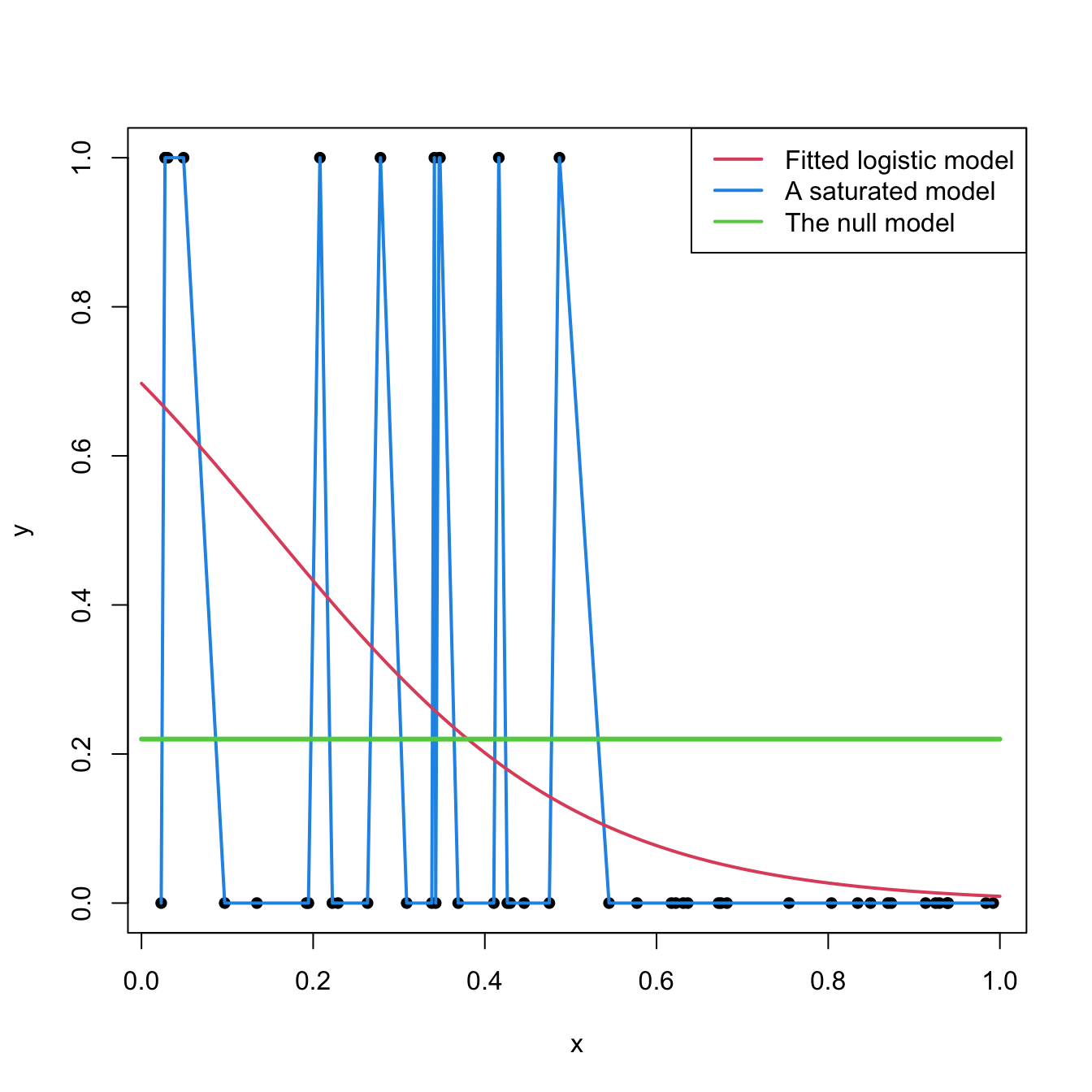

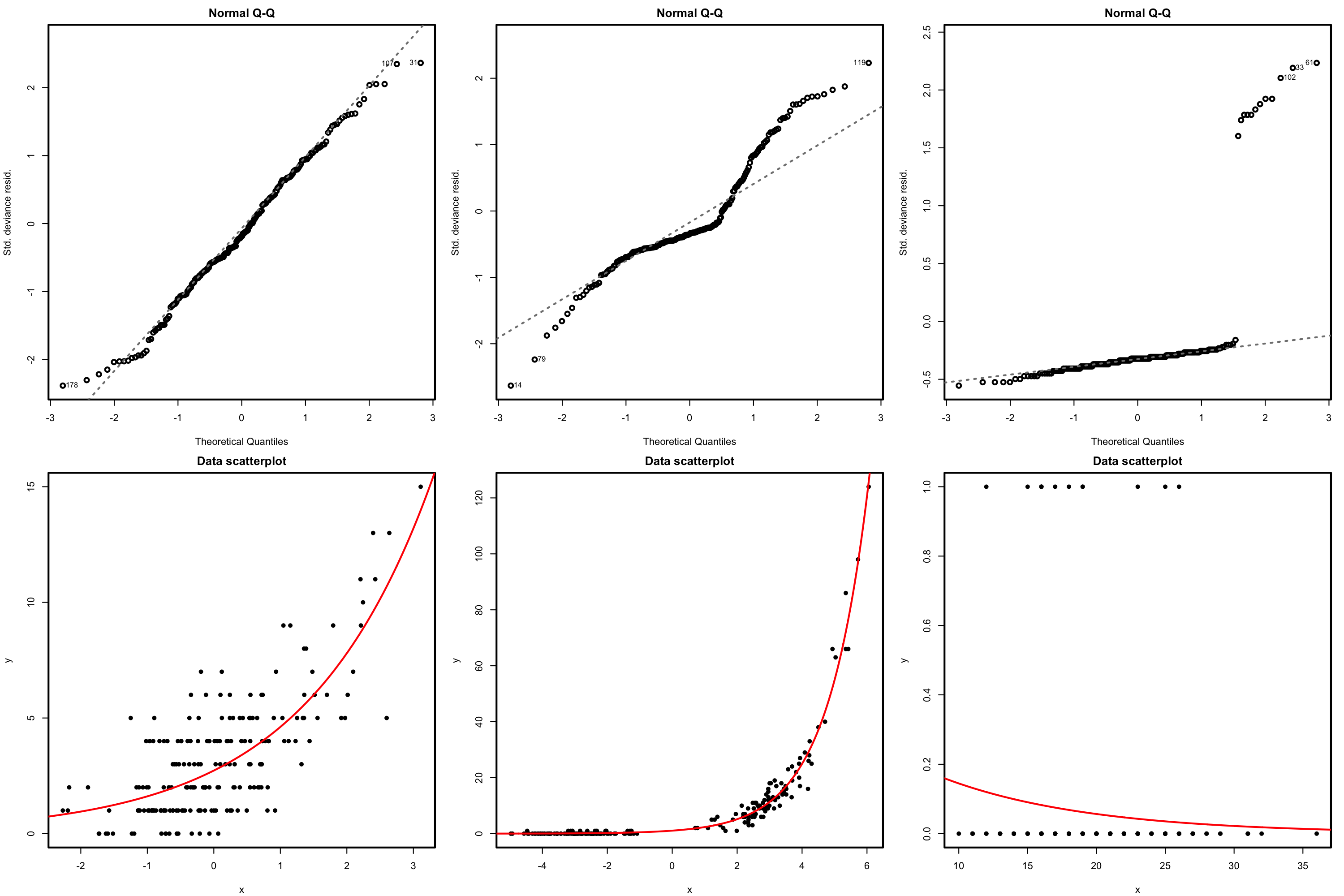

This, in particular, implies that \(Y\) is continuous. In this chapter we will see how generalized linear models can deal with other kinds of distributions for \(Y \mid (X_1=x_1,\ldots,X_p=x_p),\) particularly with discrete responses, by modeling the transformed conditional expectation. The simplest generalized linear model is logistic regression, which arises when \(Y\) is a binary response, that is, a variable encoding two categories with \(0\) and \(1.\) This model would be useful, for example, to predict \(Y\) given \(X\) from the sample \(\{(X_i,Y_i)\}_{i=1}^n\) in Figure 5.1.

Figure 5.1: Scatterplot of a sample \(\{(X_i,Y_i)\}_{i=1}^n\) sampled from a logistic regression.

5.1 Case study: The Challenger disaster

The Challenger disaster occurred on the 28th January of 1986, when the NASA Space Shuttle orbiter Challenger broke apart and disintegrated at 73 seconds into its flight, leading to the deaths of its seven crew members. The accident had serious consequences for NASA’s credibility and resulted in an interruption of 32 months in the shuttle program. The Presidential Rogers Commission (formed by astronaut Neil A. Armstrong and Nobel laureate Richard P. Feynman,147 among others) was created in order to investigate the causes of the disaster.

Figure 5.2: Challenger launch and subsequent explosion, as broadcast live by NBC in 28/01/1986. Video also available here.

The Rogers Commission elaborated a report (Presidential Commission on the Space Shuttle Challenger Accident 1986) with all the findings. The commission determined that the disintegration began with the failure of an O-ring seal in the solid rocket booster due to the unusually cold temperature (\(-0.6\) degrees Celsius; \(30.92\) degrees Fahrenheit) during the launch. This failure produced a breach of burning gas through the solid rocket booster that compromised the whole shuttle structure, resulting in its disintegration due to the extreme aerodynamic forces.

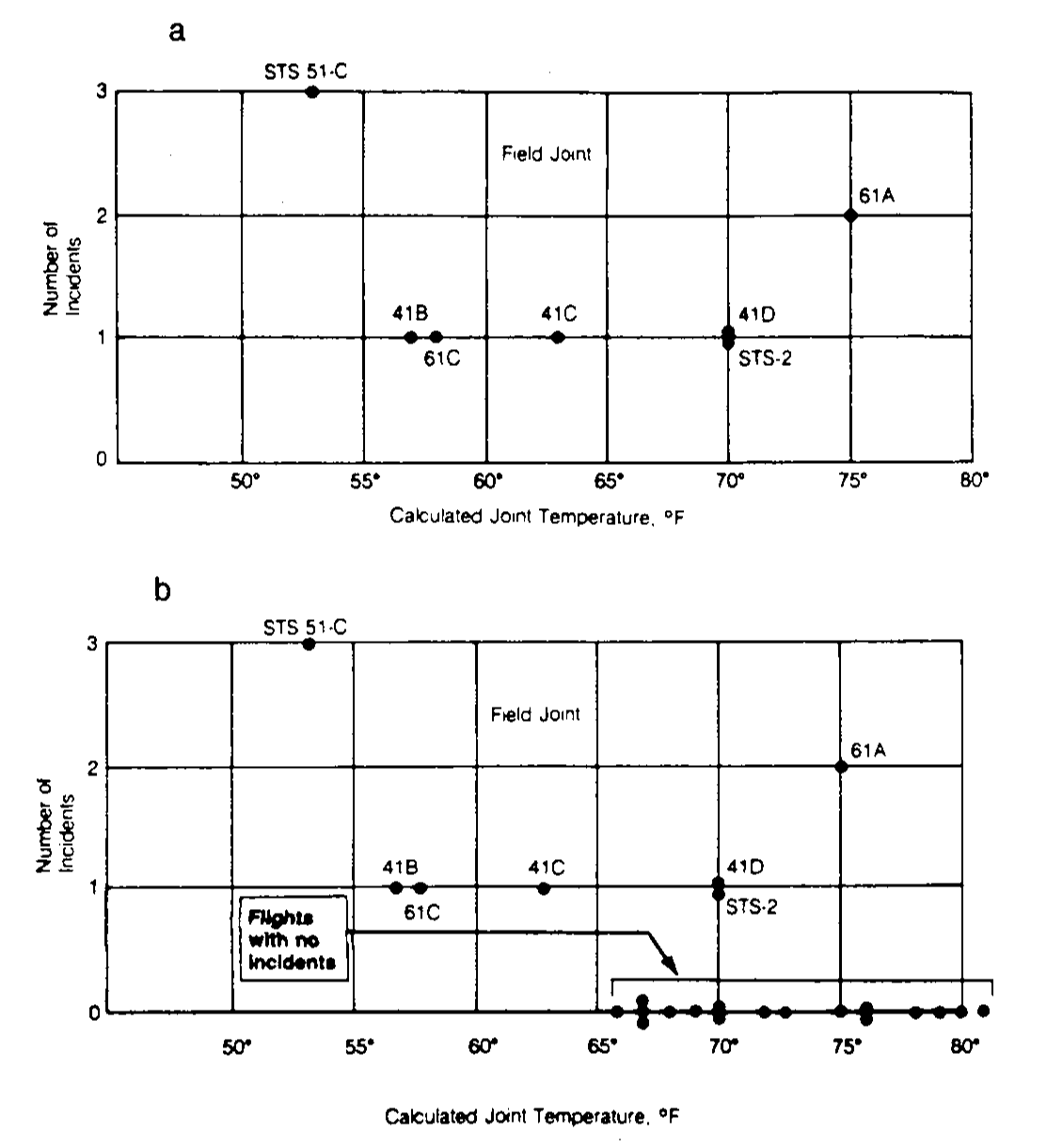

The problem with O-rings was something known. The night before the launch, there was a three-hour teleconference between rocket engineers at Thiokol, the manufacturer company of the solid rocket boosters, and NASA. In the teleconference, the effect on the O-rings performance of the low temperature forecasted for the launch was discussed, and eventually a launch decision was reached.148 Figure 5.3a influenced the data analysis conclusion that sustained the launch decision:

“Temperature data [is] not conclusive on predicting primary O-ring blowby.”

Figure 5.3: Number of incidents in the O-rings (filed joints) versus temperatures. Panel a includes only flights with incidents. Panel b contains all flights (with and without incidents).

The Rogers Commission noted a major flaw in Figure 5.3a, the one presented by the solid rocket booster engineers at the teleconference: the flights with zero incidents were excluded from the plot by the engineers because it was felt that these flights did not contribute any information about the temperature effect (Figure 5.3b). The Rogers Commission therefore concluded:

“A careful analysis of the flight history of O-ring performance would have revealed the correlation of O-ring damage in low temperature”.

The purpose of this case study, inspired by Siddhartha et al. (1989), is to quantify what was the influence of the temperature on the probability of having at least one incident related to the O-rings. Specifically, we want to address the following questions:

- Q1. Is the temperature associated with O-ring incidents?

- Q2. In which way was the temperature affecting the probability of O-ring incidents?

- Q3. What was the predicted probability of an incident in an O-ring for the temperature of the launch day?

- Q4. What was the predicted maximum probability of an incident in an O-ring if the launch were postponed until the temperature was above \(11.67\) degrees Celsius, as the vice president of engineers of Thiokol recommended?

To try to answer these questions, we analyze the challenger dataset (download), partially collected in Table 5.1. The dataset contains information regarding the state of the solid rocket boosters after launch149 for \(23\) flights prior to the Challenger launch. Each row has, among others, the following variables:

-

fail.field,fail.nozzle: binary variables indicating whether there was an incident with the O-rings in the field joints or in the nozzles of the solid rocket boosters.1codifies an incident and0its absence. For the analysis, we focus on the O-rings of the field joint as those were the most determinant for the accident. -

nfail.field,nfail.nozzle: number of incidents with the O-rings in the field joints and in the nozzles. -

temp: temperature in the day of launch, measured in degrees Celsius. -

pres.field,pres.nozzle: leak-check pressure tests of the O-rings. These tests assured that the rings would seal the joint.

| flight | date | nfails.field | nfails.nozzle | fail.field | fail.nozzle | temp |

|---|---|---|---|---|---|---|

| 1 | 12/04/81 | 0 | 0 | 0 | 0 | 18.9 |

| 2 | 12/11/81 | 1 | 0 | 1 | 0 | 21.1 |

| 3 | 22/03/82 | 0 | 0 | 0 | 0 | 20.6 |

| 5 | 11/11/82 | 0 | 0 | 0 | 0 | 20.0 |

| 6 | 04/04/83 | 0 | 2 | 0 | 1 | 19.4 |

| 7 | 18/06/83 | 0 | 0 | 0 | 0 | 22.2 |

| 8 | 30/08/83 | 0 | 0 | 0 | 0 | 22.8 |

| 9 | 28/11/83 | 0 | 0 | 0 | 0 | 21.1 |

| 41-B | 03/02/84 | 1 | 1 | 1 | 1 | 13.9 |

| 41-C | 06/04/84 | 1 | 1 | 1 | 1 | 17.2 |

| 41-D | 30/08/84 | 1 | 1 | 1 | 1 | 21.1 |

| 41-G | 05/10/84 | 0 | 0 | 0 | 0 | 25.6 |

| 51-A | 08/11/84 | 0 | 0 | 0 | 0 | 19.4 |

| 51-C | 24/01/85 | 2 | 2 | 1 | 1 | 11.7 |

| 51-D | 12/04/85 | 0 | 2 | 0 | 1 | 19.4 |

| 51-B | 29/04/85 | 0 | 2 | 0 | 1 | 23.9 |

| 51-G | 17/06/85 | 0 | 2 | 0 | 1 | 21.1 |

| 51-F | 29/07/85 | 0 | 0 | 0 | 0 | 27.2 |

| 51-I | 27/08/85 | 0 | 0 | 0 | 0 | 24.4 |

| 51-J | 03/10/85 | 0 | 0 | 0 | 0 | 26.1 |

| 61-A | 30/10/85 | 2 | 0 | 1 | 0 | 23.9 |

| 61-B | 26/11/85 | 0 | 2 | 0 | 1 | 24.4 |

| 61-C | 12/01/86 | 1 | 2 | 1 | 1 | 14.4 |

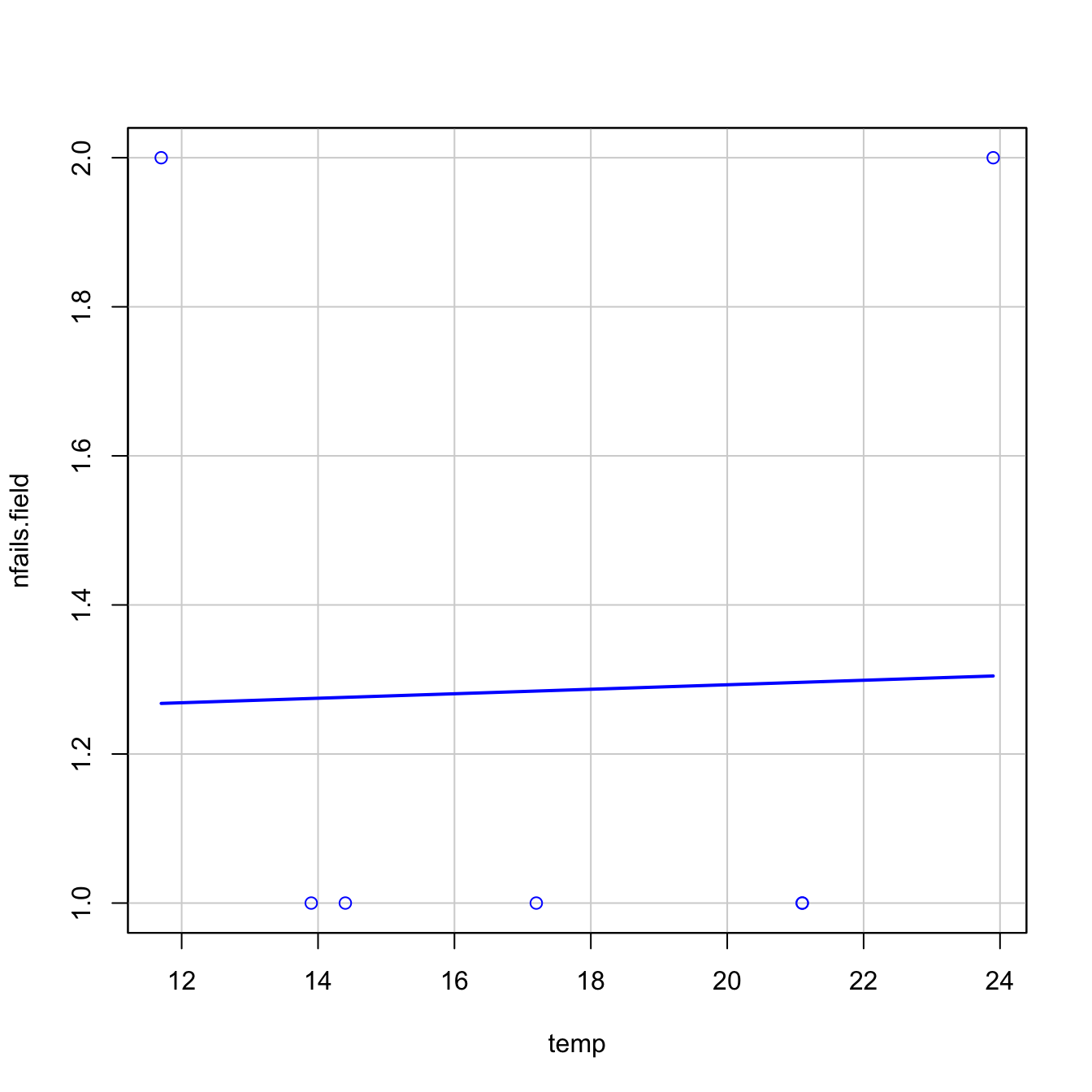

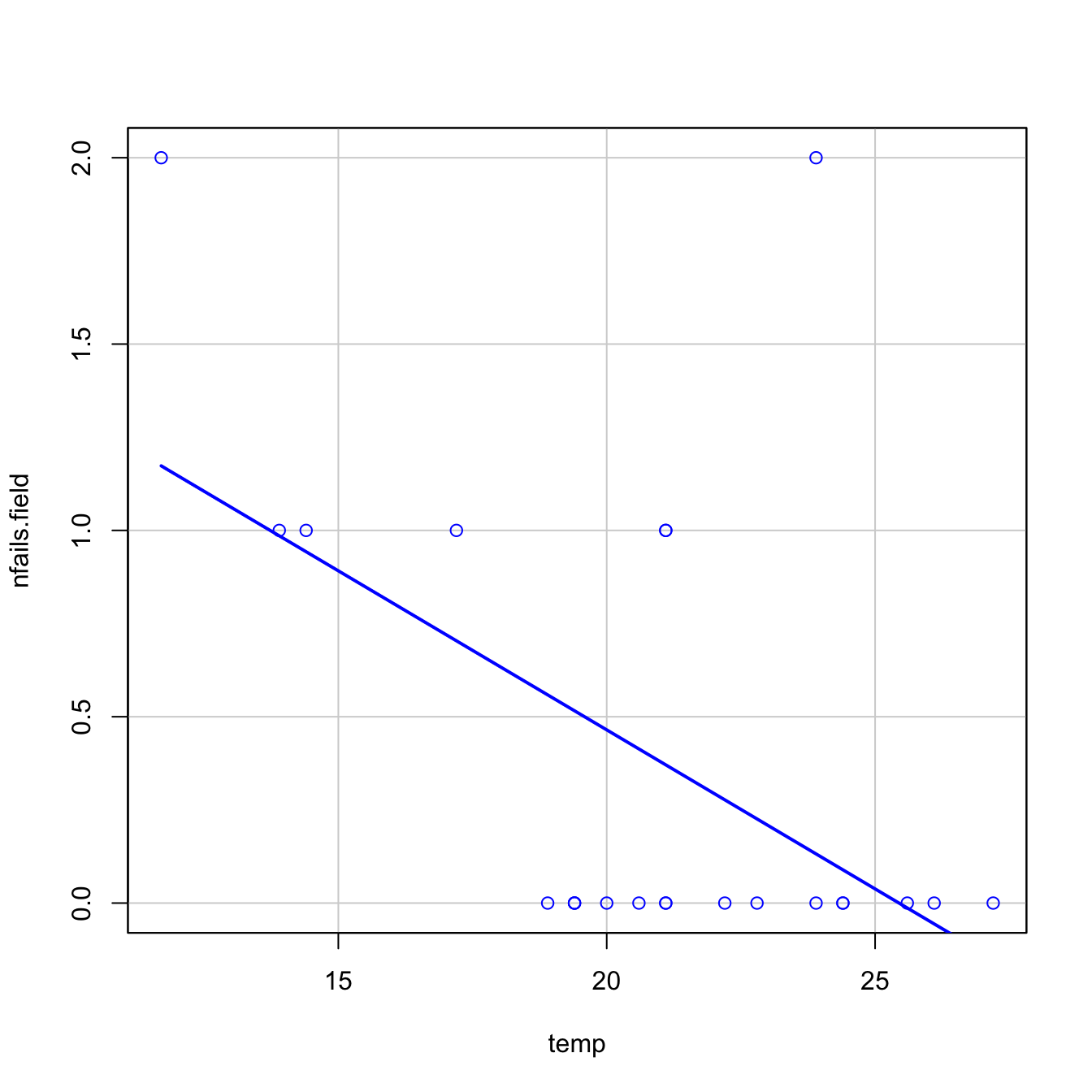

Let’s begin the analysis by replicating Figures 5.3a and 5.3b, and by checking that linear regression is not the right tool for addressing Q1–Q4.

# Read data

challenger <- read.table(file = "challenger.txt", header = TRUE, sep = "\t")

# Figures 5.3a and 5.3b

car::scatterplot(nfails.field ~ temp, smooth = FALSE, boxplots = FALSE,

data = challenger, subset = nfails.field > 0)

car::scatterplot(nfails.field ~ temp, smooth = FALSE, boxplots = FALSE,

data = challenger)

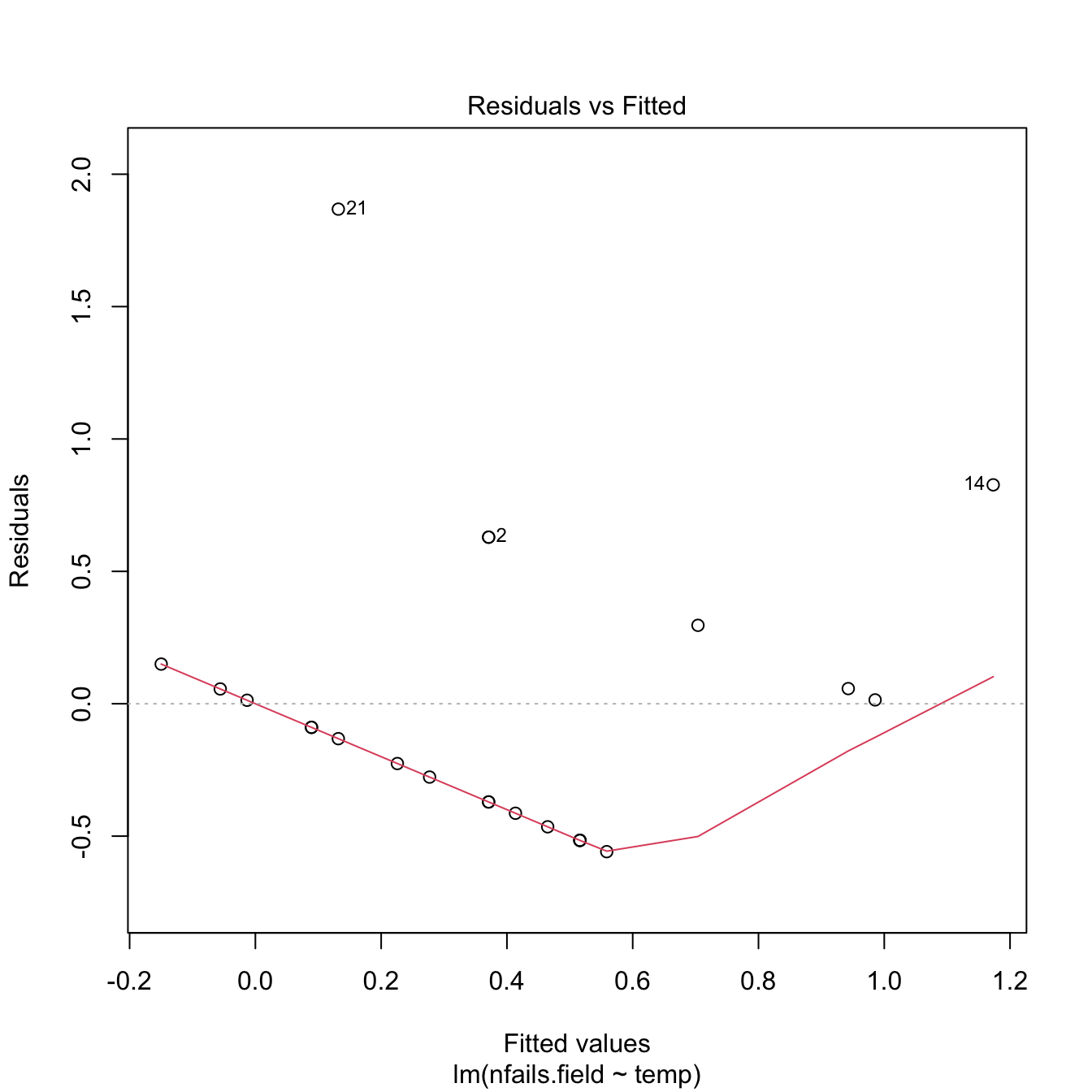

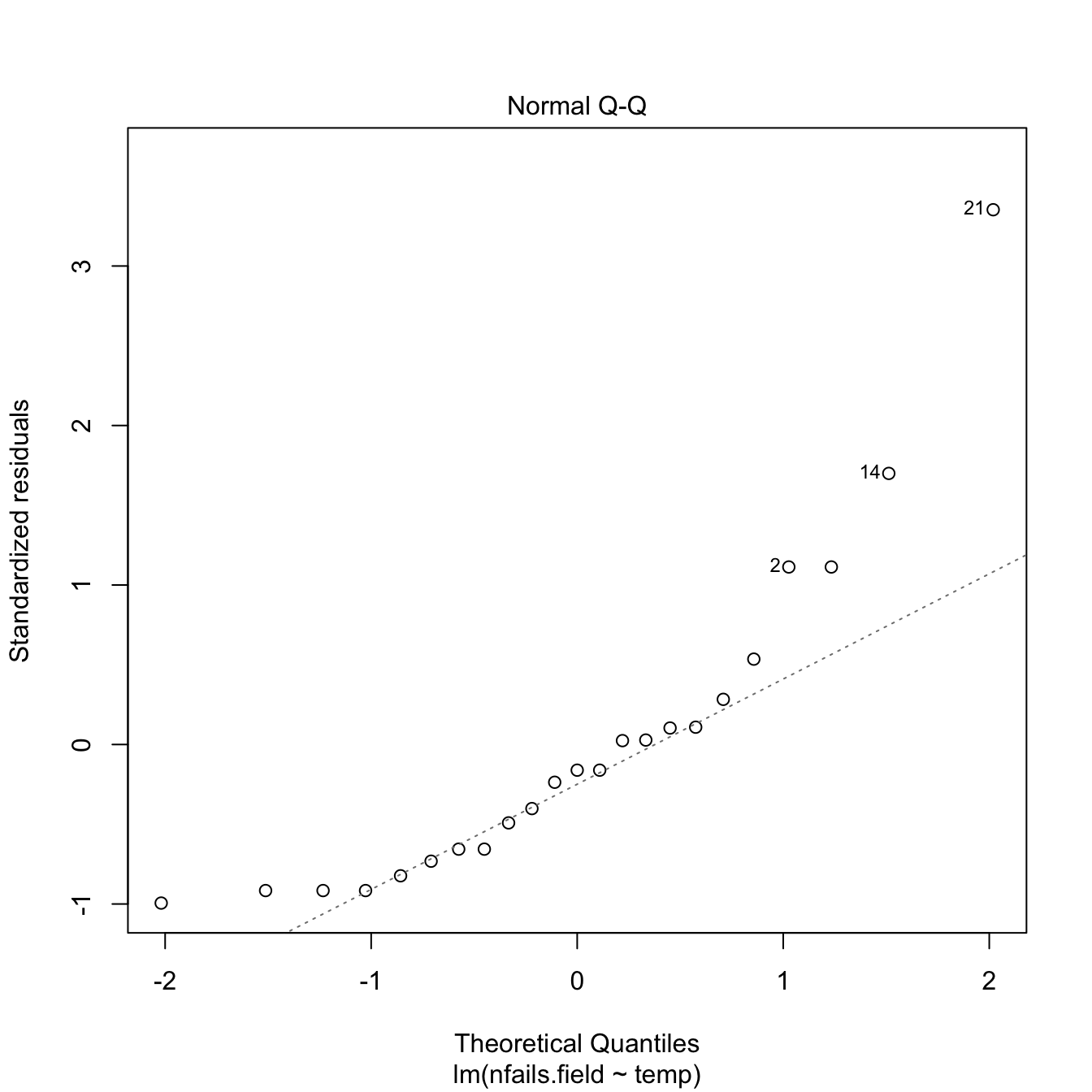

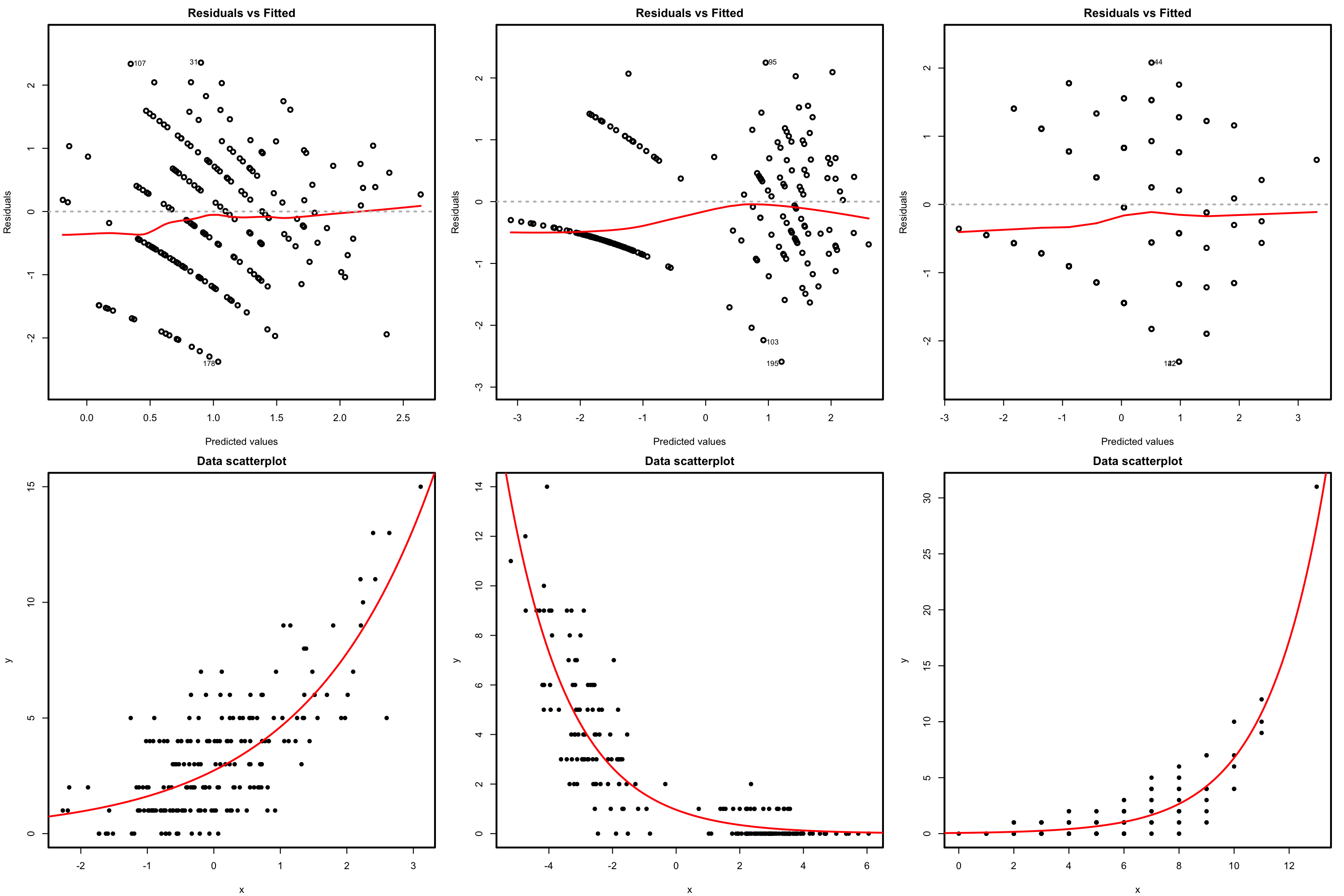

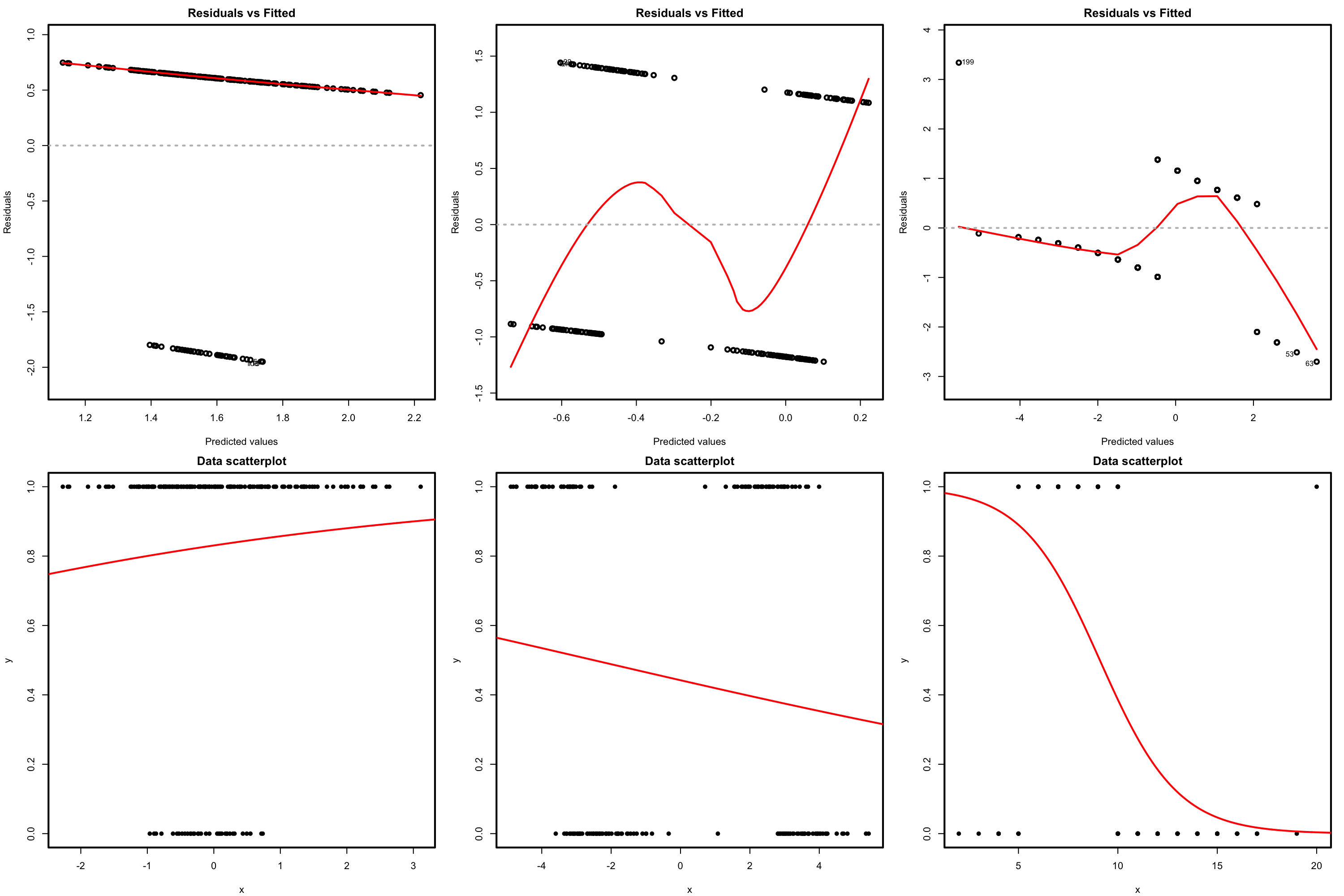

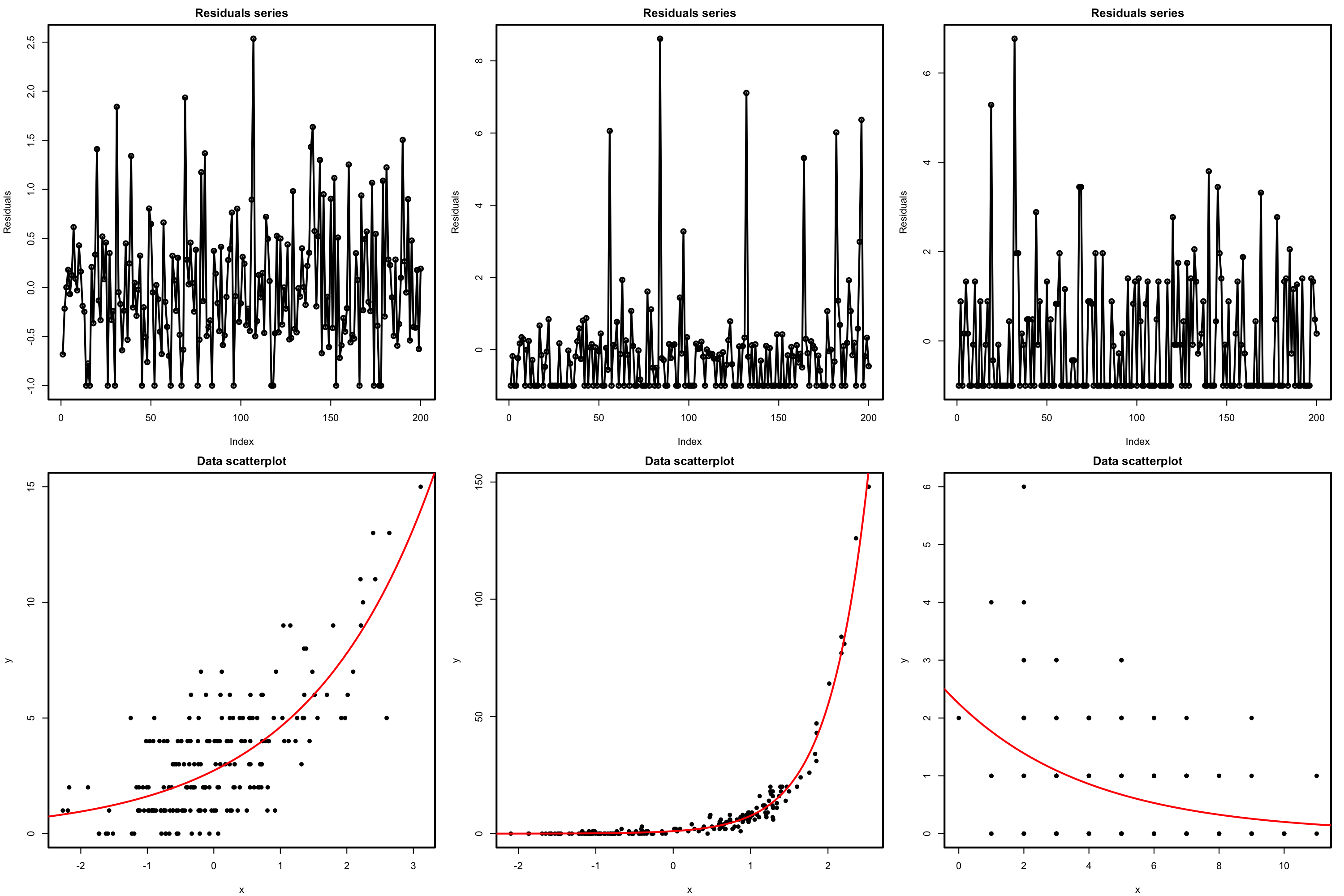

There is a fundamental problem in using linear regression for this data: the response is not continuous. As a consequence, there is no linearity and the errors around the mean are not normal (indeed, they are strongly non-normal). Let’s check this with the corresponding diagnostic plots:

# Fit linear model, and run linearity and normality diagnostics

mod <- lm(nfails.field ~ temp, data = challenger)

plot(mod, 1)

plot(mod, 2)

Although linear regression is not the appropriate tool for this data, it is able to detect the obvious difference between the two plots:

- The trend for launches with incidents is flat, hence suggesting there is no correlation on the temperature (Figure 5.3a). This was one of the arguments behind NASA’s decision to launch the rocket at a temperature of \(-0.6\) degrees Celsius.

- However, as Figure 5.3b reveals, the trend for all launches indicates a clear negative dependence between temperature and number of incidents! Think about it in this way: the minimum temperature for a launch without incidents ever recorded was above \(18\) degrees Celsius, and the Challenger was launched at \(-0.6\) without clearly knowing the effects of such low temperatures.

Along this chapter we will see the required tools for answering precisely Q1–Q4.

5.2 Model formulation and estimation

For simplicity, we first study the logistic regression and then study the general case of a generalized linear model.

5.2.1 Logistic regression

As we saw in Section 2.2, the multiple linear model described the relation between the random variables \(X_1,\ldots,X_p\) and \(Y\) by assuming a linear relation in the conditional expectation:

\[\begin{align} \mathbb{E}[Y \mid X_1=x_1,\ldots,X_p=x_p]=\beta_0+\beta_1x_1+\cdots+\beta_px_p.\tag{5.1} \end{align}\]

In addition, it made three more assumptions on the data (see Section 2.3), which resulted in the following one-line summary of the linear model:

\[\begin{align*} Y \mid (X_1=x_1,\ldots,X_p=x_p)\sim \mathcal{N}(\beta_0+\beta_1x_1+\cdots+\beta_px_p,\sigma^2). \end{align*}\]

Recall that a necessary condition for the linear model to hold is that \(Y\) is continuous, in order to satisfy the normality of the errors. Therefore, the linear model is designed for a continuous response.

The situation when \(Y\) is discrete (naturally ordered values) or categorical (non-ordered categories) requires a different treatment. The simplest situation is when \(Y\) is binary: it can only take two values, codified for convenience as \(1\) (success) and \(0\) (failure). For binary variables there is no fundamental distinction between the treatment of discrete and categorical variables. Formally, a binary variable is referred to as a Bernoulli variable:150 \(Y\sim\mathrm{Ber}(p),\) \(0\leq p\leq1\;\)151, if

\[\begin{align*} Y=\left\{\begin{array}{ll}1,&\text{with probability }p,\\0,&\text{with probability }1-p,\end{array}\right. \end{align*}\]

or, equivalently, if

\[\begin{align} \mathbb{P}[Y=y]=p^y(1-p)^{1-y},\quad y=0,1.\tag{5.2} \end{align}\]

Recall that a Bernoulli variable is completely determined by the probability \(p.\) Therefore, so are its mean and variance:

\[\begin{align*} \mathbb{E}[Y]=\mathbb{P}[Y=1]=p\quad\text{and}\quad\mathbb{V}\mathrm{ar}[Y]=p(1-p). \end{align*}\]

Assume then that \(Y\) is a Bernoulli variable and that \(X_1,\ldots,X_p\) are predictors associated with \(Y.\) The purpose in logistic regression is to model

\[\begin{align} \mathbb{E}[Y \mid X_1=x_1,\ldots,X_p=x_p]=\mathbb{P}[Y=1 \mid X_1=x_1,\ldots,X_p=x_p],\tag{5.3} \end{align}\]

that is, to model how the conditional expectation of \(Y\) or, equivalently, the conditional probability of \(Y=1,\) is changing according to particular values of the predictors. At sight of (5.1), a tempting possibility is to consider the model

\[\begin{align*} \mathbb{E}[Y \mid X_1=x_1,\ldots,X_p=x_p]=\beta_0+\beta_1x_1+\cdots+\beta_px_p=:\eta. \end{align*}\]

However, such a model will run into serious problems inevitably: negative probabilities and probabilities larger than one may happen.

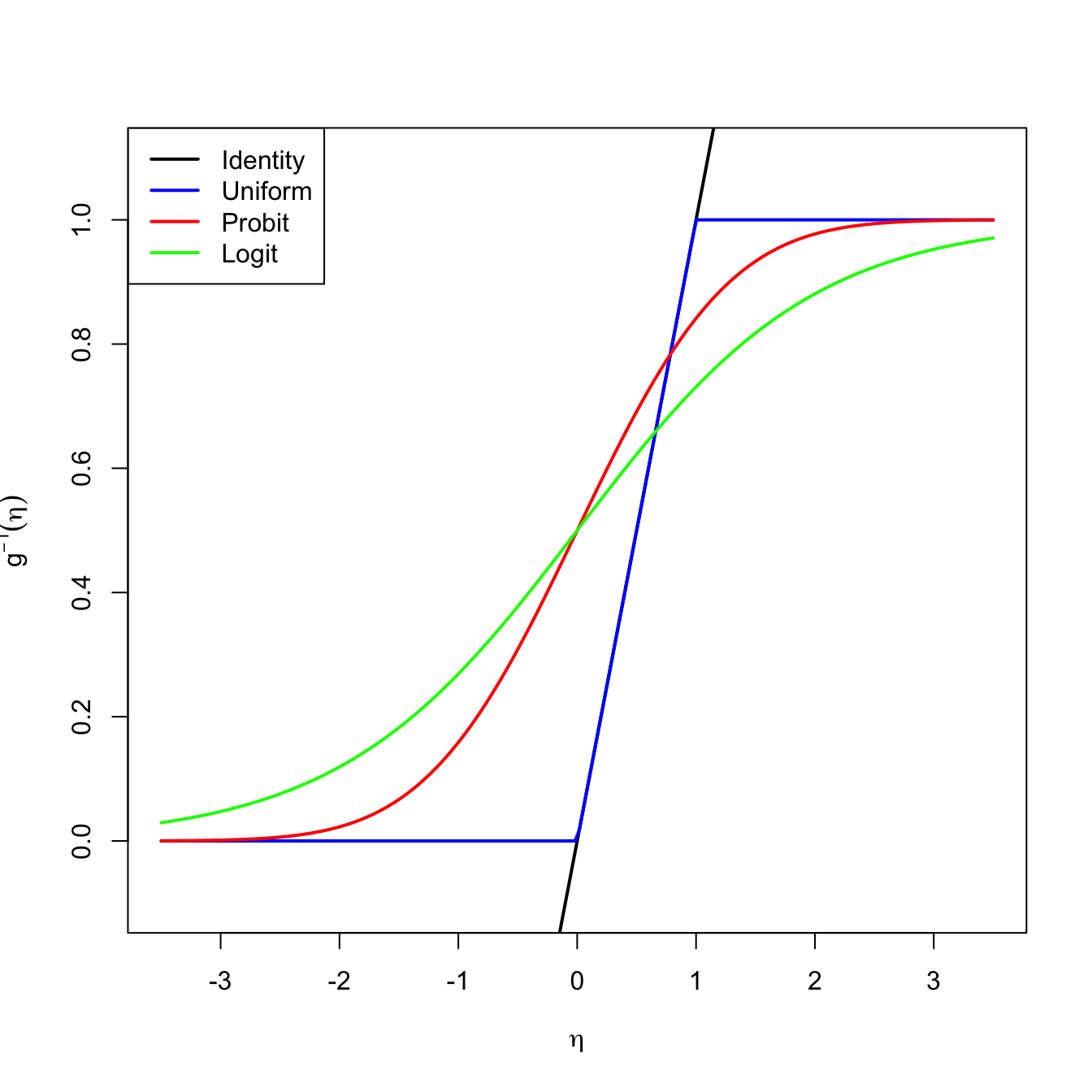

A solution is to consider a link function \(g\) to encapsulate the value of \(\mathbb{E}[Y \mid X_1=x_1,\ldots,X_p=x_p]\) and map it back to \(\mathbb{R}.\) Or, alternatively, a function \(g^{-1}\) that takes \(\eta\in\mathbb{R}\) and maps it to \([0,1],\) the support of \(\mathbb{E}[Y \mid X_1=x_1,\ldots,X_p=x_p].\) There are several link functions \(g\) with associated \(g^{-1}.\) Each link generates a different model:

- Uniform link. Based on the truncation \(g^{-1}(\eta)=\eta\mathbb{1}_{\{0<\eta<1\}}+\mathbb{1}_{\{\eta\geq1\}}.\)

- Probit link. Based on the normal cdf, that is, \(g^{-1}(\eta)=\Phi(\eta).\)

- Logit link. Based on the logistic cdf:152

\[\begin{align*} g^{-1}(\eta)=\mathrm{logistic}(\eta):=\frac{e^\eta}{1+e^\eta}=\frac{1}{1+e^{-\eta}}. \end{align*}\]

Figure 5.4: Transformations \(g^{-1}\) associated with different link functions. The transformations \(g^{-1}\) map the response of a linear regression \(\eta=\beta_0+\beta_1x_1+\cdots+\beta_px_p\) to \(\lbrack 0,1\rbrack.\)

The logistic transformation is the most employed due to its tractability, interpretability, and smoothness.153 Its inverse, \(g:[0,1]\longrightarrow\mathbb{R},\) is known as the logit function:

\[\begin{align*} \mathrm{logit}(p):=\mathrm{logistic}^{-1}(p)=\log\left(\frac{p}{1-p}\right). \end{align*}\]

In conclusion, with the logit link function we can map the domain of \(Y\) to \(\mathbb{R}\) in order to apply a linear model. The logistic model can be then equivalently stated as

\[\begin{align} \mathbb{P}[Y=1 \mid X_1=x_1,\ldots,X_p=x_p]&=\mathrm{logistic}(\eta)=\frac{1}{1+e^{-\eta}}\tag{5.4}, \end{align}\]

or as

\[\begin{align} \mathrm{logit}(\mathbb{P}[Y=1 \mid X_1=x_1,\ldots,X_p=x_p])=\eta \tag{5.5} \end{align}\]

where recall that

\[\begin{align*} \eta=\beta_0+\beta_1x_1+\cdots+\beta_px_p. \end{align*}\]

There is a clear interpretation of the role of the linear predictor \(\eta\) in (5.4) when we come back to (5.3):

- If \(\eta=0,\) then \(\mathbb{P}[Y=1 \mid X_1=x_1,\ldots,X_p=x_p]=\frac{1}{2}\) (\(Y=1\) and \(Y=0\) are equally likely).

- If \(\eta<0,\) then \(\mathbb{P}[Y=1 \mid X_1=x_1,\ldots,X_p=x_p]<\frac{1}{2}\) (\(Y=1\) is less likely).

- If \(\eta>0,\) then \(\mathbb{P}[Y=1 \mid X_1=x_1,\ldots,X_p=x_p]>\frac{1}{2}\) (\(Y=1\) is more likely).

To be more precise on the interpretation of the coefficients we need to introduce the odds. The odds is an equivalent way of expressing the distribution of probabilities in a binary variable \(Y.\) Instead of using \(p\) to characterize the distribution of \(Y,\) we can use

\[\begin{align} \mathrm{odds}(Y):=\frac{p}{1-p}=\frac{\mathbb{P}[Y=1]}{\mathbb{P}[Y=0]}.\tag{5.6} \end{align}\]

The odds is thus the ratio between the probability of success and the probability of failure.154 It is extensively used in betting.155 due to its better interpretability156 Conversely, if the odds of \(Y\) is given, we can easily know what is the probability of success \(p,\) using the inverse of (5.6):157

\[\begin{align*} p=\mathbb{P}[Y=1]=\frac{\text{odds}(Y)}{1+\text{odds}(Y)}. \end{align*}\]

Recall that the odds is a number in \([0,+\infty].\) The \(0\) and \(+\infty\) values are attained for \(p=0\) and \(p=1,\) respectively. The log-odds (or logit) is a number in \([-\infty,+\infty].\)

We can rewrite (5.4) in terms of the odds (5.6)158 so we get:

\[\begin{align} \mathrm{odds}(Y \mid X_1=x_1,\ldots,X_p=x_p)=e^{\eta}=e^{\beta_0}e^{\beta_1x_1}\cdots e^{\beta_px_p}.\tag{5.7} \end{align}\]

Alternatively, taking logarithms, we have the log-odds (or logit)

\[\begin{align} \log(\mathrm{odds}(Y \mid X_1=x_1,\ldots,X_p=x_p))=\beta_0+\beta_1x_1+\cdots+\beta_px_p.\tag{5.8} \end{align}\]

The conditional log-odds (5.8) plays the role of the conditional mean for multiple linear regression. Therefore, we have an analogous interpretation for the coefficients:

- \(\beta_0\): is the log-odds when \(X_1=\cdots=X_p=0.\)

- \(\beta_j,\) \(1\leq j\leq p\): is the additive increment of the log-odds for an increment of one unit in \(X_j=x_j,\) provided that the remaining variables \(X_1,\ldots,X_{j-1},X_{j+1},\ldots,X_p\) do not change.

The log-odds is not as easy to interpret as the odds. For that reason, an equivalent way of interpreting the coefficients, this time based on (5.7), is:

- \(e^{\beta_0}\): is the odds when \(X_1=\cdots=X_p=0.\)

- \(e^{\beta_j},\) \(1\leq j\leq p\): is the multiplicative increment of the odds for an increment of one unit in \(X_j=x_j,\) provided that the remaining variables \(X_1,\ldots,X_{j-1},X_{j+1},\ldots,X_p\) do not change. If the increment in \(X_j\) is of \(r\) units, then the multiplicative increment in the odds is \((e^{\beta_j})^r.\)

As a consequence of this last interpretation, we have:

5.2.1.1 Case study application

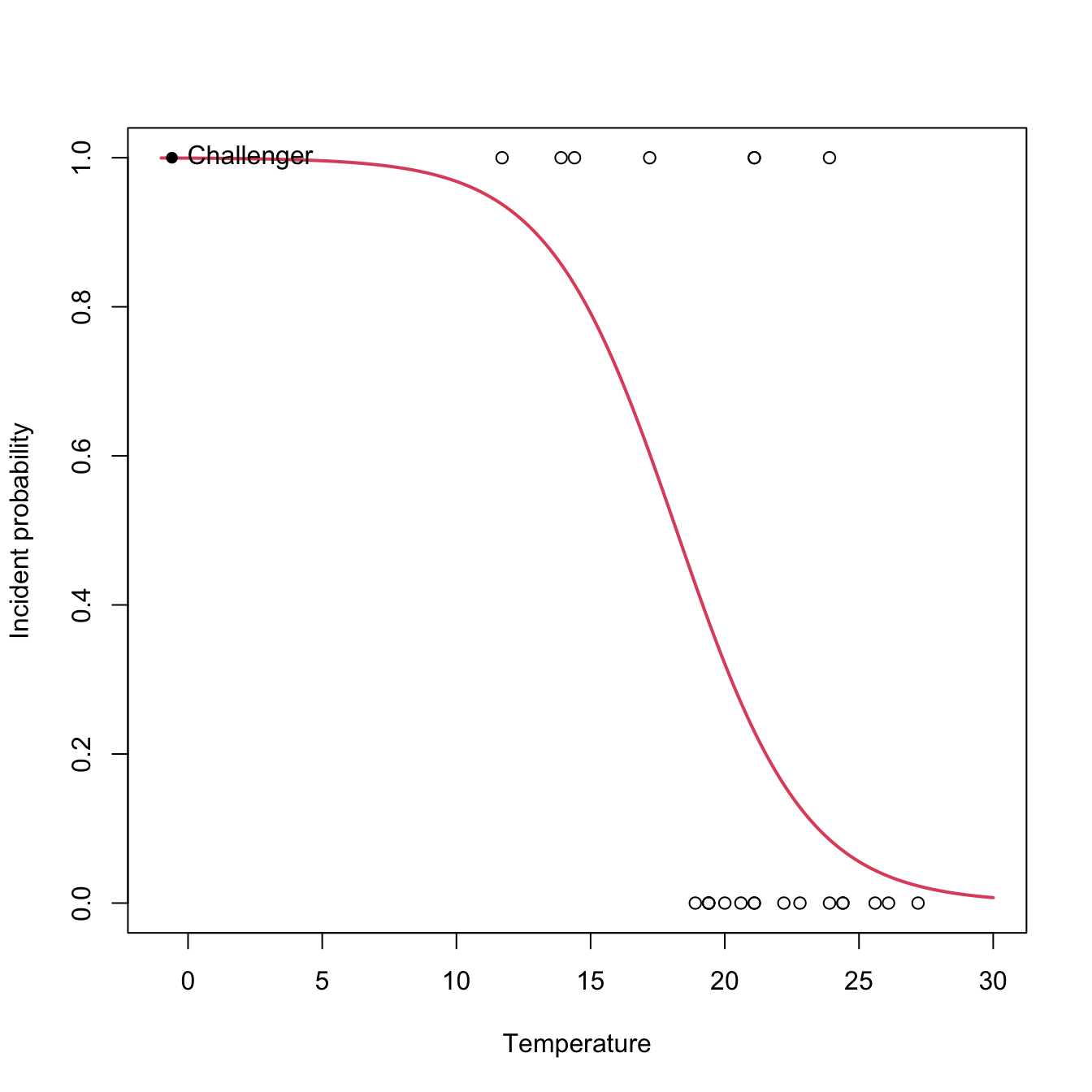

In the Challenger case study we used fail.field as an indicator of whether “there was at least an incident with the O-rings” (1 = yes, 0 = no). Let’s see if the temperature was associated with O-ring incidents (Q1). For that, we compute the logistic regression of fail.field on temp and we plot the fitted logistic curve.

# Logistic regression: computed with glm and family = "binomial"

nasa <- glm(fail.field ~ temp, family = "binomial", data = challenger)

nasa

##

## Call: glm(formula = fail.field ~ temp, family = "binomial", data = challenger)

##

## Coefficients:

## (Intercept) temp

## 7.5837 -0.4166

##

## Degrees of Freedom: 22 Total (i.e. Null); 21 Residual

## Null Deviance: 28.27

## Residual Deviance: 20.33 AIC: 24.33

# Plot data

plot(challenger$temp, challenger$fail.field, xlim = c(-1, 30),

xlab = "Temperature", ylab = "Incident probability")

# Draw the fitted logistic curve

x <- seq(-1, 30, l = 200)

eta <- nasa$coefficients[1] + nasa$coefficients[2] * x

y <- 1 / (1 + exp(-eta))

lines(x, y, col = 2, lwd = 2)

# The Challenger

points(-0.6, 1, pch = 16)

text(-0.6, 1, labels = "Challenger", pos = 4)

At the sight of this curve and the summary it seems that the temperature was affecting the probability of an O-ring incident (Q1). Let’s quantify this statement and answer Q2 by looking to the coefficients of the model:

# Exponentiated coefficients ("odds ratios")

exp(coef(nasa))

## (Intercept) temp

## 1965.9743592 0.6592539The exponentials of the estimated coefficients are:

- \(e^{\hat\beta_0}=1965.974.\) This means that, when the temperature is zero, the fitted odds is \(1965.974,\) so the (estimated) probability of having an incident (\(Y=1\)) is \(1965.974\) times larger than the probability of not having an incident (\(Y=0\)). Or, in other words, the probability of having an incident at temperature zero is \(\frac{1965.974}{1965.974+1}=0.999.\)

- \(e^{\hat\beta_1}=0.659.\) This means that each Celsius degree increment on the temperature multiplies the fitted odds by a factor of \(0.659\approx\frac{2}{3},\) hence reducing it.

However, for the moment we cannot say whether these findings are significant or are just an artifact of the randomness of the data, since we do not have information on the variability of the estimates of \(\boldsymbol{\beta}.\) We will need inference for that.

5.2.1.2 Estimation by maximum likelihood

The estimation of \(\boldsymbol{\beta}\) from a sample \(\{(\mathbf{x}_i,Y_i)\}_{i=1}^n\;\)159 is done by Maximum Likelihood Estimation (MLE). As it can be seen in Appendix B, in the linear model, under the assumptions mentioned in Section 2.3, MLE is equivalent to least squares estimation. In the logistic model, we assume that160

\[\begin{align*} Y_i\mid (X_{1}=x_{i1},\ldots,X_{p}=x_{ip})\sim \mathrm{Ber}(\mathrm{logistic}(\eta_i)),\quad i=1,\ldots,n, \end{align*}\]

where \(\eta_i:=\beta_0+\beta_1x_{i1}+\cdots+\beta_px_{ip}.\) Denoting \(p_i(\boldsymbol{\beta}):=\mathrm{logistic}(\eta_i),\) the log-likelihood of \(\boldsymbol{\beta}\) is

\[\begin{align} \ell(\boldsymbol{\beta})&=\log\left(\prod_{i=1}^np_i(\boldsymbol{\beta})^{Y_i}(1-p_i(\boldsymbol{\beta}))^{1-Y_i}\right)\nonumber\\ &=\sum_{i=1}^n\left[Y_i\log(p_i(\boldsymbol{\beta}))+(1-Y_i)\log(1-p_i(\boldsymbol{\beta}))\right].\tag{5.9} \end{align}\]

The ML estimate of \(\boldsymbol{\beta}\) is

\[\begin{align*} \hat{\boldsymbol{\beta}}:=\arg\max_{\boldsymbol{\beta}\in\mathbb{R}^{p+1}}\ell(\boldsymbol{\beta}). \end{align*}\]

Unfortunately, due to the nonlinearity of (5.9), there is no explicit expression for \(\hat{\boldsymbol{\beta}}\) and it has to be obtained numerically by means of an iterative procedure. We will see that with more detail in the next section. Just be aware that this iterative procedure may fail to converge in low sample size situations with perfect classification, where the likelihood might be numerically unstable.

Figure 5.5: The logistic regression fit and its dependence on \(\beta_0\) (horizontal displacement) and \(\beta_1\) (steepness of the curve). Recall the effect of the sign of \(\beta_1\) in the curve: if positive, the logistic curve has an ‘s’ form; if negative, the form is a reflected ‘s’. Application available here.

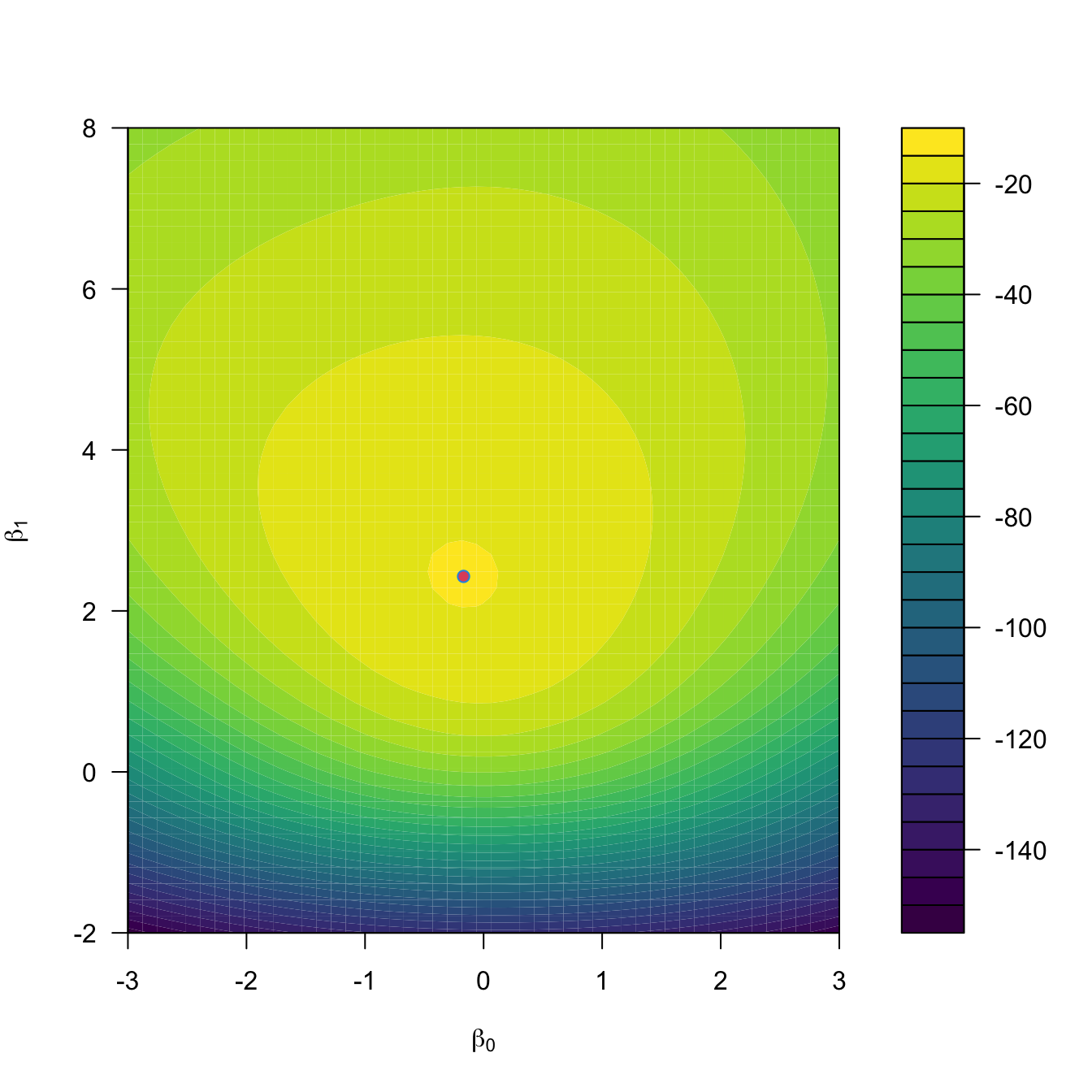

Figure 5.5 shows how the log-likelihood changes with respect to the values for \((\beta_0,\beta_1)\) in three data patterns. The data of the illustration has been generated with the next chunk of code.

# Data

set.seed(987204452)

x <- rnorm(50, sd = 1.5)

y1 <- -0.5 + 3 * x

y2 <- 0.5 - 2 * x

y3 <- -2 + 5 * x

y1 <- rbinom(50, size = 1, prob = 1 / (1 + exp(-y1)))

y2 <- rbinom(50, size = 1, prob = 1 / (1 + exp(-y2)))

y3 <- rbinom(50, size = 1, prob = 1 / (1 + exp(-y3)))

# Data

data_mle <- data.frame(x = x, y1 = y1, y2 = y2, y3 = y3)For fitting a logistic model we employ glm, which has the syntax glm(formula = response ~ predictor, family = "binomial", data = data), where response is a binary variable. Note that family = "binomial" is referring to the fact that the response is a binomial variable (since it is a Bernoulli). Let’s check that indeed the coefficients given by glm are the ones that maximize the likelihood given in the animation of Figure 5.5. We do so for y1 ~ x.

# Call glm

mod <- glm(y1 ~ x, family = "binomial", data = data_mle)

mod$coefficients

## (Intercept) x

## -0.9464403 2.8566464

# -loglik(beta)

minus_log_lik <- function(beta) {

p <- 1 / (1 + exp(-(beta[1] + beta[2] * x)))

-sum(y1 * log(p) + (1 - y1) * log(1 - p))

}

# Optimization using as starting values beta = c(0, 0)

opt <- optim(par = c(0, 0), fn = minus_log_lik)

opt

## $par

## [1] -0.9464609 2.8558362

##

## $value

## [1] 11.5585

##

## $counts

## function gradient

## 63 NA

##

## $convergence

## [1] 0

##

## $message

## NULL

# Visualization of the negative log-likelihood surface

beta0 <- seq(-4, 2, l = 200)

beta1 <- seq(0, 8, l = 200)

L <- matrix(nrow = length(beta0), ncol = length(beta1))

for (i in seq_along(beta0)) {

for (j in seq_along(beta1)) {

L[i, j] <- minus_log_lik(c(beta0[i], beta1[j]))

}

}

# Log-spaced levels concentrate the contour density around the minimum of L

n_lev <- 30

lev_breaks <- 10^seq(log10(min(L)), log10(max(L)), length.out = n_lev + 1)

lev_lines <- lev_breaks[-c(1, length(lev_breaks))]

image(beta0, beta1, L, breaks = lev_breaks,

col = viridis::viridis(n_lev, direction = -1),

xlab = expression(beta[0]), ylab = expression(beta[1]), las = 1)

contour(beta0, beta1, L, levels = lev_lines, labels = round(lev_lines),

add = TRUE, labcex = 0.5, lwd = 0.4)

points(mod$coefficients[1], mod$coefficients[2], col = 2, pch = 16)

points(opt$par[1], opt$par[2], col = 4)

Figure 5.6: Negative log-likelihood surface \(-\ell(\beta_0,\beta_1)\) and its global minimum \((\hat\beta_0,\hat\beta_1),\) which corresponds to the maximum likelihood estimator.

Exercise 5.1 For the regressions y2 ~ x and y3 ~ x, do the following:

5.2.2 General case

The same idea we used in logistic regression, namely transforming the conditional expectation of \(Y\) into something that can be modeled by a linear model (that is, a quantity that lives in \(\mathbb{R}\)), can be generalized. This raises the family of generalized linear models, which extends the linear model to different kinds of response variables and provides a convenient parametric framework.

The first ingredient is a link function \(g,\) that is monotonic and differentiable, which is going to produce a transformed expectation161 to be modeled by a linear combination of the predictors:

\[\begin{align*} g\left(\mathbb{E}[Y \mid X_1=x_1,\ldots,X_p=x_p]\right)=\eta \end{align*}\]

or, equivalently,

\[\begin{align*} \mathbb{E}[Y \mid X_1=x_1,\ldots,X_p=x_p]&=g^{-1}(\eta), \end{align*}\]

where

\[\begin{align*} \eta:=\beta_0+\beta_1x_1+\cdots+\beta_px_p \end{align*}\]

is the linear predictor.

The second ingredient of generalized linear models is a distribution for \(Y \mid (X_1,\ldots,X_p),\) just as the linear model assumes normality or the logistic model assumes a Bernoulli random variable. Thus, we have two linked generalizations with respect to the usual linear model:

- The conditional mean may be modeled by a transformation \(g^{-1}\) of the linear predictor \(\eta.\)

- The distribution of \(Y \mid (X_1,\ldots,X_p)\) may be different from the normal.

Generalized linear models are intimately related with the exponential family,162 163 which is the family of distributions with pdf expressible as

\[\begin{align} f(y;\theta,\phi)=\exp\left\{\frac{y\theta-b(\theta)}{a(\phi)}+c(y,\phi)\right\},\tag{5.10} \end{align}\]

where \(a(\cdot),\) \(b(\cdot),\) and \(c(\cdot,\cdot)\) are specific functions. If \(Y\) has the pdf (5.10), then we write \(Y\sim\mathrm{E}(\theta,\phi,a,b,c).\) If the scale parameter \(\phi\) is known, this is an exponential family with canonical parameter \(\theta\) (if \(\phi\) is unknown, then it may or may not be a two-parameter exponential family).

Distributions from the exponential family have some nice properties. Importantly, if \(Y\sim\mathrm{E}(\theta,\phi,a,b,c),\) then

\[\begin{align} \mu:=\mathbb{E}[Y]=b'(\theta),\quad \sigma^2:=\mathbb{V}\mathrm{ar}[Y]=b''(\theta)a(\phi).\tag{5.11} \end{align}\]

The canonical link function is the function \(g\) that transforms \(\mu=b'(\theta)\) into the canonical parameter \(\theta\). For \(\mathrm{E}(\theta,\phi,a,b,c),\) this happens if

\[\begin{align} \theta=g(\mu) \tag{5.12} \end{align}\]

or, more explicitly due to (5.11), if

\[\begin{align} g(\mu)=(b')^{-1}(\mu). \tag{5.13} \end{align}\]

In the case of canonical link function, the one-line summary of the generalized linear model is (independence is implicit)

\[\begin{align} Y \mid (X_1=x_1,\ldots,X_p=x_p)\sim\mathrm{E}(\eta,\phi,a,b,c).\tag{5.14} \end{align}\]

Expression (5.14) gives insight on what a generalized linear model does:

- Select a member of the exponential family in (5.10) for modeling \(Y.\)

- The canonical link function \(g\) is \(g(\mu)=(b')^{-1}(\mu).\) In this case, \(\theta=g(\mu).\)

- The generalized linear model associated with the member of the exponential family and \(g\) models the conditional \(\theta,\) given \(X_1,\ldots,X_n,\) by means of the linear predictor \(\eta.\) This is equivalent to modeling the conditional \(\mu\) by means of \(g^{-1}(\eta).\)

The linear model arises as a particular case of (5.14) with

\[\begin{align*} a(\phi)=\phi,\quad b(\theta)=\frac{\theta^2}{2},\quad c(y,\phi)=-\frac{1}{2}\left\{\frac{y^2}{\phi}+\log(2\pi\phi)\right\}, \end{align*}\]

and scale parameter \(\phi=\sigma^2.\) In this case, \(\mu=\theta\) and the canonical link function \(g\) is the identity.

Exercise 5.2 Show that the normal, Bernoulli, exponential, and Poisson distributions are members of the exponential family. For that, express their pdfs in terms of (5.10) and identify who is \(\theta\) and \(\phi.\)

Exercise 5.3 Show that the binomial and gamma (which includes exponential and chi-squared) distributions are members of the exponential family. For that, express their pdfs in terms of (5.10) and identify who is \(\theta\) and \(\phi.\)

The following table lists some useful generalized linear models. Recall that the linear and logistic models of Sections 2.2.3 and 5.2.1 are obtained from the first and second rows, respectively.

| Support of \(Y\) | Generating distribution | Link \(g(\mu)\) | Expectation \(g^{-1}(\eta)\) | Scale \(\phi\) | Distribution of \(Y \mid \mathbf{X}=\mathbf{x}\) |

|---|---|---|---|---|---|

| \(\mathbb{R}\) | \(\mathcal{N}(\mu,\sigma^2)\) | \(\mu\) | \(\eta\) | \(\sigma^2\) | \(\mathcal{N}(\eta,\sigma^2)\) |

| \(\{0,1\}\) | \(\mathrm{Ber}(p)\) | \(\mathrm{logit}(\mu)\) | \(\mathrm{logistic}(\eta)\) | \(1\) | \(\mathrm{Ber}\left(\mathrm{logistic}(\eta)\right)\) |

| \(\{0,\ldots,N\}\) | \(\mathrm{B}(N,p)\) | \(\log\left(\frac{\mu}{N-\mu}\right)\) | \(N\cdot\mathrm{logistic}(\eta)\) | \(1\) | \(\mathrm{B}(N,\mathrm{logistic}(\eta))\) |

| \(\{0,1,\ldots\}\) | \(\mathrm{Pois}(\lambda)\) | \(\log(\mu)\) | \(e^\eta\) | \(1\) | \(\mathrm{Pois}(e^{\eta})\) |

| \((0,\infty)\) | \(\Gamma(a,\nu)\;\)164 | \(-\frac{1}{\mu}\) | \(-\frac{1}{\eta}\) | \(\frac{1}{\nu}\) | \(\Gamma(-\eta \nu,\nu)\;\)165 |

Exercise 5.4 Obtain the canonical link function for the exponential distribution \(\mathrm{Exp}(\lambda).\) What is the scale parameter? What is the distribution of \(Y \mid (X_1=x_1,\ldots,X_p=x_p)\) in such model?

5.2.2.1 Poisson regression

Poisson regression is usually employed for modeling count data that arises from the recording of the frequencies of a certain phenomenon. It considers that

\[\begin{align*} Y \mid (X_1=x_1,\ldots,X_p=x_p)\sim\mathrm{Pois}(e^{\eta}), \end{align*}\]

that is,

\[\begin{align} \mathbb{E}[Y \mid X_1=x_1,\ldots,X_p=x_p]&=\lambda(Y \mid X_1=x_1,\ldots,X_p=x_p)\nonumber\\ &=e^{\beta_0+\beta_1x_1+\cdots+\beta_px_p}.\tag{5.15} \end{align}\]

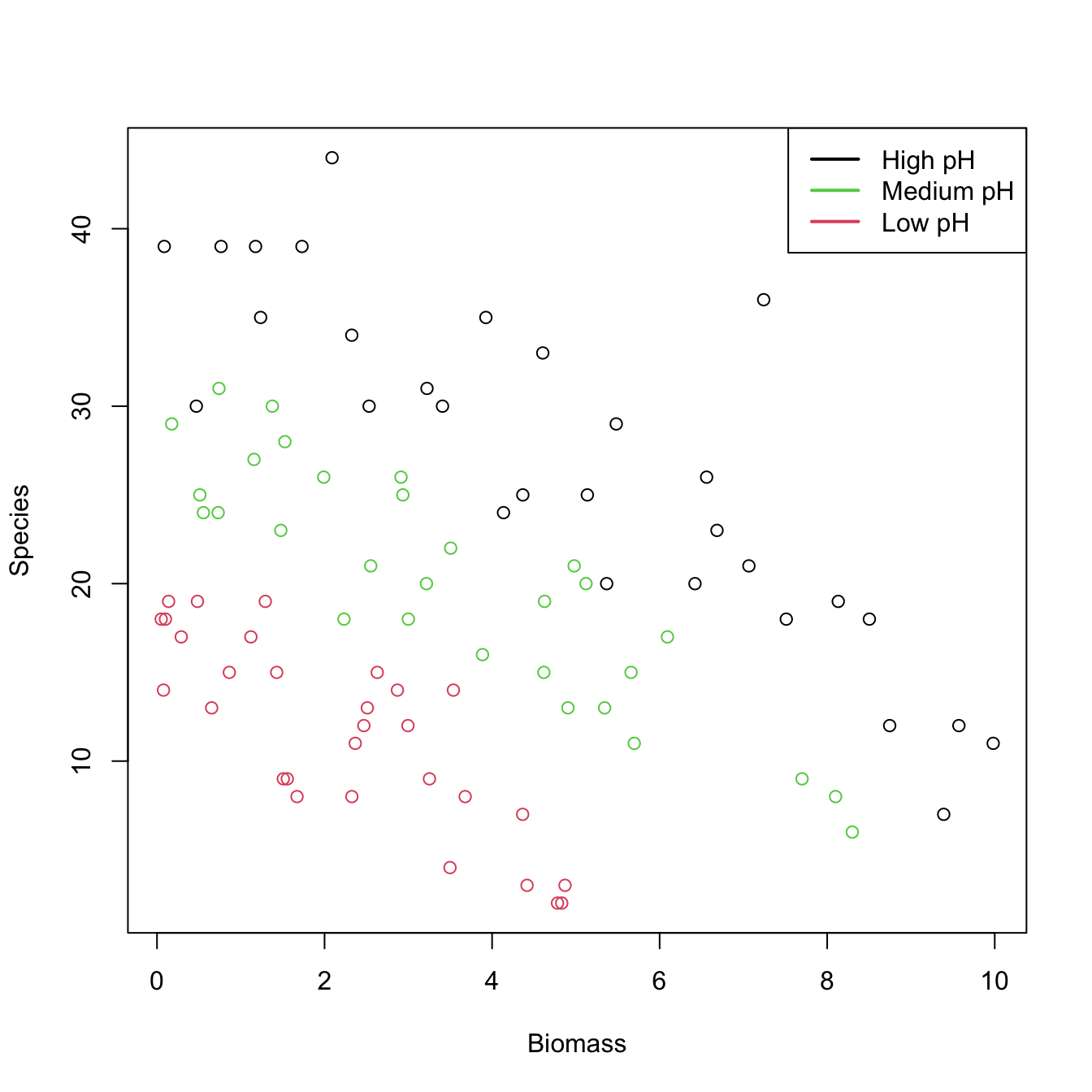

Let’s see how to apply a Poisson regression. For that aim we consider the species (download) dataset. The goal is to analyze whether the Biomass and the pH (a factor) of the terrain are influential on the number of Species. Incidentally, it will serve to illustrate that the use of factors within glm is completely analogous to what we did with lm.

# Read data

species <- read.table("species.txt", header = TRUE)

species$pH <- as.factor(species$pH)

# Plot data

plot(Species ~ Biomass, data = species, col = as.numeric(pH))

legend("topright", legend = c("High pH", "Medium pH", "Low pH"),

col = c(1, 3, 2), lwd = 2) # colors according to as.numeric(pH)

# Fit Poisson regression

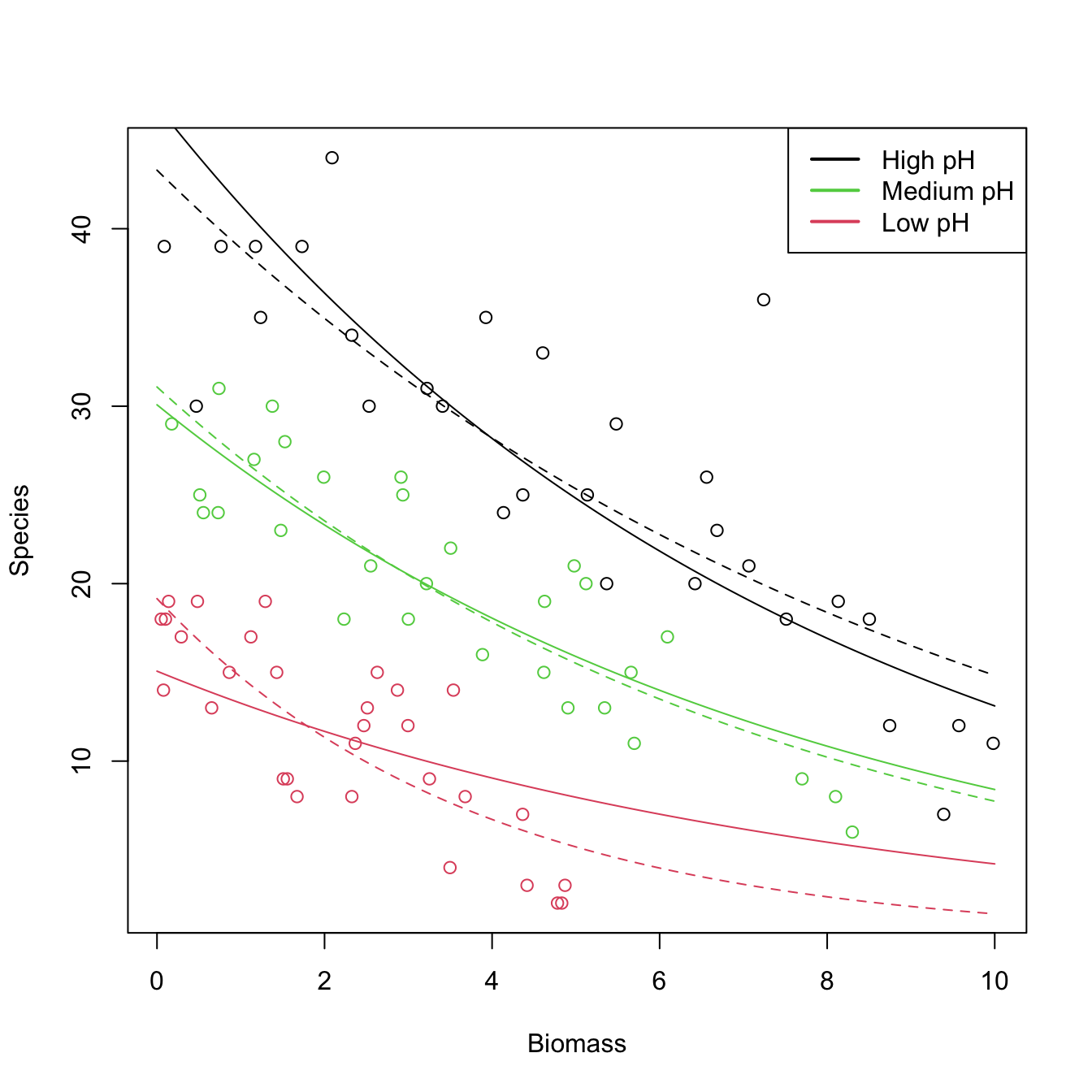

species1 <- glm(Species ~ ., data = species, family = poisson)

summary(species1)

##

## Call:

## glm(formula = Species ~ ., family = poisson, data = species)

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) 3.84894 0.05281 72.885 < 2e-16 ***

## pHlow -1.13639 0.06720 -16.910 < 2e-16 ***

## pHmed -0.44516 0.05486 -8.114 4.88e-16 ***

## Biomass -0.12756 0.01014 -12.579 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for poisson family taken to be 1)

##

## Null deviance: 452.346 on 89 degrees of freedom

## Residual deviance: 99.242 on 86 degrees of freedom

## AIC: 526.43

##

## Number of Fisher Scoring iterations: 4

# Took 4 iterations of the IRLS

# Interpretation of the coefficients:

exp(species1$coefficients)

## (Intercept) pHlow pHmed Biomass

## 46.9433686 0.3209744 0.6407222 0.8802418

# - 46.9433 is the average number of species when Biomass = 0 and the pH is high

# - For each increment in one unit in Biomass, the number of species decreases

# by a factor of 0.88 (12% reduction)

# - If pH decreases to med (low), then the number of species decreases by a factor

# of 0.6407 (0.3209)

# With interactions

species2 <- glm(Species ~ Biomass * pH, data = species, family = poisson)

summary(species2)

##

## Call:

## glm(formula = Species ~ Biomass * pH, family = poisson, data = species)

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) 3.76812 0.06153 61.240 < 2e-16 ***

## Biomass -0.10713 0.01249 -8.577 < 2e-16 ***

## pHlow -0.81557 0.10284 -7.931 2.18e-15 ***

## pHmed -0.33146 0.09217 -3.596 0.000323 ***

## Biomass:pHlow -0.15503 0.04003 -3.873 0.000108 ***

## Biomass:pHmed -0.03189 0.02308 -1.382 0.166954

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for poisson family taken to be 1)

##

## Null deviance: 452.346 on 89 degrees of freedom

## Residual deviance: 83.201 on 84 degrees of freedom

## AIC: 514.39

##

## Number of Fisher Scoring iterations: 4

exp(species2$coefficients)

## (Intercept) Biomass pHlow pHmed Biomass:pHlow Biomass:pHmed

## 43.2987424 0.8984091 0.4423865 0.7178730 0.8563910 0.9686112

# - If pH decreases to med (low), then the effect of the biomass in the number

# of species decreases by a factor of 0.9686 (0.8564). The higher the pH, the

# stronger the effect of the Biomass in Species

# Draw fits

plot(Species ~ Biomass, data = species, col = as.numeric(pH))

legend("topright", legend = c("High pH", "Medium pH", "Low pH"),

col = c(1, 3, 2), lwd = 2) # colors according to as.numeric(pH)

# Without interactions

bio <- seq(0, 10, l = 100)

z <- species1$coefficients[1] + species1$coefficients[4] * bio

lines(bio, exp(z), col = 1)

lines(bio, exp(species1$coefficients[2] + z), col = 2)

lines(bio, exp(species1$coefficients[3] + z), col = 3)

# With interactions seems to provide a significant improvement

bio <- seq(0, 10, l = 100)

z <- species2$coefficients[1] + species2$coefficients[2] * bio

lines(bio, exp(z), col = 1, lty = 2)

lines(bio, exp(species2$coefficients[3] + species2$coefficients[5] * bio + z),

col = 2, lty = 2)

lines(bio, exp(species2$coefficients[4] + species2$coefficients[6] * bio + z),

col = 3, lty = 2)

Exercise 5.5 For the challenger dataset, do the following:

- Do a Poisson regression of the total number of incidents,

nfails.field + nfails.nozzle, ontemp. - Plot the data and the fitted Poisson regression curve.

- Predict the expected number of incidents at temperatures \(-0.6\) and \(11.67.\)

5.2.2.2 Binomial regression

Binomial regression is an extension of logistic regression that allows us to model discrete responses \(Y\) in \(\{0,1,\ldots,N\},\) where \(N\) is fixed. In its most vanilla version, it considers the model

\[\begin{align} Y \mid (X_1=x_1,\ldots,X_p=x_p)\sim\mathrm{B}(N,\mathrm{logistic}(\eta)),\tag{5.16} \end{align}\]

that is,

\[\begin{align} \mathbb{E}[Y \mid X_1=x_1,\ldots,X_p=x_p]=N\cdot\mathrm{logistic}(\eta).\tag{5.17} \end{align}\]

Comparing (5.17) with (5.4), it is clear that the logistic regression is a particular case with \(N=1.\) The interpretation of the coefficients is therefore clear from the interpretation of (5.4), given that \(\mathrm{logistic}(\eta)\) models the probability of success of each of the \(N\) experiments of the binomial \(\mathrm{B}(N,\mathrm{logistic}(\eta)).\)

The extra flexibility that binomial regression has offers interesting applications. First, we can use (5.16) as an approach to model proportions166 \(Y/N\in[0,1].\) In this case, (5.17) becomes167

\[\begin{align*} \mathbb{E}[Y/N \mid X_1=x_1,\ldots,X_p=x_p]=\mathrm{logistic}(\eta). \end{align*}\]

Second, we can let \(N\) be dependent on the predictors to accommodate group structures, perhaps the most common usage of binomial regression:

\[\begin{align} Y \mid \mathbf{X}=\mathbf{x}\sim\mathrm{B}(N_\mathbf{x},\mathrm{logistic}(\eta)),\tag{5.18} \end{align}\]

where the size of the binomial distribution, \(N_\mathbf{x},\) depends on the values of the predictors. For example, imagine that the predictors are two quantitative variables and two dummy variables encoding three categories. Then \(\mathbf{X}=(X_1,X_2, D_1,D_2)^\top\) and \(\mathbf{x}=(x_1,x_2, d_1,d_2)^\top.\) In this case, \(N_\mathbf{x}\) could for example take the form

\[\begin{align*} N_\mathbf{x}=\begin{cases} 30,&d_1=0,d_2=0,\\ 25,&d_1=1,d_2=0,\\ 50,&d_1=0,d_2=1, \end{cases} \end{align*}\]

that is, we have a different number of experiments on each category, and we want to model the number (or, equivalently, the proportion) of successes for each one, also taking into account the effects of other qualitative variables. This is a very common situation in practice, when one encounters the sample version of (5.18):

\[\begin{align} Y_i\mid \mathbf{X}_i=\mathbf{x}_i\sim\mathrm{B}(N_i,\mathrm{logistic}(\eta_i)),\quad i=1,\ldots,n.\tag{5.19} \end{align}\]

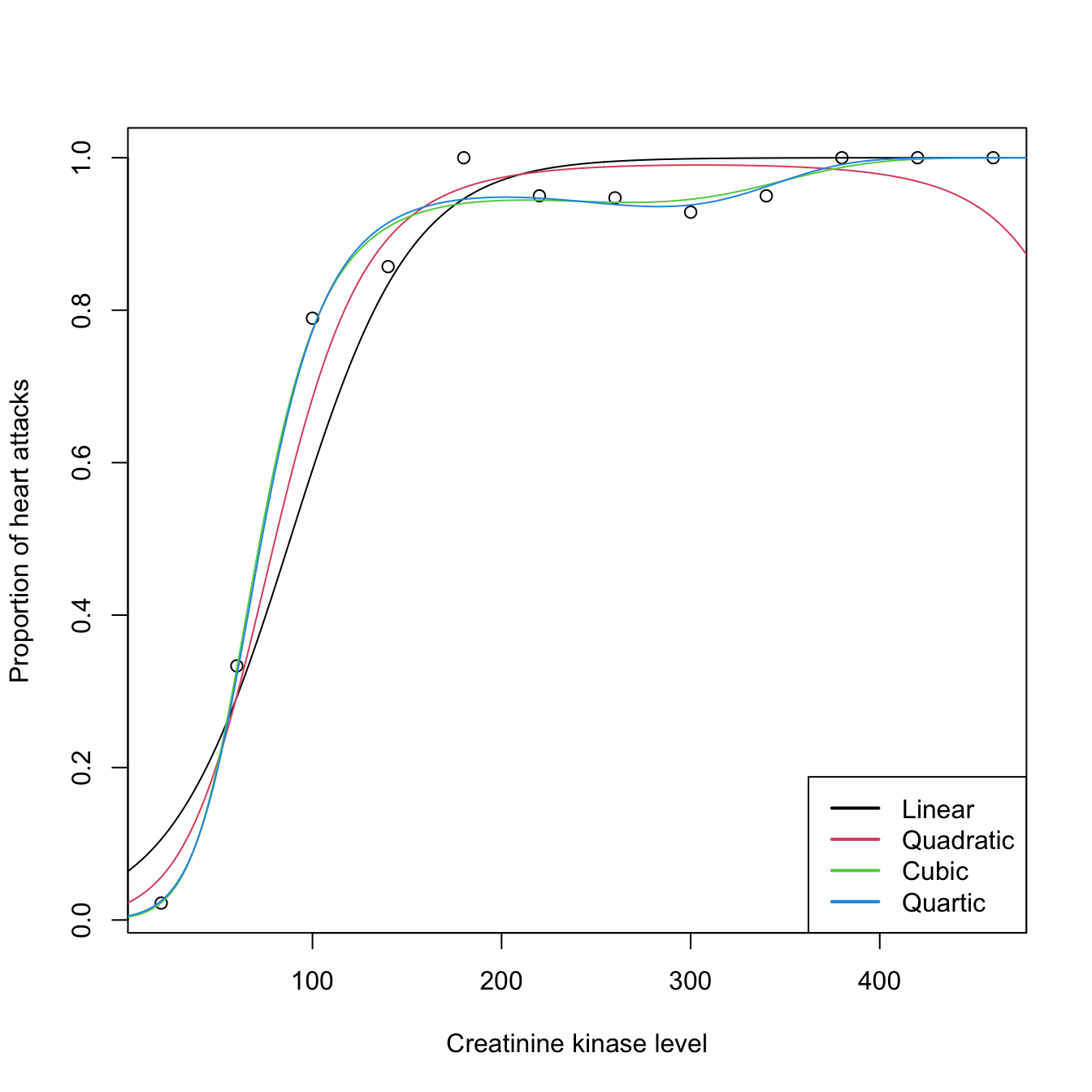

Let’s see an example of binomial regression that illustrates the particular usage of glm() in this case. The example is a data application from Wood (2006) featuring different binomial sizes. It employs the heart (download) dataset. The goal is to investigate whether the level of creatinine kinase level present in the blood, ck, is a good diagnostic for determining if a patient is likely to have a future heart attack. The number of patients that did not have a heart attack (ok) and that had a heart attack (ha) was established after ck was measured. In total, there are \(226\) patients that have been aggregated into \(n=12\;\)168 categories of different sizes that have been created according to the average level of ck. Table 5.2 shows the data.

# Read data

heart <- read.table("heart.txt", header = TRUE)

# Sizes for each observation (Ni's)

heart$Ni <- heart$ok + heart$ha

# Proportions of patients with heart attacks

heart$prop <- heart$ha / (heart$ha + heart$ok)| ck | ha | ok | Ni | prop |

|---|---|---|---|---|

| 20 | 2 | 88 | 90 | 0.022 |

| 60 | 13 | 26 | 39 | 0.333 |

| 100 | 30 | 8 | 38 | 0.789 |

| 140 | 30 | 5 | 35 | 0.857 |

| 180 | 21 | 0 | 21 | 1.000 |

| 220 | 19 | 1 | 20 | 0.950 |

| 260 | 18 | 1 | 19 | 0.947 |

| 300 | 13 | 1 | 14 | 0.929 |

| 340 | 19 | 1 | 20 | 0.950 |

| 380 | 15 | 0 | 15 | 1.000 |

| 420 | 7 | 0 | 7 | 1.000 |

| 460 | 8 | 0 | 8 | 1.000 |

# Plot of proportions versus ck: twelve observations, each requiring

# Ni patients to determine the proportion

plot(heart$ck, heart$prop, xlab = "Creatinine kinase level",

ylab = "Proportion of heart attacks")

# Fit binomial regression: recall the cbind() to pass the number of successes

# and failures

heart1 <- glm(cbind(ha, ok) ~ ck, family = binomial, data = heart)

summary(heart1)

##

## Call:

## glm(formula = cbind(ha, ok) ~ ck, family = binomial, data = heart)

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) -2.758358 0.336696 -8.192 2.56e-16 ***

## ck 0.031244 0.003619 8.633 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 271.712 on 11 degrees of freedom

## Residual deviance: 36.929 on 10 degrees of freedom

## AIC: 62.334

##

## Number of Fisher Scoring iterations: 6

# Alternatively: put proportions as responses, but then it is required to

# inform about the binomial size of each observation

heart1 <- glm(prop ~ ck, family = binomial, data = heart, weights = Ni)

summary(heart1)

##

## Call:

## glm(formula = prop ~ ck, family = binomial, data = heart, weights = Ni)

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) -2.758358 0.336696 -8.192 2.56e-16 ***

## ck 0.031244 0.003619 8.633 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 271.712 on 11 degrees of freedom

## Residual deviance: 36.929 on 10 degrees of freedom

## AIC: 62.334

##

## Number of Fisher Scoring iterations: 6

# Add fitted line

ck <- 0:500

newdata <- data.frame(ck = ck)

logistic <- function(eta) 1 / (1 + exp(-eta))

lines(ck, logistic(cbind(1, ck) %*% heart1$coefficients))

# It seems that a polynomial fit could better capture the "wiggly" pattern

# of the data

heart2 <- glm(prop ~ poly(ck, 2, raw = TRUE), family = binomial, data = heart,

weights = Ni)

heart3 <- glm(prop ~ poly(ck, 3, raw = TRUE), family = binomial, data = heart,

weights = Ni)

heart4 <- glm(prop ~ poly(ck, 4, raw = TRUE), family = binomial, data = heart,

weights = Ni)

# Best fit given by heart3

BIC(heart1, heart2, heart3, heart4)

## df BIC

## heart1 2 63.30371

## heart2 3 44.27018

## heart3 4 35.59736

## heart4 5 37.96360

summary(heart3)

##

## Call:

## glm(formula = prop ~ poly(ck, 3, raw = TRUE), family = binomial,

## data = heart, weights = Ni)

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) -5.786e+00 9.268e-01 -6.243 4.30e-10 ***

## poly(ck, 3, raw = TRUE)1 1.102e-01 2.139e-02 5.153 2.57e-07 ***

## poly(ck, 3, raw = TRUE)2 -4.649e-04 1.381e-04 -3.367 0.00076 ***

## poly(ck, 3, raw = TRUE)3 6.448e-07 2.544e-07 2.535 0.01125 *

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 271.7124 on 11 degrees of freedom

## Residual deviance: 4.2525 on 8 degrees of freedom

## AIC: 33.658

##

## Number of Fisher Scoring iterations: 6

# All fits together

lines(ck, logistic(cbind(1, poly(ck, 2, raw = TRUE)) %*% heart2$coefficients),

col = 2)

lines(ck, logistic(cbind(1, poly(ck, 3, raw = TRUE)) %*% heart3$coefficients),

col = 3)

lines(ck, logistic(cbind(1, poly(ck, 4, raw = TRUE)) %*% heart4$coefficients),

col = 4)

legend("bottomright", legend = c("Linear", "Quadratic", "Cubic", "Quartic"),

col = 1:4, lwd = 2)

5.2.2.3 Estimation by maximum likelihood

The estimation of \(\boldsymbol{\beta}\) by MLE can be done in a unified framework, for all generalized linear models, thanks to the exponential family (5.10). Given \(\{(\mathbf{x}_i,Y_i)\}_{i=1}^n,\)169 and employing a canonical link function (5.13), we have that

\[\begin{align*} Y_i\mid (X_1=x_{i1},\ldots,X_p=x_{ip})\sim\mathrm{E}(\theta_i,\phi,a,b,c),\quad i=1,\ldots,n, \end{align*}\]

where

\[\begin{align*} \theta_i&:=\eta_i:=\beta_0+\beta_1x_{i1}+\cdots+\beta_px_{ip},\\ \mu_i&:=\mathbb{E}[Y_i \mid X_1=x_{i1},\ldots,X_p=x_{ip}]=g^{-1}(\eta_i). \end{align*}\]

Then, the log-likelihood is

\[\begin{align} \ell(\boldsymbol{\beta})=\sum_{i=1}^n\left(\frac{Y_i\theta_i-b(\theta_i)}{a(\phi)}+c(Y_i,\phi)\right).\tag{5.20} \end{align}\]

Differentiating with respect to \(\boldsymbol{\beta}\) gives

\[\begin{align*} \frac{\partial \ell(\boldsymbol{\beta})}{\partial \boldsymbol{\beta}}=\sum_{i=1}^{n}\frac{\left(Y_i-b'(\theta_i)\right)}{a(\phi)}\frac{\partial \theta_i}{\partial \boldsymbol{\beta}} \end{align*}\]

which, exploiting the properties of the exponential family, can be reduced to

\[\begin{align} \frac{\partial \ell(\boldsymbol{\beta})}{\partial\boldsymbol{\beta}}=\sum_{i=1}^{n}\frac{(Y_i-\mu_i)}{g'(\mu_i)V_i}\mathbf{x}_i,\tag{5.21} \end{align}\]

where \(\mathbf{x}_i\) now represents the \(i\)-th row of the design matrix \(\mathbb{X}\) and \(V_i:=\mathbb{V}\mathrm{ar}[Y_i]=a(\phi)b''(\theta_i).\) Solving explicitly the system of equations \(\frac{\partial \ell(\boldsymbol{\beta})}{\partial\boldsymbol{\beta}}=\mathbf{0}\) is not possible in general and a numerical procedure is required. Newton–Raphson is usually employed, which is based in obtaining \(\boldsymbol{\beta}_\mathrm{new}\) from the linear system170

\[\begin{align} \left.\frac{\partial^2 \ell(\boldsymbol{\beta})}{\partial \boldsymbol{\beta} \partial\boldsymbol{\beta}^\top}\right |_{\boldsymbol{\beta}=\boldsymbol{\beta}_\mathrm{old}}(\boldsymbol{\beta}_\mathrm{new} -\boldsymbol{\beta}_\mathrm{old})=-\left.\frac{\partial \ell(\boldsymbol{\beta})}{\partial \boldsymbol{\beta}} \right |_{\boldsymbol{\beta}=\boldsymbol{\beta}_\mathrm{old}}.\tag{5.22} \end{align}\]

A simplifying trick is to consider the expectation of \(\left.\frac{\partial^2 \ell(\boldsymbol{\beta})}{\partial \boldsymbol{\beta}\partial\boldsymbol{\beta}^\top}\right|_{\boldsymbol{\beta}=\boldsymbol{\beta}_\mathrm{old}}\) in (5.22), rather than its actual value. By doing so, we can arrive to a neat iterative algorithm called Iterative Reweighted Least Squares (IRLS). We use the following well-known property of the Fisher information matrix of the MLE theory:

\[\begin{align*} \mathbb{E}\left[\frac{\partial^2 \ell(\boldsymbol{\beta})}{\partial\boldsymbol{\beta} \partial\boldsymbol{\beta}^\top}\right]=-\mathbb{E}\left[\frac{\partial \ell(\boldsymbol{\beta})}{\partial \boldsymbol{\beta}}\left(\frac{\partial \ell(\boldsymbol{\beta})}{\partial \boldsymbol{\beta}}\right)^\top\right]. \end{align*}\]

Then, it can be seen that171

\[\begin{align} \mathbb{E}\left[\left.\frac{\partial^2 \ell(\boldsymbol{\beta})}{\partial\boldsymbol{\beta}\partial\boldsymbol{\beta}^\top}\right|_{\boldsymbol{\beta}=\boldsymbol{\beta}_\mathrm{old}} \right]= -\sum_{i=1}^{n} w_i \mathbf{x}_i \mathbf{x}_i^\top=-\mathbb{X}^\top \mathbf{W} \mathbb{X},\tag{5.23} \end{align}\]

where \(w_i:=\frac{1}{V_i(g'(\mu_i))^2}\) and \(\mathbf{W}:=\mathrm{diag}(w_1,\ldots,w_n).\) Using this notation and from (5.21),

\[\begin{align} \left. \frac{\partial \ell(\boldsymbol{\beta})}{\partial \boldsymbol{\beta}} \right|_{\boldsymbol{\beta}=\boldsymbol{\beta}_\mathrm{old}}= \mathbb{X}^\top\mathbf{W}(\mathbf{Y}-\boldsymbol{\mu}_\mathrm{old})\mathbf{g}^\top(\boldsymbol{\mu}_\mathrm{old}),\tag{5.24} \end{align}\]

Substituting (5.23) and (5.24) in (5.22), we have:

\[\begin{align} \boldsymbol{\beta}_\mathrm{new}&=\boldsymbol{\beta}_\mathrm{old}-\mathbb{E}\left [\left.\frac{\partial^2 \ell(\boldsymbol{\beta})}{\partial\boldsymbol{\beta} \boldsymbol{\beta}^\top}\right|_{\boldsymbol{\beta}=\boldsymbol{\beta}_\mathrm{old}} \right]^{-1}\left. \frac{\partial \ell(\boldsymbol{\beta})}{\partial \boldsymbol{\beta}} \right|_{\boldsymbol{\beta}=\boldsymbol{\beta}_\mathrm{old}}\nonumber\\ &=\boldsymbol{\beta}_\mathrm{old}+(\mathbb{X}^\top \mathbf{W} \mathbb{X})^{-1}\mathbb{X}^\top\mathbf{W}(\mathbf{Y}-\boldsymbol{\mu}_\mathrm{old})\mathbf{g}^\top(\boldsymbol{\mu}_\mathrm{old})\nonumber\\ &=(\mathbb{X}^\top \mathbf{W} \mathbb{X})^{-1}\mathbb{X}^\top\mathbf{W} \mathbf{z},\tag{5.25} \end{align}\]

where \(\mathbf{z}:=\mathbb{X}\boldsymbol{\beta}_\mathrm{old}+(\mathbf{Y}-\boldsymbol{\mu}_\mathrm{old})\mathbf{g}^\top(\boldsymbol{\mu}_\mathrm{old})\) is the working vector.

As a consequence, fitting a generalized linear model by IRLS amounts to performing a series of weighted linear models with changing weights and responses given by the working vector. IRLS can be summarized as:

- Set \(\boldsymbol{\beta}_\mathrm{old}\) with some initial estimation.

- Compute \(\boldsymbol{\mu}_\mathrm{old},\) \(\mathbf{W},\) and \(\mathbf{z}.\)

- Compute \(\boldsymbol{\beta}_\mathrm{new}\) using (5.25).

- Set \(\boldsymbol{\beta}_\mathrm{old}\) as \(\boldsymbol{\beta}_\mathrm{new}.\)

- Iterate Steps 2–4 until convergence, then set \(\hat{\boldsymbol{\beta}}=\boldsymbol{\beta}_\mathrm{new}.\)

5.3 Inference for model parameters

The assumptions on which a generalized linear model is constructed allow us to specify what is the asymptotic distribution of the random vector \(\hat{\boldsymbol{\beta}}\) through the theory of MLE. Again, the distribution is derived conditionally on the predictors’ sample \(\mathbf{X}_1,\ldots,\mathbf{X}_n.\) In other words, we assume that the randomness of \(Y\) comes only from \(Y \mid (X_1=x_1,\ldots,X_p=x_p)\) and not from the predictors.

For the ease of exposition, we will focus only on the logistic model rather than in the general case. The conceptual differences are not so big, but the simplification in terms of notation and the benefits on the intuition side are important.

There is an important difference between the inference results for the linear model and for logistic regression:

- In linear regression the inference is exact. This is due to the nice properties of the normal, least squares estimation, and linearity. As a consequence, the distributions of the coefficients are perfectly known assuming that the assumptions hold.

- In generalized linear models the inference is asymptotic. This means that the distributions of the coefficients are unknown except for large sample sizes \(n,\) for which we have approximations.172 The reason is the higher complexity of the model in terms of nonlinearity. This is the usual situation for the majority of regression models.173

5.3.1 Distributions of the fitted coefficients

The distribution of \(\hat{\boldsymbol{\beta}}\) is given by the asymptotic theory of MLE:

\[\begin{align} \hat{\boldsymbol{\beta}}\stackrel{a}{\sim}\mathcal{N}_{p+1}\left(\boldsymbol{\beta},\mathcal{I}(\boldsymbol{\beta})^{-1}\right) \tag{5.26} \end{align}\]

where \(\stackrel{a}{\sim} [\ldots]\) means “asymptotically distributed as \([\ldots]\) when \(n\to\infty\)” and

\[\begin{align*} \mathcal{I}(\boldsymbol{\beta}):=-\mathbb{E}\left[\frac{\partial^2 \ell(\boldsymbol{\beta})}{\partial \boldsymbol{\beta}\partial \boldsymbol{\beta}^\top}\right] \end{align*}\]

is the Fisher information matrix. The name comes from the fact that it measures the information available in the sample for estimating \(\boldsymbol{\beta}\). The “larger” the matrix (larger eigenvalues) is, the more precise the estimation of \(\boldsymbol{\beta}\) is, because that results in smaller variances in (5.26).

In the logistic model, the inverse of the Fisher information matrix is174

\[\begin{align} \mathcal{I}(\boldsymbol{\beta})^{-1}=(\mathbb{X}^\top\mathbf{V}\mathbb{X})^{-1}, \tag{5.27} \end{align}\]

where \(\mathbf{V}=\mathrm{diag}(V_1,\ldots,V_n)\) and \(V_i=\mathrm{logistic}(\eta_i)(1-\mathrm{logistic}(\eta_i)),\) with \(\eta_i=\beta_0+\beta_1x_{i1}+\cdots+\beta_px_{ip}.\) In the case of the multiple linear regression, \(\mathcal{I}(\boldsymbol{\beta})^{-1}=\sigma^2(\mathbb{X}^\top\mathbb{X})^{-1}\) (see (2.11)), so the presence of \(\mathbf{V}\) here is a consequence of the heteroscedasticity of the logistic model.

The interpretation of (5.26) and (5.27) gives some useful insights on what concepts affect the quality of the estimation:

Bias. The estimates are asymptotically unbiased.

-

Variance. It depends on:

- Sample size \(n\). Hidden inside \(\mathbb{X}^\top\mathbf{V}\mathbb{X}.\) As \(n\) grows, the precision of the estimators increases.

- Weighted predictor sparsity \((\mathbb{X}^\top\mathbf{V}\mathbb{X})^{-1}\). The more “disperse”175 the predictors are, the more precise \(\hat{\boldsymbol{\beta}}\) is. When \(p=1,\) \(\mathbb{X}^\top\mathbf{V}\mathbb{X}\) is a weighted version of \(s_x^2.\)

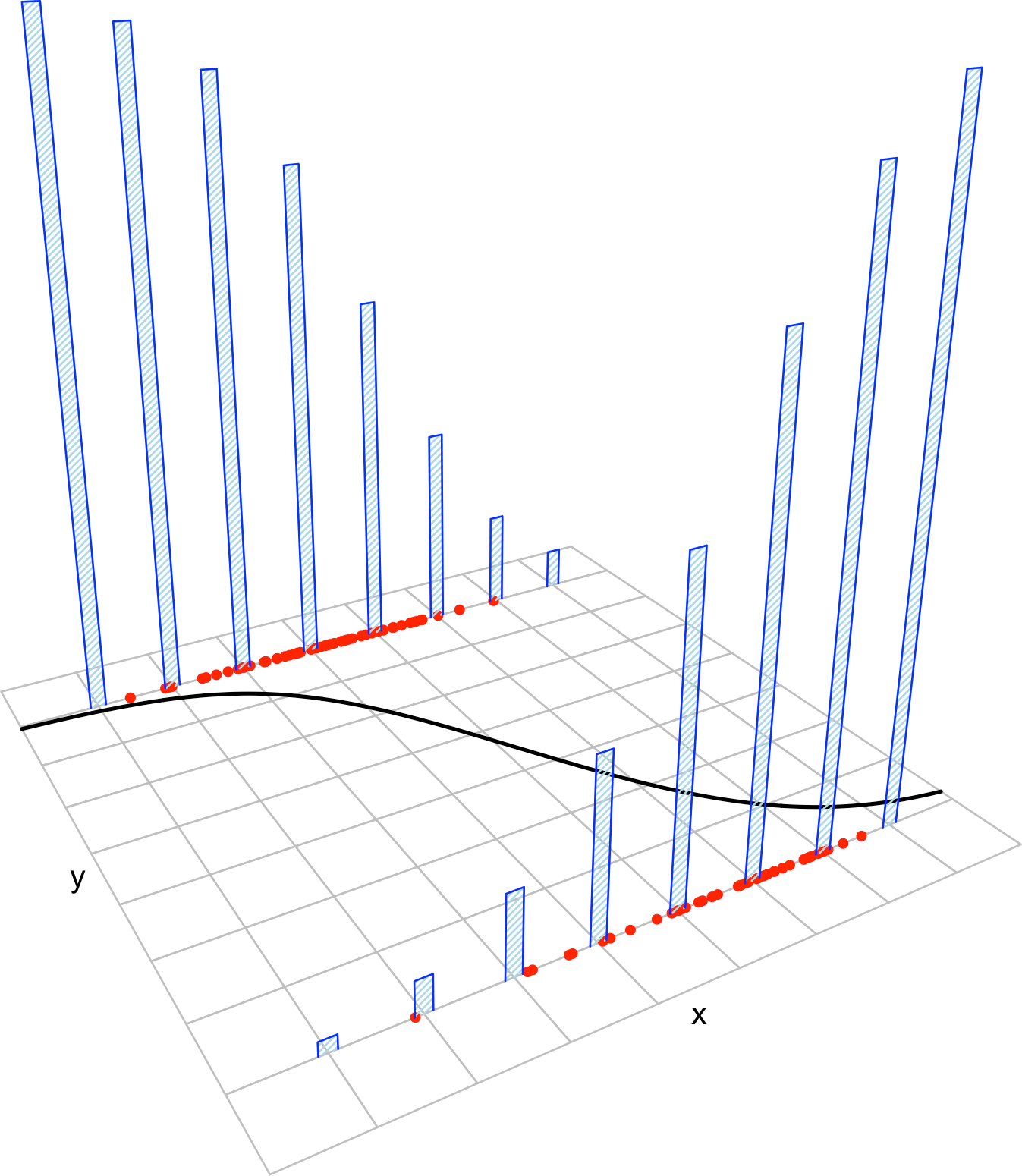

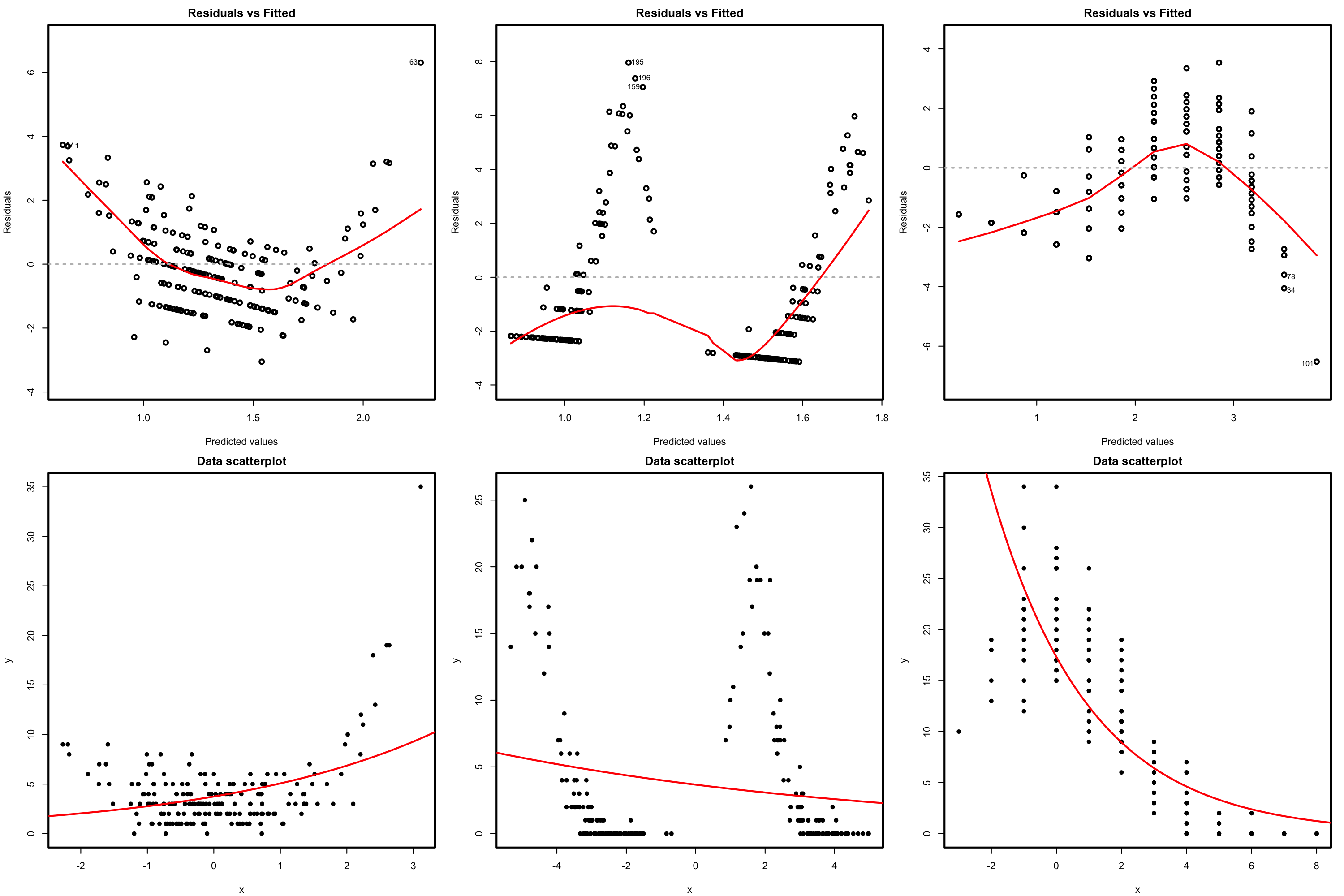

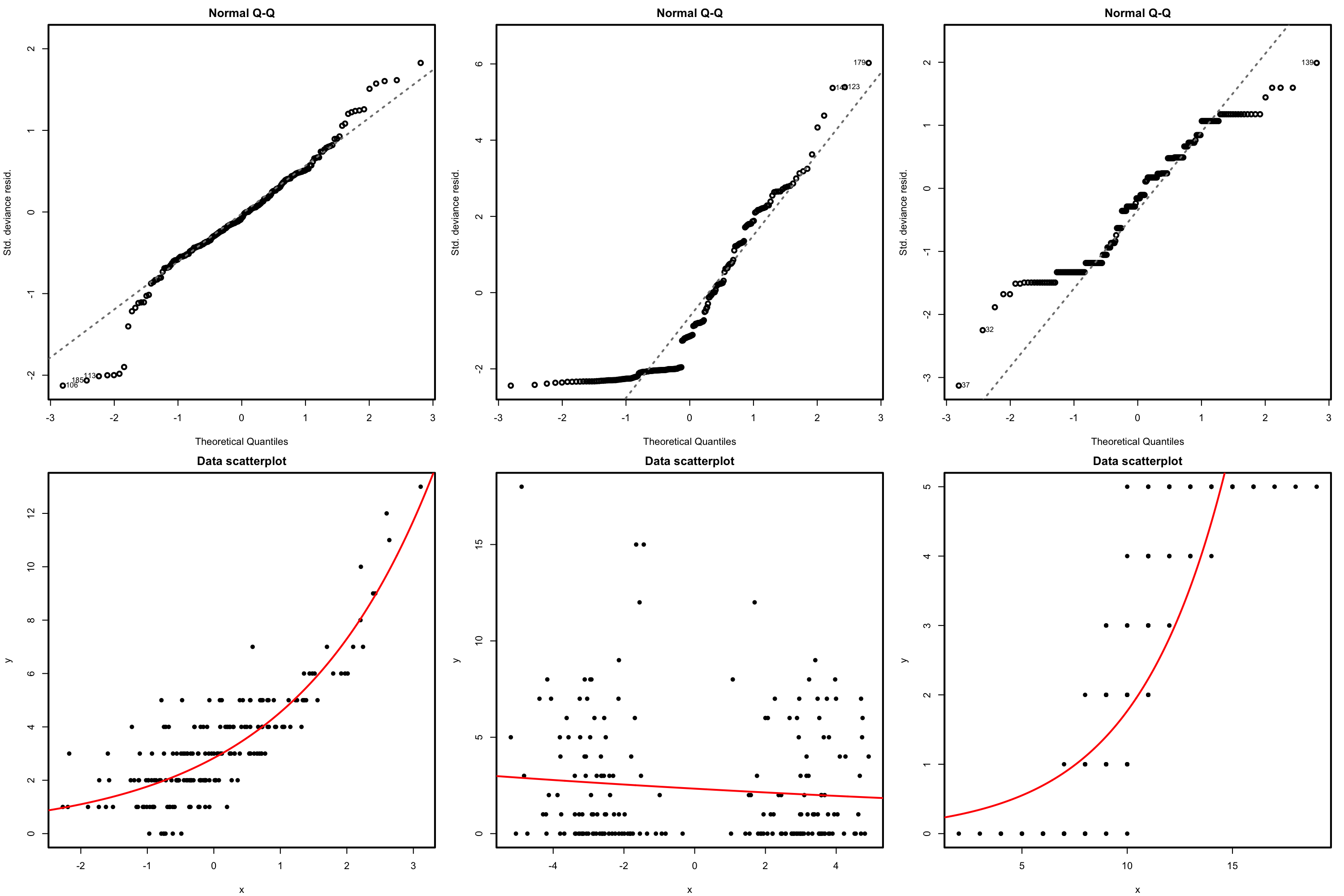

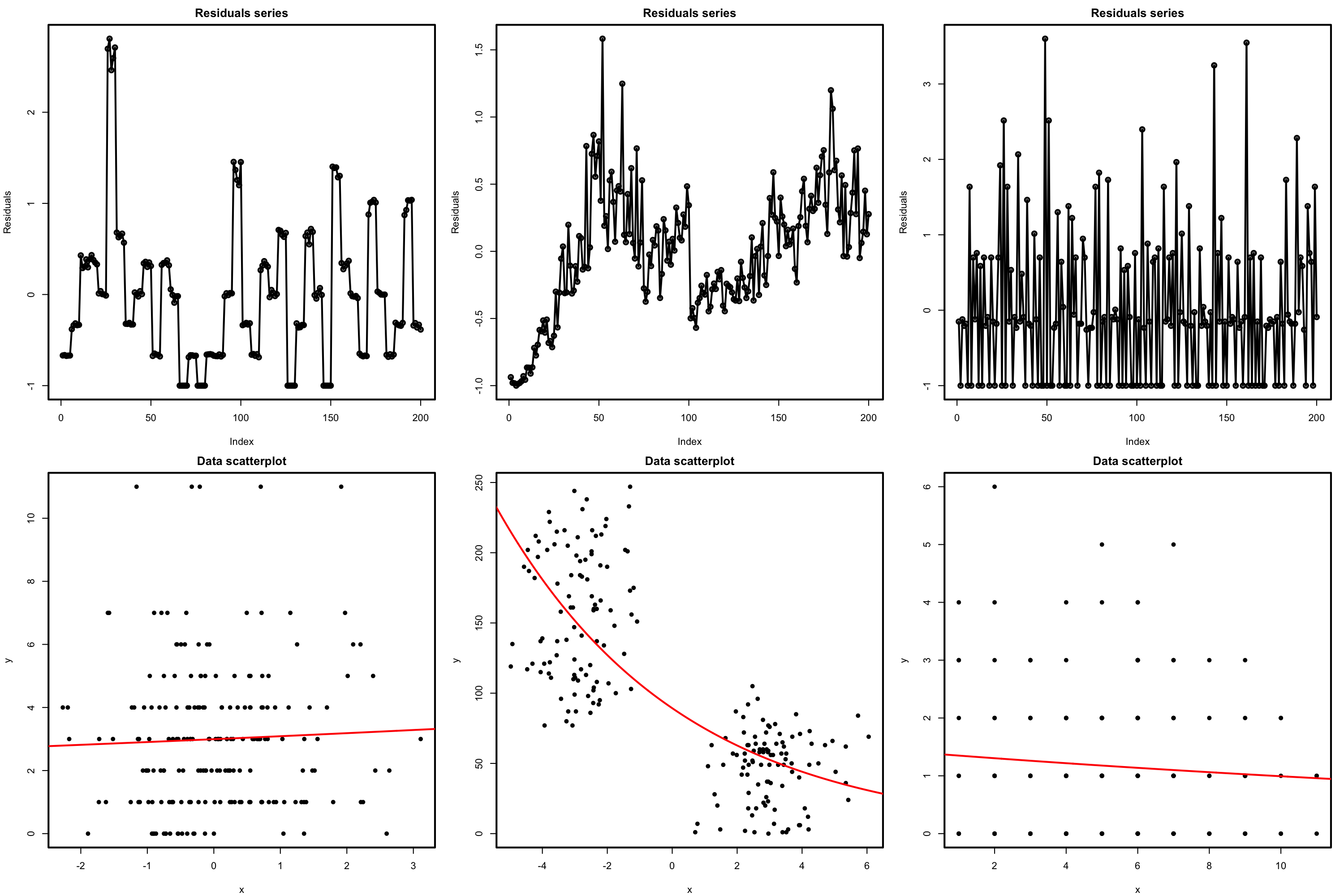

Figure 5.7 aids visualizing these insights.

Similar to linear regression, the problem with (5.26) and (5.27) is that \(\mathbf{V}\) is unknown in practice because it depends on \(\boldsymbol{\beta}.\) Plugging-in the estimate \(\hat{\boldsymbol{\beta}}\) into \(\boldsymbol{\beta}\) in \(\mathbf{V}\) gives the estimator \(\hat{\mathbf{V}}.\) Now we can use \(\hat{\mathbf{V}}\) to get

\[\begin{align} \frac{\hat\beta_j-\beta_j}{\hat{\mathrm{SE}}(\hat\beta_j)}\stackrel{a}{\sim} \mathcal{N}(0,1),\quad\hat{\mathrm{SE}}(\hat\beta_j)^2:=v_j\tag{5.28} \end{align}\]

where

\[\begin{align*} v_j\text{ is the }j\text{-th element of the diagonal of }(\mathbb{X}^\top\hat{\mathbf{V}}\mathbb{X})^{-1}. \end{align*}\]

The LHS of (5.28) is the Wald statistic for \(\beta_j,\) \(j=0,\ldots,p.\) They are employed for building marginal confidence intervals and hypothesis tests, in a completely analogous way to how the \(t\)-statistics in linear regression operate.

Figure 5.7: Illustration of the randomness of the fitted coefficients \((\hat\beta_0,\hat\beta_1)\) and the influence of \(n,\) \((\beta_0,\beta_1)\) and \(s_x^2.\) The predictors’ sample \(x_1,\ldots,x_n\) are fixed and new responses \(Y_1,\ldots,Y_n\) are generated each time from a simple logistic model \(Y \mid X=x\sim\mathrm{Ber}(\mathrm{logistic}(\beta_0+\beta_1x)).\) Application available here.

5.3.2 Confidence intervals for the coefficients

Thanks to (5.28), we can have the \(100(1-\alpha)\%\) CI for the coefficient \(\beta_j,\) \(j=0,\ldots,p\):

\[\begin{align} \left(\hat\beta_j\pm\hat{\mathrm{SE}}(\hat\beta_j)z_{\alpha/2}\right)\tag{5.29} \end{align}\]

where \(z_{\alpha/2}\) is the \(\alpha/2\)-upper quantile of the \(\mathcal{N}(0,1)\). In case we are interested in the CI for \(e^{\beta_j},\) we can just simply take the exponential on the above CI.176 So the \(100(1-\alpha)\%\) CI for \(e^{\beta_j},\) \(j=0,\ldots,p,\) is

\[\begin{align*} e^{\left(\hat\beta_j\pm\hat{\mathrm{SE}}(\hat\beta_j)z_{\alpha/2}\right)}. \end{align*}\]

Of course, this CI is not the same as \(\left(e^{\hat\beta_j}\pm e^{\hat{\mathrm{SE}}(\hat\beta_j)z_{\alpha/2}}\right),\) which is not a valid CI for \(e^{\beta_j}\)!

5.3.3 Testing on the coefficients

The distributions in (5.28) also allow us to conduct a formal hypothesis test on the coefficients \(\beta_j,\) \(j=0,\ldots,p.\) For example, the test for significance:

\[\begin{align*} H_0:\beta_j=0 \end{align*}\]

for \(j=0,\ldots,p.\) The test of \(H_0:\beta_j=0\) with \(1\leq j\leq p\) is especially interesting, since it allows us to answer whether the variable \(X_j\) has a significant effect on \(Y\). The statistic used for testing for significance is the Wald statistic

\[\begin{align*} \frac{\hat\beta_j-0}{\hat{\mathrm{SE}}(\hat\beta_j)}, \end{align*}\]

which is asymptotically distributed as a \(\mathcal{N}(0,1)\) under the (veracity of) the null hypothesis. \(H_0\) is tested against the two-sided alternative hypothesis \(H_1:\beta_j\neq 0.\)

Is the CI for \(\beta_j\) below (above) \(0\) at level \(\alpha\)?

- Yes \(\rightarrow\) reject \(H_0\) at level \(\alpha.\) Conclude \(X_j\) has a significant negative (positive) effect on \(Y\) at level \(\alpha.\)

- No \(\rightarrow\) the criterion is not conclusive.

The tests for significance are built-in in the summary function. However, due to discrepancies between summary and confint, a note of caution is required when applying the previous rule of thumb for rejecting \(H_0\) in terms of the CI.

The significances given in summary and the output of

MASS::confint are slightly incoherent and the

previous rule of thumb does not apply. The reason is

because MASS::confint is using a more sophisticated method

(profile likelihood) to estimate the standard error of \(\hat\beta_j,\) \(\hat{\mathrm{SE}}(\hat\beta_j),\) and not

the asymptotic distribution behind the Wald statistic.

By changing confint to R’s default

confint.default, the results of the latter will be

completely equivalent to the significances in summary, and

the rule of thumb will still be completely valid. For the contents of

this course we prefer confint.default due to its better

interpretability. This point is exemplified in the next section.

5.3.4 Case study application

Let’s compute the summary of the nasa model in order to address the significance of the coefficients.

At the sight of this curve and the summary of the model we can conclude that the temperature was increasing the probability of an O-ring incident (Q2). Indeed, the confidence intervals for the coefficients show a significant negative correlation at level \(\alpha=0.05\):

# Summary of the model

summary(nasa)

##

## Call:

## glm(formula = fail.field ~ temp, family = "binomial", data = challenger)

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) 7.5837 3.9146 1.937 0.0527 .

## temp -0.4166 0.1940 -2.147 0.0318 *

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 28.267 on 22 degrees of freedom

## Residual deviance: 20.335 on 21 degrees of freedom

## AIC: 24.335

##

## Number of Fisher Scoring iterations: 5

# Confidence intervals at 95%

confint.default(nasa)

## 2.5 % 97.5 %

## (Intercept) -0.08865488 15.25614140

## temp -0.79694430 -0.03634877

# Confidence intervals at other levels

confint.default(nasa, level = 0.90)

## 5 % 95 %

## (Intercept) 1.1448638 14.02262275

## temp -0.7358025 -0.09749059

# Confidence intervals for the factors affecting the odds

exp(confint.default(nasa))

## 2.5 % 97.5 %

## (Intercept) 0.9151614 4.223359e+06

## temp 0.4507041 9.643039e-01The coefficient for temp is significant at \(\alpha=0.05\) and the intercept is not (it is for \(\alpha=0.10\)). The \(95\%\) confidence interval for \(\beta_0\) is \((-0.0887, 15.2561)\) and for \(\beta_1\) is \((-0.7969, -0.0363).\) For \(e^{\beta_0}\) and \(e^{\beta_1},\) the CIs are \((0.9151, 4.2233\times10^6)\) and \((0.4507, 0.9643),\) respectively. Therefore, we can say with a \(95\%\) confidence that:

- When

temp=0, the probability offail.field=1 is not significantly larger than the probability offail.field=0 (using the CI for \(\beta_0\)).fail.field=1 is between \(0.9151\) and \(4.2233\times10^6\) more likely thanfail.field=0 (using the CI for \(e^{\beta_0}\)). -

temphas a significantly negative effect on the probability offail.field=1 (using the CI for \(\beta_1\)). Indeed, each unit increase intempproduces a reduction of the odds offail.fieldby a factor between \(0.4507\) and \(0.9643\) (using the CI for \(e^{\beta_1}\)).

This completes the answers to Q1 and Q2.

We conclude by illustrating the incoherence of summary and confint.

# Significances with asymptotic approximation for the standard errors

summary(nasa)

##

## Call:

## glm(formula = fail.field ~ temp, family = "binomial", data = challenger)

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) 7.5837 3.9146 1.937 0.0527 .

## temp -0.4166 0.1940 -2.147 0.0318 *

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 28.267 on 22 degrees of freedom

## Residual deviance: 20.335 on 21 degrees of freedom

## AIC: 24.335

##

## Number of Fisher Scoring iterations: 5

# CIs with asymptotic approximation -- coherent with summary

confint.default(nasa, level = 0.95)

## 2.5 % 97.5 %

## (Intercept) -0.08865488 15.25614140

## temp -0.79694430 -0.03634877

confint.default(nasa, level = 0.99)

## 0.5 % 99.5 %

## (Intercept) -2.4994971 17.66698362

## temp -0.9164425 0.08314945

# CIs with profile likelihood -- incoherent with summary

confint(nasa, level = 0.95) # intercept still significant

## 2.5 % 97.5 %

## (Intercept) 1.3364047 17.7834329

## temp -0.9237721 -0.1089953

confint(nasa, level = 0.99) # temp still significant

## 0.5 % 99.5 %

## (Intercept) -0.3095128 22.26687651

## temp -1.1479817 -0.029940115.4 Prediction

Prediction in generalized linear models focuses mainly on predicting the values of the conditional mean

\[\begin{align*} \mathbb{E}[Y \mid X_1=x_1,\ldots,X_p=x_p]=g^{-1}(\eta)=g^{-1}(\beta_0+\beta_1x_1+\cdots+\beta_px_p) \end{align*}\]

by means of \(\hat{\eta}:=\hat\beta_0+\hat\beta_1x_1+\cdots+\hat\beta_px_p\) and not on predicting the conditional response. The reason is that confidence intervals, the main difference between both kinds of prediction, depend heavily on the family we are considering for the response.177

For the logistic model, the prediction of the conditional response follows immediately from \(\mathrm{logistic}(\hat{\eta})\):

\[\begin{align*} \hat{Y}\mid (X_1=x_1,\ldots,X_p=x_p)=\left\{ \begin{array}{ll} 1,&\text{with probability }\mathrm{logistic}(\hat{\eta}),\\ 0,&\text{with probability }1-\mathrm{logistic}(\hat{\eta}).\end{array}\right. \end{align*}\]

As a consequence, we can predict \(Y\) as \(1\) if \(\mathrm{logistic}(\hat{\eta})>\frac{1}{2}\) and as \(0\) otherwise.

To make predictions and compute CIs in practice we use predict. There are two differences with respect to its use for lm:

- The argument

type.type = "link"returns \(\hat{\eta}\) (the log-odds in the logistic model),type = "response"returns \(g^{-1}(\hat{\eta})\) (the probabilities in the logistic model). Observe thattype = "response"has a different behavior thanpredictforlm, where it returned the predictions for the conditional response. - There is no

intervalargument for usingpredictwithglm. That means that the computation of CIs for prediction is not implemented and has to be done manually from the standard errors returned whense.fit = TRUE(see Section 5.4.1).

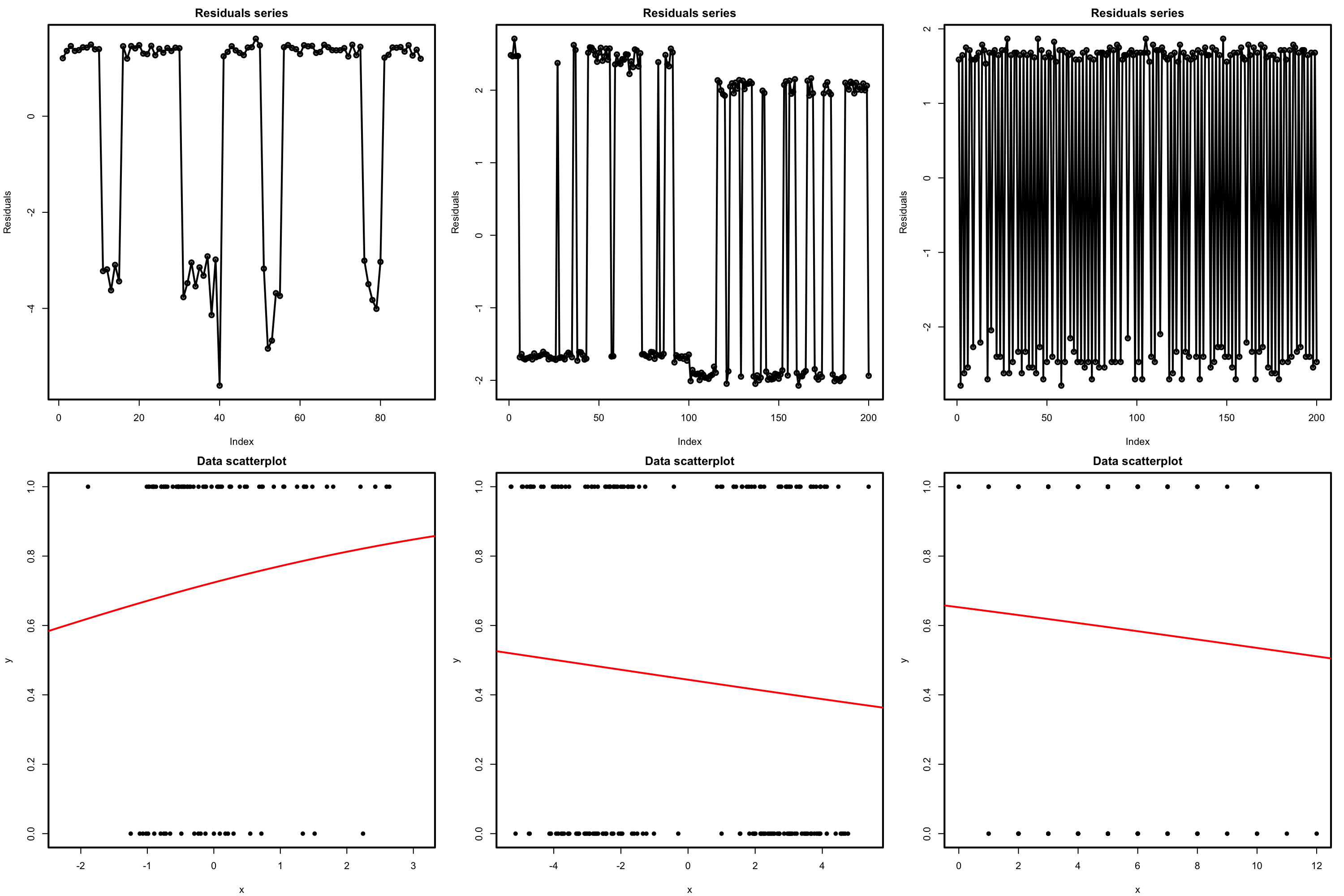

Figure 5.8 gives an interactive visualization of the CIs for the conditional probability in simple logistic regression. Their interpretation is very similar to the CIs for the conditional mean in the simple linear model, see Section 2.5 and Figure 2.15.

Figure 5.8: Illustration of the CIs for the conditional probability in the simple logistic regression. Application available here.

5.4.1 Case study application

Let’s compute what was the probability of having at least one incident with the O-rings in the launch day (answers Q3):

predict(nasa, newdata = data.frame(temp = -0.6), type = "response")

## 1

## 0.999604Recall that there is a serious problem of extrapolation in the prediction, which makes it less precise (or more variable). But this extrapolation, together with the evidence raised by the simple analysis we did, should have been strong arguments for postponing the launch.

Since it is a bit cumbersome to compute the CIs for the conditional response, we can code the function predict_cis_logistic to do it automatically.

# Function for computing the predictions and CIs for the conditional probability

predict_cis_logistic <- function(object, newdata, level = 0.95) {

# Compute predictions in the log-odds

pred <- predict(object = object, newdata = newdata, se.fit = TRUE)

# CI in the log-odds

za <- qnorm(p = (1 - level) / 2)

lwr <- pred$fit + za * pred$se.fit

upr <- pred$fit - za * pred$se.fit

# Transform to probabilities

fit <- 1 / (1 + exp(-pred$fit))

lwr <- 1 / (1 + exp(-lwr))

upr <- 1 / (1 + exp(-upr))

# Return a matrix with column names "fit", "lwr" and "upr"

result <- cbind(fit, lwr, upr)

colnames(result) <- c("fit", "lwr", "upr")

return(result)

}Let’s apply the function to our model:

# Data for which we want a prediction

newdata <- data.frame(temp = -0.6)

# Prediction of the conditional log-odds, the default

predict(nasa, newdata = newdata, type = "link")

## 1

## 7.833731

# Prediction of the conditional probability

predict(nasa, newdata = newdata, type = "response")

## 1

## 0.999604

# Simple call

predict_cis_logistic(nasa, newdata = newdata)

## fit lwr upr

## 1 0.999604 0.4838505 0.9999999

# The CI is large because there is no data around temp = -0.6 and

# that makes the prediction more variable (and also because we only

# have 23 observations)Finally, let’s answer Q4 and see what was the probability of having at least one incident with the O-rings if the launch was postponed until the temperature was above \(11.67\) degrees Celsius.

# Estimated probability for launching at 53 degrees Fahrenheit

predict_cis_logistic(nasa, newdata = data.frame(temp = 11.67))

## fit lwr upr

## 1 0.9382822 0.3504908 0.9976707The maximum predicted probability is \(0.94.\) Notice that this is the maximum in accordance to the suggestion of launching above \(11.67\) degrees Celsius. The probability of having at least one incident178 with the O-rings is still very high.

Exercise 5.6 For the challenger dataset, do the following:

- Regress

fail.nozzleontempandpres.nozzle. - Compute the predicted probability of

fail.nozzle=1fortemp\(=15\) andpres.nozzle\(=200.\) What is the predicted probability forfail.nozzle=0? - Compute the confidence interval for the two predicted probabilities at level \(95\%.\)

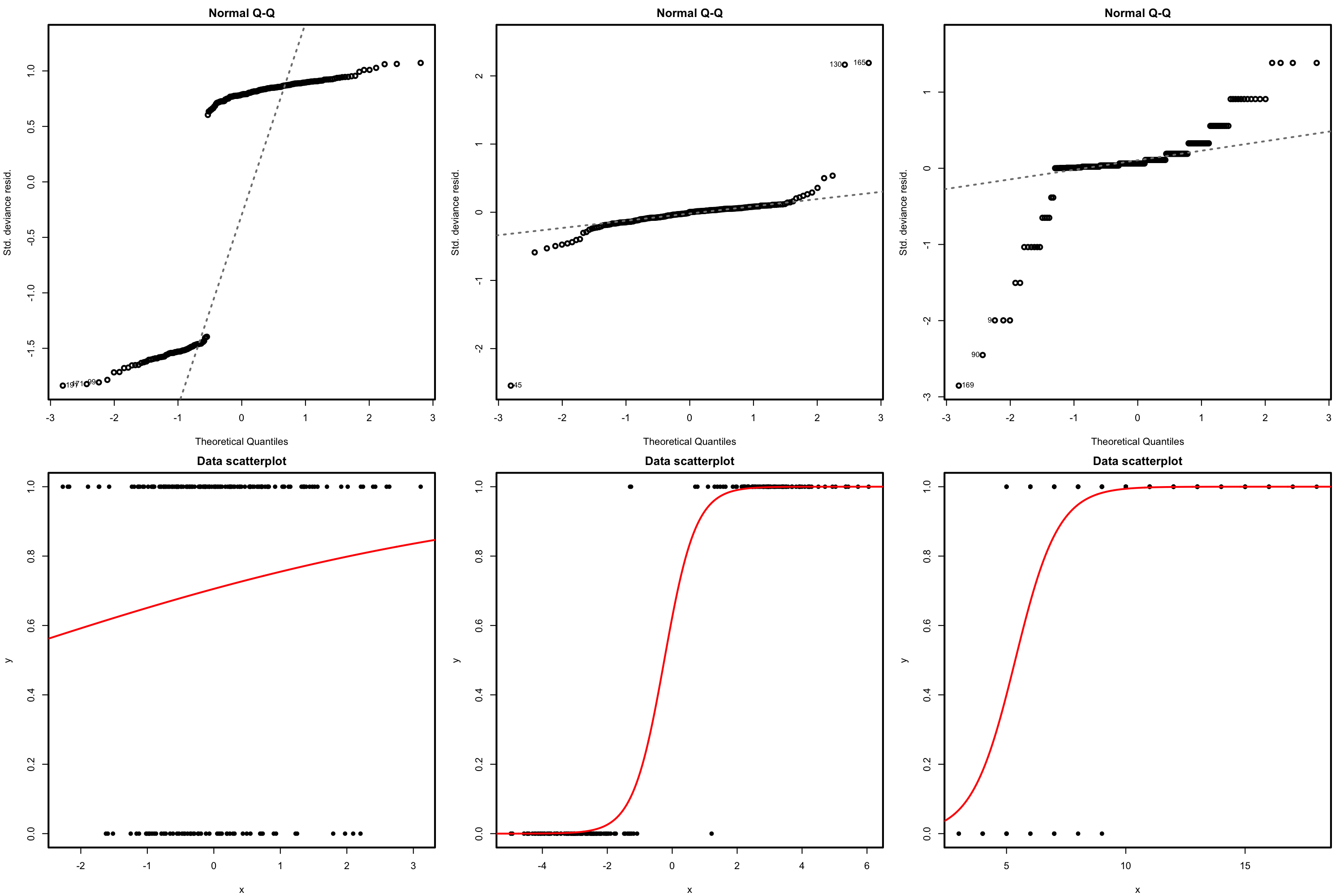

5.5 Deviance

The deviance is a key concept in generalized linear models. Intuitively, it measures the deviance of the fitted generalized linear model with respect to a perfect model for the sample \(\{(\mathbf{x}_i,Y_i)\}_{i=1}^n.\) This perfect model, known as the saturated model, is the model that perfectly fits the data at hand, in the sense that the fitted responses (\(\hat Y_i\)) equal the observed responses (\(Y_i\)).179 For example, in logistic regression this would be the model such that

\[\begin{align*} \hat{\mathbb{P}}[Y=1 \mid X_1=X_{i1},\ldots,X_p=X_{ip}]=Y_i,\quad i=1,\ldots,n. \end{align*}\]

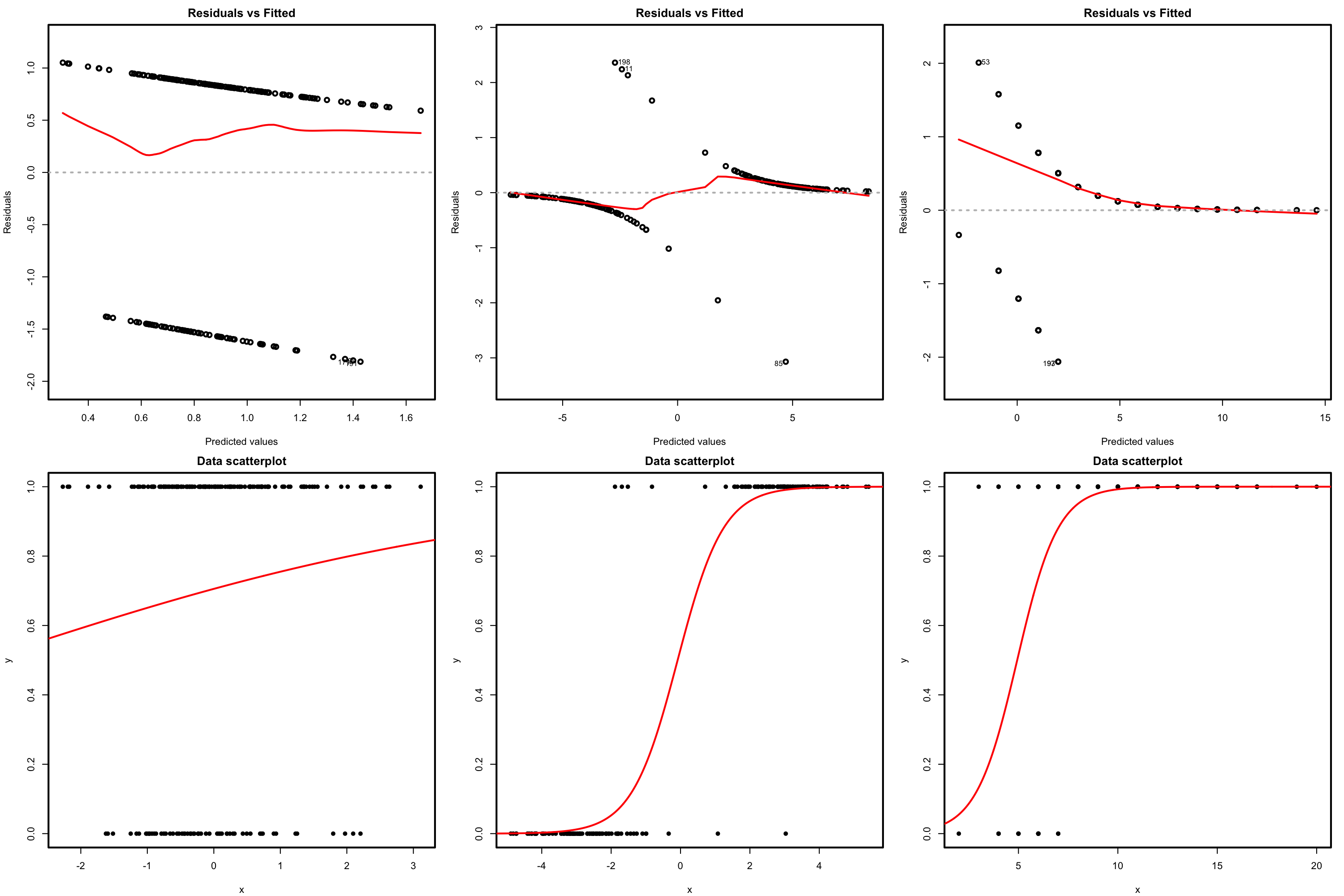

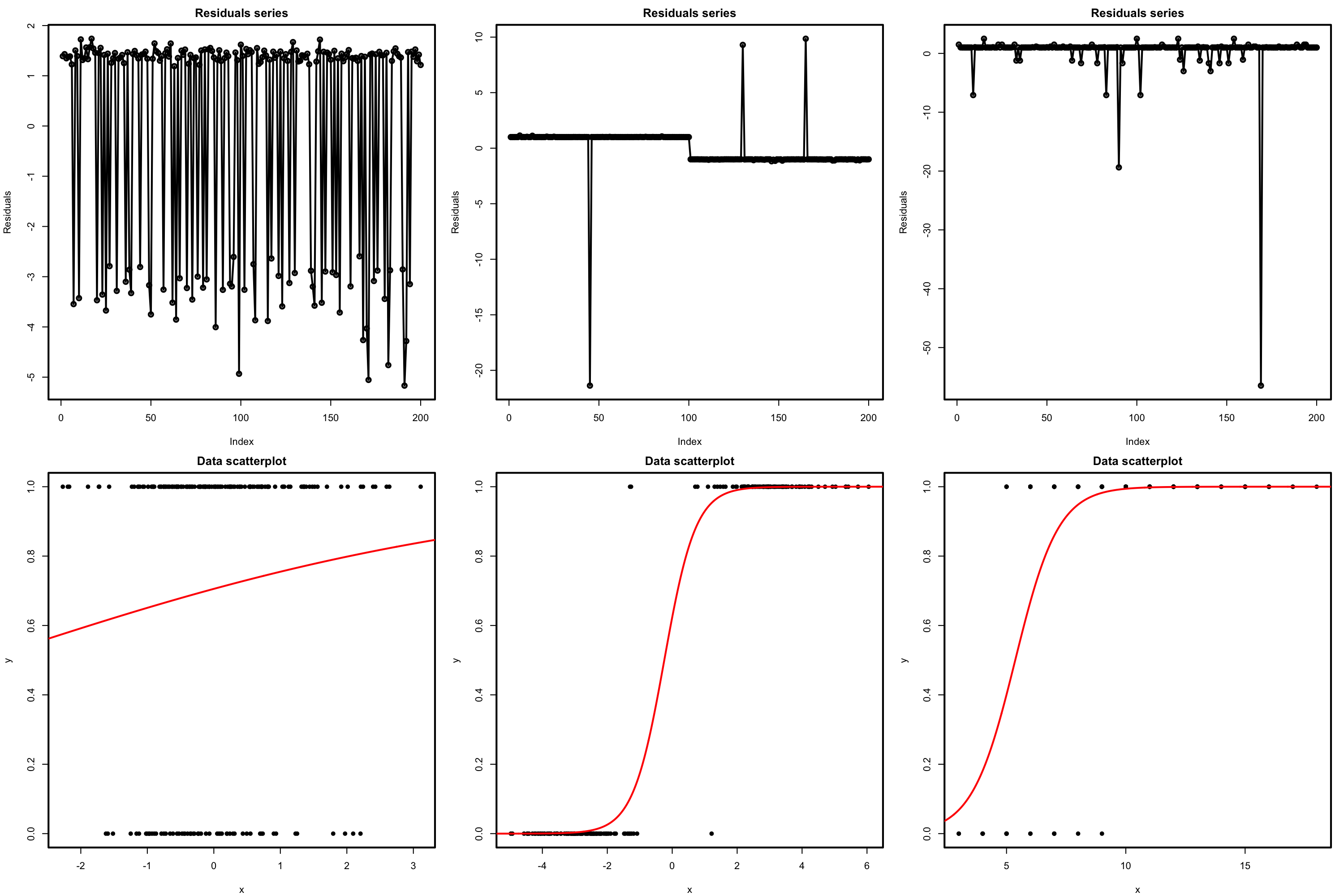

Figure 5.9 shows a180 saturated model and a fitted logistic model.

Figure 5.9: Fitted logistic regression versus a saturated model and the null model.

Formally, the deviance is defined through the difference of the log-likelihoods between the fitted model, \(\ell(\hat{\boldsymbol{\beta}}),\) and the saturated model, \(\ell_s.\) Computing \(\ell_s\) amounts to substitute \(\mu_i\) by \(Y_i\) in (5.20). If the canonical link function is used, this corresponds to setting \(\theta_i=g(Y_i)\) (recall (5.12)). The deviance is then defined as:

\[\begin{align*} D:=-2\left[\ell(\hat{\boldsymbol{\beta}})-\ell_s\right]\phi. \end{align*}\]

The log-likelihood \(\ell(\hat{\boldsymbol{\beta}})\) is always smaller than \(\ell_s\) (the saturated model is more likely given the sample, since it is the sample itself). As a consequence, the deviance is always greater than or equal to zero, being zero only if the fit of the model is perfect. It thus can be regarded as a distance between the fitted model and the saturated model.

If the canonical link function is employed, the deviance can be expressed as

\[\begin{align} D&=-\frac{2}{a(\phi)}\sum_{i=1}^n\left(Y_i\hat\theta_i-b(\hat\theta_i)-Y_ig(Y_i)+b(g(Y_i))\right)\phi\nonumber\\ &=\frac{2\phi}{a(\phi)}\sum_{i=1}^n\left(Y_i(g(Y_i)-\hat\theta_i)-b(g(Y_i))+b(\hat\theta_i)\right).\tag{5.30} \end{align}\]

In most of the cases, \(a(\phi)\propto\phi,\) so the deviance does not depend on \(\phi\). Expression (5.30) is interesting, since it delivers the following key insight:

The deviance generalizes the Residual Sum of Squares (RSS) of the linear model. The generalization is driven by the likelihood and its equivalence with the RSS in the linear model.

To see this insight, let’s consider the linear model in (5.30) by setting \(\phi=\sigma^2,\) \(a(\phi)=\phi,\) \(b(\theta)=\frac{\theta^2}{2},\) \(c(y,\phi)=-\frac{1}{2}\{\frac{y^2}{\phi}+\log(2\pi\phi)\},\) and \(\theta=\mu=\eta.\)181 Then:

\[\begin{align} D&=\frac{2\sigma^2}{\sigma^2}\sum_{i=1}^n\left(Y_i(Y_i-\hat{\eta}_i)-\frac{Y_i^2}{2}+\frac{\hat{\eta}_i^2}{2}\right)\nonumber\\ &=\sum_{i=1}^n\left(2Y_i^2-2Y_i\hat{\eta}_i-Y_i^2+\hat{\eta}_i^2\right)\nonumber\\ &=\sum_{i=1}^n\left(Y_i-\hat{\eta}_i\right)^2\nonumber\\ &=\mathrm{RSS}(\hat{\boldsymbol{\beta}}),\tag{5.31} \end{align}\]

since \(\hat{\eta}_i=\hat\beta_0+\hat\beta_1x_{i1}+\cdots+\hat\beta_px_{ip}.\) Remember that \(\mathrm{RSS}(\hat{\boldsymbol{\beta}})\) is just another name for the SSE.

A benchmark for evaluating the scale of the deviance is the null deviance,

\[\begin{align*} D_0:=-2\left[\ell(\hat{\beta}_0)-\ell_s\right]\phi, \end{align*}\]

which is the deviance of the model without predictors, the one featuring only an intercept, to the perfect model. In logistic regression, this model is

\[\begin{align*} Y \mid (X_1=x_1,\ldots,X_p=x_p)\sim \mathrm{Ber}(\mathrm{logistic}(\beta_0)) \end{align*}\]

and, in this case, \(\hat\beta_0=\mathrm{logit}(\frac{m}{n})=\log\left(\frac{\frac{m}{n}}{1-\frac{m}{n}}\right)\) where \(m\) is the number of \(1\)’s in \(Y_1,\ldots,Y_n\) (see Figure 5.9).

Using again (5.30), we can see that the null deviance is a generalization of the total sum of squares of the linear model (see Section 2.6):

\[\begin{align*} D_0=\sum_{i=1}^n\left(Y_i-\hat{\eta}_i\right)^2=\sum_{i=1}^n\left(Y_i-\hat\beta_0\right)^2=\mathrm{SST}, \end{align*}\]

since \(\hat\beta_0=\bar Y\) because there are no predictors.

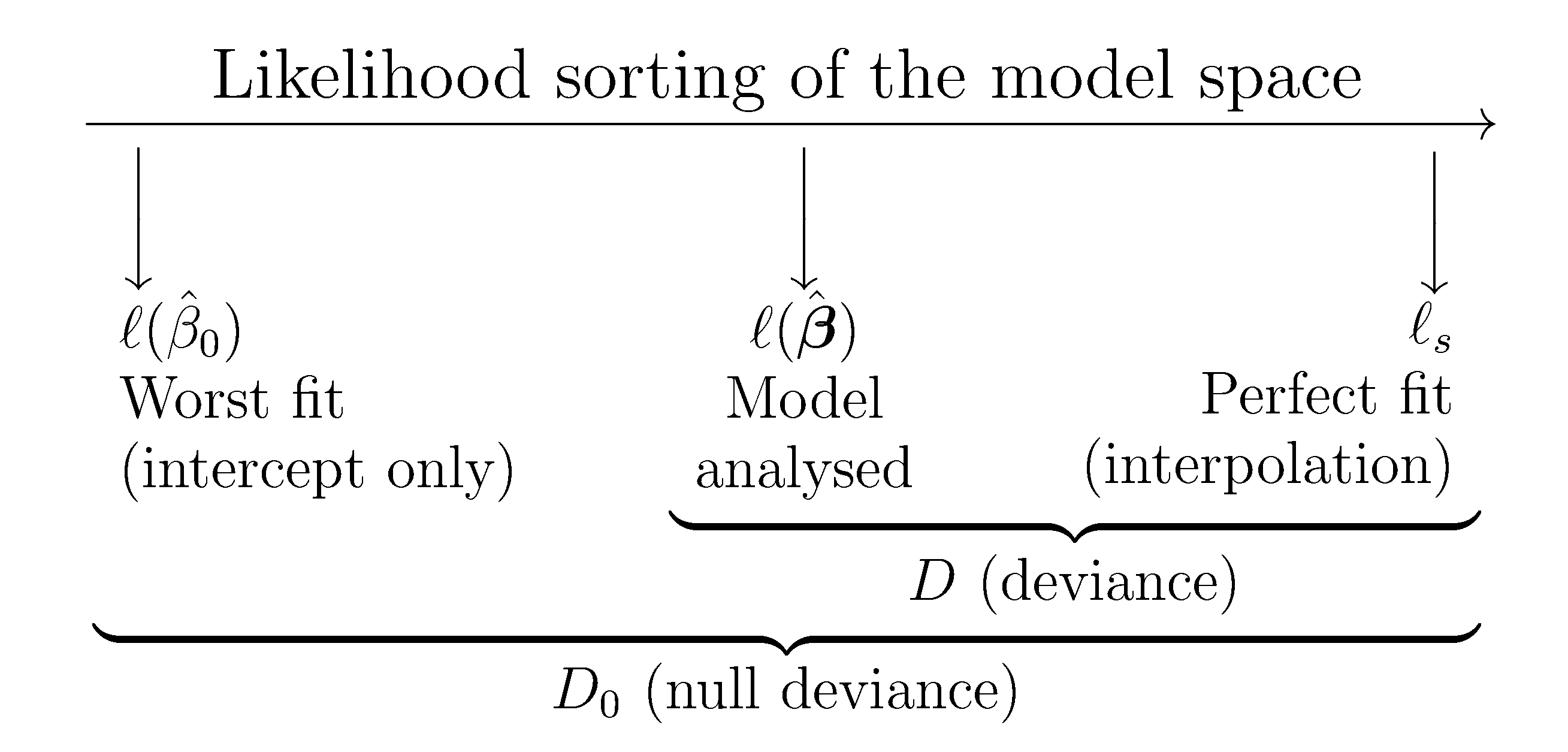

The deviance and the null deviance offer a likelihood-based generalization of the ANOVA decomposition of SST into the RSS and the regression sum of squares. The difference between the null deviance and the deviance can be regarded as a generalization of the regression sum of squares. Figure 5.10 illustrates this deviance decomposition.

Figure 5.10: Pictorial representation of the model space indexed by the likelihood. The “size” of this space is given by the null deviance (\(D_0\)), while the deviance (\(D\)) measures the distance of the fitted model from the perfect model. This deviance decomposition is a likelihood-based generalization of the ANOVA decomposition.

Using the deviance and the null deviance, we can compare how much the model has improved by adding the predictors \(X_1,\ldots,X_p\) and quantify the percentage of deviance explained. This can be done by means of the \(R^2\) statistic, which is a generalization of the determination coefficient for linear regression:

\[\begin{align*} R^2:=1-\frac{D}{D_0}\stackrel{\substack{\mathrm{linear}\\ \mathrm{model}\\{}}}{=}1-\frac{\mathrm{SSE}}{\mathrm{SST}}. \end{align*}\]

The \(R^2\) for generalized linear models is a measure that shares the same philosophy with the determination coefficient in linear regression: it is a proportion of how good the model fit is. If perfect, \(D=0\) and \(R^2=1.\) If the predictors are not model-related with \(Y,\) then \(D=D_0\) and \(R^2=0.\)

However, this \(R^2\) has a different interpretation than in linear regression. In particular:

- Is not the percentage of variance explained by the model, but rather a ratio indicating how close is the fit to being perfect or the worst.

- It is not related to any correlation coefficient.

The deviance is returned by summary. It is important to recall that R refers to the deviance as the 'Residual deviance' and the null deviance is referred to as 'Null deviance'.

# Summary of model

nasa <- glm(fail.field ~ temp, family = "binomial", data = challenger)

summary_log <- summary(nasa)

summary_log

##

## Call:

## glm(formula = fail.field ~ temp, family = "binomial", data = challenger)

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) 7.5837 3.9146 1.937 0.0527 .

## temp -0.4166 0.1940 -2.147 0.0318 *

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 28.267 on 22 degrees of freedom

## Residual deviance: 20.335 on 21 degrees of freedom

## AIC: 24.335

##

## Number of Fisher Scoring iterations: 5

# 'Residual deviance' is the deviance; 'Null deviance' is the null deviance

# Null model (only intercept)

null <- glm(fail.field ~ 1, family = "binomial", data = challenger)

summary_null <- summary(null)

summary_null

##

## Call:

## glm(formula = fail.field ~ 1, family = "binomial", data = challenger)

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) -0.8267 0.4532 -1.824 0.0681 .

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 28.267 on 22 degrees of freedom

## Residual deviance: 28.267 on 22 degrees of freedom

## AIC: 30.267

##

## Number of Fisher Scoring iterations: 4

# Compute the R^2 with a function -- useful for repetitive computations

r2glm <- function(model) {

summary_log <- summary(model)

1 - summary_log$deviance / summary_log$null.deviance

}

# R^2

r2glm(nasa)

## [1] 0.280619

r2glm(null)

## [1] -6.661338e-16A quantity related with the deviance is the scaled deviance:

\[\begin{align*} D^*:=\frac{D}{\phi}=-2\left[\ell(\hat{\boldsymbol{\beta}})-\ell_s\right]. \end{align*}\]

If \(\phi=1,\) such as in the binomial or Poisson regression models, then both the deviance and the scaled deviance agree. The scaled deviance has asymptotic distribution

\[\begin{align} D^*\stackrel{a}{\sim}\chi^2_{n-p-1},\tag{5.32} \end{align}\]

where \(\chi^2_{k}\) is the Chi-squared distribution with \(k\) degrees of freedom. In the case of the linear model, \(D^*=\frac{1}{\sigma^2}\mathrm{RSS}\) is exactly distributed as a \(\chi^2_{n-p-1}.\)

The result (5.32) provides a way of estimating \(\phi\) when it is unknown: match \(D^*\) with the expectation \(\mathbb{E}\left[\chi^2_{n-p-1}\right]=n-p-1.\) This provides

\[\begin{align*} \hat\phi_D:=\frac{-2(\ell(\hat{\boldsymbol{\beta}})-\ell_s)}{n-p-1}, \end{align*}\]

which, as expected, in the case of the linear model is equivalent to \(\hat\sigma^2\) as given in (2.15). More importantly, the scaled deviance can be used for performing hypotheses tests on sets of coefficients of a generalized linear model.

Assume we have one model, say \(M_2,\) with \(p_2\) predictors and another model, say \(M_1\), with \(p_1<p_2\) predictors that are contained in the set of predictors of the M2. In other words, assume \(M_1\) is nested within \(M_2.\) Then we can test the null hypothesis that the extra coefficients of \(M_2\) are simultaneously zero. For example, if \(M_1\) has the coefficients \(\{\beta_0,\beta_1,\ldots,\beta_{p_1}\}\) and M2 has coefficients \(\{\beta_0,\beta_1,\ldots,\beta_{p_1},\beta_{p_1+1},\ldots,\beta_{p_2}\},\) we can test

\[\begin{align*} H_0:\beta_{p_1+1}=\cdots=\beta_{p_2}=0\quad\text{vs.}\quad H_1:\beta_j\neq 0\text{ for any }p_1<j\leq p_2. \end{align*}\]

This can be done by means of the statistic182

\[\begin{align} D^*_{p_1}-D^*_{p_2}\stackrel{a,\,H_0}{\sim}\chi^2_{p_2-p_1}.\tag{5.33} \end{align}\]

If \(H_0\) is true,183 then \(D_{p_1}^*-D^*_{p_2}\) is expected to be small, thus we will reject \(H_0\) if the value of the statistic is above the \(\alpha\)-upper quantile of the \(\chi^2_{p_2-p_1}\), denoted as \(\chi^2_{\alpha;p_2-p_1}.\)

\(D^*\) apparently removes the effects of \(\phi,\) but it is still dependent on \(\phi,\) since this is hidden in the likelihood (see (5.30)). Therefore, \(D^*\) cannot be computed unless \(\phi\) is known, which forbids using (5.33). Hopefully, this dependence is removed by employing (5.32) and (5.33) and assuming that they are asymptotically independent. This gives the \(F\)-test for \(H_0\):

\[\begin{align*} F=\frac{(D^*_{p_1}-D^*_{p_2})/(p_2-p_1)}{D^*_{p_2}/(n-p_2-1)}=\frac{(D_{p_1}-D_{p_2})/(p_2-p_1)}{D_{p_2}/(n-p_2-1)}\stackrel{a,\,H_0}{\sim} F_{p_2-p_1,n-p_2-1}. \end{align*}\]

Note that \(F\) is perfectly computable, since \(\phi\) cancels due to the quotient (and because we assume that \(a(\phi)\propto\phi\)).

Note also that this is an extension of the \(F\)-test seen in Section 2.6: take \(p_1=0\) and \(p_2=p\) and then one tests the significance of all the predictors included in the model (both models contain intercept).

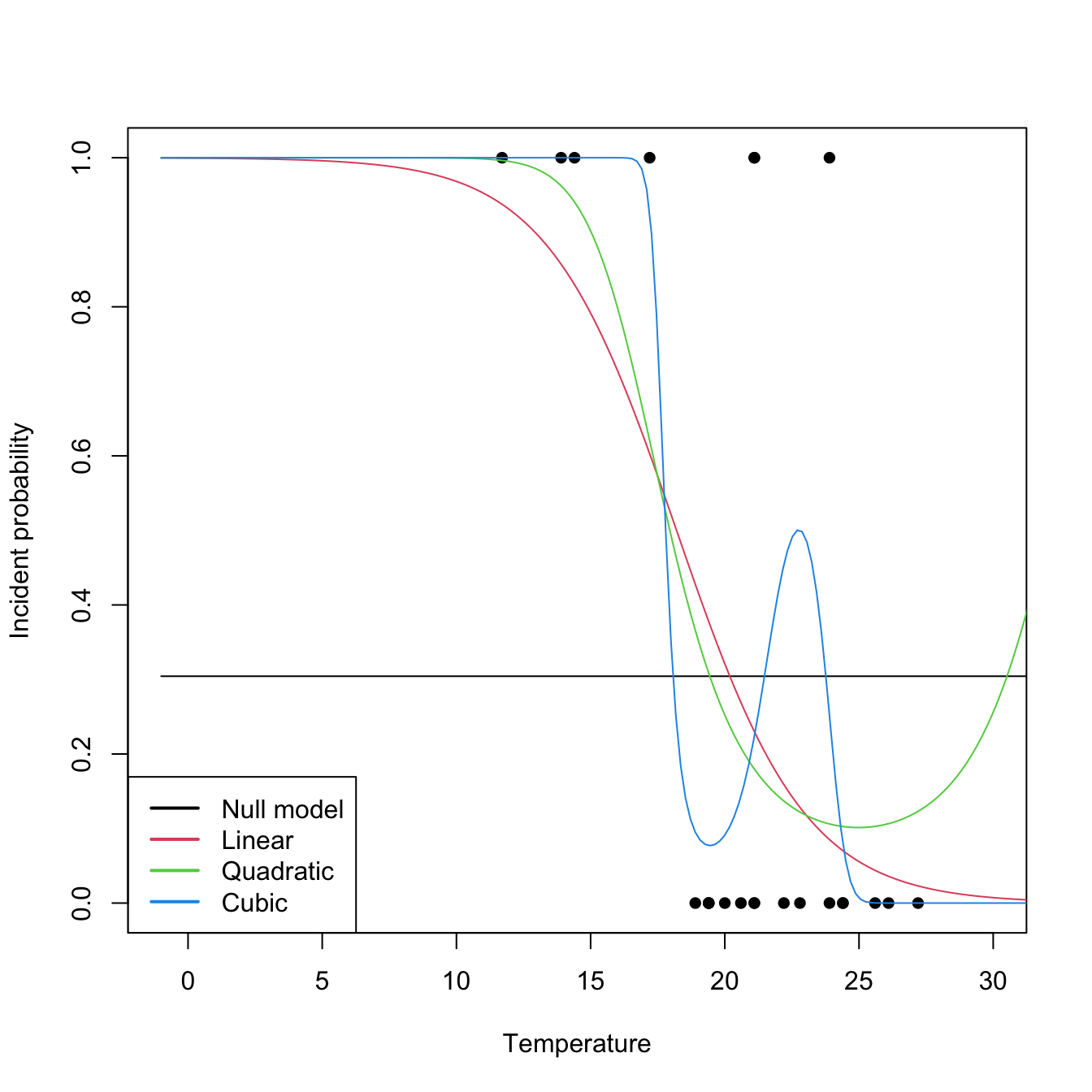

The computation of deviances and associated tests is done through anova, which implements the Analysis of Deviance. This is illustrated in the following code, which coincidentally also illustrates the inclusion of nonlinear transformations on the predictors.

# Polynomial predictors

nasa0 <- glm(fail.field ~ 1, family = "binomial", data = challenger)

nasa1 <- glm(fail.field ~ temp, family = "binomial", data = challenger)

nasa2 <- glm(fail.field ~ poly(temp, degree = 2), family = "binomial",

data = challenger)

nasa3 <- glm(fail.field ~ poly(temp, degree = 3), family = "binomial",

data = challenger)

# Plot fits

temp <- seq(-1, 35, l = 200)

tt <- data.frame(temp = temp)

plot(fail.field ~ temp, data = challenger, pch = 16, xlim = c(-1, 30),

xlab = "Temperature", ylab = "Incident probability")

lines(temp, predict(nasa0, newdata = tt, type = "response"), col = 1)

lines(temp, predict(nasa1, newdata = tt, type = "response"), col = 2)

lines(temp, predict(nasa2, newdata = tt, type = "response"), col = 3)

lines(temp, predict(nasa3, newdata = tt, type = "response"), col = 4)

legend("bottomleft", legend = c("Null model", "Linear", "Quadratic", "Cubic"),

lwd = 2, col = 1:4)

# R^2's

r2glm(nasa0)

## [1] -6.661338e-16

r2glm(nasa1)

## [1] 0.280619

r2glm(nasa2)

## [1] 0.3138925

r2glm(nasa3)

## [1] 0.4831863

# Chisq and F tests -- same results since phi is known

anova(nasa1, test = "Chisq")

## Analysis of Deviance Table

##

## Model: binomial, link: logit

##

## Response: fail.field

##

## Terms added sequentially (first to last)

##

##

## Df Deviance Resid. Df Resid. Dev Pr(>Chi)

## NULL 22 28.267

## temp 1 7.9323 21 20.335 0.004856 **

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

anova(nasa1, test = "F")

## Analysis of Deviance Table

##

## Model: binomial, link: logit

##

## Response: fail.field

##

## Terms added sequentially (first to last)

##

##

## Df Deviance Resid. Df Resid. Dev F Pr(>F)

## NULL 22 28.267

## temp 1 7.9323 21 20.335 7.9323 0.004856 **

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

# Incremental comparisons of nested models

anova(nasa1, nasa2, nasa3, test = "Chisq")

## Analysis of Deviance Table

##

## Model 1: fail.field ~ temp

## Model 2: fail.field ~ poly(temp, degree = 2)

## Model 3: fail.field ~ poly(temp, degree = 3)

## Resid. Df Resid. Dev Df Deviance Pr(>Chi)

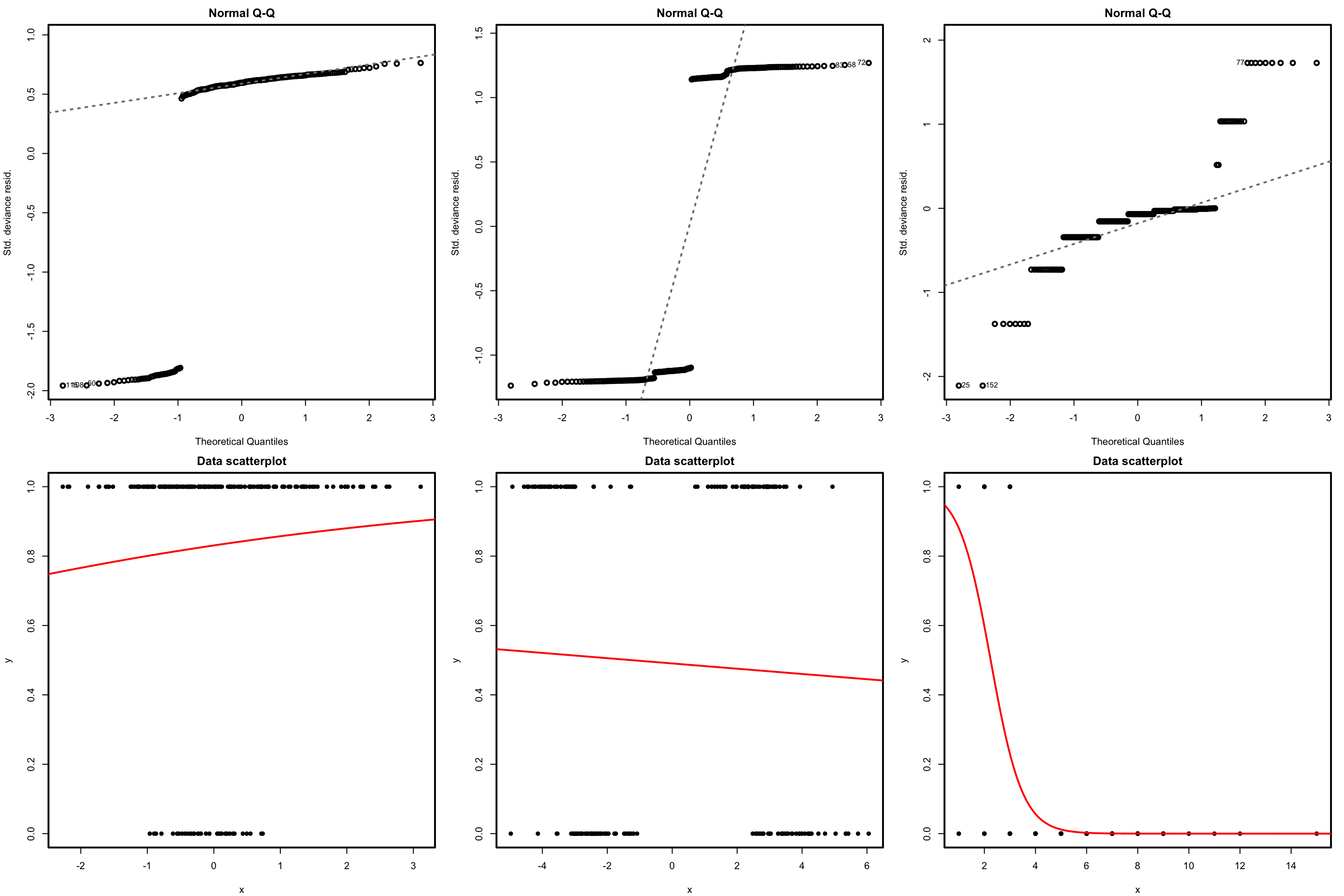

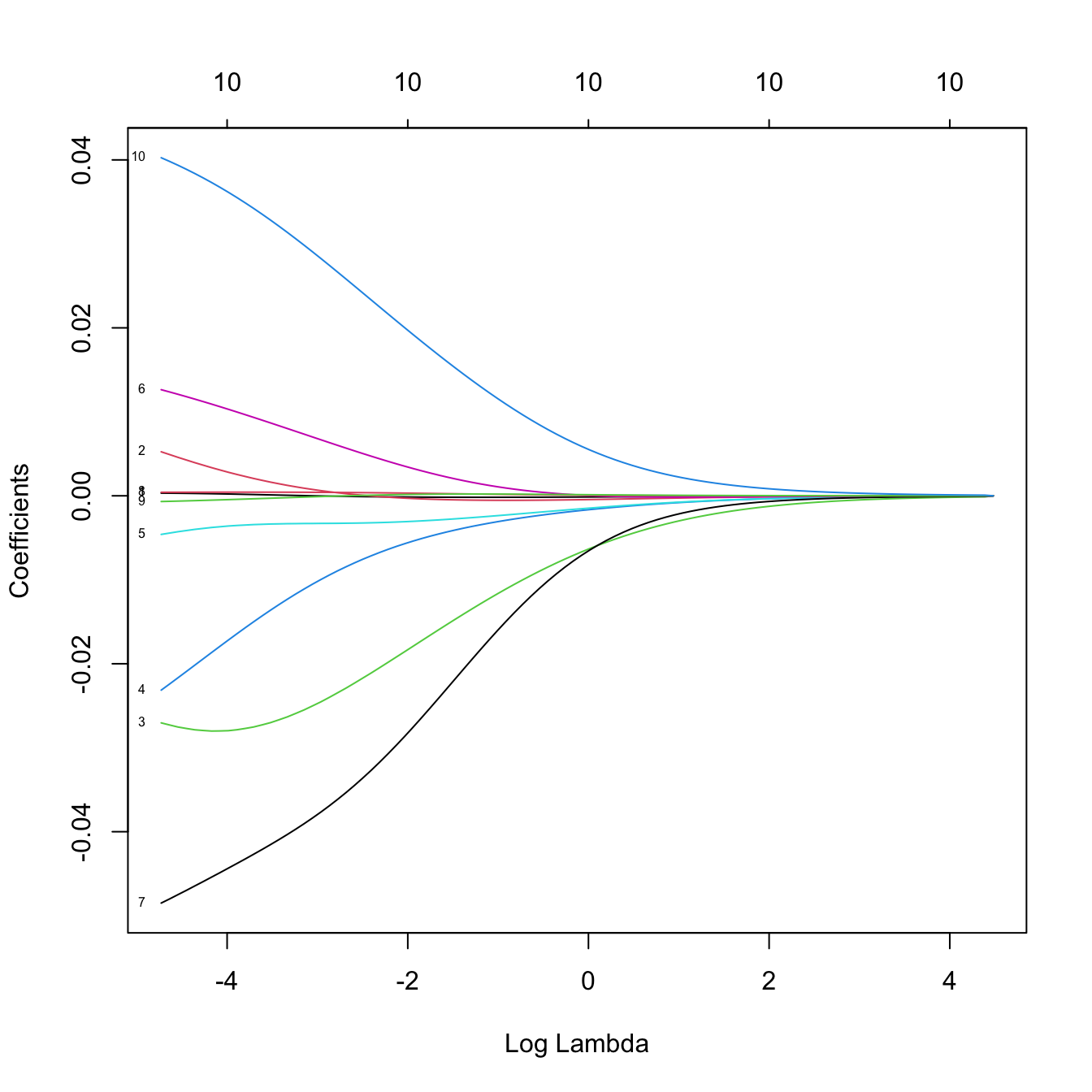

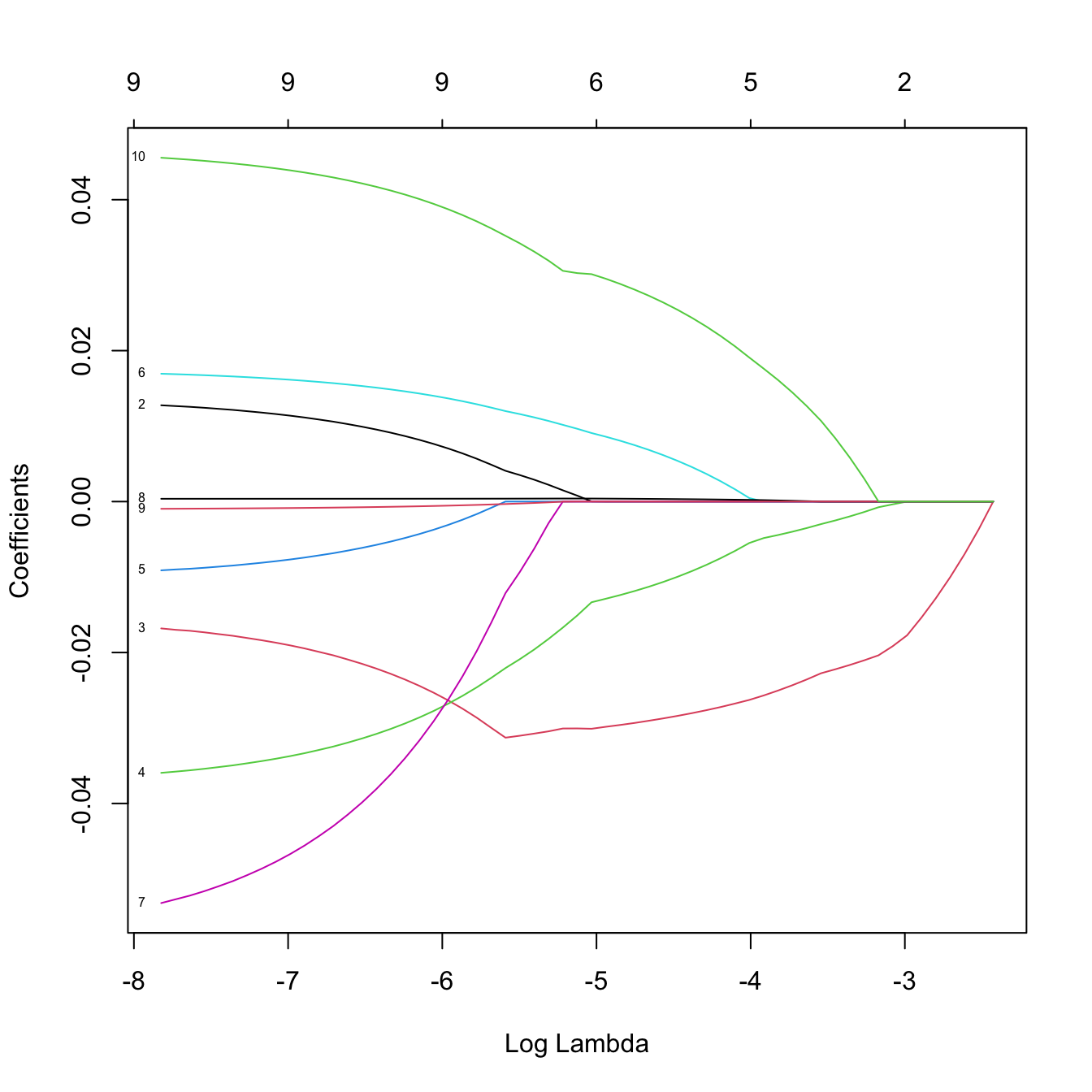

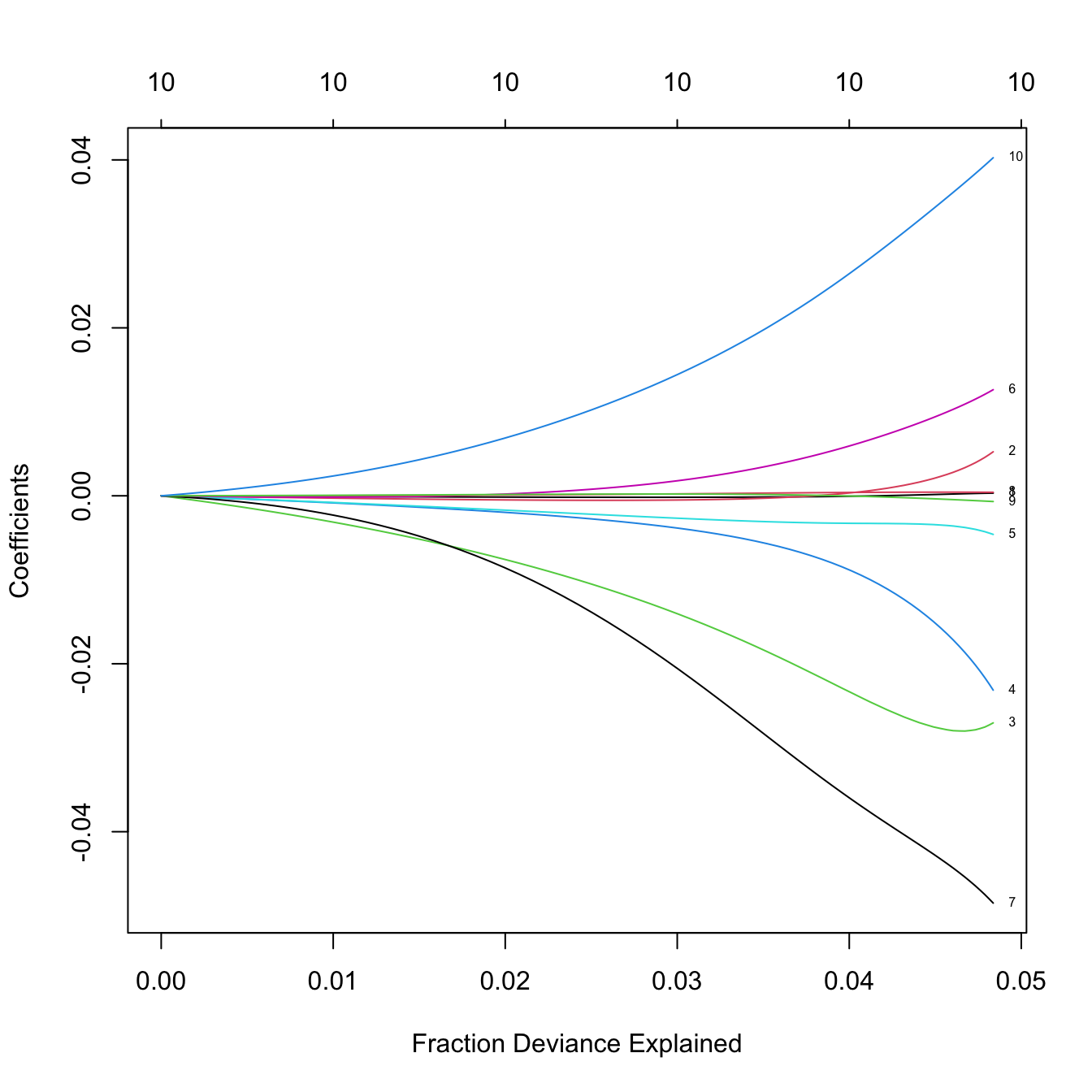

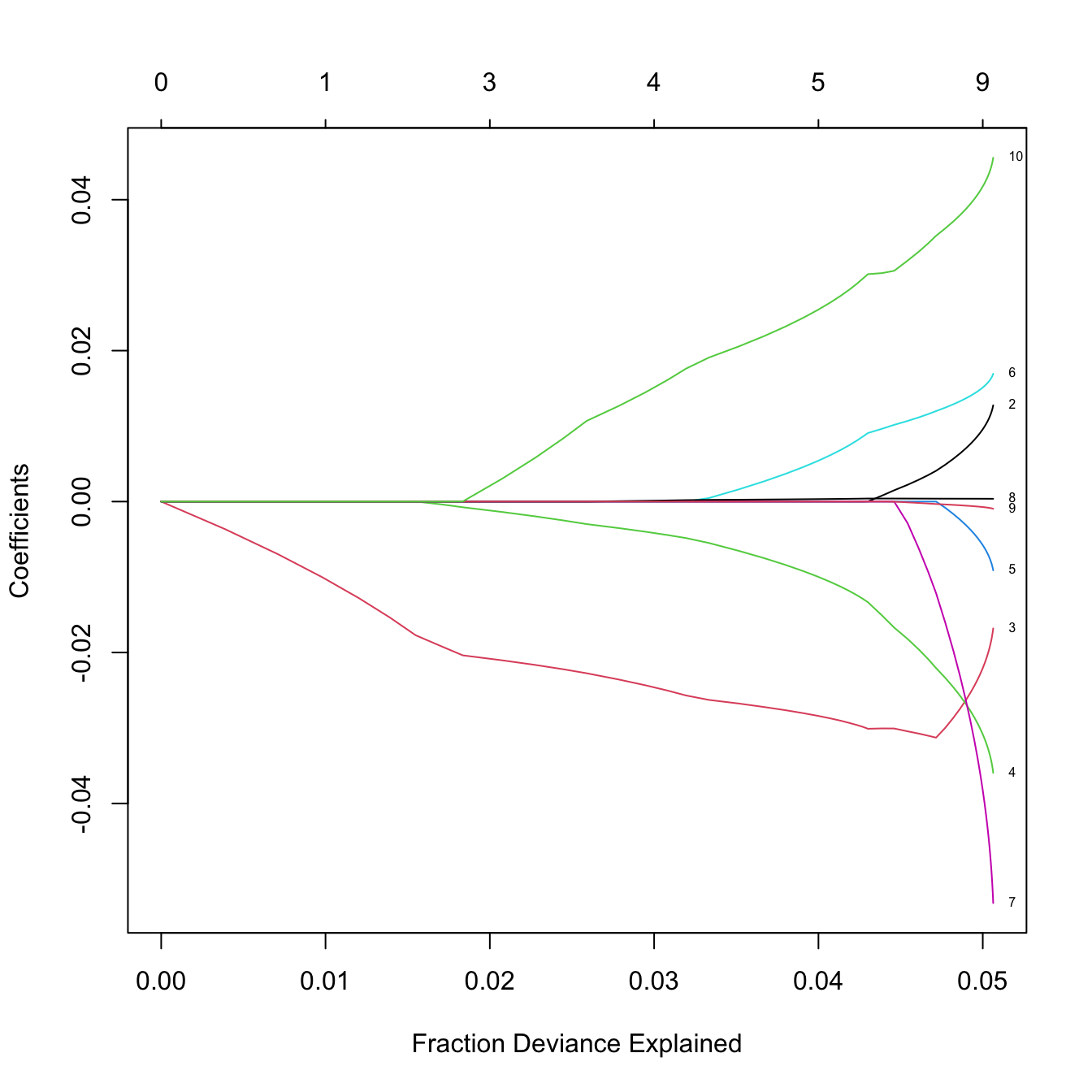

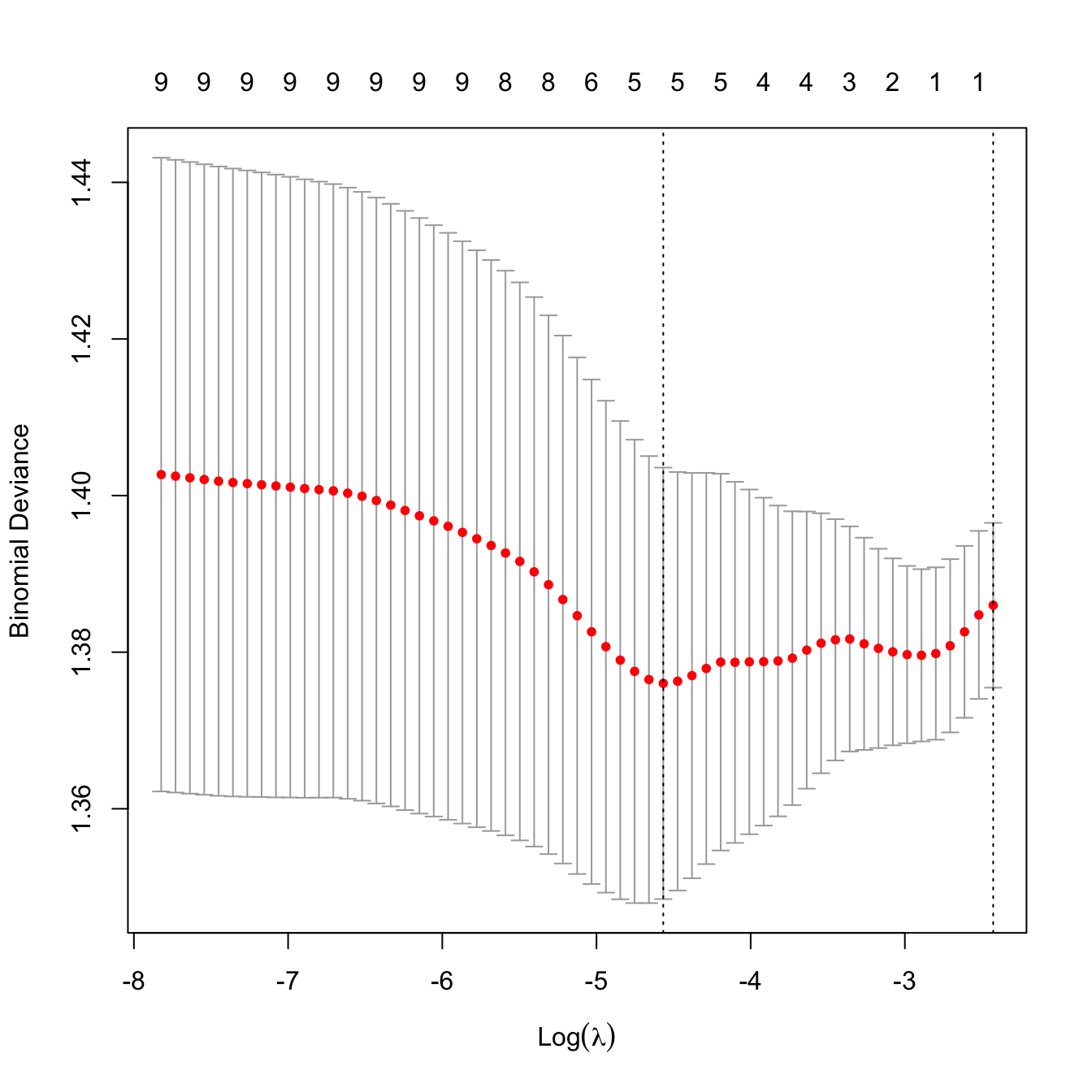

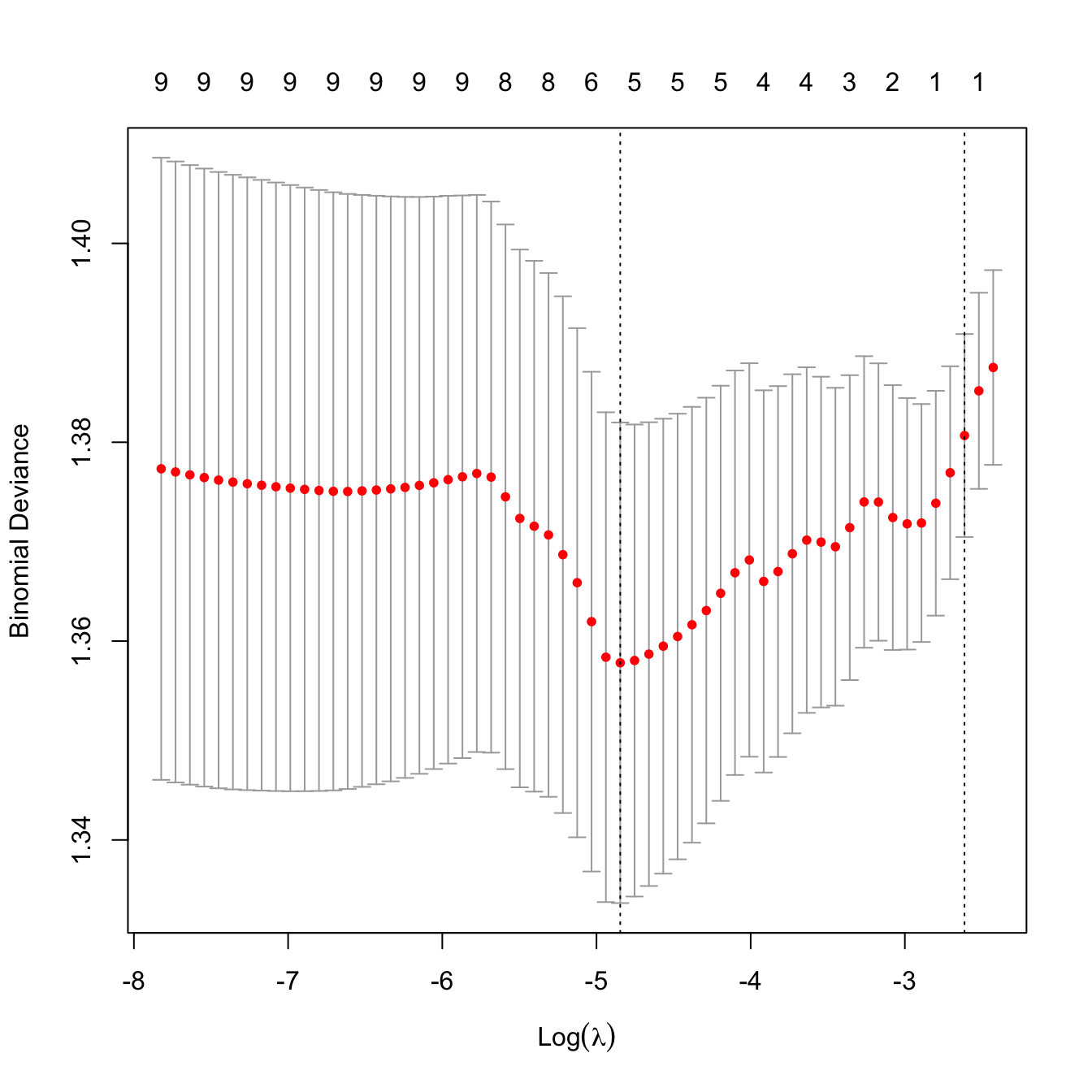

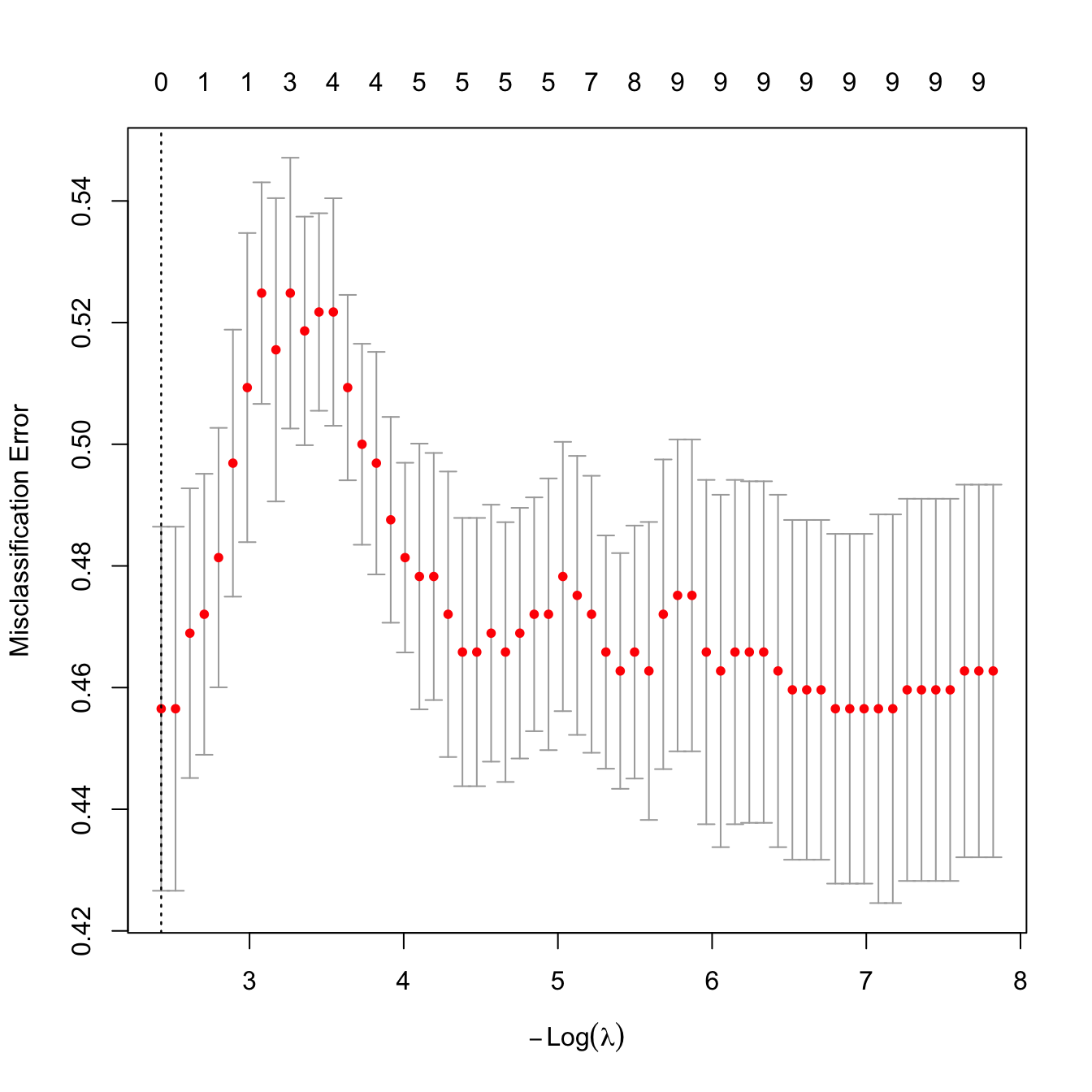

## 1 21 20.335