3 Linear models II: model selection, extensions, and diagnostics

Given the response \(Y\) and the predictors \(X_1,\ldots,X_p,\) many linear models can be built for predicting and explaining \(Y.\) In this chapter we will see how to address the problem of selecting the best subset of predictors \(X_1,\ldots,X_p\) for explaining \(Y.\) Among others, we will also see how to extend the linear model to account for nonlinear relations between \(Y\) and \(X_1,\ldots,X_p,\) how to check whether the assumptions of the model are realistic in practice, and how to incorporate dimension reduction within linear regression.

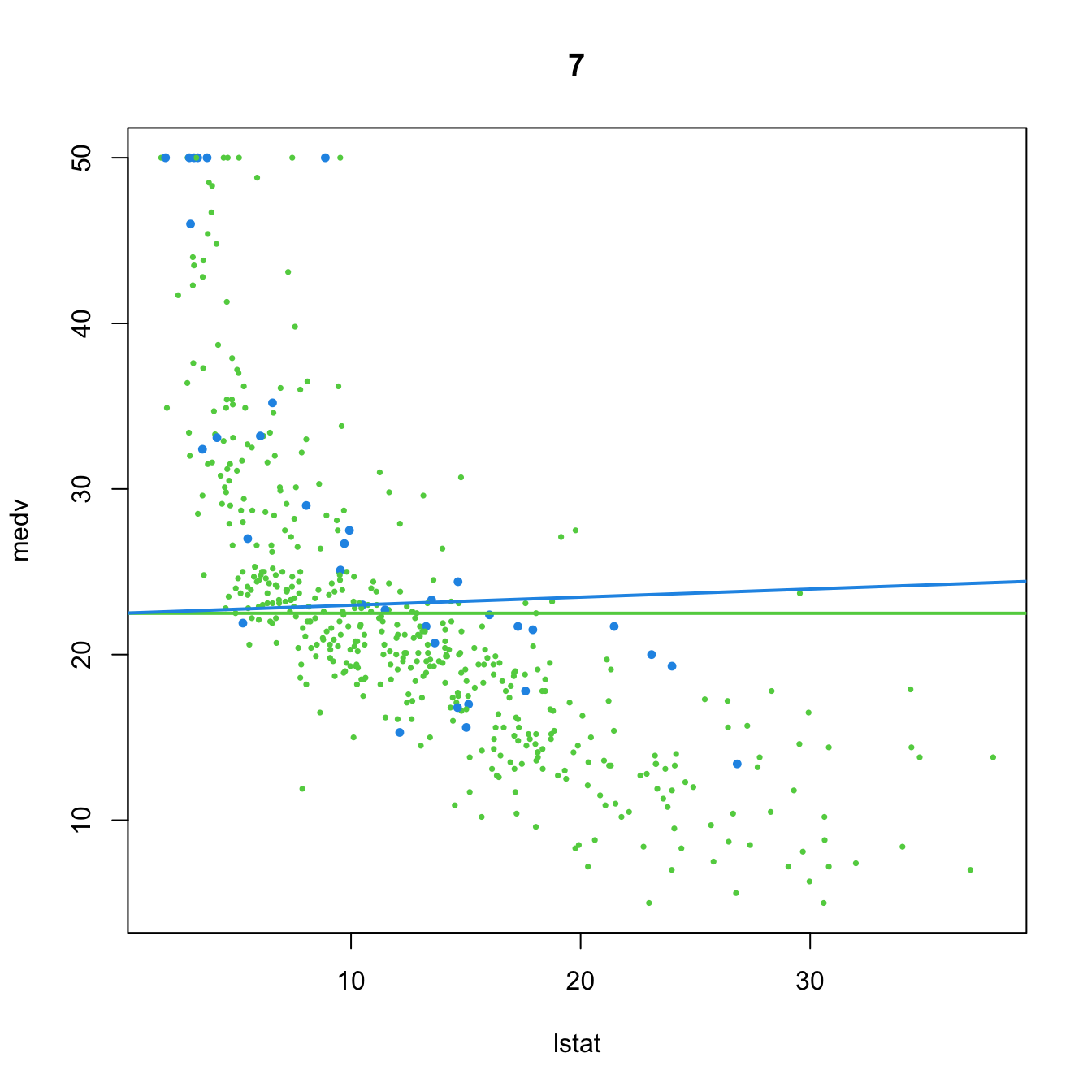

3.1 Case study: Housing values in Boston

This case study is motivated by Harrison and Rubinfeld (1978), who proposed a hedonic model52 for determining the willingness of house buyers to pay for clean air. The study of Harrison and Rubinfeld (1978) employed data from the Boston metropolitan area, containing 560 suburbs and 14 variables. The Boston dataset is available through the file Boston.xlsx and through the dataset MASS::Boston.

The description of the related variables can be found in ?Boston and Harrison and Rubinfeld (1978),53 but we summarize here the most important ones as they appear in Boston. They are aggregated into five categories:

-

Dependent variable:

medv, the median value of owner-occupied homes (in thousands of dollars). -

Structural variables indicating the house characteristics:

rm(average number of rooms “in owner units”) andage(proportion of owner-occupied units built prior to 1940). -

Neighborhood variables:

crim(crime rate),zn(proportion of residential areas),indus(proportion of non-retail business area),chas(whether there is river limitation),tax(cost of public services in each community),ptratio(pupil-teacher ratio),black(variable \(1000(B - 0.63)^2,\) where \(B\) is the proportion of black population – low and high values of \(B\) increase housing prices) andlstat(percent of lower status of the population). -

Accessibility variables:

dis(distances to five employment centers) andrad(accessibility to radial highways – larger index denotes better accessibility). -

Air pollution variable:

nox, the annual concentration of nitrogen oxide (in parts per ten million).

We begin by importing the data:

# Read data

Boston <- readxl::read_excel(path = "Boston.xlsx", sheet = 1, col_names = TRUE)

# # Alternatively

# data(Boston, package = "MASS")A quick summary of the data is shown below:

summary(Boston)

## crim zn indus chas nox rm

## Min. : 0.00632 Min. : 0.00 Min. : 0.46 Min. :0.00000 Min. :0.3850 Min. :3.561

## 1st Qu.: 0.08205 1st Qu.: 0.00 1st Qu.: 5.19 1st Qu.:0.00000 1st Qu.:0.4490 1st Qu.:5.886

## Median : 0.25651 Median : 0.00 Median : 9.69 Median :0.00000 Median :0.5380 Median :6.208

## Mean : 3.61352 Mean : 11.36 Mean :11.14 Mean :0.06917 Mean :0.5547 Mean :6.285

## 3rd Qu.: 3.67708 3rd Qu.: 12.50 3rd Qu.:18.10 3rd Qu.:0.00000 3rd Qu.:0.6240 3rd Qu.:6.623

## Max. :88.97620 Max. :100.00 Max. :27.74 Max. :1.00000 Max. :0.8710 Max. :8.780

## age dis rad tax ptratio black lstat

## Min. : 2.90 Min. : 1.130 Min. : 1.000 Min. :187.0 Min. :12.60 Min. : 0.32 Min. : 1.73

## 1st Qu.: 45.02 1st Qu.: 2.100 1st Qu.: 4.000 1st Qu.:279.0 1st Qu.:17.40 1st Qu.:375.38 1st Qu.: 6.95

## Median : 77.50 Median : 3.207 Median : 5.000 Median :330.0 Median :19.05 Median :391.44 Median :11.36

## Mean : 68.57 Mean : 3.795 Mean : 9.549 Mean :408.2 Mean :18.46 Mean :356.67 Mean :12.65

## 3rd Qu.: 94.08 3rd Qu.: 5.188 3rd Qu.:24.000 3rd Qu.:666.0 3rd Qu.:20.20 3rd Qu.:396.23 3rd Qu.:16.95

## Max. :100.00 Max. :12.127 Max. :24.000 Max. :711.0 Max. :22.00 Max. :396.90 Max. :37.97

## medv

## Min. : 5.00

## 1st Qu.:17.02

## Median :21.20

## Mean :22.53

## 3rd Qu.:25.00

## Max. :50.00The two goals of this case study are:

- Q1. Quantify the influence of the predictor variables in the housing prices.

- Q2. Obtain the “best possible” model for decomposing the housing prices and interpret it.

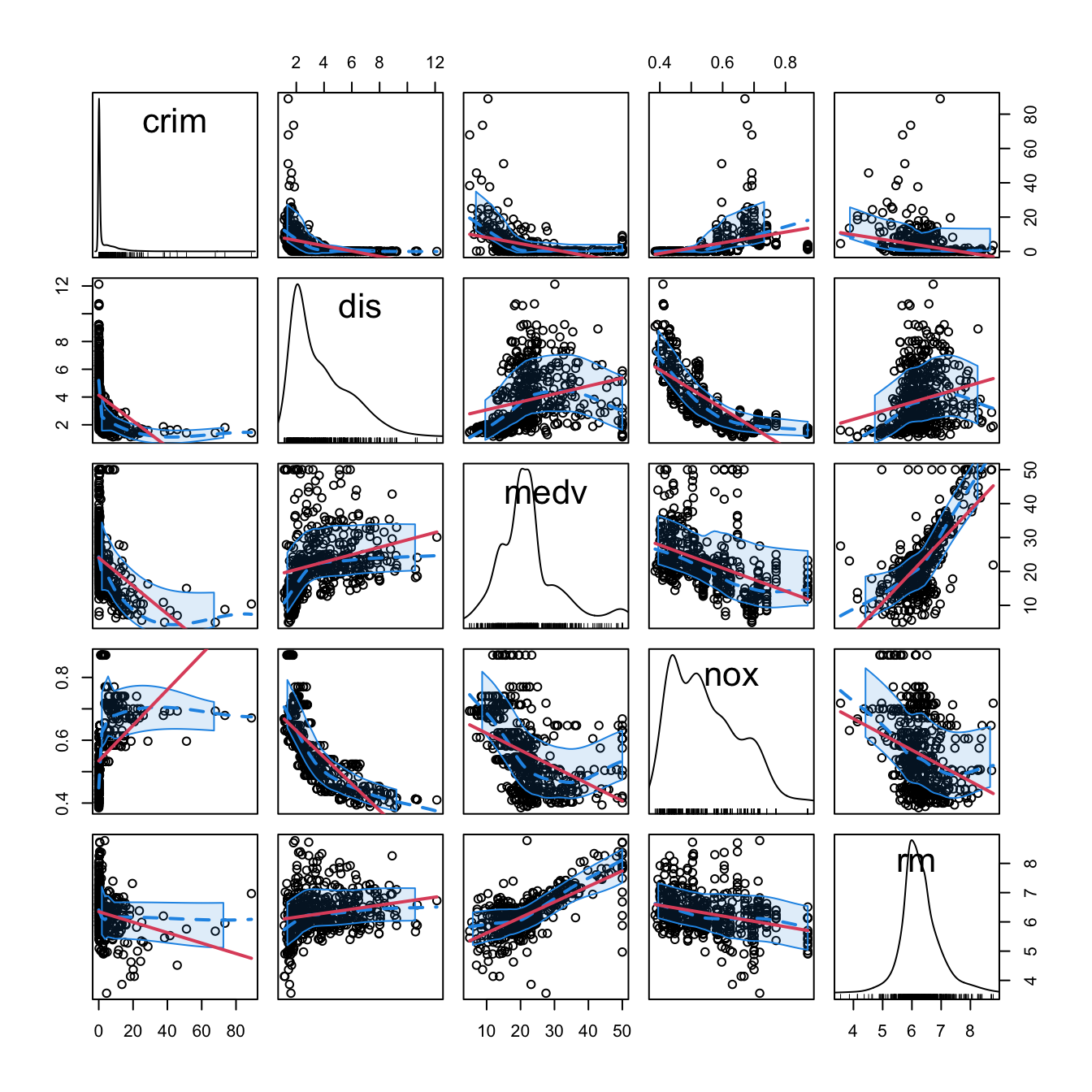

We begin by making an exploratory analysis of the data with a matrix scatterplot. Since the number of variables is high, we opt to plot only five variables: crim, dis, medv, nox, and rm. Each of them represents the five categories in which variables were classified.

car::scatterplotMatrix(~ crim + dis + medv + nox + rm, regLine = list(col = 2),

col = 1, smooth = list(col.smooth = 4, col.spread = 4),

data = Boston)

Figure 3.1: Scatterplot matrix for crim, dis, medv, nox, and rm from the Boston dataset. The diagonal panels show an estimate of the unknown pdf of each variable (see Section 6.1.2). The red and blue lines are linear and nonparametric (see Section 6.2) estimates of the regression functions for pairwise relations.

Note the peculiar distribution of crim, very concentrated at zero, and the asymmetry in medv, with a second mode associated with the most expensive properties. Inspecting the individual panels, it is clear that some nonlinearity exists in the data and that some predictors are going to be more important than others (and recall that we have plotted just a subset of all the predictors).

3.2 Model selection

In Chapter 2 we briefly saw that the inclusion of more predictors is not for free: there is a price to pay in terms of more variability in the coefficients estimates, harder interpretation, and possible inclusion of highly-dependent predictors. Indeed, there is a maximum number of predictors \(p\) that can be considered in a linear model for a sample size \(n\): \[\begin{align*} p\leq n-2. \end{align*}\] Equivalently, there is a minimum sample size \(n\) required for fitting a model with \(p\) predictors: \(n\geq p + 2.\)

The interpretation of this fact is simple if we think about the geometry for \(p=1\) and \(p=2\):

- If \(p=1,\) we need at least \(n=2\) points to uniquely fit a line. However, this line gives no information on the vertical variation about it, hence \(\sigma^2\) cannot be estimated.54 Therefore, we need at least \(n=3\) points, that is, \(n\geq p + 2=3.\)

- If \(p=2,\) we need at least \(n=3\) points to uniquely fit a plane. But again this plane gives no information on the variation of the data about it and hence \(\sigma^2\) cannot be estimated. Therefore, we need \(n\geq p + 2=4.\)

Another interpretation is the following:

The fitting of a linear model with \(p\) predictors involves the estimation of \(p+2\) parameters \((\boldsymbol{\beta},\sigma^2)\) from \(n\) data points. The closer \(p+2\) and \(n\) are, the more variable the estimates \((\hat{\boldsymbol{\beta}},\hat\sigma^2)\) will be, since less information is available for estimating each one. In the limit case \(n=p+2,\) each sample point determines a parameter estimate.

In the above discussion the degenerate case \(p=n-1\) was excluded, as it gives a perfect and useless fit for which \(\hat\sigma^2\) is not defined. However, \(\hat{\boldsymbol{\beta}}\) is actually computable if \(p=n-1.\) The output of the next chunk of code clarifies the distinction between \(p=n-1\) and \(p=n-2.\)

# Data: n observations and p = n - 1 predictors

set.seed(123456)

n <- 5

p <- n - 1

df <- data.frame(y = rnorm(n), x = matrix(rnorm(n * p), nrow = n, ncol = p))

# Case p = n - 1 = 4: beta can be estimated, but sigma^2 cannot (hence, no

# inference can be performed since it requires the estimated sigma^2)

summary(lm(y ~ ., data = df))

# Case p = n - 2 = 3: both beta and sigma^2 can be estimated (hence, inference

# can be performed)

summary(lm(y ~ . - x.1, data = df))The degrees of freedom \(n-p-1\) quantify the increase in the variability of \((\hat{\boldsymbol{\beta}},\hat\sigma^2)\) when \(p\) approaches \(n-2.\) For example:

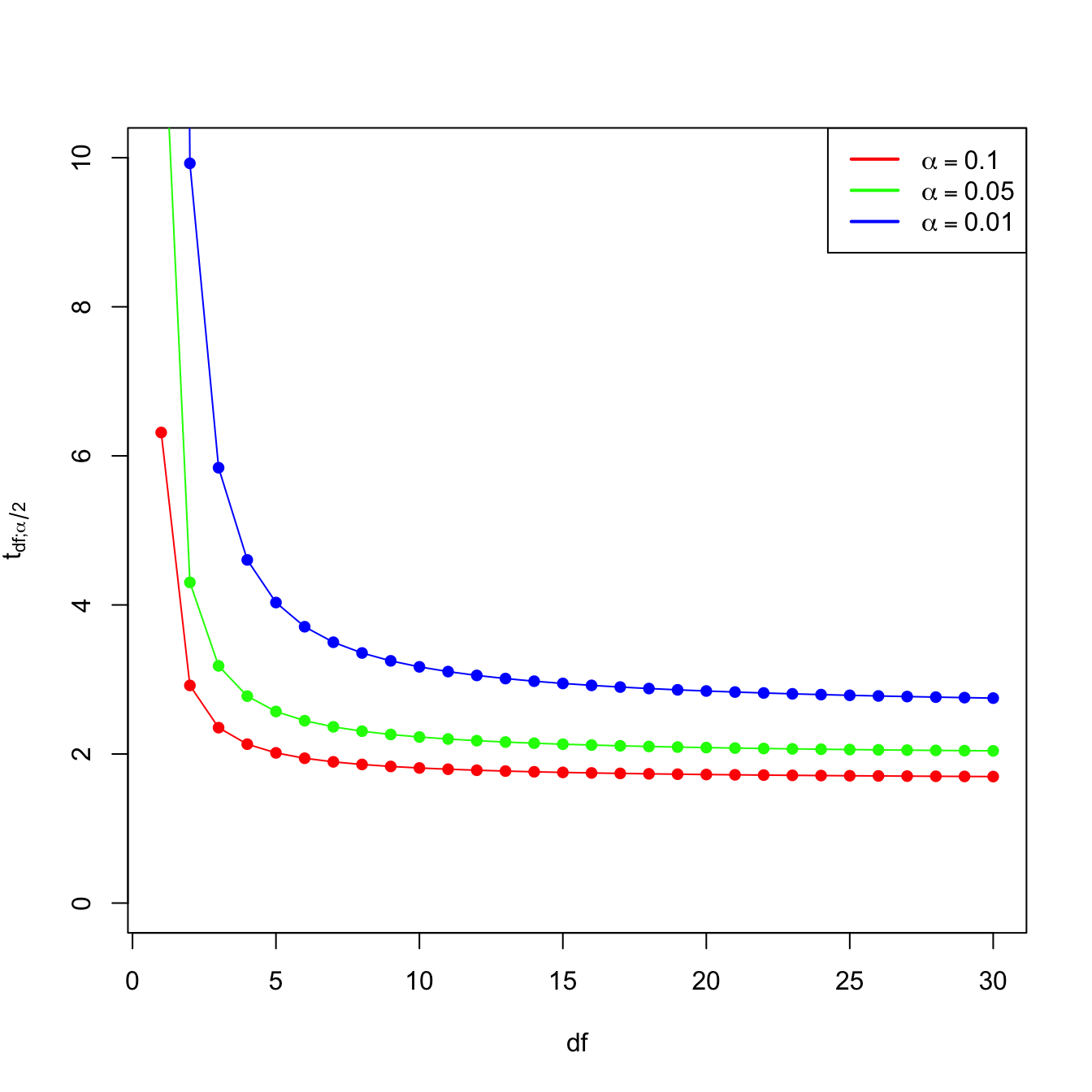

- \(t_{n-p-1;\alpha/2}\) appears in (2.16) and influences the length of the CIs for \(\beta_j,\) see (2.17). It also influences the length of the CIs for the prediction. As Figure 3.2 shows, when the degrees of freedom decrease, \(t_{n-p-1;\alpha/2}\) increases, thus the intervals widen.

- \(\hat\sigma^2=\frac{1}{n-p-1}\sum_{i=1}^n\hat\varepsilon_i^2\) influences the \(R^2\) and \(R^2_\text{Adj}.\) If no relevant variables are added to the model then \(\sum_{i=1}^n\hat\varepsilon_i^2\) will not change substantially. However, the factor \(\frac{1}{n-p-1}\) will increase as \(p\) augments, inflating \(\hat\sigma^2\) and its variance. This is exactly what happened in Figure 2.19.

Figure 3.2: Effect of \(\text{df}=n-p-1\) in \(t_{\text{df};\alpha/2}\) for \(\alpha=0.10,0.05,0.01.\)

Now that we have shed more light on the problem of having an excess of predictors, we turn the focus on selecting the most adequate predictors for a multiple regression model. This is a challenging task without a unique solution, and what is worse, without a method that is guaranteed to work in all the cases. However, there is a well-established procedure that usually gives good results: the stepwise model selection. Its principle is to sequentially compare multiple linear regression models with different predictors,55 improving iteratively a performance measure through a greedy search.

Stepwise model selection typically uses as measure of performance an information criterion. An information criterion balances the fitness of a model with the number of predictors employed. Hence, it determines objectively the best model as the one that minimizes the considered information criterion. Two common criteria are the Bayesian Information Criterion (BIC) and the Akaike Information Criterion (AIC). Both are based on balancing the model fitness and its complexity:

\[\begin{align} \text{BIC}(\text{model}) = \underbrace{-2\ell(\text{model})}_{\text{Model fitness}} + \underbrace{\text{npar(model)}\times\log(n)}_{\text{Complexity}}, \tag{3.1} \end{align}\]

where \(\ell(\text{model})\) is the log-likelihood of the model (how well the model fits the data) and \(\text{npar(model)}\) is the number of parameters considered in the model (how complex the model is). In the case of a multiple linear regression model with \(p\) predictors, \(\text{npar(model)}=p+2.\) The AIC replaces the \(\log(n)\) factor by a \(2\) in (3.1) so, compared with the BIC, it penalizes less the more complex models.56 This is one of the reasons why BIC is preferred by some practitioners for performing model comparison57

The BIC and AIC can be computed through the functions BIC and AIC. They take a model as the input.

# Two models with different predictors

mod1 <- lm(medv ~ age + crim, data = Boston)

mod2 <- lm(medv ~ age + crim + lstat, data = Boston)

# BICs

BIC(mod1)

## [1] 3581.893

BIC(mod2) # Smaller -> better

## [1] 3300.841

# AICs

AIC(mod1)

## [1] 3564.987

AIC(mod2) # Smaller -> better

## [1] 3279.708

# Check the summaries

summary(mod1)

##

## Call:

## lm(formula = medv ~ age + crim, data = Boston)

##

## Residuals:

## Min 1Q Median 3Q Max

## -13.940 -4.991 -2.420 2.110 32.033

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 29.80067 0.97078 30.698 < 2e-16 ***

## age -0.08955 0.01378 -6.499 1.95e-10 ***

## crim -0.31182 0.04510 -6.914 1.43e-11 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 8.157 on 503 degrees of freedom

## Multiple R-squared: 0.2166, Adjusted R-squared: 0.2134

## F-statistic: 69.52 on 2 and 503 DF, p-value: < 2.2e-16

summary(mod2)

##

## Call:

## lm(formula = medv ~ age + crim + lstat, data = Boston)

##

## Residuals:

## Min 1Q Median 3Q Max

## -16.133 -3.848 -1.380 1.970 23.644

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 32.82804 0.74774 43.903 < 2e-16 ***

## age 0.03765 0.01225 3.074 0.00223 **

## crim -0.08262 0.03594 -2.299 0.02193 *

## lstat -0.99409 0.05075 -19.587 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 6.147 on 502 degrees of freedom

## Multiple R-squared: 0.5559, Adjusted R-squared: 0.5533

## F-statistic: 209.5 on 3 and 502 DF, p-value: < 2.2e-16Let’s go back to the selection of predictors. If we have \(p\) predictors, a naive procedure would be to check all the possible models that can be constructed with them and then select the best one in terms of BIC/AIC. This exhaustive search is the so-called best subset selection – its application to be seen in Section 4.4. The problem is that there are \(2^p\) possible models to inspect!58 Fortunately, the step function (the base-R descendant of MASS::stepAIC) helps us navigating this ocean of models by implementing stepwise model selection. Stepwise selection will iteratively add predictors that decrease an information criterion and/or remove those that increase it, depending on the mode of stepwise search that is performed.

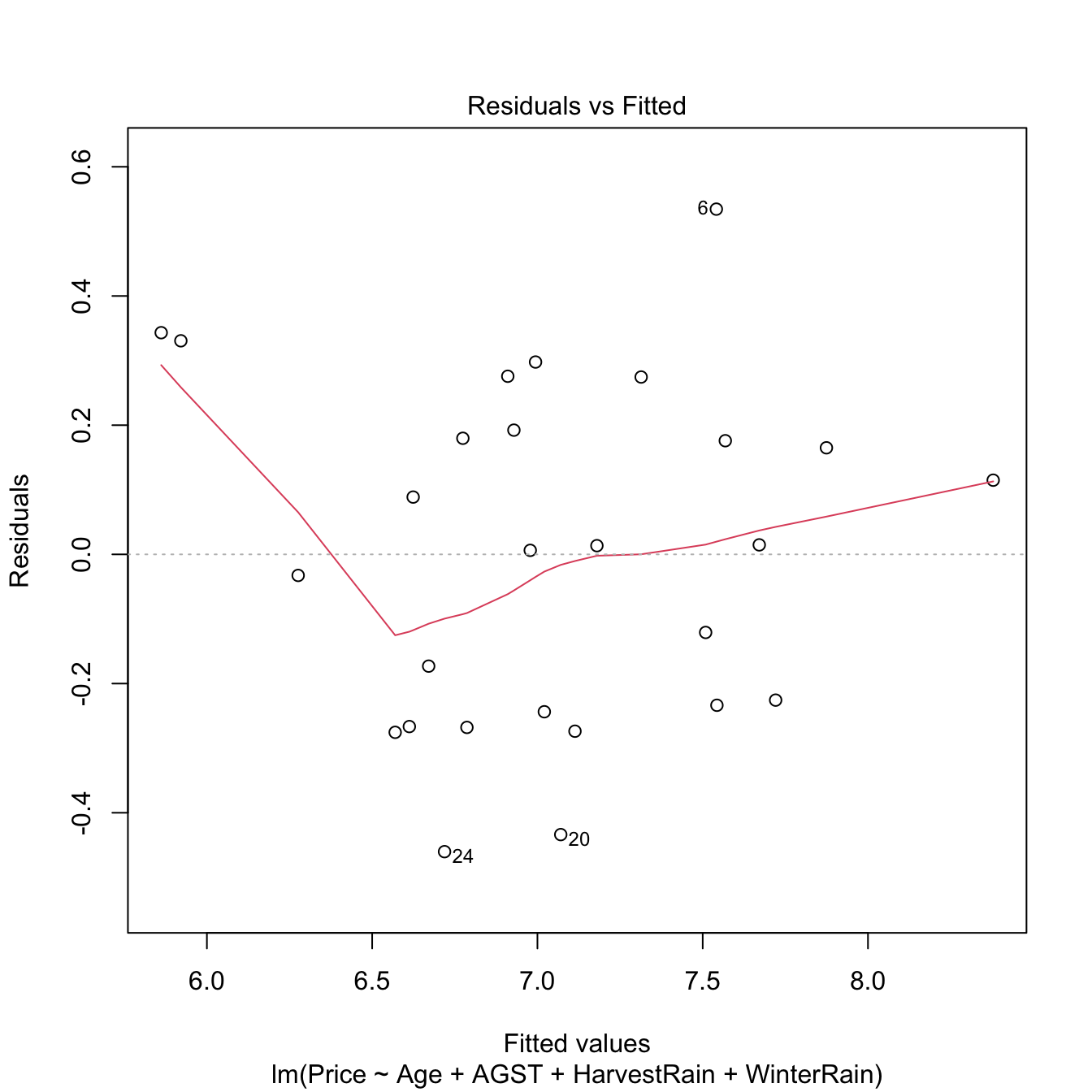

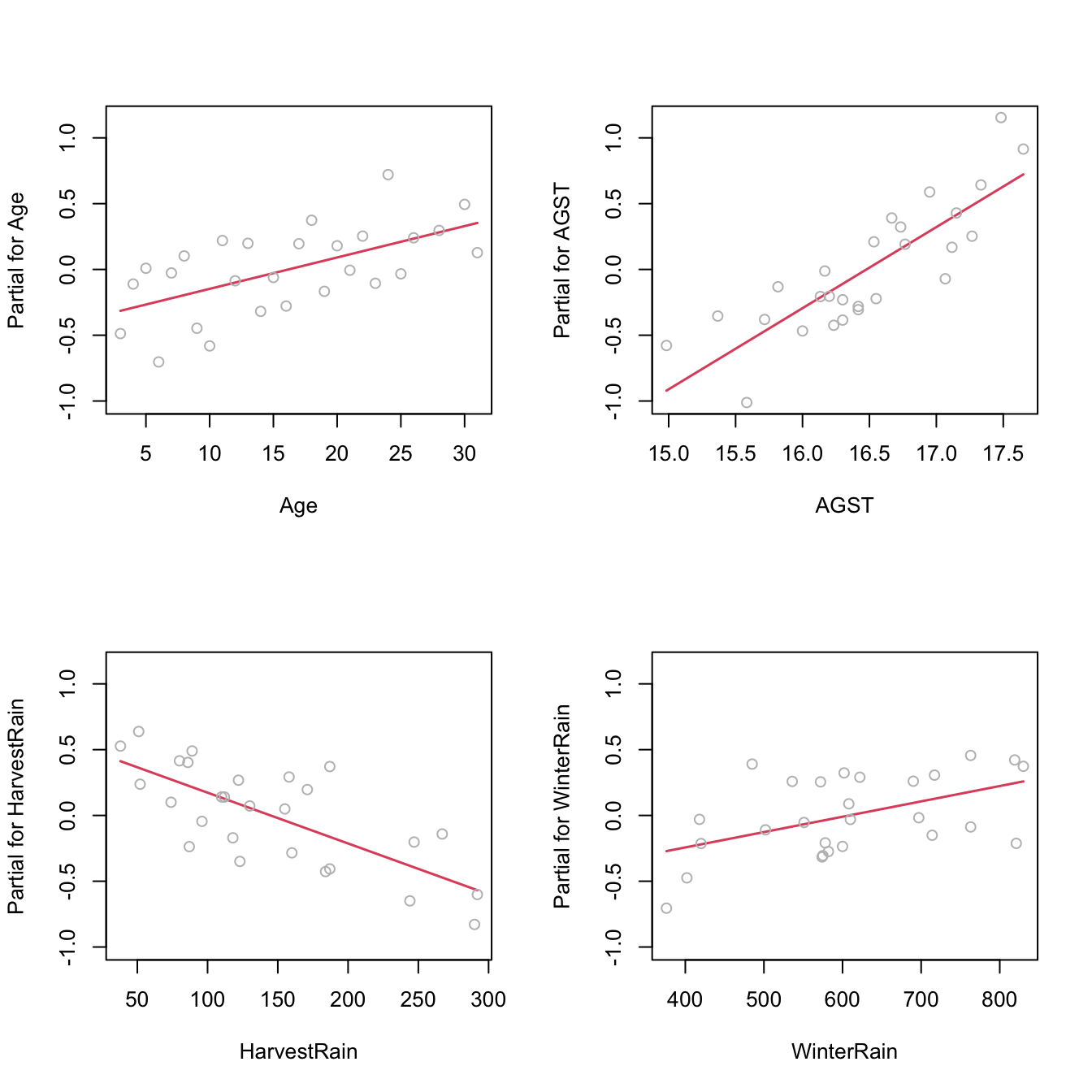

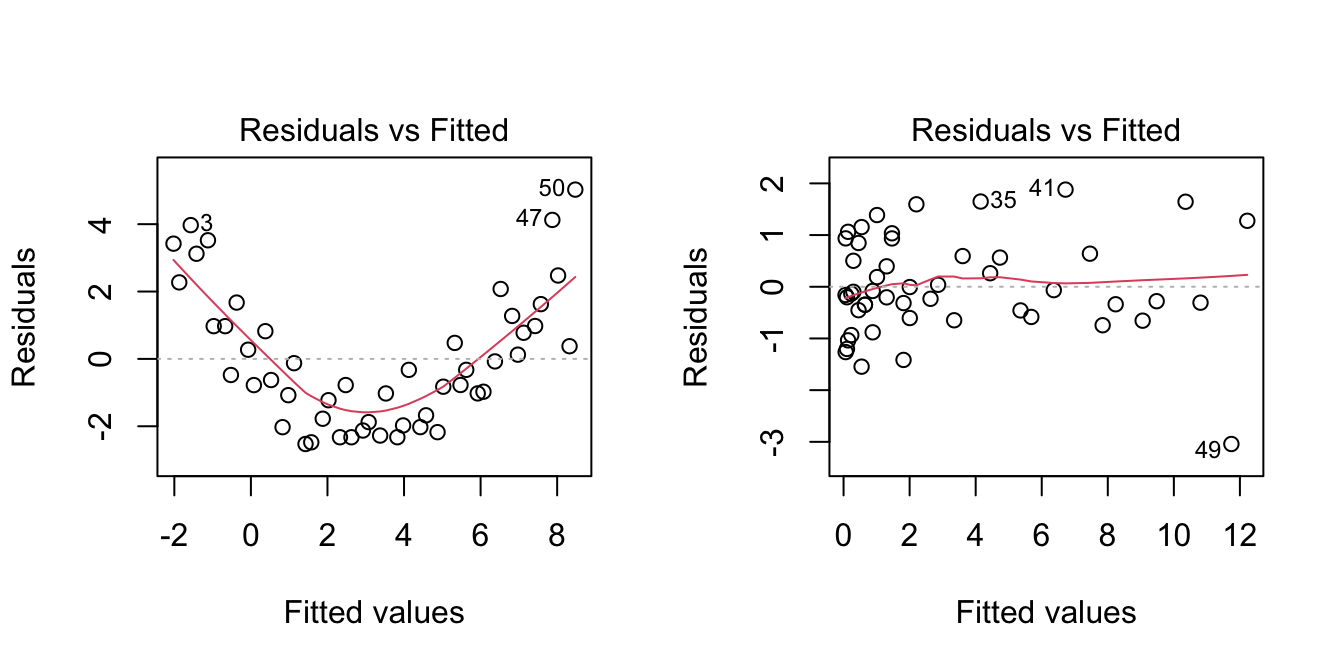

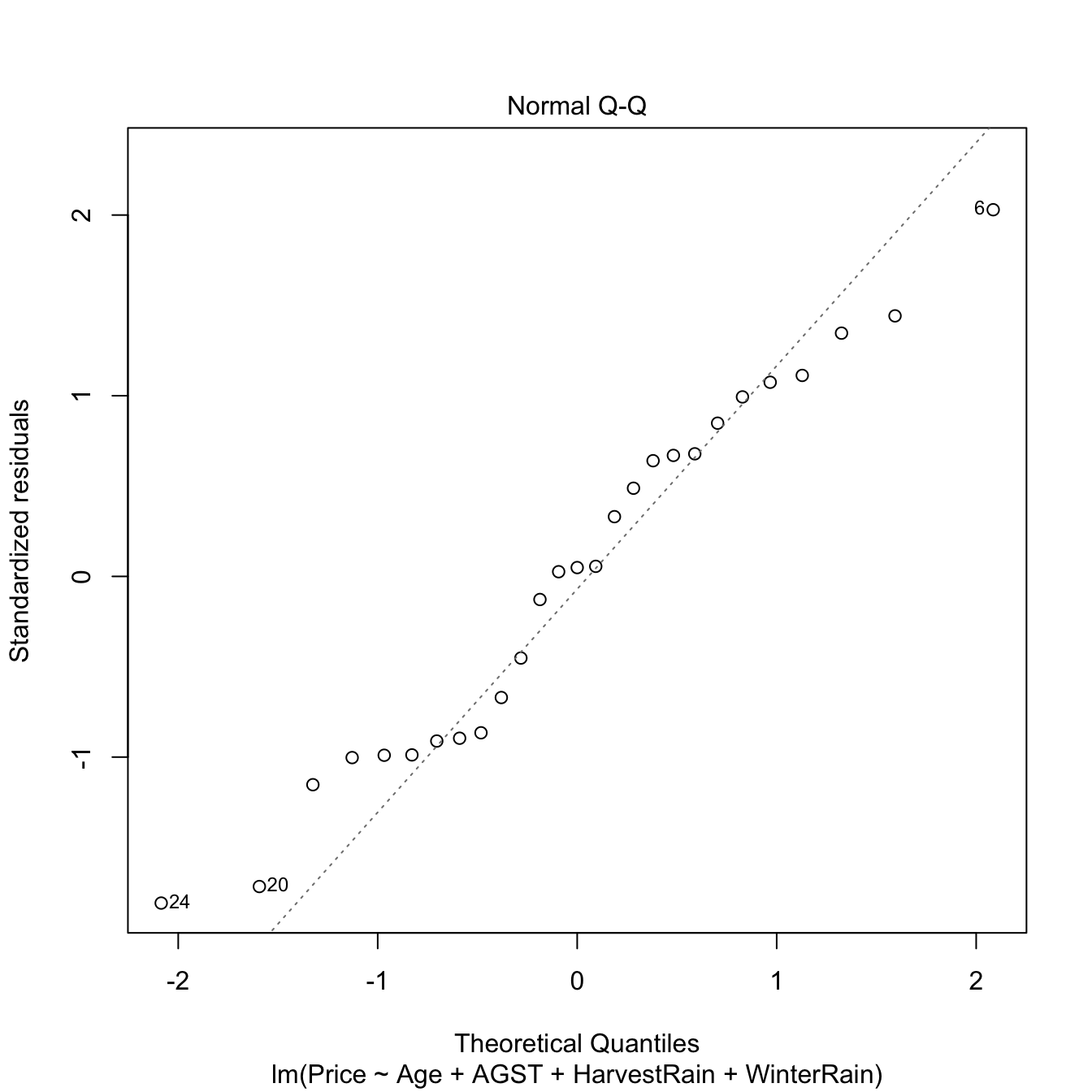

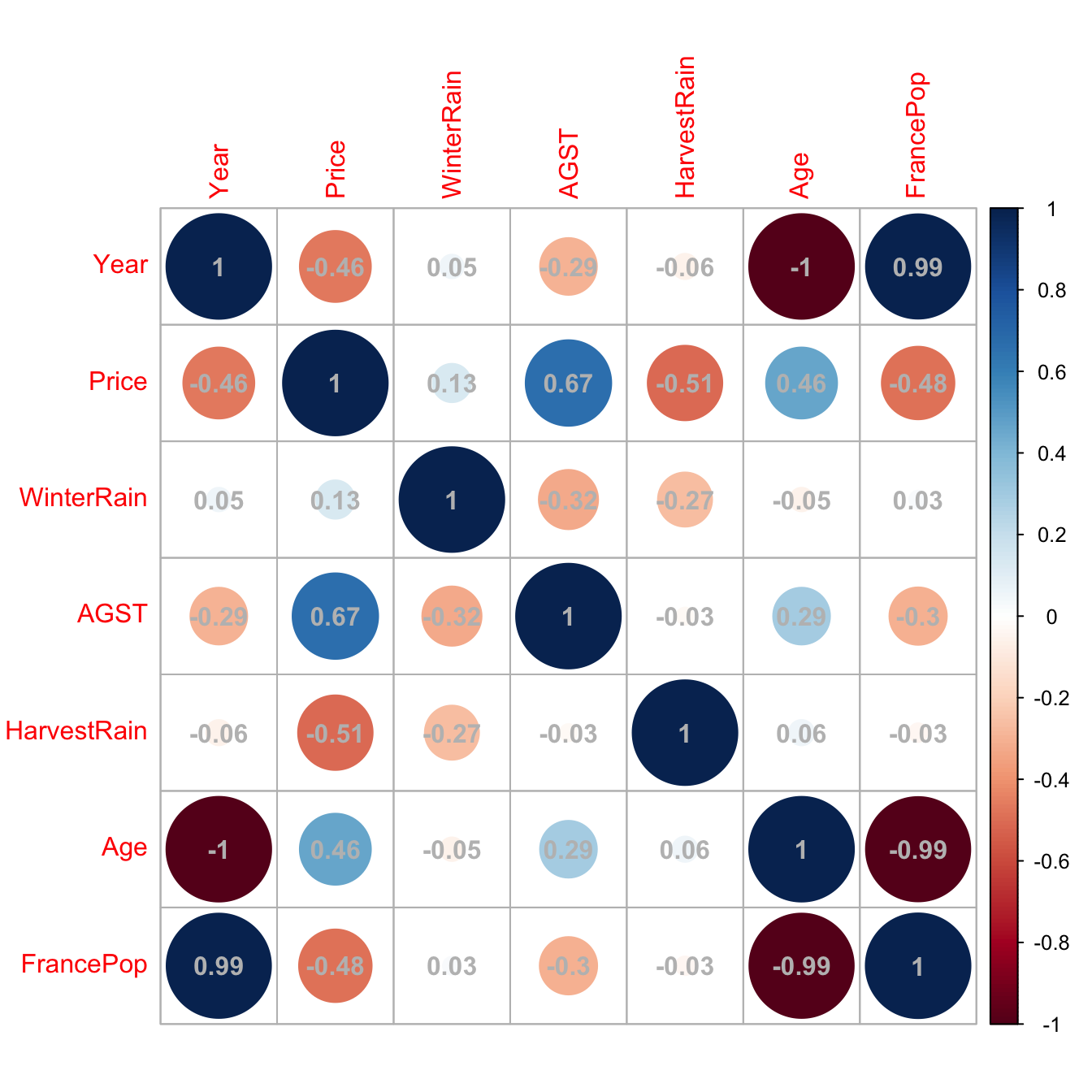

step takes as input an initial model to be improved and has several variants. Let’s see first how it works in its default mode using the already studied wine dataset.

# Load data -- notice that "Year" is also included

wine <- read.csv(file = "wine.csv", header = TRUE)We use as initial model the one featuring all the available predictors. The argument k of step stands for the factor post-multiplying \(\text{npar(model)}\) in (3.1). It defaults to \(2,\) which gives the AIC. If we set it to k = log(n), the function considers the BIC.

# Full model

mod <- lm(Price ~ ., data = wine)

# With AIC

mod_aic <- step(mod, k = 2)

## Start: AIC=-61.07

## Price ~ Year + WinterRain + AGST + HarvestRain + Age + FrancePop

##

##

## Step: AIC=-61.07

## Price ~ Year + WinterRain + AGST + HarvestRain + FrancePop

##

## Df Sum of Sq RSS AIC

## - FrancePop 1 0.0026 1.8058 -63.031

## - Year 1 0.0048 1.8080 -62.998

## <none> 1.8032 -61.070

## - WinterRain 1 0.4585 2.2617 -56.952

## - HarvestRain 1 1.8063 3.6095 -44.331

## - AGST 1 3.3756 5.1788 -34.584

##

## Step: AIC=-63.03

## Price ~ Year + WinterRain + AGST + HarvestRain

##

## Df Sum of Sq RSS AIC

## <none> 1.8058 -63.031

## - WinterRain 1 0.4809 2.2867 -58.656

## - Year 1 0.9089 2.7147 -54.023

## - HarvestRain 1 1.8760 3.6818 -45.796

## - AGST 1 3.4428 5.2486 -36.222

# The result is an lm object

summary(mod_aic)

##

## Call:

## lm(formula = Price ~ Year + WinterRain + AGST + HarvestRain,

## data = wine)

##

## Residuals:

## Min 1Q Median 3Q Max

## -0.46024 -0.23862 0.01347 0.18601 0.53443

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 43.6390418 14.6939240 2.970 0.00707 **

## Year -0.0238480 0.0071667 -3.328 0.00305 **

## WinterRain 0.0011667 0.0004820 2.420 0.02421 *

## AGST 0.6163916 0.0951747 6.476 1.63e-06 ***

## HarvestRain -0.0038606 0.0008075 -4.781 8.97e-05 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 0.2865 on 22 degrees of freedom

## Multiple R-squared: 0.8275, Adjusted R-squared: 0.7962

## F-statistic: 26.39 on 4 and 22 DF, p-value: 4.057e-08

# With BIC

mod_bic <- step(mod, k = log(nrow(wine)))

## Start: AIC=-53.29

## Price ~ Year + WinterRain + AGST + HarvestRain + Age + FrancePop

##

##

## Step: AIC=-53.29

## Price ~ Year + WinterRain + AGST + HarvestRain + FrancePop

##

## Df Sum of Sq RSS AIC

## - FrancePop 1 0.0026 1.8058 -56.551

## - Year 1 0.0048 1.8080 -56.519

## <none> 1.8032 -53.295

## - WinterRain 1 0.4585 2.2617 -50.473

## - HarvestRain 1 1.8063 3.6095 -37.852

## - AGST 1 3.3756 5.1788 -28.105

##

## Step: AIC=-56.55

## Price ~ Year + WinterRain + AGST + HarvestRain

##

## Df Sum of Sq RSS AIC

## <none> 1.8058 -56.551

## - WinterRain 1 0.4809 2.2867 -53.473

## - Year 1 0.9089 2.7147 -48.840

## - HarvestRain 1 1.8760 3.6818 -40.612

## - AGST 1 3.4428 5.2486 -31.039An explanation of what step did for mod_bic:

- At each step,

stepdisplayed information about the current value of the information criterion. For example, the BIC at the first step wasStep: AIC=-53.29and then it improved toStep: AIC=-56.55in the second step.59 - The next model to move on was decided by exploring the information criteria of the different models resulting from adding or removing a predictor (depending on the

directionargument, explained later). For example, in the first step the model arising from removing60FrancePophad a BIC equal to-56.551. - The stepwise regression proceeded then by removing

FrancePop, as it gave the lowest BIC. When repeating the previous exploration, it was found that removing<none>predictors was the best possible action.

The selected models mod_bic and mod_aic are equivalent to the mod_wine2 we selected in Section 2.7.3 as the best model. This is a simple illustration that the model selected by step is often a good starting point for further additions or deletions of predictors.

The direction argument of step controls the mode of the stepwise model search:

-

"backward": removes predictors sequentially from the given model. It produces a sequence of models of decreasing complexity until attaining the optimal one. -

"forward": adds predictors sequentially to the given model, using the available ones in thedataargument oflm. It produces a sequence of models of increasing complexity until attaining the optimal one. -

"both"(default): a forward-backward search that, at each step, decides whether to include or exclude a predictor. Differently from the previous modes, a predictor that was excluded/included previously can be later included/excluded.

The next chunk of code clearly explains how to exploit the direction argument, and other options of step, with a modified version of the wine dataset. An important warning is that in order to use direction = "forward" or direction = "both", scope needs to be properly defined. The practical advice to model selection is to run61 several of these three search modes and retain the model with minimum BIC/AIC, being specially careful with the scope argument.

# Add an irrelevant predictor to the wine dataset

set.seed(123456)

wine_noise <- wine

n <- nrow(wine_noise)

wine_noise$noisePredictor <- rnorm(n)

# Backward selection: removes predictors sequentially from the given model

# Starting from the model with all the predictors

mod_all <- lm(formula = Price ~ ., data = wine_noise)

step(mod_all, direction = "backward", k = log(n))

## Start: AIC=-50.13

## Price ~ Year + WinterRain + AGST + HarvestRain + Age + FrancePop +

## noisePredictor

##

##

## Step: AIC=-50.13

## Price ~ Year + WinterRain + AGST + HarvestRain + FrancePop +

## noisePredictor

##

## Df Sum of Sq RSS AIC

## - FrancePop 1 0.0036 1.7977 -53.376

## - Year 1 0.0038 1.7979 -53.374

## - noisePredictor 1 0.0090 1.8032 -53.295

## <none> 1.7941 -50.135

## - WinterRain 1 0.4598 2.2539 -47.271

## - HarvestRain 1 1.7666 3.5607 -34.923

## - AGST 1 3.3658 5.1599 -24.908

##

## Step: AIC=-53.38

## Price ~ Year + WinterRain + AGST + HarvestRain + noisePredictor

##

## Df Sum of Sq RSS AIC

## - noisePredictor 1 0.0081 1.8058 -56.551

## <none> 1.7977 -53.376

## - WinterRain 1 0.4771 2.2748 -50.317

## - Year 1 0.9162 2.7139 -45.552

## - HarvestRain 1 1.8449 3.6426 -37.606

## - AGST 1 3.4234 5.2212 -27.885

##

## Step: AIC=-56.55

## Price ~ Year + WinterRain + AGST + HarvestRain

##

## Df Sum of Sq RSS AIC

## <none> 1.8058 -56.551

## - WinterRain 1 0.4809 2.2867 -53.473

## - Year 1 0.9089 2.7147 -48.840

## - HarvestRain 1 1.8760 3.6818 -40.612

## - AGST 1 3.4428 5.2486 -31.039

##

## Call:

## lm(formula = Price ~ Year + WinterRain + AGST + HarvestRain,

## data = wine_noise)

##

## Coefficients:

## (Intercept) Year WinterRain AGST HarvestRain

## 43.639042 -0.023848 0.001167 0.616392 -0.003861

# Starting from an intermediate model

mod_inter <- lm(formula = Price ~ noisePredictor + Year + AGST,

data = wine_noise)

step(mod_inter, direction = "backward", k = log(n))

## Start: AIC=-32.38

## Price ~ noisePredictor + Year + AGST

##

## Df Sum of Sq RSS AIC

## - noisePredictor 1 0.0146 5.0082 -35.601

## <none> 4.9936 -32.384

## - Year 1 0.7522 5.7459 -31.891

## - AGST 1 3.2211 8.2147 -22.240

##

## Step: AIC=-35.6

## Price ~ Year + AGST

##

## Df Sum of Sq RSS AIC

## <none> 5.0082 -35.601

## - Year 1 0.7966 5.8049 -34.911

## - AGST 1 3.2426 8.2509 -25.417

##

## Call:

## lm(formula = Price ~ Year + AGST, data = wine_noise)

##

## Coefficients:

## (Intercept) Year AGST

## 41.49441 -0.02221 0.56067

# Recall that other predictors not included in mod_inter are not explored

# during the search (so the relevant predictor HarvestRain is not added)

# Forward selection: adds predictors sequentially from the given model

# Starting from the model with no predictors, only intercept (denoted as ~ 1)

mod_zero <- lm(formula = Price ~ 1, data = wine_noise)

step(mod_zero, direction = "forward",

scope = list(lower = ~ 1, upper = terms(Price ~ ., data = wine_noise)),

k = log(n))

## Start: AIC=-22.28

## Price ~ 1

##

## Df Sum of Sq RSS AIC

## + AGST 1 4.6655 5.8049 -34.911

## + HarvestRain 1 2.6933 7.7770 -27.014

## + FrancePop 1 2.4231 8.0472 -26.092

## + Age 1 2.2195 8.2509 -25.417

## + Year 1 2.2195 8.2509 -25.417

## <none> 10.4703 -22.281

## + WinterRain 1 0.1905 10.2798 -19.481

## + noisePredictor 1 0.1761 10.2942 -19.443

##

## Step: AIC=-34.91

## Price ~ AGST

##

## Df Sum of Sq RSS AIC

## + HarvestRain 1 2.50659 3.2983 -46.878

## + WinterRain 1 1.42392 4.3809 -39.214

## + FrancePop 1 0.90263 4.9022 -36.178

## + Age 1 0.79662 5.0082 -35.601

## + Year 1 0.79662 5.0082 -35.601

## <none> 5.8049 -34.911

## + noisePredictor 1 0.05900 5.7459 -31.891

##

## Step: AIC=-46.88

## Price ~ AGST + HarvestRain

##

## Df Sum of Sq RSS AIC

## + FrancePop 1 1.03572 2.2625 -53.759

## + Age 1 1.01159 2.2867 -53.473

## + Year 1 1.01159 2.2867 -53.473

## + WinterRain 1 0.58356 2.7147 -48.840

## <none> 3.2983 -46.878

## + noisePredictor 1 0.06084 3.2374 -44.085

##

## Step: AIC=-53.76

## Price ~ AGST + HarvestRain + FrancePop

##

## Df Sum of Sq RSS AIC

## + WinterRain 1 0.45456 1.8080 -56.519

## <none> 2.2625 -53.759

## + noisePredictor 1 0.00829 2.2542 -50.562

## + Year 1 0.00085 2.2617 -50.473

## + Age 1 0.00085 2.2617 -50.473

##

## Step: AIC=-56.52

## Price ~ AGST + HarvestRain + FrancePop + WinterRain

##

## Df Sum of Sq RSS AIC

## <none> 1.8080 -56.519

## + noisePredictor 1 0.0100635 1.7979 -53.374

## + Age 1 0.0048039 1.8032 -53.295

## + Year 1 0.0048039 1.8032 -53.295

##

## Call:

## lm(formula = Price ~ AGST + HarvestRain + FrancePop + WinterRain,

## data = wine_noise)

##

## Coefficients:

## (Intercept) AGST HarvestRain FrancePop WinterRain

## -5.945e-01 6.127e-01 -3.804e-03 -5.189e-05 1.136e-03

# It is very important to set the scope argument adequately when doing forward

# search! In the scope you have to define the "minimum" (lower) and "maximum"

# (upper) formulas (or fitted models) that delimit the explorable set. If not

# provided, the maximum is taken as the formula of the starting model (in this

# case mod_zero) and step will not do any search. Using terms(Price ~ ., data

# = wine_noise) materializes "." against the column names of wine_noise now,

# which avoids having to fit a throwaway "full" model only to define the scope.

# Starting from an intermediate model

step(mod_inter, direction = "forward",

scope = list(lower = ~ 1, upper = terms(Price ~ ., data = wine_noise)),

k = log(n))

## Start: AIC=-32.38

## Price ~ noisePredictor + Year + AGST

##

## Df Sum of Sq RSS AIC

## + HarvestRain 1 2.71878 2.2748 -50.317

## + WinterRain 1 1.35102 3.6426 -37.606

## <none> 4.9936 -32.384

## + FrancePop 1 0.24004 4.7536 -30.418

##

## Step: AIC=-50.32

## Price ~ noisePredictor + Year + AGST + HarvestRain

##

## Df Sum of Sq RSS AIC

## + WinterRain 1 0.47710 1.7977 -53.376

## <none> 2.2748 -50.317

## + FrancePop 1 0.02094 2.2539 -47.271

##

## Step: AIC=-53.38

## Price ~ noisePredictor + Year + AGST + HarvestRain + WinterRain

##

## Df Sum of Sq RSS AIC

## <none> 1.7977 -53.376

## + FrancePop 1 0.0036037 1.7941 -50.135

##

## Call:

## lm(formula = Price ~ noisePredictor + Year + AGST + HarvestRain +

## WinterRain, data = wine_noise)

##

## Coefficients:

## (Intercept) noisePredictor Year AGST HarvestRain WinterRain

## 44.096639 -0.019617 -0.024126 0.620522 -0.003840 0.001211

# Recall that predictors included in mod_inter are not dropped during the

# search (so the irrelevant noisePredictor is kept)

# Both selection: useful if starting from an intermediate model

# Removes the problems associated with "backward" and "forward" searches done

# from intermediate models

step(mod_inter, direction = "both",

scope = list(lower = ~ 1, upper = terms(Price ~ ., data = wine_noise)),

k = log(n))

## Start: AIC=-32.38

## Price ~ noisePredictor + Year + AGST

##

## Df Sum of Sq RSS AIC

## + HarvestRain 1 2.7188 2.2748 -50.317

## + WinterRain 1 1.3510 3.6426 -37.606

## - noisePredictor 1 0.0146 5.0082 -35.601

## <none> 4.9936 -32.384

## - Year 1 0.7522 5.7459 -31.891

## + FrancePop 1 0.2400 4.7536 -30.418

## - AGST 1 3.2211 8.2147 -22.240

##

## Step: AIC=-50.32

## Price ~ noisePredictor + Year + AGST + HarvestRain

##

## Df Sum of Sq RSS AIC

## - noisePredictor 1 0.01182 2.2867 -53.473

## + WinterRain 1 0.47710 1.7977 -53.376

## <none> 2.2748 -50.317

## + FrancePop 1 0.02094 2.2539 -47.271

## - Year 1 0.96258 3.2374 -44.085

## - HarvestRain 1 2.71878 4.9936 -32.384

## - AGST 1 2.94647 5.2213 -31.180

##

## Step: AIC=-53.47

## Price ~ Year + AGST + HarvestRain

##

## Df Sum of Sq RSS AIC

## + WinterRain 1 0.48087 1.8058 -56.551

## <none> 2.2867 -53.473

## + FrancePop 1 0.02497 2.2617 -50.473

## + noisePredictor 1 0.01182 2.2748 -50.317

## - Year 1 1.01159 3.2983 -46.878

## - HarvestRain 1 2.72157 5.0082 -35.601

## - AGST 1 2.96500 5.2517 -34.319

##

## Step: AIC=-56.55

## Price ~ Year + AGST + HarvestRain + WinterRain

##

## Df Sum of Sq RSS AIC

## <none> 1.8058 -56.551

## - WinterRain 1 0.4809 2.2867 -53.473

## + noisePredictor 1 0.0081 1.7977 -53.376

## + FrancePop 1 0.0026 1.8032 -53.295

## - Year 1 0.9089 2.7147 -48.840

## - HarvestRain 1 1.8760 3.6818 -40.612

## - AGST 1 3.4428 5.2486 -31.039

##

## Call:

## lm(formula = Price ~ Year + AGST + HarvestRain + WinterRain,

## data = wine_noise)

##

## Coefficients:

## (Intercept) Year AGST HarvestRain WinterRain

## 43.639042 -0.023848 0.616392 -0.003861 0.001167

# It is very important as well to correctly define the scope, because "both"

# resorts to "forward" (as well as to "backward")

# Using the defaults from the full model essentially does backward selection,

# but allowing predictors that were removed to enter again at later steps

step(mod_all, direction = "both", k = log(n))

## Start: AIC=-50.13

## Price ~ Year + WinterRain + AGST + HarvestRain + Age + FrancePop +

## noisePredictor

##

##

## Step: AIC=-50.13

## Price ~ Year + WinterRain + AGST + HarvestRain + FrancePop +

## noisePredictor

##

## Df Sum of Sq RSS AIC

## - FrancePop 1 0.0036 1.7977 -53.376

## - Year 1 0.0038 1.7979 -53.374

## - noisePredictor 1 0.0090 1.8032 -53.295

## <none> 1.7941 -50.135

## - WinterRain 1 0.4598 2.2539 -47.271

## - HarvestRain 1 1.7666 3.5607 -34.923

## - AGST 1 3.3658 5.1599 -24.908

##

## Step: AIC=-53.38

## Price ~ Year + WinterRain + AGST + HarvestRain + noisePredictor

##

## Df Sum of Sq RSS AIC

## - noisePredictor 1 0.0081 1.8058 -56.551

## <none> 1.7977 -53.376

## - WinterRain 1 0.4771 2.2748 -50.317

## + FrancePop 1 0.0036 1.7941 -50.135

## - Year 1 0.9162 2.7139 -45.552

## - HarvestRain 1 1.8449 3.6426 -37.606

## - AGST 1 3.4234 5.2212 -27.885

##

## Step: AIC=-56.55

## Price ~ Year + WinterRain + AGST + HarvestRain

##

## Df Sum of Sq RSS AIC

## <none> 1.8058 -56.551

## - WinterRain 1 0.4809 2.2867 -53.473

## + noisePredictor 1 0.0081 1.7977 -53.376

## + FrancePop 1 0.0026 1.8032 -53.295

## - Year 1 0.9089 2.7147 -48.840

## - HarvestRain 1 1.8760 3.6818 -40.612

## - AGST 1 3.4428 5.2486 -31.039

##

## Call:

## lm(formula = Price ~ Year + WinterRain + AGST + HarvestRain,

## data = wine_noise)

##

## Coefficients:

## (Intercept) Year WinterRain AGST HarvestRain

## 43.639042 -0.023848 0.001167 0.616392 -0.003861

# Omit lengthy outputs

step(mod_all, direction = "both", trace = 0,

scope = list(lower = ~ 1, upper = terms(Price ~ ., data = wine_noise)),

k = log(n))

##

## Call:

## lm(formula = Price ~ Year + WinterRain + AGST + HarvestRain,

## data = wine_noise)

##

## Coefficients:

## (Intercept) Year WinterRain AGST HarvestRain

## 43.639042 -0.023848 0.001167 0.616392 -0.003861Exercise 3.1 Run the previous stepwise selections for the Boston dataset, with the aim of clearly understanding the different search directions. Specifically:

- Do a

"forward"stepwise fit starting frommedv ~ 1. - Do a

"forward"stepwise fit starting frommedv ~ crim + lstat + age. - Do a

"both"stepwise fit starting frommedv ~ crim + lstat + age. - Do a

"both"stepwise fit starting frommedv ~ .. - Do a

"backward"stepwise fit starting frommedv ~ ..

We conclude with a couple of quirks of step to be aware of.

step assumes that no NA’s (missing values) are present in the data. It is advised to remove the missing values in the data before. Their presence might lead to errors. To do so, employ data = na.omit(dataset) in the call to lm (if your dataset is dataset). Also, see Appendix D for possible alternatives to deal with missing data.

step and friends (add1 and

drop1) compute a slightly different version of the

BIC/AIC than the BIC/AIC functions.

Precisely, the BIC/AIC they report come from the extractAIC

function, which differs in an additive constant from

the output of BIC/AIC. This is not relevant

for model comparison, since shifting by a common constant the BIC/AIC

does not change the lower-to-higher BIC/AIC ordering of models. However,

it is important to be aware of this fact in order to do not

compare directly the output of step with the one of

BIC/AIC. The additive constant (included in

BIC/AIC but not in extractAIC) is

\(n(\log(2 \pi) + 1) + \log(n)\) for

the BIC and \(n(\log(2 \pi) + 1) + 2\)

for the AIC. The discrepancy arises from simplifying the computation of

the BIC/AIC for linear models and from counting \(\hat\sigma^2\) as an estimated

parameter.

The following chunk of code illustrates the relation of the AIC reported in step, the output of extractAIC, and the BIC/AIC reported by BIC/AIC.

# Same BICs, different scale

n <- nobs(mod_bic)

extractAIC(mod_bic, k = log(n))[2]

## [1] -56.55135

BIC(mod_bic)

## [1] 23.36717

# Observe that step(mod, k = log(nrow(wine))) returned as final BIC

# the one given by extractAIC(), not by BIC()! But both are equivalent, they

# just differ in a constant shift

# Same AICs, different scale

extractAIC(mod_aic, k = 2)[2]

## [1] -63.03053

AIC(mod_aic)

## [1] 15.59215

# The additive constant: BIC() includes it but extractAIC() does not

BIC(mod_bic) - extractAIC(mod_bic, k = log(n))[2]

## [1] 79.91852

n * (log(2 * pi) + 1) + log(n)

## [1] 79.91852

# Same for the AIC

AIC(mod_aic) - extractAIC(mod_aic)[2]

## [1] 78.62268

n * (log(2 * pi) + 1) + 2

## [1] 78.622683.2.1 Case study application

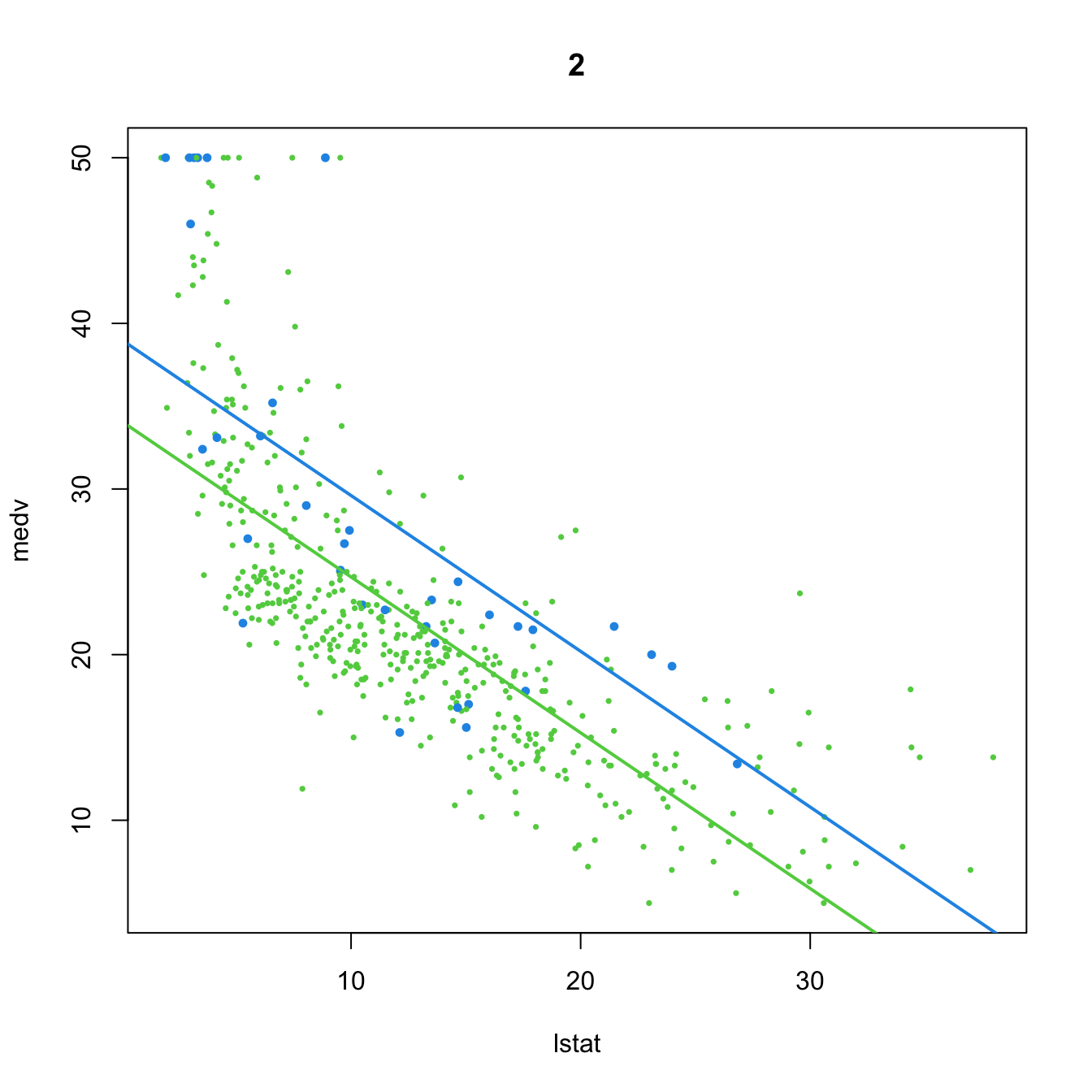

We want to build a linear model for predicting and explaining medv. There are a good number of predictors and some of them might be of little use for predicting medv. However, there is no clear intuition of which predictors will yield better explanations of medv with the information at hand. Therefore, we can start by doing a linear model on all the predictors:

mod_house <- lm(medv ~ ., data = Boston)

summary(mod_house)

##

## Call:

## lm(formula = medv ~ ., data = Boston)

##

## Residuals:

## Min 1Q Median 3Q Max

## -15.595 -2.730 -0.518 1.777 26.199

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 3.646e+01 5.103e+00 7.144 3.28e-12 ***

## crim -1.080e-01 3.286e-02 -3.287 0.001087 **

## zn 4.642e-02 1.373e-02 3.382 0.000778 ***

## indus 2.056e-02 6.150e-02 0.334 0.738288

## chas 2.687e+00 8.616e-01 3.118 0.001925 **

## nox -1.777e+01 3.820e+00 -4.651 4.25e-06 ***

## rm 3.810e+00 4.179e-01 9.116 < 2e-16 ***

## age 6.922e-04 1.321e-02 0.052 0.958229

## dis -1.476e+00 1.995e-01 -7.398 6.01e-13 ***

## rad 3.060e-01 6.635e-02 4.613 5.07e-06 ***

## tax -1.233e-02 3.760e-03 -3.280 0.001112 **

## ptratio -9.527e-01 1.308e-01 -7.283 1.31e-12 ***

## black 9.312e-03 2.686e-03 3.467 0.000573 ***

## lstat -5.248e-01 5.072e-02 -10.347 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 4.745 on 492 degrees of freedom

## Multiple R-squared: 0.7406, Adjusted R-squared: 0.7338

## F-statistic: 108.1 on 13 and 492 DF, p-value: < 2.2e-16There are a couple of non-significant variables, but so far the model has an \(R^2=0.74\) and the fitted coefficients are sensible with what would be expected. For example, crim, tax, ptratio, and nox have negative effects on medv, while rm, rad, and chas have positive effects. However, the non-significant coefficients are only adding noise to the prediction and decreasing the overall accuracy of the coefficient estimates.

Let’s polish the previous model a little bit. Instead of manually removing each non-significant variable to reduce the complexity, we employ step for performing a forward-backward search starting from the full model. This gives us a candidate best model.

# Best BIC and AIC models

mod_bic <- step(mod_house, k = log(nrow(Boston)))

## Start: AIC=1648.81

## medv ~ crim + zn + indus + chas + nox + rm + age + dis + rad +

## tax + ptratio + black + lstat

##

## Df Sum of Sq RSS AIC

## - age 1 0.06 11079 1642.6

## - indus 1 2.52 11081 1642.7

## <none> 11079 1648.8

## - chas 1 218.97 11298 1652.5

## - tax 1 242.26 11321 1653.5

## - crim 1 243.22 11322 1653.6

## - zn 1 257.49 11336 1654.2

## - black 1 270.63 11349 1654.8

## - rad 1 479.15 11558 1664.0

## - nox 1 487.16 11566 1664.4

## - ptratio 1 1194.23 12273 1694.4

## - dis 1 1232.41 12311 1696.0

## - rm 1 1871.32 12950 1721.6

## - lstat 1 2410.84 13490 1742.2

##

## Step: AIC=1642.59

## medv ~ crim + zn + indus + chas + nox + rm + dis + rad + tax +

## ptratio + black + lstat

##

## Df Sum of Sq RSS AIC

## - indus 1 2.52 11081 1636.5

## <none> 11079 1642.6

## - chas 1 219.91 11299 1646.3

## - tax 1 242.24 11321 1647.3

## - crim 1 243.20 11322 1647.3

## - zn 1 260.32 11339 1648.1

## - black 1 272.26 11351 1648.7

## - rad 1 481.09 11560 1657.9

## - nox 1 520.87 11600 1659.6

## - ptratio 1 1200.23 12279 1688.4

## - dis 1 1352.26 12431 1694.6

## - rm 1 1959.55 13038 1718.8

## - lstat 1 2718.88 13798 1747.4

##

## Step: AIC=1636.48

## medv ~ crim + zn + chas + nox + rm + dis + rad + tax + ptratio +

## black + lstat

##

## Df Sum of Sq RSS AIC

## <none> 11081 1636.5

## - chas 1 227.21 11309 1640.5

## - crim 1 245.37 11327 1641.3

## - zn 1 257.82 11339 1641.9

## - black 1 270.82 11352 1642.5

## - tax 1 273.62 11355 1642.6

## - rad 1 500.92 11582 1652.6

## - nox 1 541.91 11623 1654.4

## - ptratio 1 1206.45 12288 1682.5

## - dis 1 1448.94 12530 1692.4

## - rm 1 1963.66 13045 1712.8

## - lstat 1 2723.48 13805 1741.5

mod_aic <- step(mod_house, trace = 0, k = 2)

# Comparison: same fits

car::compareCoefs(mod_bic, mod_aic)

## Calls:

## 1: lm(formula = medv ~ crim + zn + chas + nox + rm + dis + rad + tax + ptratio + black + lstat, data = Boston)

## 2: lm(formula = medv ~ crim + zn + chas + nox + rm + dis + rad + tax + ptratio + black + lstat, data = Boston)

##

## Model 1 Model 2

## (Intercept) 36.34 36.34

## SE 5.07 5.07

##

## crim -0.1084 -0.1084

## SE 0.0328 0.0328

##

## zn 0.0458 0.0458

## SE 0.0135 0.0135

##

## chas 2.719 2.719

## SE 0.854 0.854

##

## nox -17.38 -17.38

## SE 3.54 3.54

##

## rm 3.802 3.802

## SE 0.406 0.406

##

## dis -1.493 -1.493

## SE 0.186 0.186

##

## rad 0.2996 0.2996

## SE 0.0634 0.0634

##

## tax -0.01178 -0.01178

## SE 0.00337 0.00337

##

## ptratio -0.947 -0.947

## SE 0.129 0.129

##

## black 0.00929 0.00929

## SE 0.00267 0.00267

##

## lstat -0.5226 -0.5226

## SE 0.0474 0.0474

##

summary(mod_bic)

##

## Call:

## lm(formula = medv ~ crim + zn + chas + nox + rm + dis + rad +

## tax + ptratio + black + lstat, data = Boston)

##

## Residuals:

## Min 1Q Median 3Q Max

## -15.5984 -2.7386 -0.5046 1.7273 26.2373

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 36.341145 5.067492 7.171 2.73e-12 ***

## crim -0.108413 0.032779 -3.307 0.001010 **

## zn 0.045845 0.013523 3.390 0.000754 ***

## chas 2.718716 0.854240 3.183 0.001551 **

## nox -17.376023 3.535243 -4.915 1.21e-06 ***

## rm 3.801579 0.406316 9.356 < 2e-16 ***

## dis -1.492711 0.185731 -8.037 6.84e-15 ***

## rad 0.299608 0.063402 4.726 3.00e-06 ***

## tax -0.011778 0.003372 -3.493 0.000521 ***

## ptratio -0.946525 0.129066 -7.334 9.24e-13 ***

## black 0.009291 0.002674 3.475 0.000557 ***

## lstat -0.522553 0.047424 -11.019 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 4.736 on 494 degrees of freedom

## Multiple R-squared: 0.7406, Adjusted R-squared: 0.7348

## F-statistic: 128.2 on 11 and 494 DF, p-value: < 2.2e-16

# Confidence intervals

confint(mod_bic)

## 2.5 % 97.5 %

## (Intercept) 26.384649126 46.29764088

## crim -0.172817670 -0.04400902

## zn 0.019275889 0.07241397

## chas 1.040324913 4.39710769

## nox -24.321990312 -10.43005655

## rm 3.003258393 4.59989929

## dis -1.857631161 -1.12779176

## rad 0.175037411 0.42417950

## tax -0.018403857 -0.00515209

## ptratio -1.200109823 -0.69293932

## black 0.004037216 0.01454447

## lstat -0.615731781 -0.42937513Note how the \(R^2_\text{Adj}\) has slightly increased with respect to the full model and how all the predictors are significant. Note also that mod_bic and mod_aic are the same.

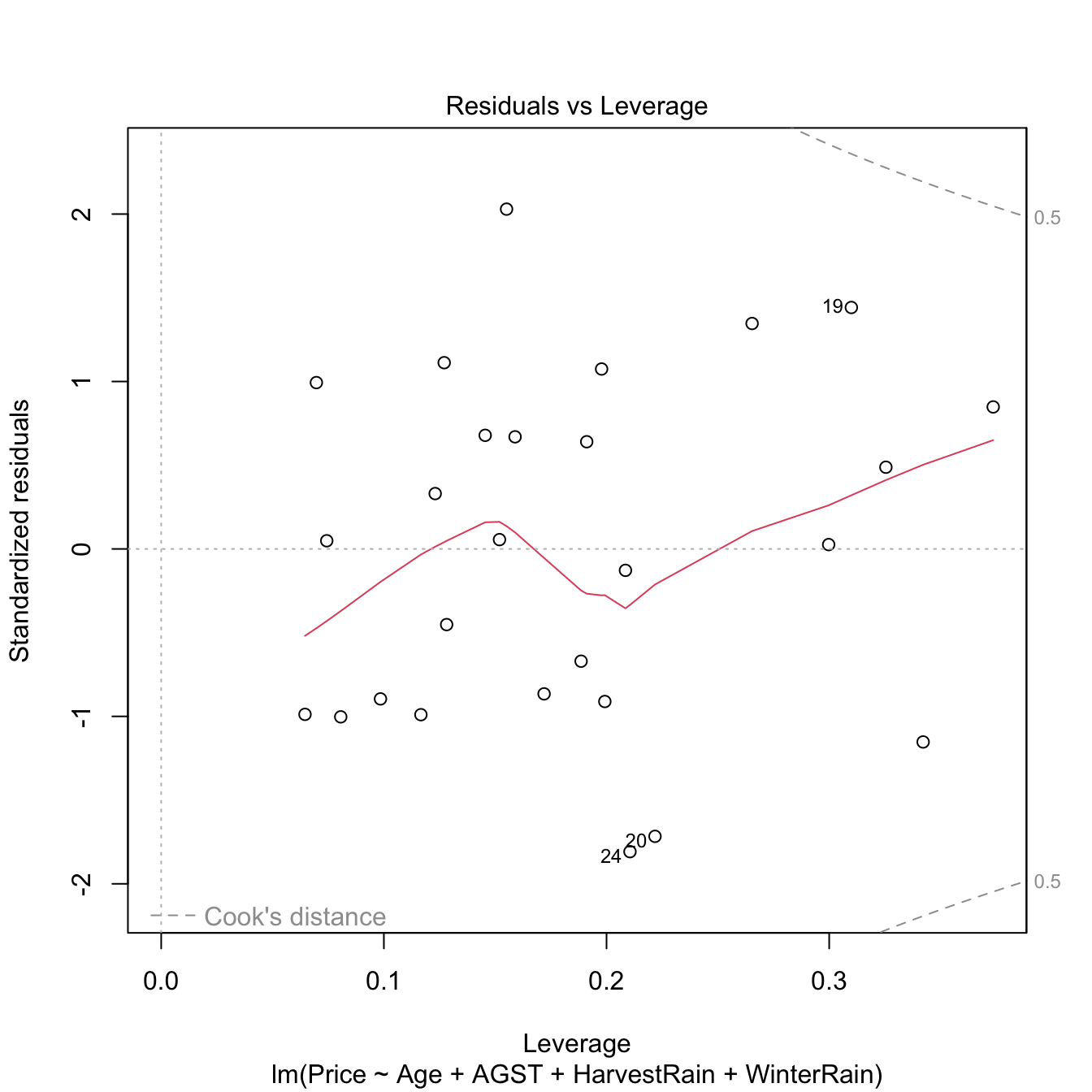

Using mod_bic, we can quantify the influence of the predictor variables on the housing prices (Q1) and we can conclude that, in the final model (Q2) and with significance level \(\alpha=0.05\):

-

zn,chas,rm,rad, andblackhave a significantly positive influence onmedv; -

crim,nox,dis,tax,ptratio, andlstathave a significantly negative influence onmedv.

Exercise 3.2 The functions add1 and drop1 (the base-R counterparts of MASS::addterm / MASS::dropterm) allow adding and removing all individual predictors to a given model, and inform the BICs / AICs of the possible combinations. Check that:

-

mod_biccannot be improved in terms of BIC by removing predictors. Usedrop1(mod_bic, k = log(nobs(mod_bic)))for that. -

mod_biccannot be improved in terms of BIC by adding predictors. Useadd1(mod_bic, scope = terms(medv ~ ., data = Boston), k = log(nobs(mod_bic)))for that.

For the second point, recall that scope must specify the maximal model or formula. However, be careful because if using the formula approach, add1(mod_bic, scope = medv ~ ., k = log(nobs(mod_bic))) will understand that . refers to all the predictors in mod_bic, not in the Boston dataset. Using terms(medv ~ ., data = Boston) materializes the full set of predictors and sidesteps the issue.

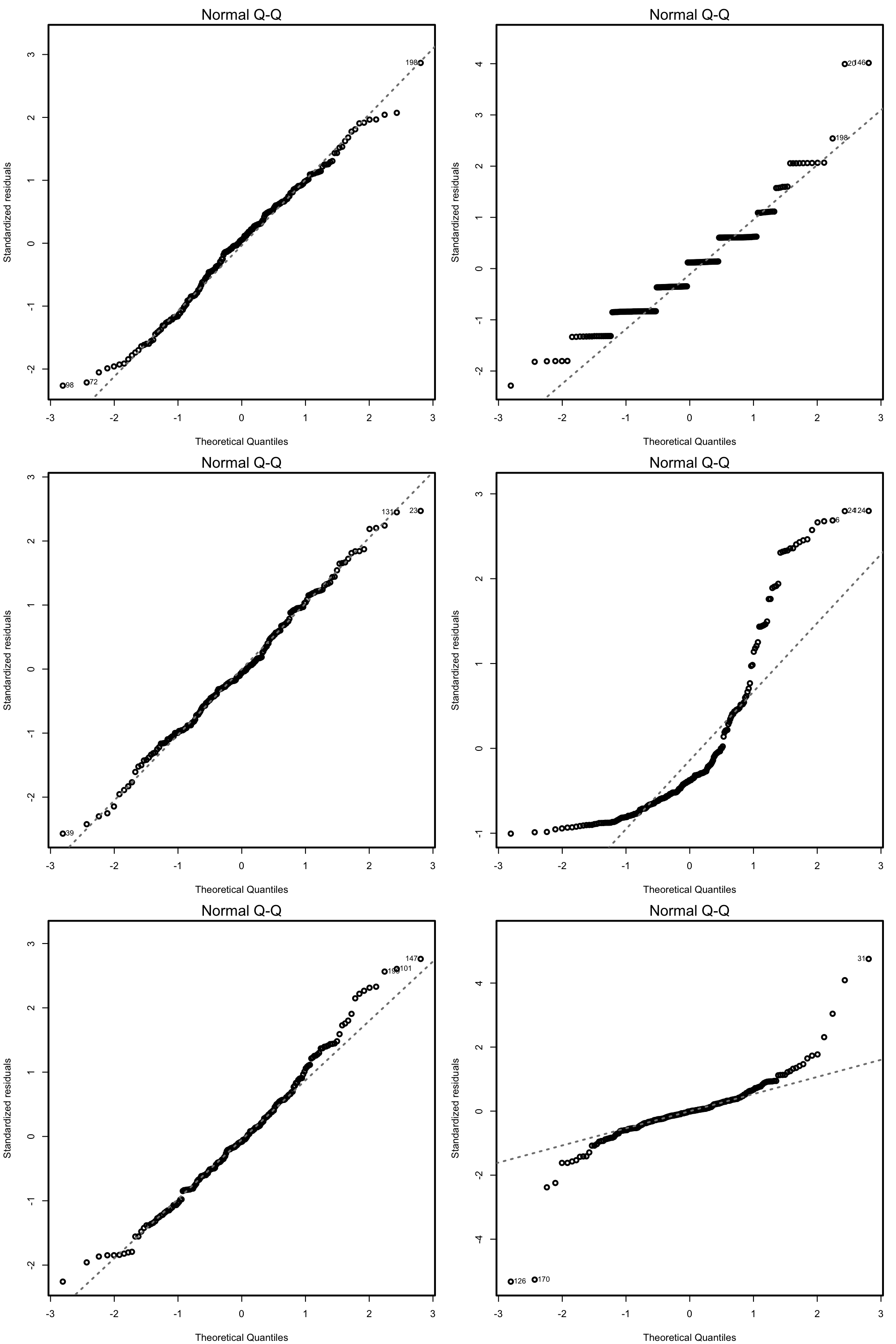

3.2.2 Consistency in model selection

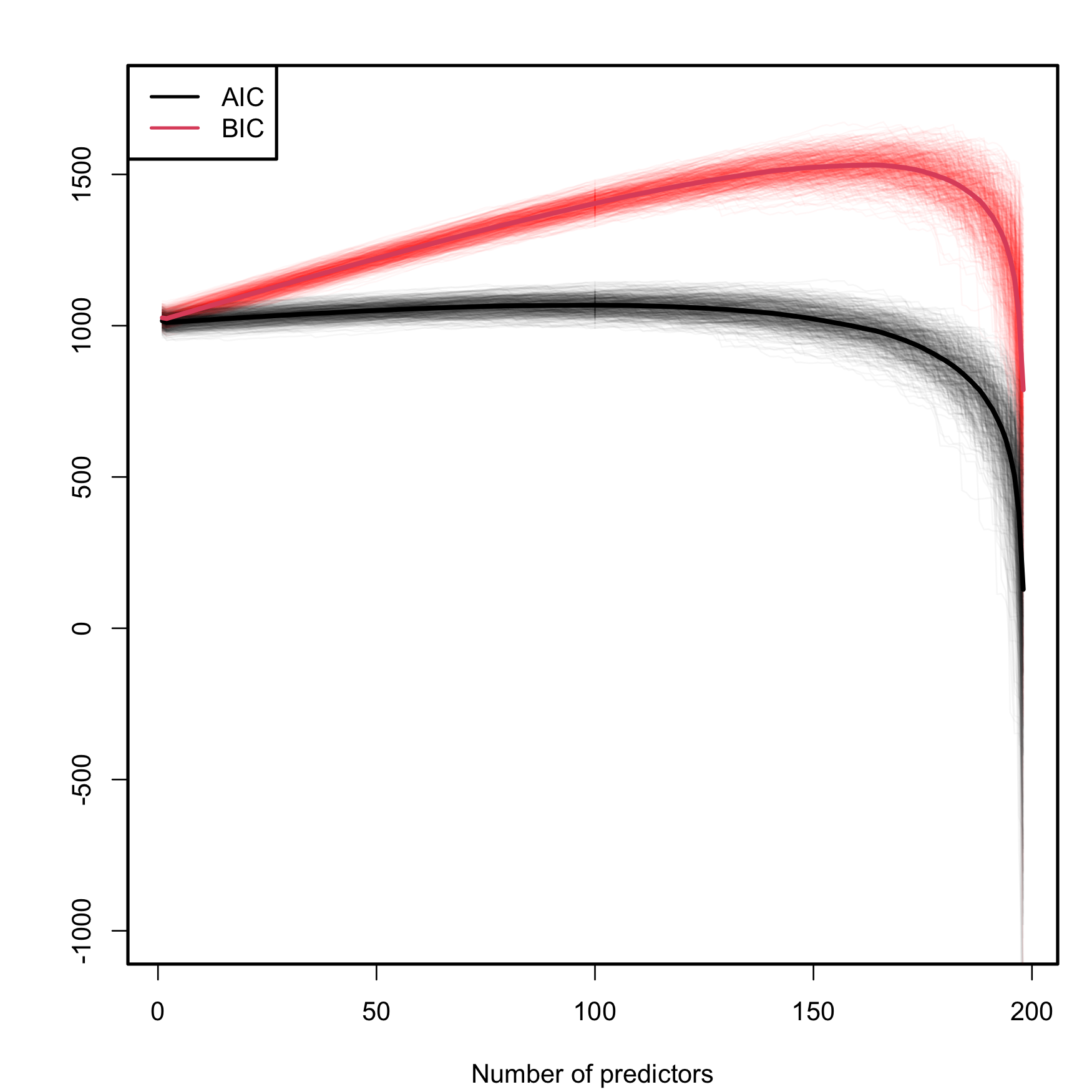

A caveat on the use of the BIC/AIC is that both criteria are constructed assuming that the sample size \(n\) is much larger than the number of parameters in the model (\(p+2\)), that is, assuming that \(n\gg p+2.\) Therefore, they will work reasonably well if \(n\gg p+2\) but, when this is not true, they enter in “overfitting mode” and start favoring unrealistic complex models. An illustration of this phenomenon is shown in Figure 3.3, which is the BIC/AIC version of Figure 2.19 and corresponds to the simulation study of Section 2.6. The BIC/AIC curves tend to have local minima close to \(p=10\) and then increase. But, when \(p+2\) gets close to \(n,\) they quickly drop below their values at \(p=10.\)

In particular, Figure 3.3 gives the following insights:

- The steeper BIC curves are a consequence of the higher penalization that BIC introduces on model complexity with respect to AIC. Therefore, the local minima of the BIC curves are better identified than those of the flatter AIC curves.

- The BIC resists better the overfitting than the AIC. The average BIC curve, \(\overline{\mathrm{BIC}}(p),\) starts giving smaller values than \(\overline{\mathrm{BIC}}(10)\approx 1057.74\) when \(p=197\) (\(\overline{\mathrm{BIC}}(198)\approx 788.30\)). Overfitting appears earlier in the AIC, and \(\overline{\mathrm{AIC}}(10)\approx 1018.16\) is surpassed for \(p\geq152\) (\(\overline{\mathrm{AIC}}(152)\approx 1017.50\); \(\overline{\mathrm{AIC}}(198)\approx 128.64\)).

- The variabilities of both AIC and BIC curves are comparable, with no significant differences between them.

Figure 3.3: Comparison of BIC and AIC on the model (2.26) fitted with data generated by (2.25). The number of predictors \(p\) ranges from \(1\) to \(198,\) with only the first ten predictors being significant. The \(M=500\) curves for each color arise from \(M\) simulated datasets of sample size \(n=200.\) The thicker curves are the mean of each color’s curves.

Another big difference between the AIC and BIC, which is indeed behind the behaviors seen in Figure 3.3, is the consistency of the BIC in performing model selection. In simple terms, “consistency” means that, if enough data is provided, the BIC is guaranteed to identify the true data-generating model among a list of candidate models if the true model is included in the list. Mathematically, it means that, given a collection of models \(M_0,M_1,\ldots,M_m,\) where \(M_0\) is the generating model of a sample of size \(n,\) then \[\begin{align} \mathbb{P}\left[\arg\min_{k=0,\ldots,m}\mathrm{BIC}(\hat{M}_k)=0\right]\to1\quad \text{as}\quad n\to\infty, \tag{3.2} \end{align}\] where \(\hat{M}_k\) represents the \(M_k\) model fitted with a sample of size \(n\) generated from \(M_0.\)62 Note that, despite being a nice theoretical result, its application may be unrealistic in practice, as most likely the true model is nonlinear or not present in the list of candidate models we examine.

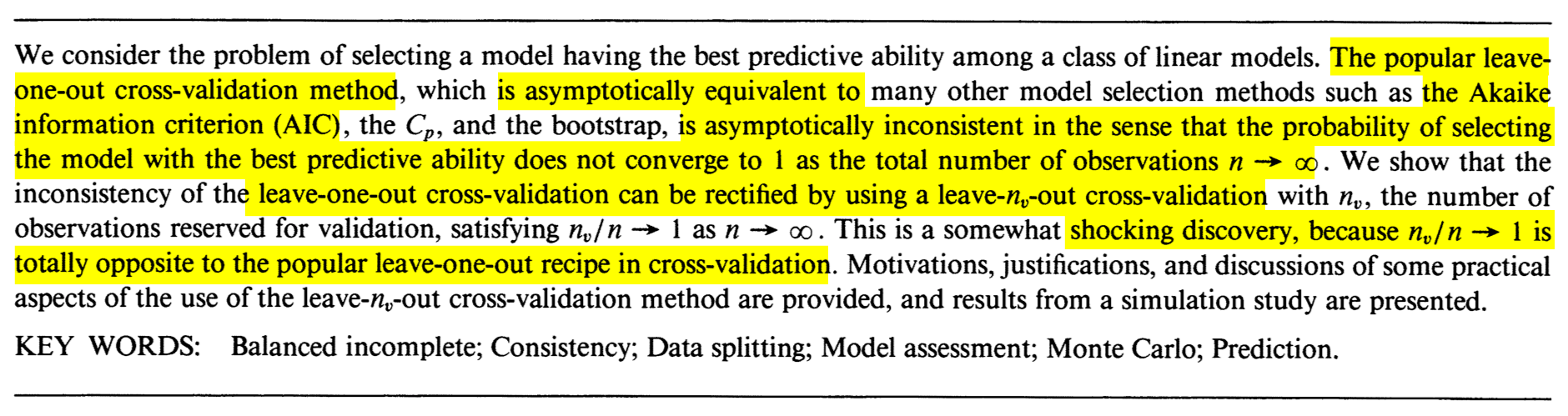

The AIC is inconsistent, in the sense that (3.2) is not true if \(\mathrm{BIC}\) is replaced by \(\mathrm{AIC}.\) Indeed, this result can be seen as a consequence of the asymptotic equivalence of model selection by AIC and leave-one-out cross-validation,63 and the inconsistency of the latter. This is beautifully described in the paper by Shao (1993), whose abstract is given in Figure 3.4. The paper made a shocking discovery in terms of what is the required modification to induce consistency in model selection by cross-validation.

Figure 3.4: The abstract of Jun Shao’s Linear model selection by cross-validation (Shao 1993).

The Leave-One-Out Cross-Validation (LOOCV) error in a fitted linear model is defined as \[\begin{align} \mathrm{LOOCV}:=\frac{1}{n}\sum_{i=1}^n(Y_i-\hat{Y}_{i,-i})^2,\tag{3.3} \end{align}\] where \(\hat{Y}_{i,-i}\) is the prediction of the \(i\)-th observation in a linear model that has been fitted without the \(i\)-th datum \((X_{i1},\ldots,X_{ip},Y_i).\) That is, \(\hat{Y}_{i,-i}=\hat{\beta}_{0,-i}+\hat{\beta}_{1,-i}X_{i1}+\cdots+\hat{\beta}_{p,-i}X_{ip},\) where \(\hat{\boldsymbol{\beta}}_{-i}\) are the estimated coefficients from the sample \(\{(X_{j1},\ldots,X_{jp},Y_j)\}_{j=1,\, j\neq i}^n.\) Model selection based on LOOCV chooses the model with minimum error (3.3) within a list of candidate models. Note that the computation of \(\mathrm{LOOCV}\) requires, in principle, to fit \(n\) separate linear models, predict, and then aggregate. However, an algebraic shortcut based on (4.25) allows us to compute (3.3) using a single linear fit.

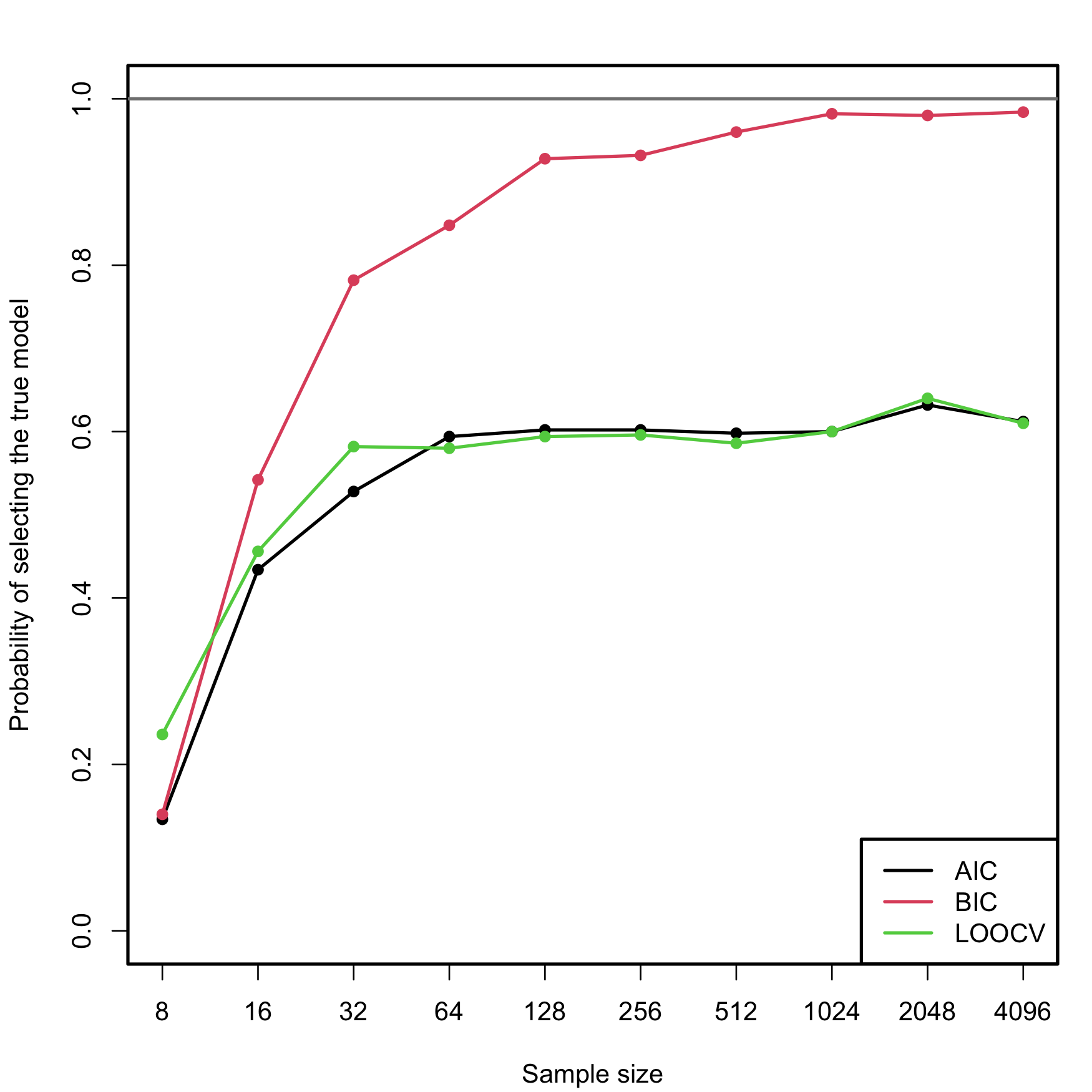

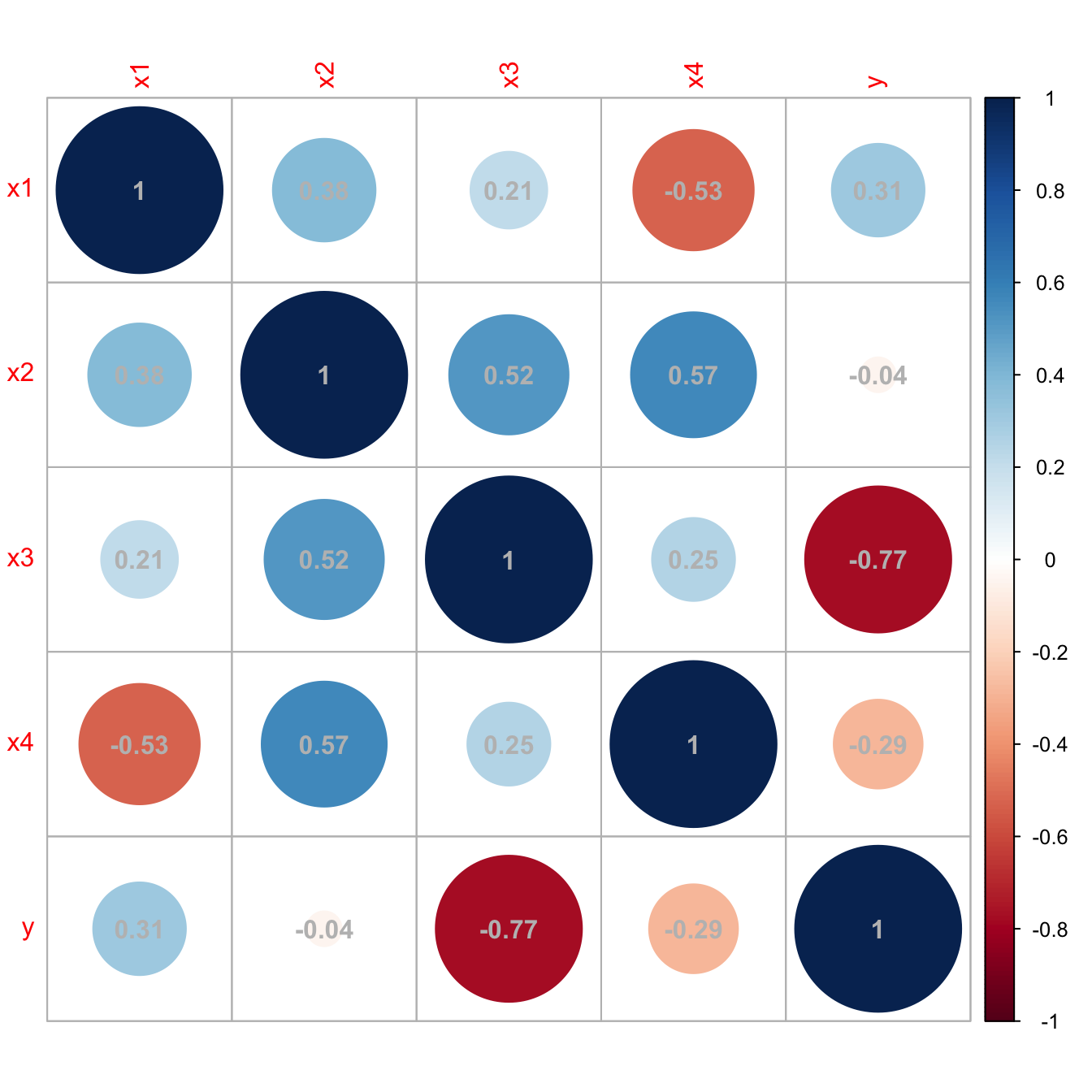

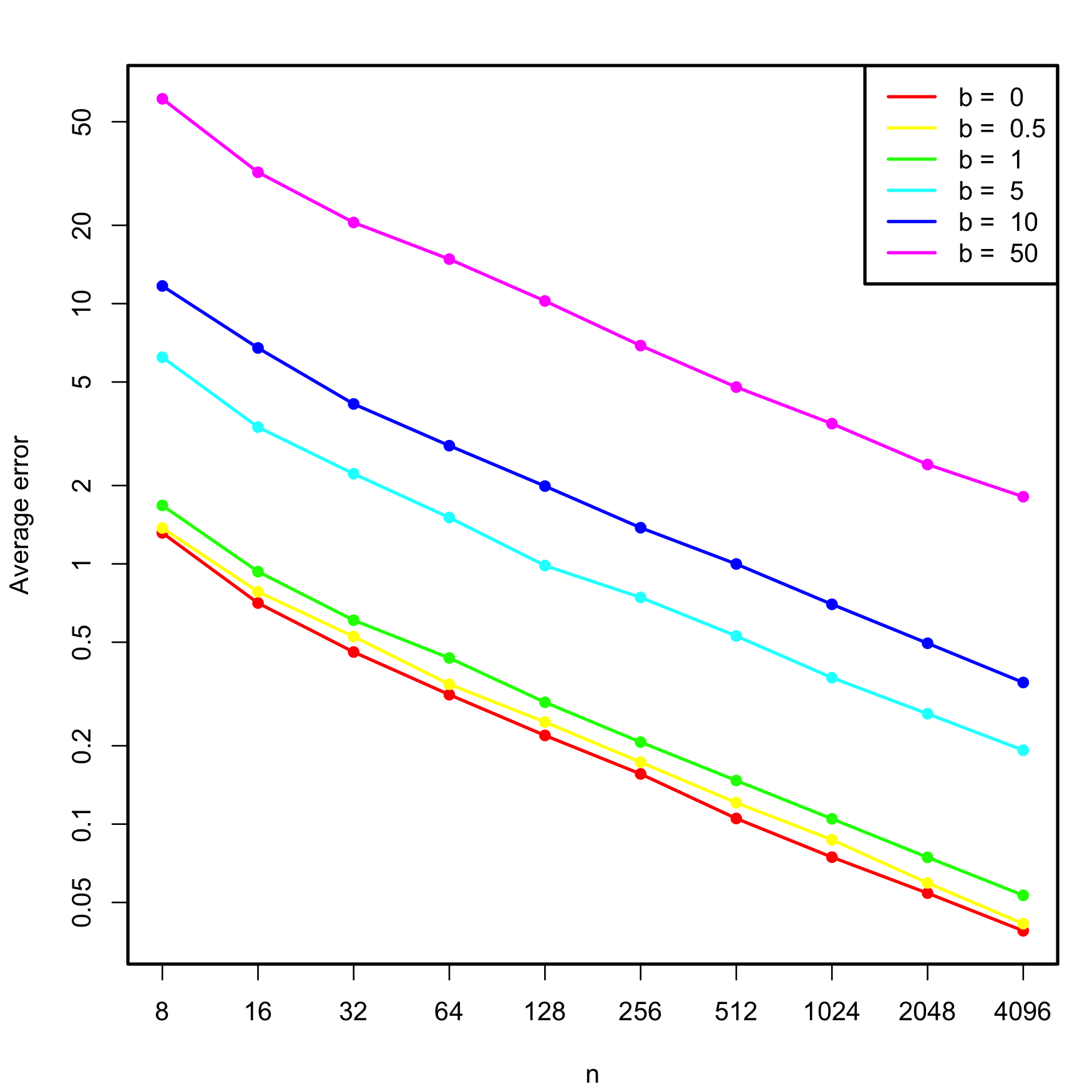

We carry out a small simulation study in order to illustrate the consistency property (3.2) of BIC and the inconsistency of model selection by AIC and LOOCV. For that, we consider the linear model \[\begin{align} \begin{split} Y&=\beta_0+\beta_1X_1+\beta_2X_2+\beta_3X_3+\beta_4X_4+\beta_5X_5+\varepsilon,\\ \boldsymbol{\beta}&=(0.5,1,1,0,0,0)^\top, \end{split} \tag{3.4} \end{align}\] where \(X_j\sim\mathcal{N}(0,1)\) for \(j=1,\ldots,5\) (independently) and \(\varepsilon\sim\mathcal{N}(0,1).\) Only the first two predictors are relevant, the last three are “garbage” predictors. For a given sample, model selection is performed by selecting among the \(2^5\) possible fitted models the ones with minimum AIC, BIC, and LOOCV. The experiment is repeated \(M=500\) times for sample sizes \(n=2^\ell,\) \(\ell=3,\ldots,12,\) and the estimated probability of selecting the correct model (the one only involving \(X_1\) and \(X_2\)) is displayed in Figure 3.5. The figure evidences empirically several interesting results:

- LOOCV and AIC are asymptotically equivalent. For large \(n,\) they tend to select the same model and hence their estimated probabilities of selecting the true model are almost equal. For small \(n,\) there are significant differences between them.

- The BIC is consistent in selecting the true model, and its probability of doing so quickly approaches \(1,\) as anticipated by (3.2).

- The AIC and LOOCV are inconsistent in selecting the true model. Despite the sample size \(n\) doubling at each step, their probability of recovering the true model gets stuck at about \(0.60.\)

- Even for moderate \(n\)’s, the probability of recovering the true model by BIC quickly outperforms those of AIC/LOOCV.

Figure 3.5: Estimation of the probability of selecting the correct model by minimizing the AIC, BIC, and LOOCV criteria, done for an exhaustive search with \(p=5\) predictors. The correct model contained two predictors. The probability was estimated with \(M=500\) Monte Carlo runs. The horizontal axis is in logarithmic scale. The estimated proportion of true model recoveries with BIC for \(n=4096\) is \(0.984\).

Exercise 3.3 Implement the simulation study behind Figure 3.5:

- Sample from (3.4), but take \(p=4.\)

- Fit the \(2^p\) possible models.

- Select the best model according to the AIC and BIC.

- Repeat Steps 1–3 \(M=100\) times. Estimate by Monte Carlo the probability of selecting the true model.

- Move \(n\) in a range from \(10\) to \(200.\)

Once you have a working solution, increase \((p, n, M)\) to approach the settings in Figure 3.5 (or go beyond!).

Exercise 3.4 Modify the Step 3 of the previous exercise to:

Exercise 3.5 Investigate what happens with the probability of selecting the true model using BIC and AIC if the exhaustive search is replaced by a stepwise selection. Precisely, do:

- Sample from (3.4), but take \(p=10\) (pad \(\boldsymbol{\beta}\) with zeros).

- Run a forward-backward stepwise search, both for the AIC and BIC.

- Repeat Steps 1–2 \(M=100\) times. Estimate by Monte Carlo the probability of selecting the true model.

- Move \(n\) in a range from \(20\) to \(200.\)

Once you have a working solution, increase \((n, M)\) to approach the settings in Figure 3.5 (or go beyond!).

Shao (1993)’s modification on how to make consistent the model selection by cross-validation is shocking. As in LOOCV, one has to split the sample of size \(n\) into two subsets: a training set, of size \(n_t,\) and a validation set, of size \(n_v.\) Of course, \(n=n_t+n_v.\) However, totally opposite to LOOCV, where \(n_t=n-1\) and \(n_v=1,\) the modification required is to choose \(n_v\) asymptotically equal to \(n\), in the sense that \(n_v/n\to1\) as \(n\to\infty.\) An example would be to take \(n_v=n-20\) and \(n_t=20.\) But \(n_v=n/2\) and \(n_t=n/2,\) a typical choice, would not make the selection consistent! Shao (1993)’s result gives a great insight: the difficulty in model selection lies in the comparison of models, not in their estimation, and the sample information has to be disproportionately allocated to the comparison task to achieve a consistent model selection device.

Exercise 3.6 Verify Shao (1993)’s result by constructing the analogous of Figure 3.5 for the following choices of \((n_t,n_v)\):

- \(n_t=n-1,\) \(n_v=1\) (LOOCV).

- \(n_t=n/2,\) \(n_v=n/2.\)

- \(n_t=n/4,\) \(n_v=(3/4)n.\)

- \(n_t=5p,\) \(n_v=n-5p.\)

Use first \(p=4\) predictors, \(M=100\) Monte Carlo runs, and move \(n\) in a range from \(20\) to \(200.\) Then increase the settings to approach those of Figure 3.5.

3.3 Use of qualitative predictors

An important situation not covered so far is how to deal with qualitative, and not quantitative, predictors. Qualitative predictors are also known as categorical variables or, in R’s terminology, factors, and are very common in many fields, such as in social sciences. Dealing with them requires some care and proper understanding of how these variables are represented.

The simplest case is the situation with two levels. A binary variable \(C\) with two levels (for example, a and b) can be encoded as

\[\begin{align*} D=\left\{\begin{array}{ll} 1,&\text{if }C=b,\\ 0,&\text{if }C=a. \end{array}\right. \end{align*}\]

\(D\) is a dummy variable: it codifies with zeros and ones the two possible levels of the categorical variable. An example of \(C\) is smoker, which has levels yes and no. The dummy variable associated is \(D=1\) if a person smokes and \(D=0\) if a person is non-smoker.

The advantage of this dummification is its interpretability in regression models. Since level a corresponds to \(0,\) it can be seen as the reference level to which level b is compared. This is the key point in dummification: set one level as the reference, codify the rest as departures from it.

The previous interpretation translates easily to the linear model. Assume that the dummy variable \(D\) is available together with other predictors \(X_1,\ldots,X_p.\) Then:

\[\begin{align*} \mathbb{E}[Y \mid X_1=x_1,\ldots,X_p=x_p,D=d]=\beta_0+\beta_1x_1+\cdots+\beta_px_p+\beta_{p+1}d. \end{align*}\]

The coefficient associated with \(D\) is easily interpretable: \(\beta_{p+1}\) is the increment in the mean of \(Y\) associated with changing \(D=0\) (reference) to \(D=1,\) while the rest of the predictors are fixed. Or in other words, \(\beta_{p+1}\) is the increment in the mean of \(Y\) associated with changing the level of the categorical variable from a to b.

R does the dummification automatically, translating a categorical variable \(C\) into its dummy version \(D,\) if it detects that a factor variable is present in the regression model.

Let’s see now the case with more than two levels, for example, a categorical variable \(C\) with levels a, b, and c. If we take a as the reference level, this variable can be represented by two dummy variables:

\[\begin{align*} D_1=\left\{\begin{array}{ll}1,&\text{if }C=b,\\0,& \text{if }C\neq b\end{array}\right. \end{align*}\]

and

\[\begin{align*} D_2=\left\{\begin{array}{ll}1,&\text{if }C=c,\\0,& \text{if }C\neq c.\end{array}\right. \end{align*}\]

Therefore, we can represent the levels of \(C\) as in the following table.

| \(C\) | \(D_1\) | \(D_2\) |

|---|---|---|

| \(a\) | \(0\) | \(0\) |

| \(b\) | \(1\) | \(0\) |

| \(c\) | \(0\) | \(1\) |

The interpretation of the regression models in the presence of \(D_1\) and \(D_2\) is very similar to the one before. For example, for the linear model, the coefficient associated with \(D_1\) gives the increment in the mean of \(Y\) when the level of \(C\) changes from a to b, and the coefficient for \(D_2\) gives the increment in the mean of \(Y\) when \(C\) changes from a to c.

In general, if we have a categorical variable \(C\) with \(J\) levels, then the previous process is iterated and the number of dummy variables required to encode \(C\) is \(J-1\).64 \(\!\!^,\)65 Again, R does the dummification automatically if it detects that a factor variable is present in the regression model.

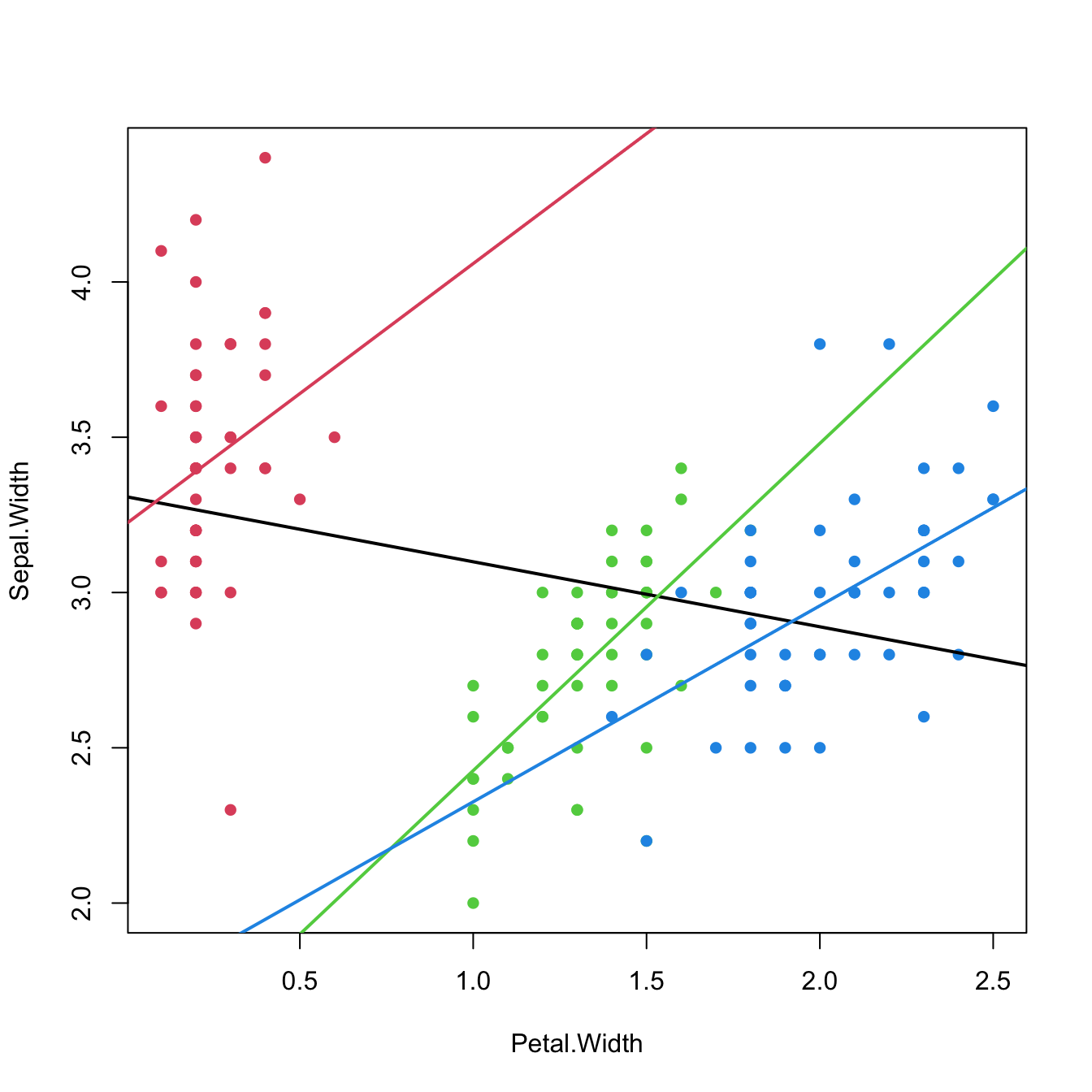

Let’s see an example with the famous iris dataset.

# iris dataset -- factors in the last column

summary(iris)

## Sepal.Length Sepal.Width Petal.Length Petal.Width Species

## Min. :4.300 Min. :2.000 Min. :1.000 Min. :0.100 setosa :50

## 1st Qu.:5.100 1st Qu.:2.800 1st Qu.:1.600 1st Qu.:0.300 versicolor:50

## Median :5.800 Median :3.000 Median :4.350 Median :1.300 virginica :50

## Mean :5.843 Mean :3.057 Mean :3.758 Mean :1.199

## 3rd Qu.:6.400 3rd Qu.:3.300 3rd Qu.:5.100 3rd Qu.:1.800

## Max. :7.900 Max. :4.400 Max. :6.900 Max. :2.500

# Summary of a linear model

mod1 <- lm(Sepal.Length ~ ., data = iris)

summary(mod1)

##

## Call:

## lm(formula = Sepal.Length ~ ., data = iris)

##

## Residuals:

## Min 1Q Median 3Q Max

## -0.79424 -0.21874 0.00899 0.20255 0.73103

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 2.17127 0.27979 7.760 1.43e-12 ***

## Sepal.Width 0.49589 0.08607 5.761 4.87e-08 ***

## Petal.Length 0.82924 0.06853 12.101 < 2e-16 ***

## Petal.Width -0.31516 0.15120 -2.084 0.03889 *

## Speciesversicolor -0.72356 0.24017 -3.013 0.00306 **

## Speciesvirginica -1.02350 0.33373 -3.067 0.00258 **

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 0.3068 on 144 degrees of freedom

## Multiple R-squared: 0.8673, Adjusted R-squared: 0.8627

## F-statistic: 188.3 on 5 and 144 DF, p-value: < 2.2e-16

# Speciesversicolor (D1) coefficient: -0.72356. The average increment of

# Sepal.Length when the species is versicolor instead of setosa (reference)

# Speciesvirginica (D2) coefficient: -1.02350. The average increment of

# Sepal.Length when the species is virginica instead of setosa (reference)

# Both dummy variables are significant

# How to set a different level as reference (versicolor)

iris$Species <- relevel(iris$Species, ref = "versicolor")

# Same estimates, except for the dummy coefficients

mod2 <- lm(Sepal.Length ~ ., data = iris)

summary(mod2)

##

## Call:

## lm(formula = Sepal.Length ~ ., data = iris)

##

## Residuals:

## Min 1Q Median 3Q Max

## -0.79424 -0.21874 0.00899 0.20255 0.73103

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 1.44770 0.28149 5.143 8.68e-07 ***

## Sepal.Width 0.49589 0.08607 5.761 4.87e-08 ***

## Petal.Length 0.82924 0.06853 12.101 < 2e-16 ***

## Petal.Width -0.31516 0.15120 -2.084 0.03889 *

## Speciessetosa 0.72356 0.24017 3.013 0.00306 **

## Speciesvirginica -0.29994 0.11898 -2.521 0.01280 *

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 0.3068 on 144 degrees of freedom

## Multiple R-squared: 0.8673, Adjusted R-squared: 0.8627

## F-statistic: 188.3 on 5 and 144 DF, p-value: < 2.2e-16

# Speciessetosa (D1) coefficient: 0.72356. The average increment of

# Sepal.Length when the species is setosa instead of versicolor (reference)

# Speciesvirginica (D2) coefficient: -0.29994. The average increment of

# Sepal.Length when the species is virginica instead of versicolor (reference)

# Both dummy variables are significant

# Coefficients of the model

confint(mod2)

## 2.5 % 97.5 %

## (Intercept) 0.8913266 2.00408209

## Sepal.Width 0.3257653 0.66601260

## Petal.Length 0.6937939 0.96469395

## Petal.Width -0.6140049 -0.01630542

## Speciessetosa 0.2488500 1.19827390

## Speciesvirginica -0.5351144 -0.06475727

# The coefficients of Speciessetosa and Speciesvirginica are

# significantly positive and negative, respectively

# Show the dummy variables employed for encoding a factor

contrasts(iris$Species)

## setosa virginica

## versicolor 0 0

## setosa 1 0

## virginica 0 1

iris$Species <- relevel(iris$Species, ref = "setosa")

contrasts(iris$Species)

## versicolor virginica

## setosa 0 0

## versicolor 1 0

## virginica 0 1It may happen that one dummy variable, say \(D_1,\) is not significant, while other dummy variables of the same categorical variable, say \(D_2,\) are significant. For example, this happens in the example above at level \(\alpha=0.01.\) Then, in the considered model, the level associated with \(D_1\) does not add relevant information for explaining \(Y\) with respect to the reference level.

Do not codify a categorical variable as a discrete variable. This constitutes a major methodological failure that will flaw the subsequent statistical analysis.

For example if you have a categorical variable party

with levels partyA, partyB, and

partyC, do not encode it as a discrete variable taking the

values 1, 2, and 3, respectively.

If you do so:

-

You assume implicitly an order in the levels of

party, sincepartyAis closer topartyBthan topartyC. -

You assume implicitly that

partyCis three times larger thanpartyA. -

The codification is completely arbitrary – why not consider

1,1.5, and1.75instead?

The right way of dealing with categorical variables in regression is to set the variable as a factor and let R do the dummification internally.

3.3.1 Case study application

Let’s see what the dummy variables are in the Boston dataset and what effect they have on medv.

# Load the Boston dataset

data(Boston, package = "MASS")

# Structure of the data

str(Boston)

## 'data.frame': 506 obs. of 14 variables:

## $ crim : num 0.00632 0.02731 0.02729 0.03237 0.06905 ...

## $ zn : num 18 0 0 0 0 0 12.5 12.5 12.5 12.5 ...

## $ indus : num 2.31 7.07 7.07 2.18 2.18 2.18 7.87 7.87 7.87 7.87 ...

## $ chas : int 0 0 0 0 0 0 0 0 0 0 ...

## $ nox : num 0.538 0.469 0.469 0.458 0.458 0.458 0.524 0.524 0.524 0.524 ...

## $ rm : num 6.58 6.42 7.18 7 7.15 ...

## $ age : num 65.2 78.9 61.1 45.8 54.2 58.7 66.6 96.1 100 85.9 ...

## $ dis : num 4.09 4.97 4.97 6.06 6.06 ...

## $ rad : int 1 2 2 3 3 3 5 5 5 5 ...

## $ tax : num 296 242 242 222 222 222 311 311 311 311 ...

## $ ptratio: num 15.3 17.8 17.8 18.7 18.7 18.7 15.2 15.2 15.2 15.2 ...

## $ black : num 397 397 393 395 397 ...

## $ lstat : num 4.98 9.14 4.03 2.94 5.33 ...

## $ medv : num 24 21.6 34.7 33.4 36.2 28.7 22.9 27.1 16.5 18.9 ...

# chas is a dummy variable measuring if the suburb is close to the river (1)

# or not (0). In this case it is not codified as a factor but as a 0 or 1

# (so it is already dummified)

# Summary of a linear model

mod <- lm(medv ~ chas + crim, data = Boston)

summary(mod)

##

## Call:

## lm(formula = medv ~ chas + crim, data = Boston)

##

## Residuals:

## Min 1Q Median 3Q Max

## -16.540 -5.421 -1.878 2.575 30.134

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 23.61403 0.41862 56.409 < 2e-16 ***

## chas 5.57772 1.46926 3.796 0.000165 ***

## crim -0.40598 0.04339 -9.358 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 8.373 on 503 degrees of freedom

## Multiple R-squared: 0.1744, Adjusted R-squared: 0.1712

## F-statistic: 53.14 on 2 and 503 DF, p-value: < 2.2e-16

# The coefficient associated with `chas` is 5.57772. That means that if the suburb

# is close to the river, the mean of medv increases in 5.57772 units for

# the same house and neighborhood conditions

# chas is significant (the presence of the river adds a valuable information

# for explaining medv)

# Summary of the best model in terms of BIC

summary(mod_bic)

##

## Call:

## lm(formula = medv ~ crim + zn + chas + nox + rm + dis + rad +

## tax + ptratio + black + lstat, data = Boston)

##

## Residuals:

## Min 1Q Median 3Q Max

## -15.5984 -2.7386 -0.5046 1.7273 26.2373

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 36.341145 5.067492 7.171 2.73e-12 ***

## crim -0.108413 0.032779 -3.307 0.001010 **

## zn 0.045845 0.013523 3.390 0.000754 ***

## chas 2.718716 0.854240 3.183 0.001551 **

## nox -17.376023 3.535243 -4.915 1.21e-06 ***

## rm 3.801579 0.406316 9.356 < 2e-16 ***

## dis -1.492711 0.185731 -8.037 6.84e-15 ***

## rad 0.299608 0.063402 4.726 3.00e-06 ***

## tax -0.011778 0.003372 -3.493 0.000521 ***

## ptratio -0.946525 0.129066 -7.334 9.24e-13 ***

## black 0.009291 0.002674 3.475 0.000557 ***

## lstat -0.522553 0.047424 -11.019 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 4.736 on 494 degrees of freedom

## Multiple R-squared: 0.7406, Adjusted R-squared: 0.7348

## F-statistic: 128.2 on 11 and 494 DF, p-value: < 2.2e-16

# The coefficient associated with `chas` is 2.71871. If the suburb is close to

# the river, the mean of medv increases in 2.71871 units

# chas is significant as well in the presence of more predictorsWe will see how to mix dummy and quantitative predictors in Section 3.4.3.

3.4 Nonlinear relationships

3.4.1 Transformations in the simple linear model

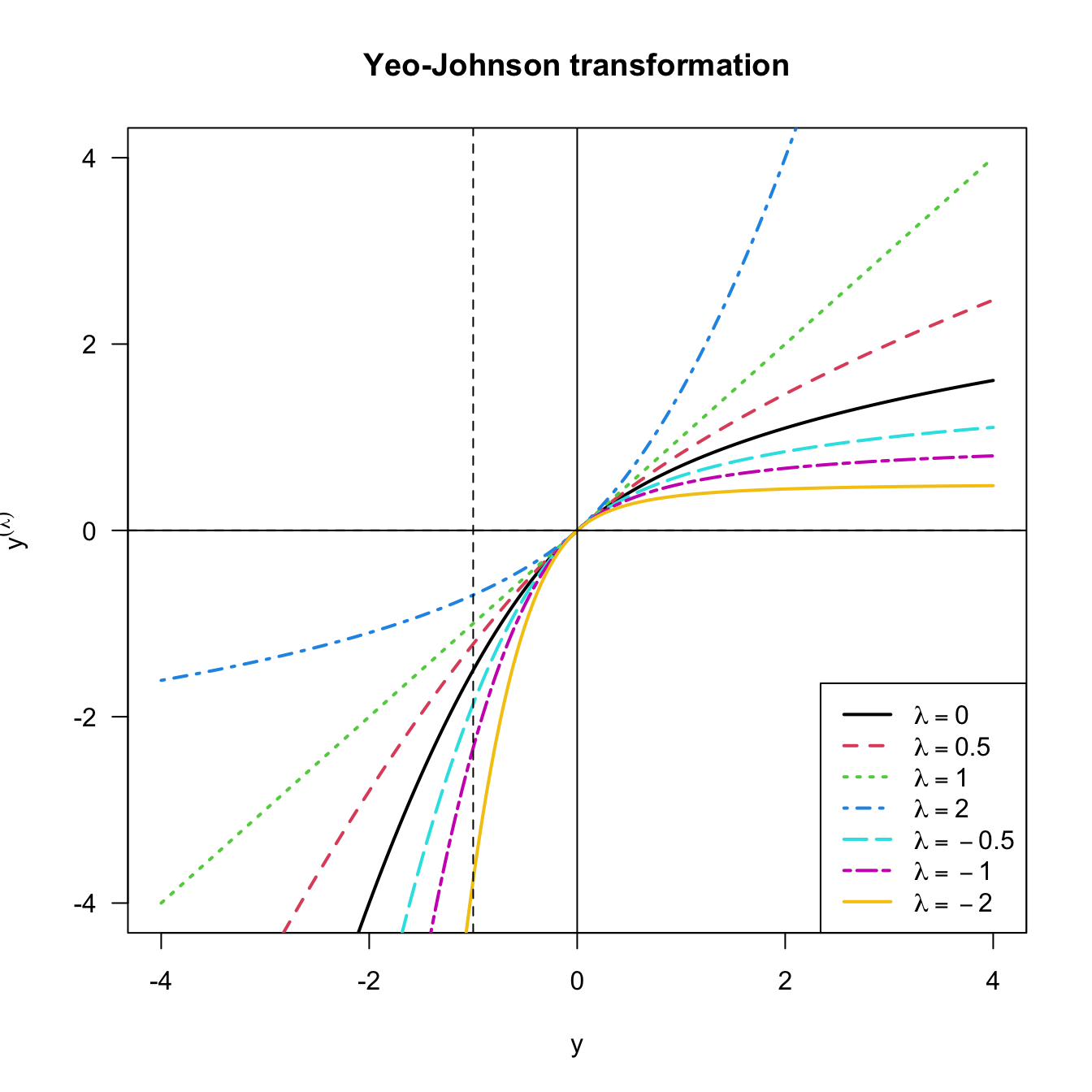

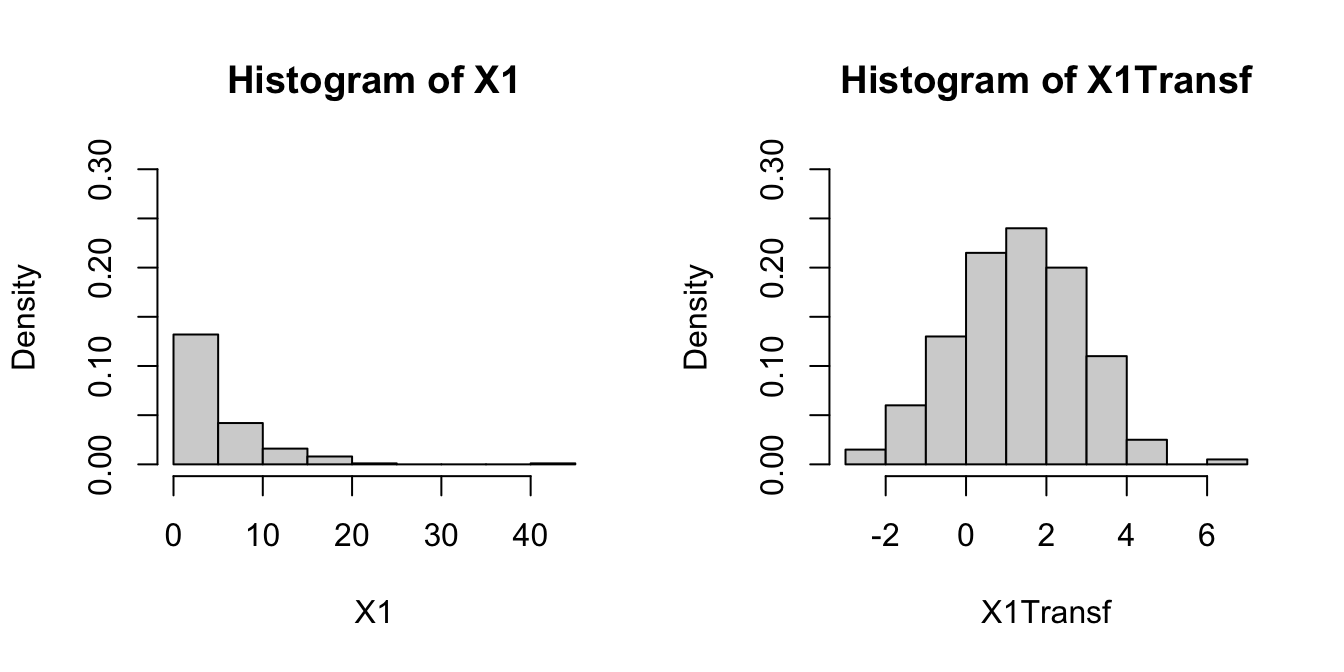

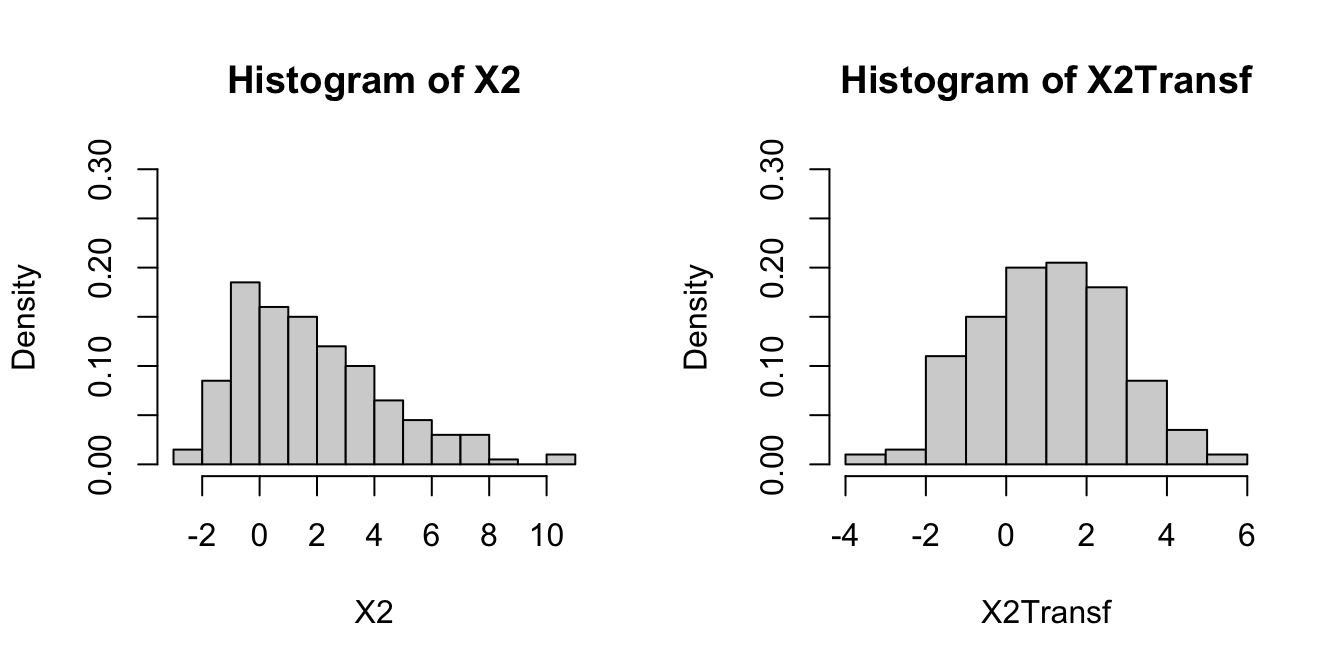

The linear model is termed linear not only because the form it assumes for the regression function \(m\) is linear, but because the effects of the parameters are linear. Indeed, the predictor \(X\) may exhibit a nonlinear effect on the response \(Y\) and still be a linear model! For example, the following models can be transformed into simple linear models:

- \(Y=\beta_0+\beta_1X^2+\varepsilon.\)

- \(Y=\beta_0+\beta_1\log(X)+\varepsilon.\)

- \(Y=\beta_0+\beta_1(X^3-\log(|X|) + 2^{X})+\varepsilon.\)

The trick is to work with a transformed predictor \(\tilde{X}\) as a replacement of the original predictor \(X.\) Then, for the above examples, rather than working with the sample \(\{(X_i,Y_i)\}_{i=1}^n,\) we consider the transformed sample \(\{(\tilde X_i,Y_i)\}_{i=1}^n\) with:

- \(\tilde X_i=X_i^2,\) \(i=1,\ldots,n.\)

- \(\tilde X_i=\log(X_i),\) \(i=1,\ldots,n.\)

- \(\tilde X_i=X_i^3-\log(|X_i|) + 2^{X_i},\) \(i=1,\ldots,n.\)

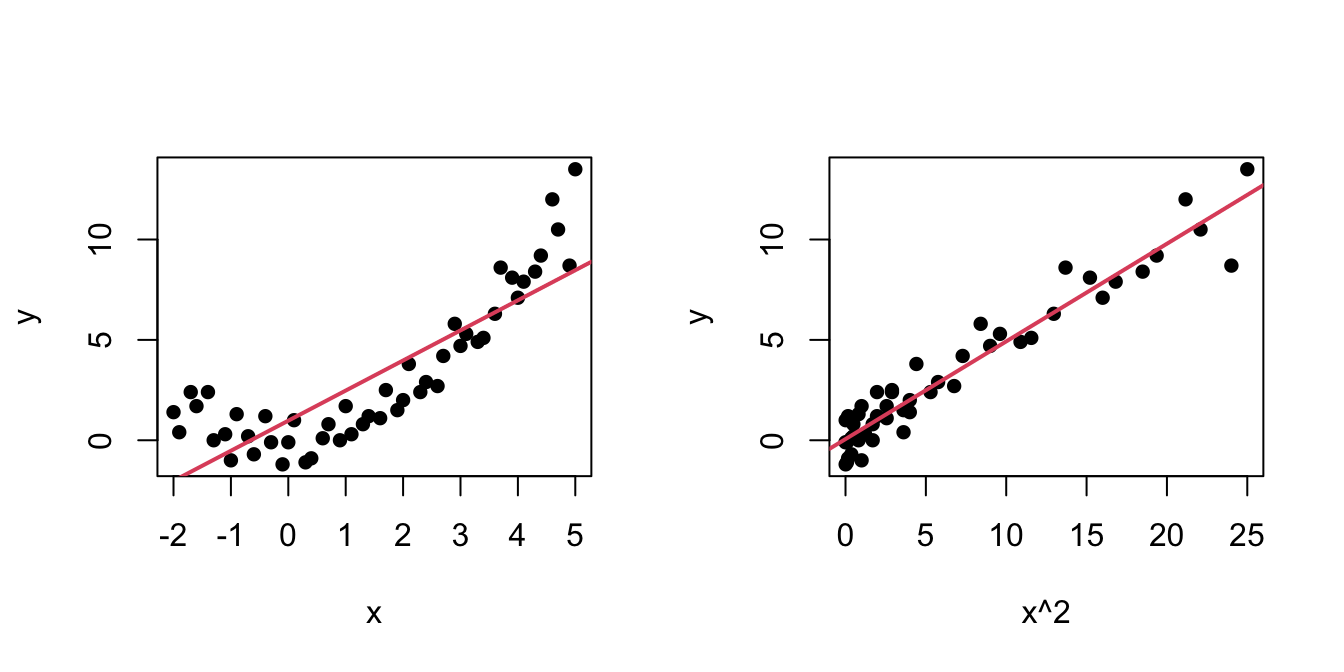

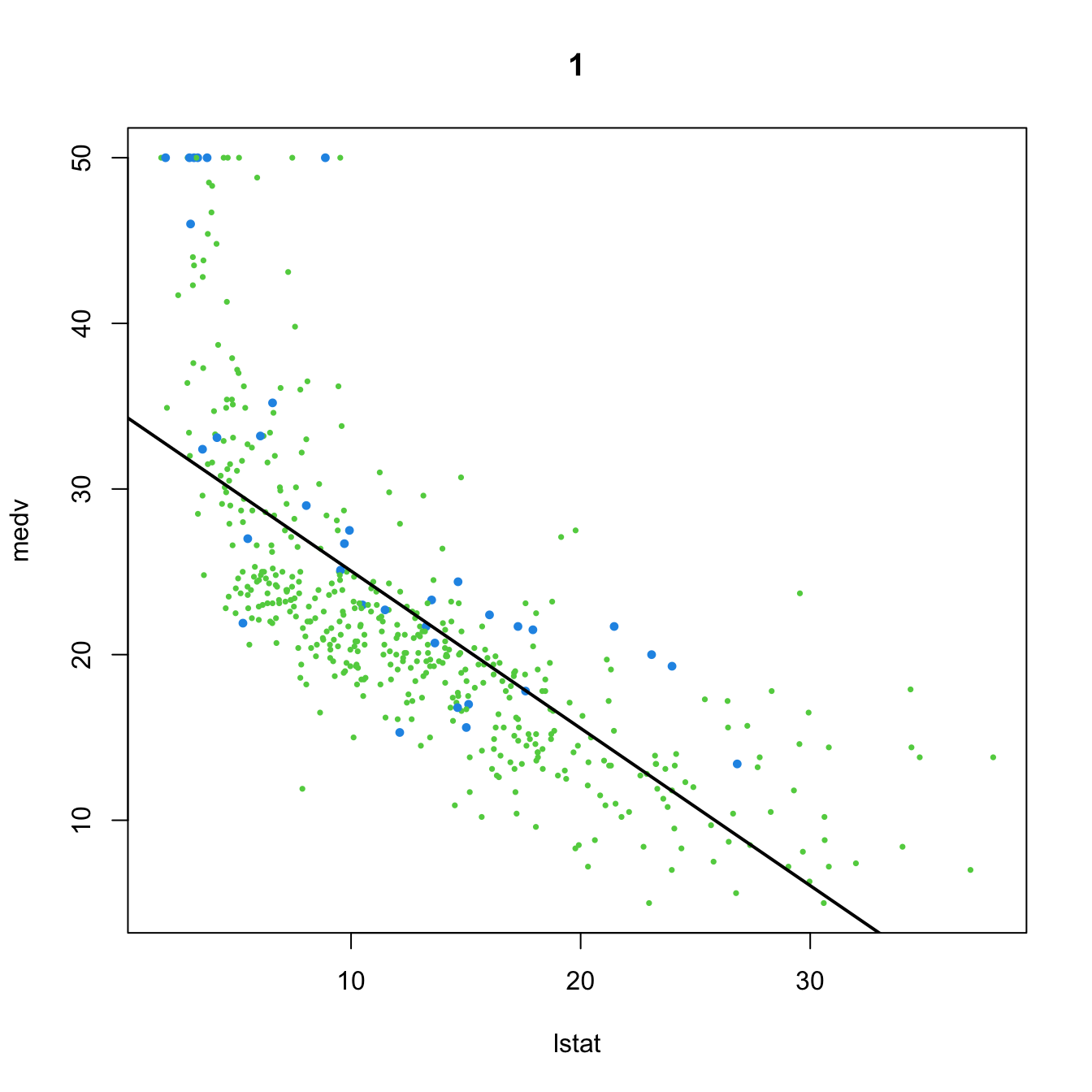

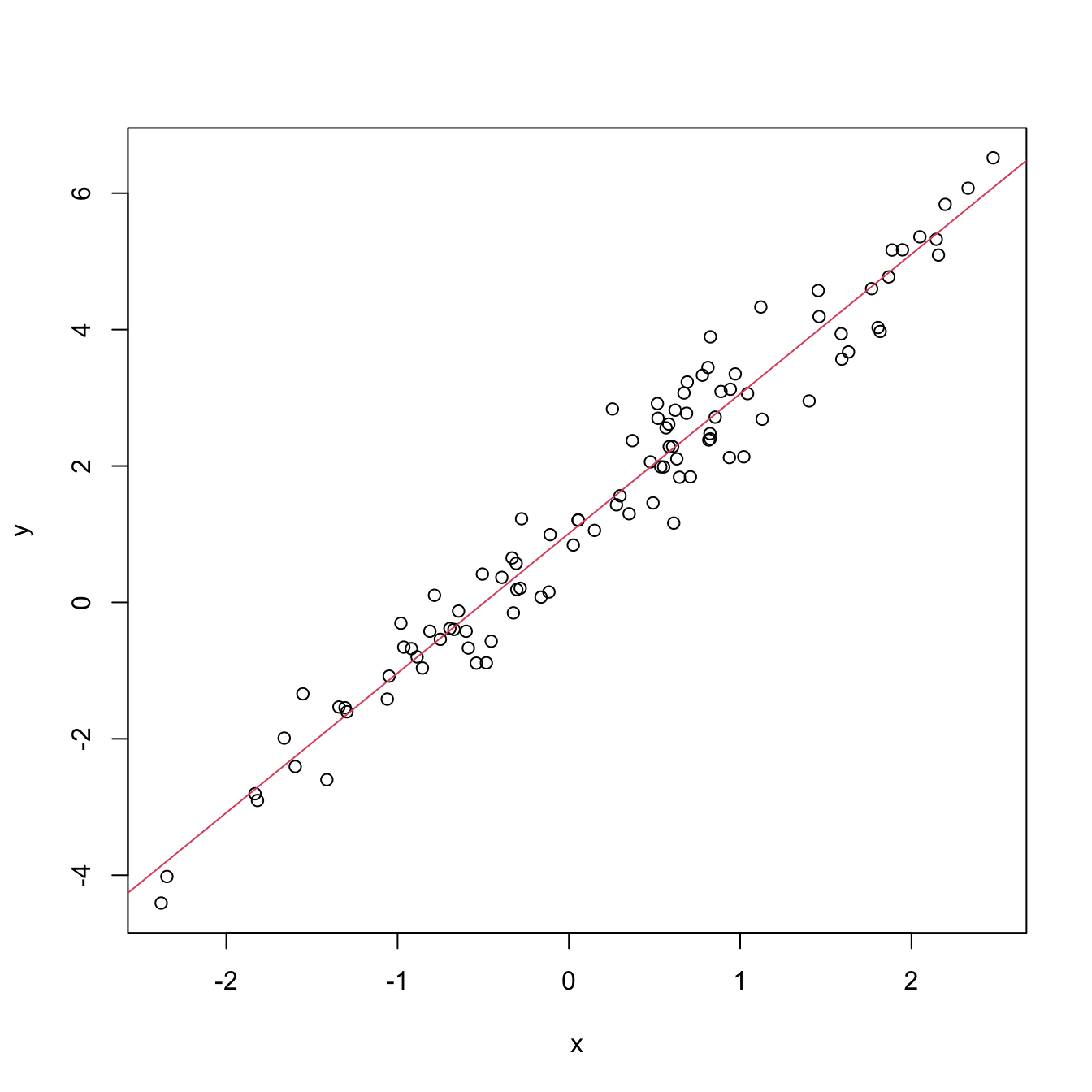

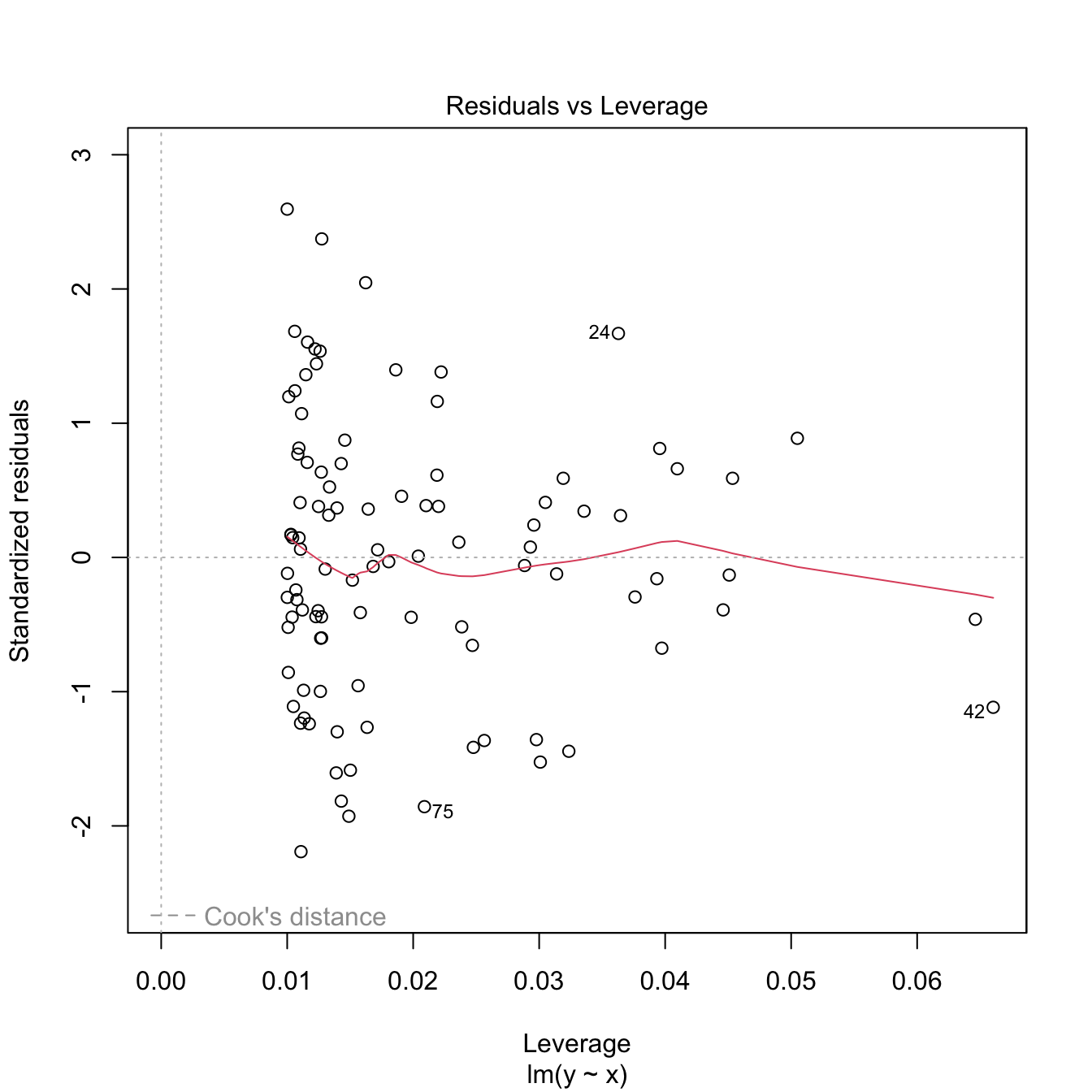

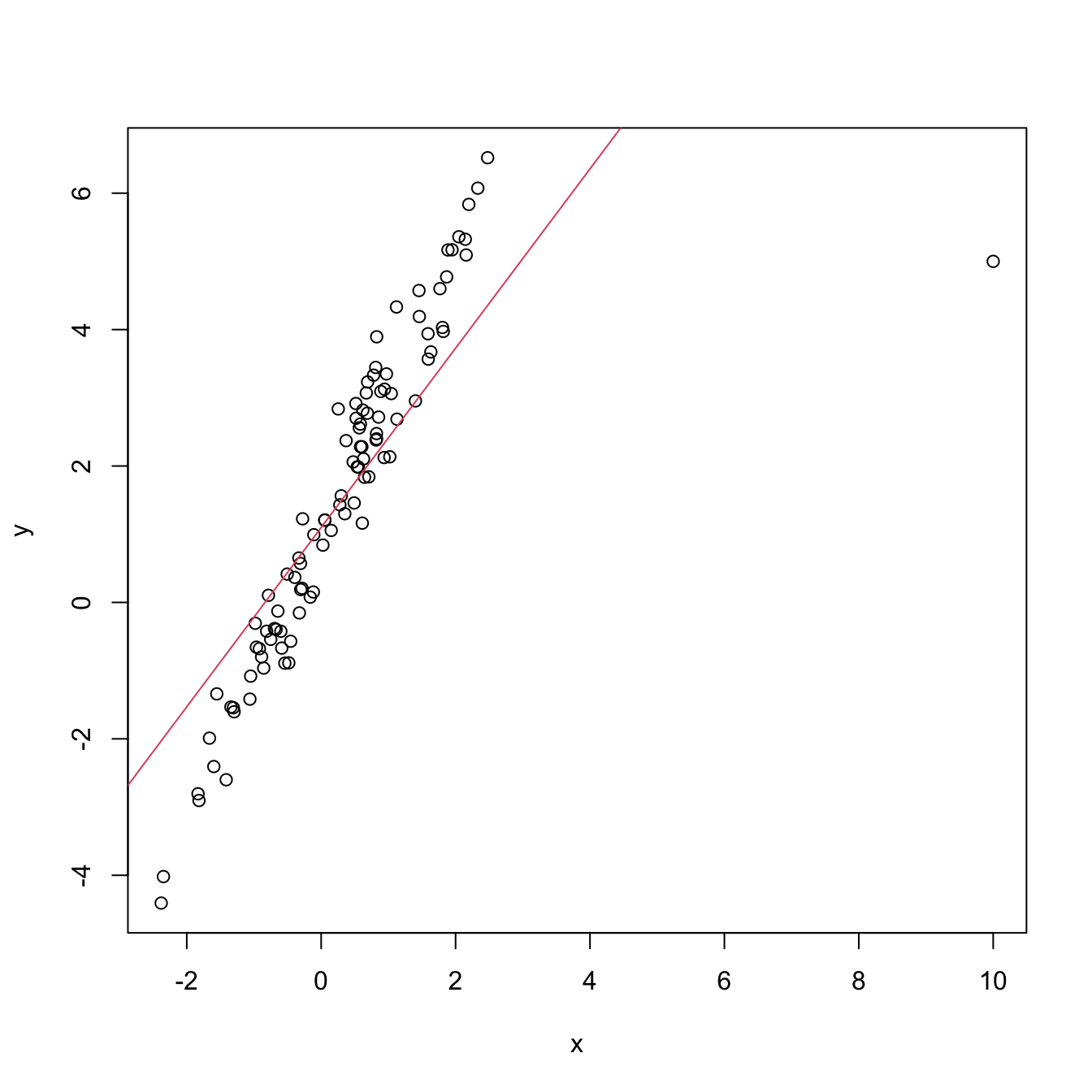

An example of this simple but powerful trick is given as follows. The left panel of Figure 3.6 shows the scatterplot for some data y and x, together with its fitted regression line. Clearly, the data does not follow a linear pattern, but a nonlinear one, similar to a parabola \(y=x^2.\) Hence, y might be better explained by the square of x, x^2, rather than by x. Indeed, if we plot y against x^2 in the right panel of Figure 3.6, we can see that the relation of y and x^2 is now linear!

Figure 3.6: Left: quadratic pattern when plotting \(Y\) against \(X.\) Right: linearized pattern when plotting \(Y\) against \(X^2.\) In red, the fitted regression line.

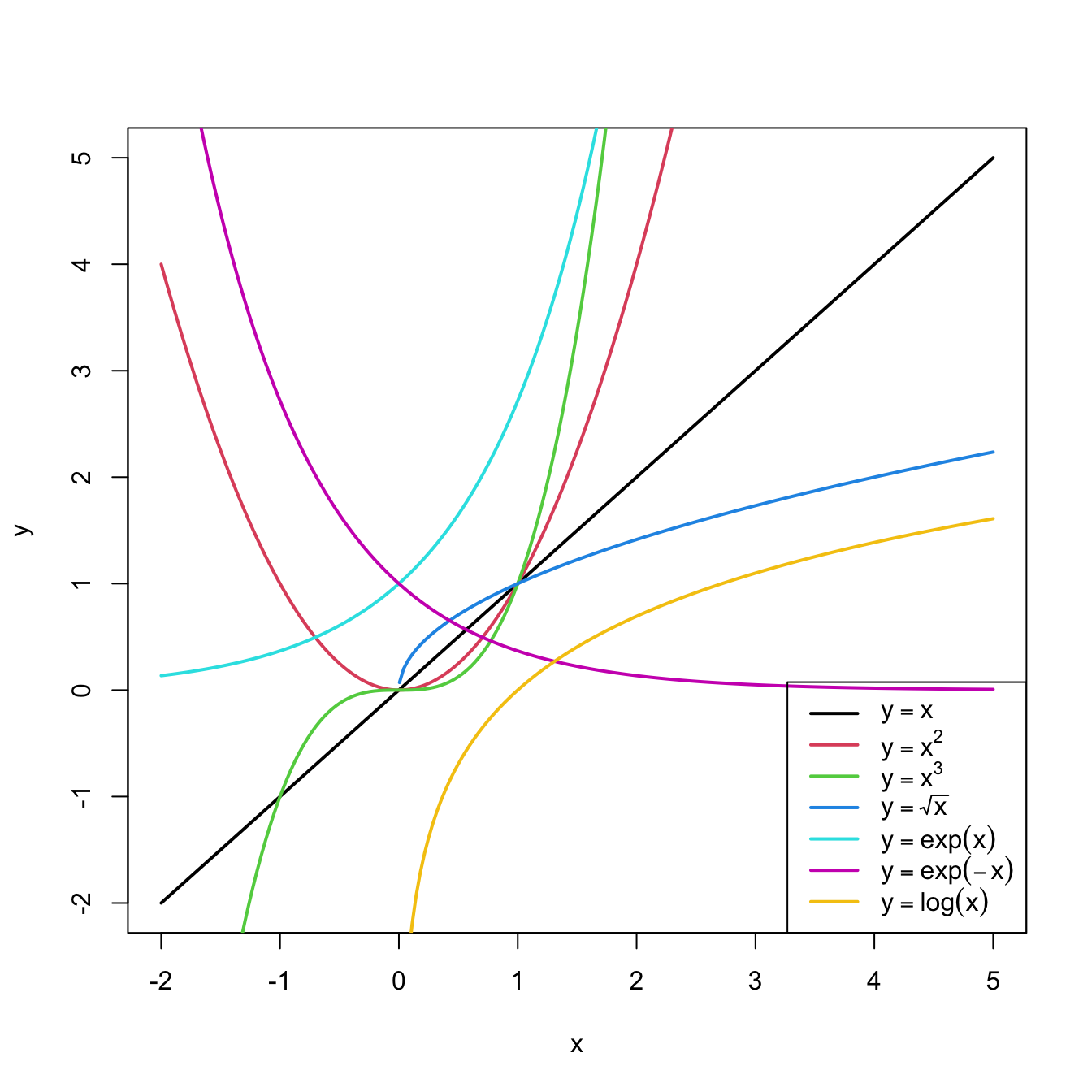

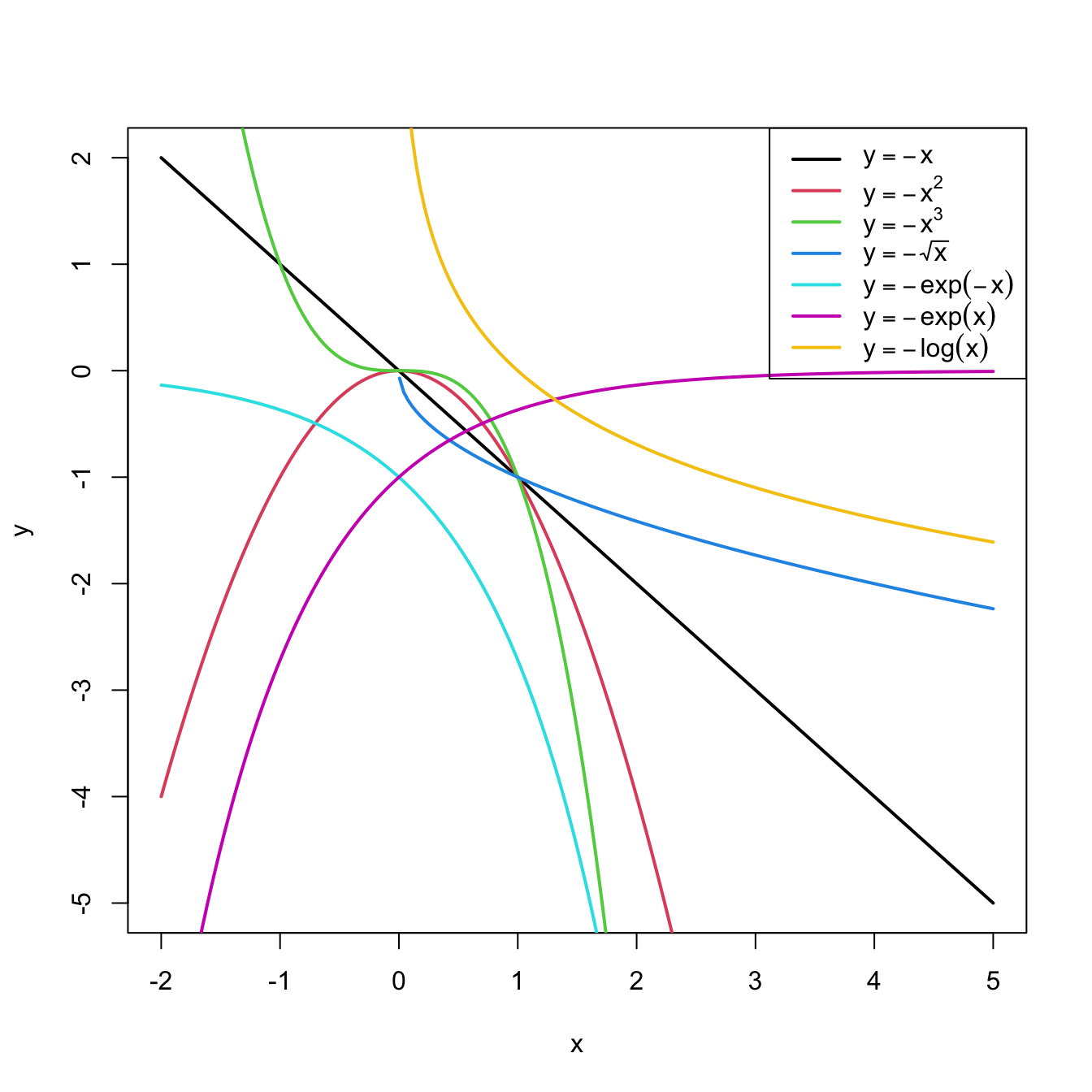

Figure 3.7: Illustration of the choice of the nonlinear transformation. Application available here.

In conclusion, with a simple trick we have drastically increased the explanation of the response. However, there is a catch: knowing which transformation is required in order to linearize the relation between response and the predictor is a kind of art which requires good eyeballing. A first approach is to try with one of the usual transformations, displayed in Figure 3.8, depending on the pattern of the data. Figure 3.7 illustrates how to choose an adequate transformation for linearizing certain nonlinear data patterns.

Figure 3.8: Some common nonlinear transformations and their negative counterparts. Recall the domain of definition of each transformation.

If you apply a nonlinear transformation, namely \(f,\) and fit the linear model \(Y=\beta_0+\beta_1 f(X)+\varepsilon,\) then there is no point in also fitting the model resulting from the negative transformation \(-f.\) The model with \(-f\) is exactly the same as the one with \(f\) but with the sign of \(\beta_1\) flipped.

As a rule of thumb, use Figure 3.8 with the transformations to compare it with the data pattern, choose the most similar curve, and apply the corresponding function with positive sign (for simpler interpretation).Let’s see how to transform the predictor and perform the regressions behind Figure 3.6.

# Data

x <- c(-2, -1.9, -1.7, -1.6, -1.4, -1.3, -1.1, -1, -0.9, -0.7, -0.6,

-0.4, -0.3, -0.1, 0, 0.1, 0.3, 0.4, 0.6, 0.7, 0.9, 1, 1.1, 1.3,

1.4, 1.6, 1.7, 1.9, 2, 2.1, 2.3, 2.4, 2.6, 2.7, 2.9, 3, 3.1,

3.3, 3.4, 3.6, 3.7, 3.9, 4, 4.1, 4.3, 4.4, 4.6, 4.7, 4.9, 5)

y <- c(1.4, 0.4, 2.4, 1.7, 2.4, 0, 0.3, -1, 1.3, 0.2, -0.7, 1.2, -0.1,

-1.2, -0.1, 1, -1.1, -0.9, 0.1, 0.8, 0, 1.7, 0.3, 0.8, 1.2, 1.1,

2.5, 1.5, 2, 3.8, 2.4, 2.9, 2.7, 4.2, 5.8, 4.7, 5.3, 4.9, 5.1,

6.3, 8.6, 8.1, 7.1, 7.9, 8.4, 9.2, 12, 10.5, 8.7, 13.5)

# Data frame (a matrix with column names)

non_linear <- data.frame(x = x, y = y)

# We create a new column inside nonLinear, called x2, that contains the

# new variable x^2

non_linear$x2 <- non_linear$x^2

# If you wish to remove it

# nonLinear$x2 <- NULL

# Regressions

mod1 <- lm(y ~ x, data = non_linear)

mod2 <- lm(y ~ x2, data = non_linear)

summary(mod1)

##

## Call:

## lm(formula = y ~ x, data = non_linear)

##

## Residuals:

## Min 1Q Median 3Q Max

## -2.5268 -1.7513 -0.4017 0.9750 5.0265

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 0.9771 0.3506 2.787 0.0076 **

## x 1.4993 0.1374 10.911 1.35e-14 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 2.005 on 48 degrees of freedom

## Multiple R-squared: 0.7126, Adjusted R-squared: 0.7067

## F-statistic: 119 on 1 and 48 DF, p-value: 1.353e-14

summary(mod2)

##

## Call:

## lm(formula = y ~ x2, data = non_linear)

##

## Residuals:

## Min 1Q Median 3Q Max

## -3.0418 -0.5523 -0.1465 0.6286 1.8797

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 0.05891 0.18462 0.319 0.751

## x2 0.48659 0.01891 25.725 <2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 0.9728 on 48 degrees of freedom

## Multiple R-squared: 0.9324, Adjusted R-squared: 0.931

## F-statistic: 661.8 on 1 and 48 DF, p-value: < 2.2e-16

# mod2 has a larger R^2. Also notice the intercept is not significantA fast way of performing and summarizing the quadratic fit is

summary(lm(y ~ I(x^2), data = nonLinear))

The I() function wrapping x^2 is

fundamental when applying arithmetic operations in the predictor. The

symbols +, *, ^, … have

different meaning when inputted in a formula, so it is

required to use I() to indicate that they must be

interpreted in their arithmetic meaning and that the result of the

expression denotes a new predictor. For example, use

I((x - 1)^3 - log(3 * x)) to apply the transformation

(x - 1)^3 - log(3 * x).

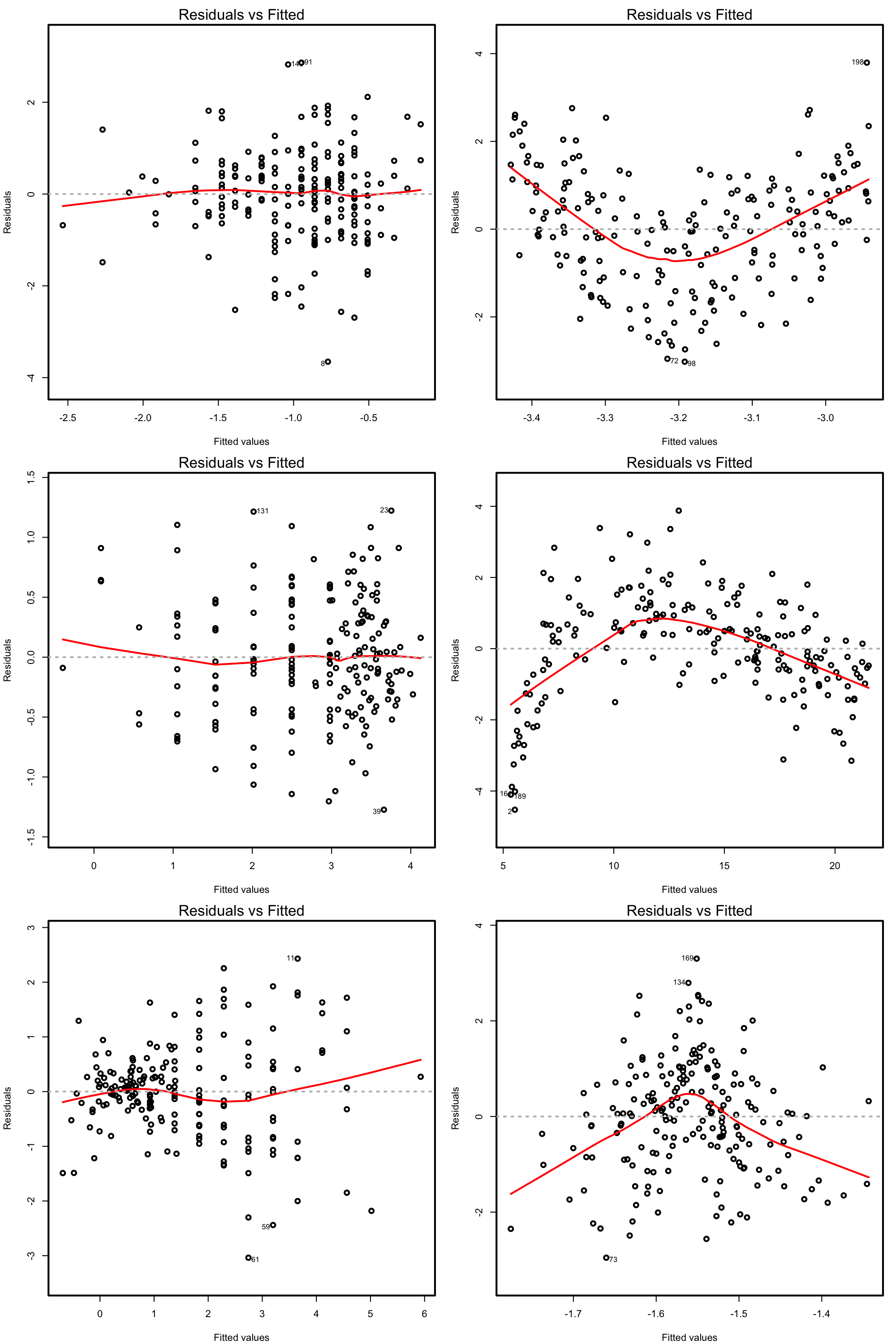

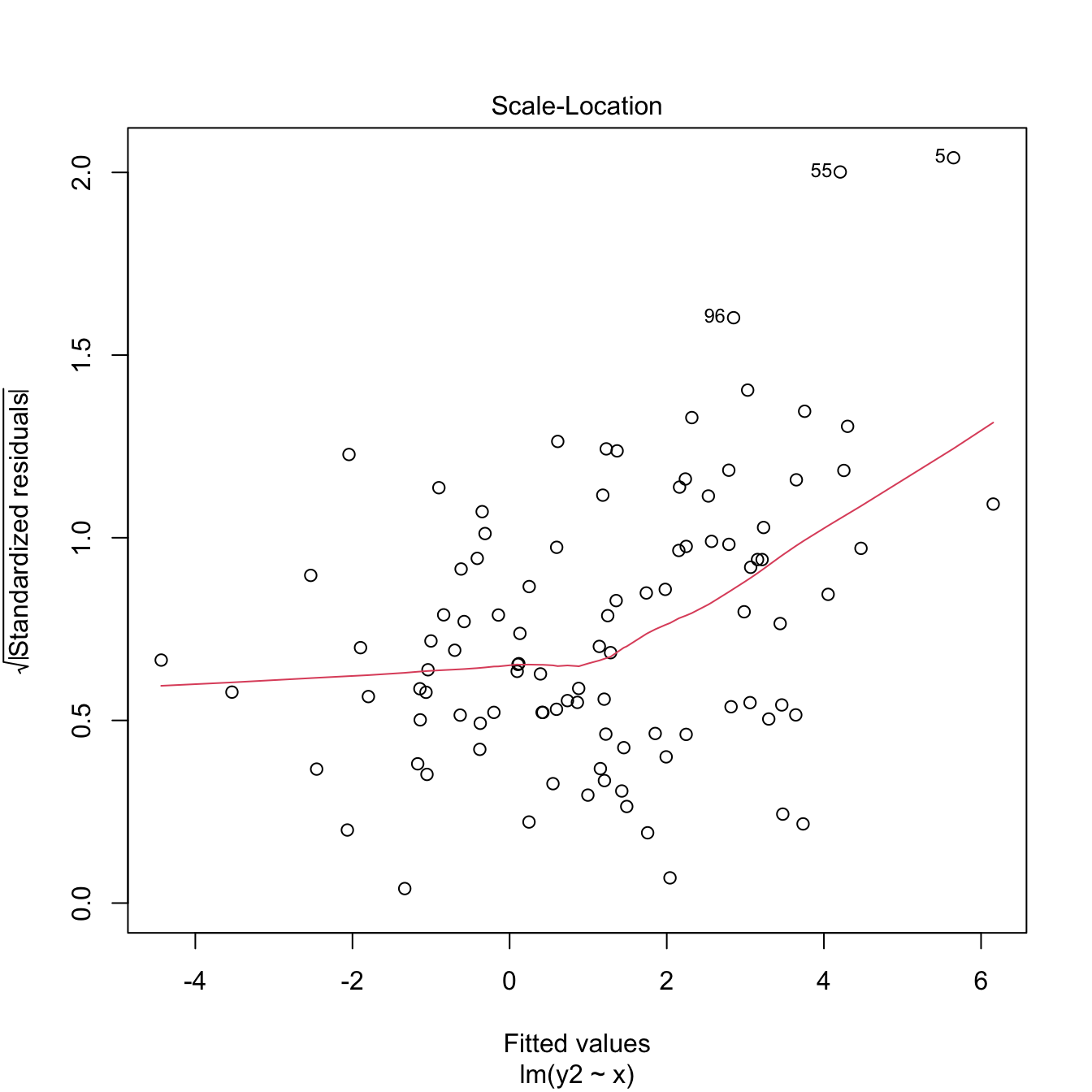

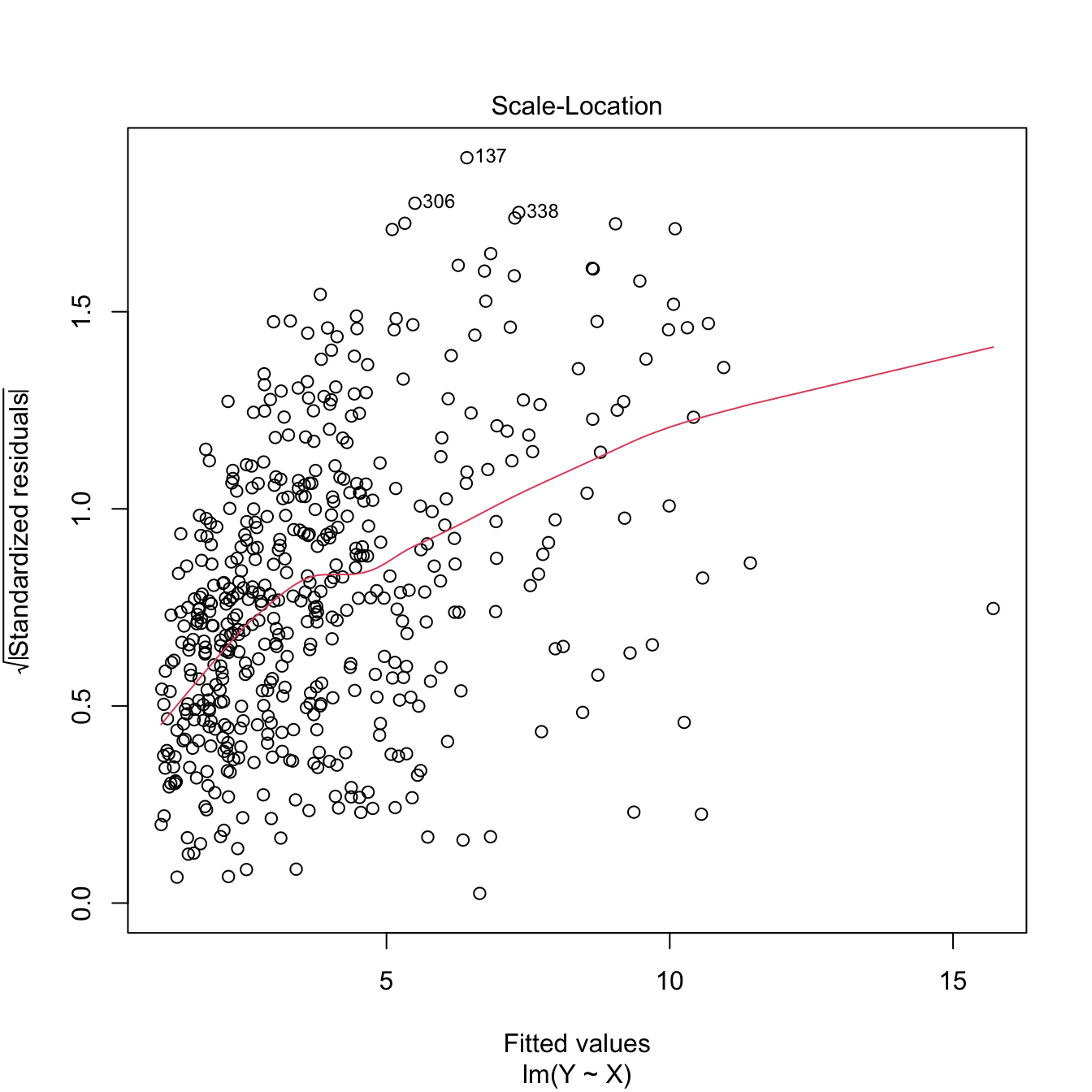

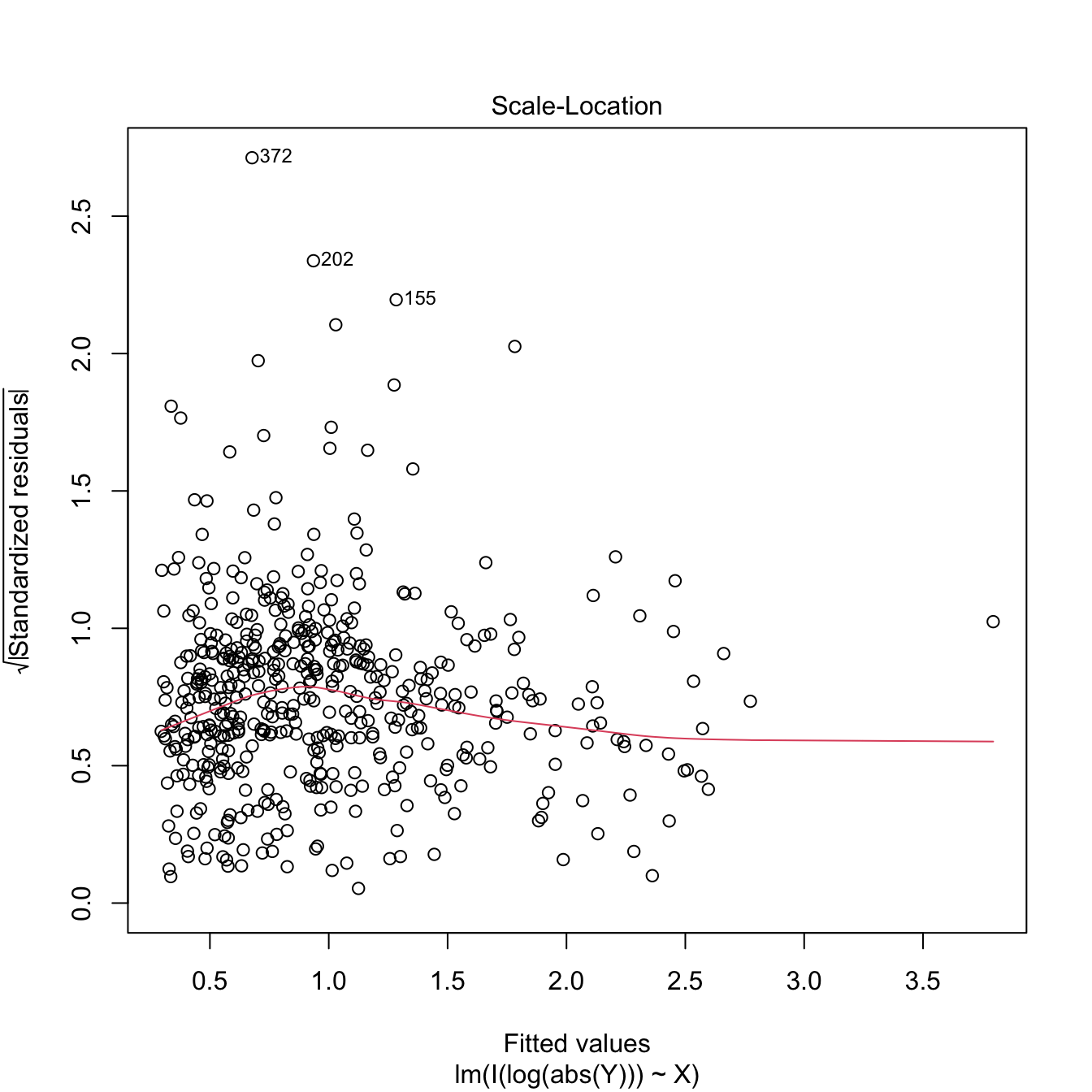

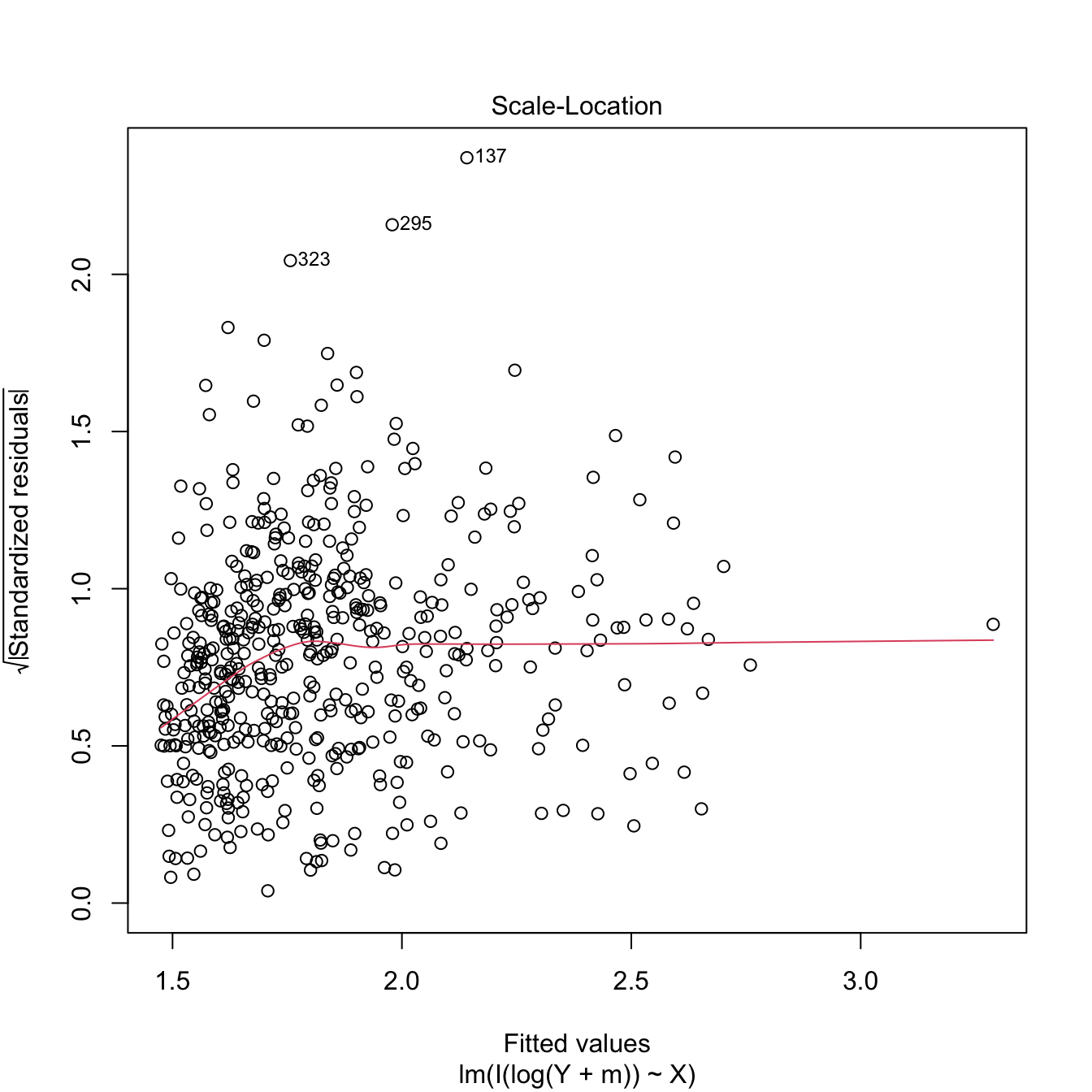

Exercise 3.7 Load the dataset assumptions.RData. We are going to work with the regressions y2 ~ x2, y3 ~ x3, y8 ~ x8, and y9 ~ x9, in order to identify which transformation of Figure 3.8 gives the best fit. For these, do the following:

- Find the transformation that yields the largest \(R^2.\)

- Compare the original and transformed linear models.

Hints:

-

y2 ~ x2has a negative dependence, so look at the right panel of Figure 3.7. -

y3 ~ x3seems to have just a subtle nonlinearity… Will it be worth to attempt a transformation? - For

y9 ~ x9, try also withexp(-abs(x9)),log(abs(x9)), and2^abs(x9).

The nonlinear transformations introduced for the simple linear model are readily applicable in the multiple linear model. Consequently, the multiple linear model is able to approximate regression functions with nonlinear forms, if appropriate nonlinear transformations for the predictors are used.66

3.4.2 Polynomial transformations

Polynomial models are a powerful nonlinear extension of the linear model. These are constructed by replacing each predictor \(X_j\) by a set of monomials \((X_j,X_j^2,\ldots,X_j^{k_j})\) constructed from \(X_j.\) In the simpler case with a single predictor67 \(X,\) we have the \(k\)-th order polynomial fit:

\[\begin{align*} Y=\beta_0+\beta_1X+\cdots+\beta_kX^k+\varepsilon. \end{align*}\]

With this approach, a highly flexible model is produced, as it was already shown in Figure 1.3.

The creation of polynomial models can be automated with the R’s poly function. For the observations \((X_1,\ldots,X_n)\) of \(X,\) poly creates the matrices

\[\begin{align} \begin{pmatrix} X_{1} & X_{1}^2 & \ldots & X_{1}^k \\ \vdots & \vdots & \ddots & \vdots \\ X_{n} & X_{n}^2 & \ldots & X_{n}^k \\ \end{pmatrix}\text{ or } \begin{pmatrix} p_1(X_{1}) & p_2(X_{1}) & \ldots & p_k(X_{1}) \\ \vdots & \vdots & \ddots & \vdots \\ p_1(X_{n}) & p_2(X_{n}) & \ldots & p_k(X_{n}) \\ \end{pmatrix},\tag{3.5} \end{align}\]

where \(p_1,\ldots,p_k\) are orthogonal polynomials68 of orders \(1,\ldots,k,\) respectively. For orthogonal polynomials, poly yields a data matrix in (3.5) with uncorrelated69 columns. That is, such that the sample correlation coefficient between two columns is zero.

Let’s see a couple of examples on using poly.

x1 <- seq(-1, 1, l = 4)

poly(x = x1, degree = 2, raw = TRUE) # (X, X^2)

## 1 2

## [1,] -1.0000000 1.0000000

## [2,] -0.3333333 0.1111111

## [3,] 0.3333333 0.1111111

## [4,] 1.0000000 1.0000000

## attr(,"degree")

## [1] 1 2

## attr(,"class")

## [1] "poly" "matrix"

poly(x = x1, degree = 2) # By default, it employs orthogonal polynomials

## 1 2

## [1,] -0.6708204 0.5

## [2,] -0.2236068 -0.5

## [3,] 0.2236068 -0.5

## [4,] 0.6708204 0.5

## attr(,"coefs")

## attr(,"coefs")$alpha

## [1] -5.551115e-17 -5.551115e-17

##

## attr(,"coefs")$norm2

## [1] 1.0000000 4.0000000 2.2222222 0.7901235

##

## attr(,"degree")

## [1] 1 2

## attr(,"class")

## [1] "poly" "matrix"

# Depiction of raw polynomials

x <- seq(-1, 1, l = 200)

degree <- 5

matplot(x, poly(x, degree = degree, raw = TRUE), type = "l", lty = 1,

ylab = expression(x^k))

legend("bottomright", legend = paste("k =", 1:degree), col = 1:degree, lwd = 2)

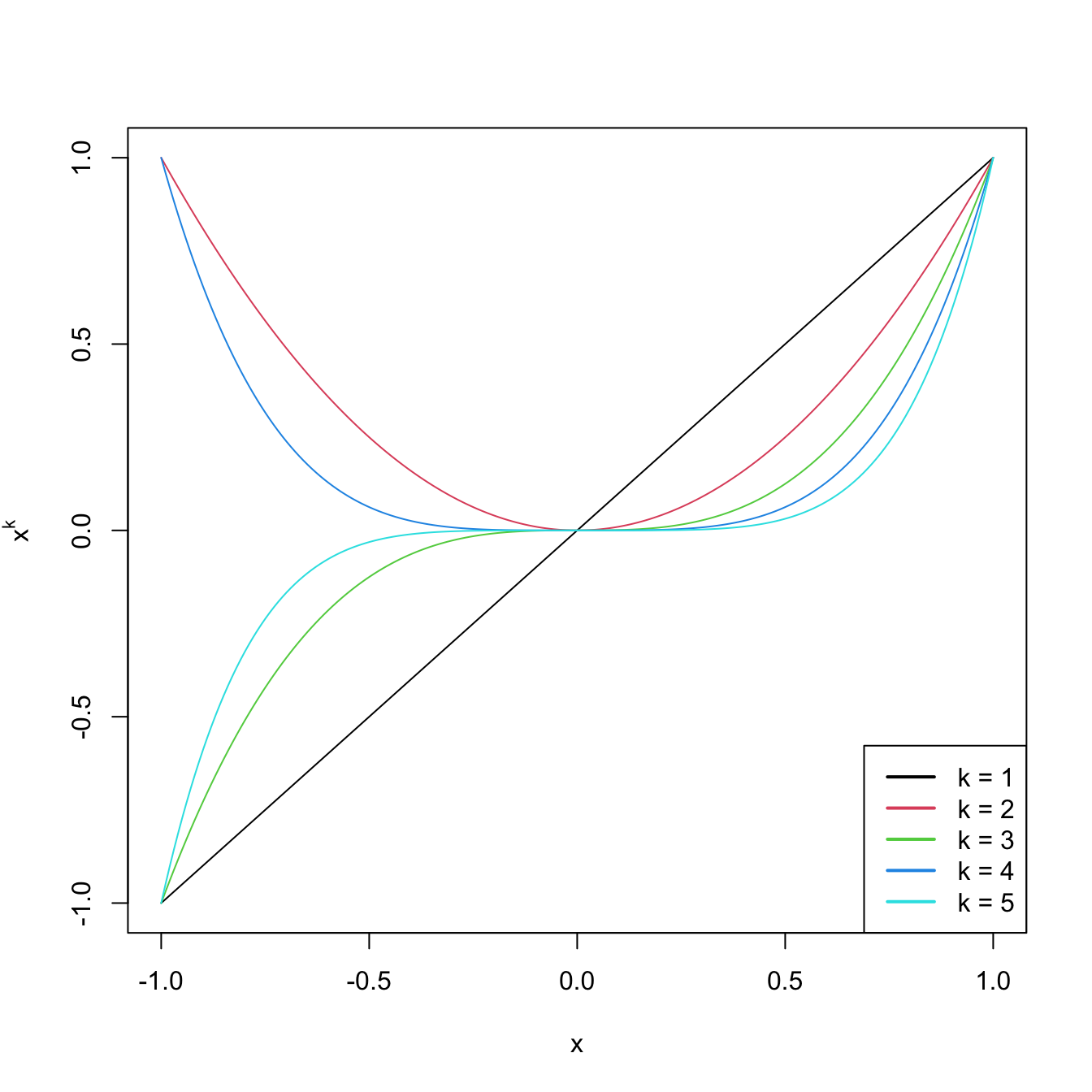

Figure 3.9: Raw polynomials \(x^k\) in \((-1,1),\) up to degree \(k=5.\)

# Depiction of orthogonal polynomials

matplot(x, poly(x, degree = degree), type = "l", lty = 1,

ylab = expression(p[k](x)))

legend("bottomright", legend = paste("k =", 1:degree), col = 1:degree, lwd = 2)

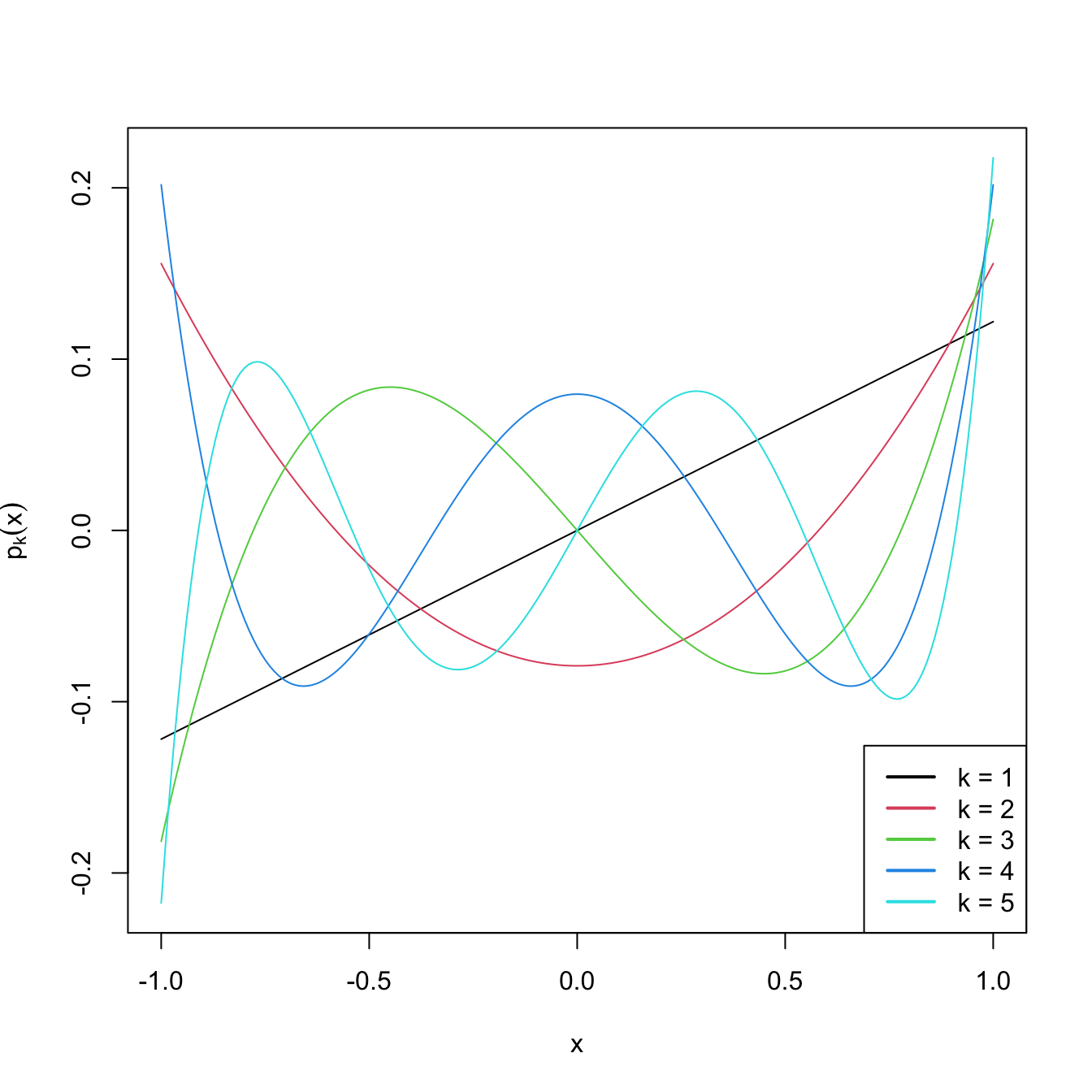

Figure 3.10: Orthogonal polynomials \(p_k(x)\) in \((-1,1),\) up to degree \(k=5.\)

These matrices can now be used as inputs in the predictor side of lm. Let’s see this in an example.

# Data containing speed (mph) and stopping distance (ft) of cars from 1920

data(cars)

plot(cars, xlab = "Speed (mph)", ylab = "Stopping distance (ft)")

# Fit a linear model of dist ~ speed

mod1 <- lm(dist ~ speed, data = cars)

abline(coef = mod1$coefficients, col = 2)

# Quadratic

mod2 <- lm(dist ~ poly(speed, degree = 2), data = cars)

# The fit is not a line, we must look for an alternative approach

d <- seq(0, 25, length.out = 200)

lines(d, predict(mod2, new = data.frame(speed = d)), col = 3)

# Cubic

mod3 <- lm(dist ~ poly(speed, degree = 3), data = cars)

lines(d, predict(mod3, new = data.frame(speed = d)), col = 4)

# 10th order -- overfitting

mod10 <- lm(dist ~ poly(speed, degree = 10), data = cars)

lines(d, predict(mod10, new = data.frame(speed = d)), col = 5)

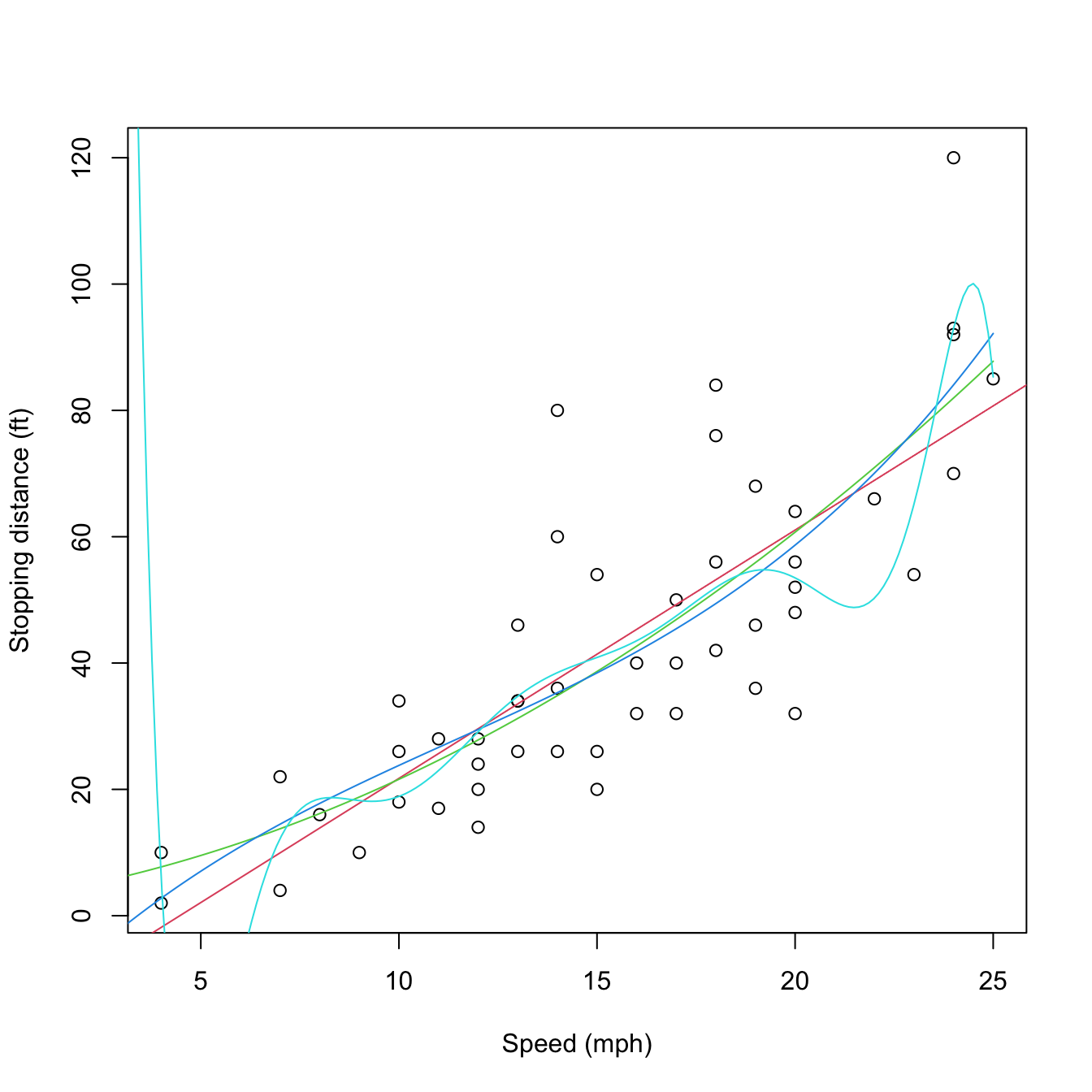

Figure 3.11: Raw and orthogonal polynomial fits of dist ~ speed in the cars dataset.

# BICs -- the linear model is better!

BIC(mod1, mod2, mod3, mod10)

## df BIC

## mod1 3 424.8929

## mod2 4 426.4202

## mod3 5 429.4451

## mod10 12 450.3523

# poly computes by default orthogonal polynomials. These are not

# X^1, X^2, ..., X^p but combinations of them such that the polynomials are

# orthogonal. 'Raw' polynomials are possible with raw = TRUE. They give the

# same fit, but the coefficient estimates are different.

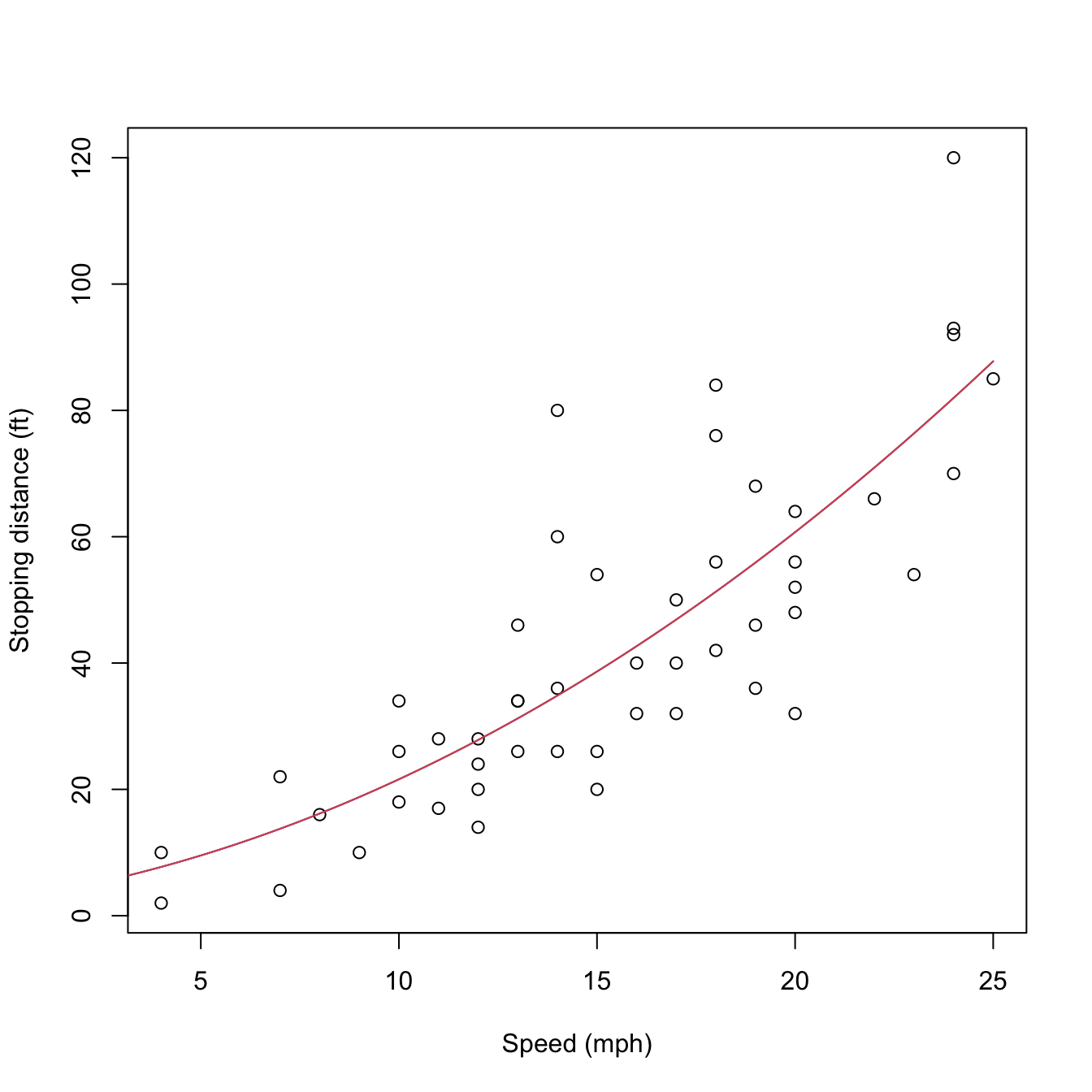

mod2_raw <- lm(dist ~ poly(speed, degree = 2, raw = TRUE), data = cars)

plot(cars, xlab = "Speed (mph)", ylab = "Stopping distance (ft)")

lines(d, predict(mod2, new = data.frame(speed = d)), col = 1)

lines(d, predict(mod2_raw, new = data.frame(speed = d)), col = 2)

Figure 3.12: Raw and orthogonal polynomial fits of dist ~ speed in the cars dataset.

# However: different coefficient estimates, but same R^2. How is this possible?

summary(mod2)

##

## Call:

## lm(formula = dist ~ poly(speed, degree = 2), data = cars)

##

## Residuals:

## Min 1Q Median 3Q Max

## -28.720 -9.184 -3.188 4.628 45.152

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 42.980 2.146 20.026 < 2e-16 ***

## poly(speed, degree = 2)1 145.552 15.176 9.591 1.21e-12 ***

## poly(speed, degree = 2)2 22.996 15.176 1.515 0.136

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 15.18 on 47 degrees of freedom

## Multiple R-squared: 0.6673, Adjusted R-squared: 0.6532

## F-statistic: 47.14 on 2 and 47 DF, p-value: 5.852e-12

summary(mod2_raw)

##

## Call:

## lm(formula = dist ~ poly(speed, degree = 2, raw = TRUE), data = cars)

##

## Residuals:

## Min 1Q Median 3Q Max

## -28.720 -9.184 -3.188 4.628 45.152

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 2.47014 14.81716 0.167 0.868

## poly(speed, degree = 2, raw = TRUE)1 0.91329 2.03422 0.449 0.656

## poly(speed, degree = 2, raw = TRUE)2 0.09996 0.06597 1.515 0.136

##

## Residual standard error: 15.18 on 47 degrees of freedom

## Multiple R-squared: 0.6673, Adjusted R-squared: 0.6532

## F-statistic: 47.14 on 2 and 47 DF, p-value: 5.852e-12

# Because the predictors in mod2Raw are highly related between them, and

# the ones in mod2 are uncorrelated between them!

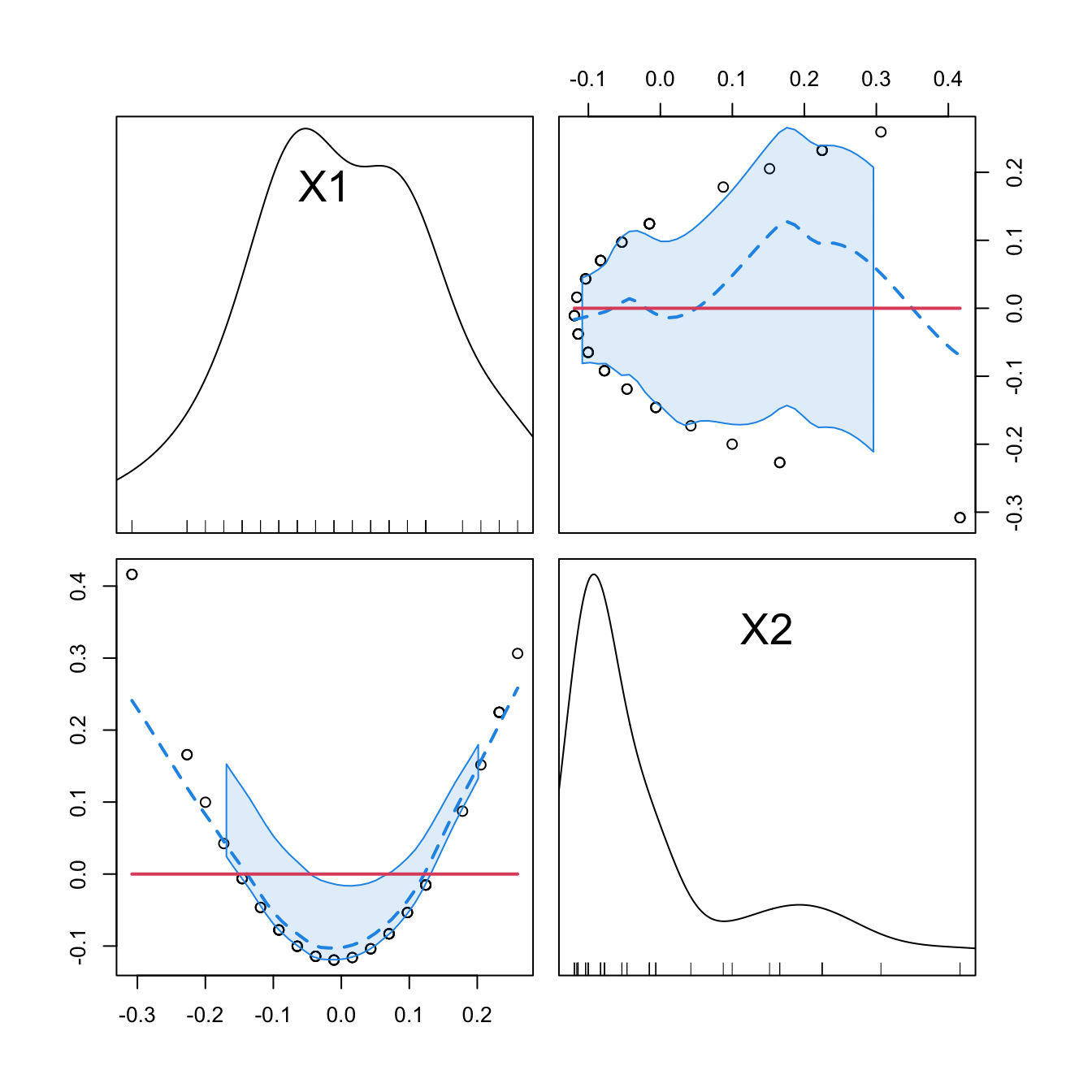

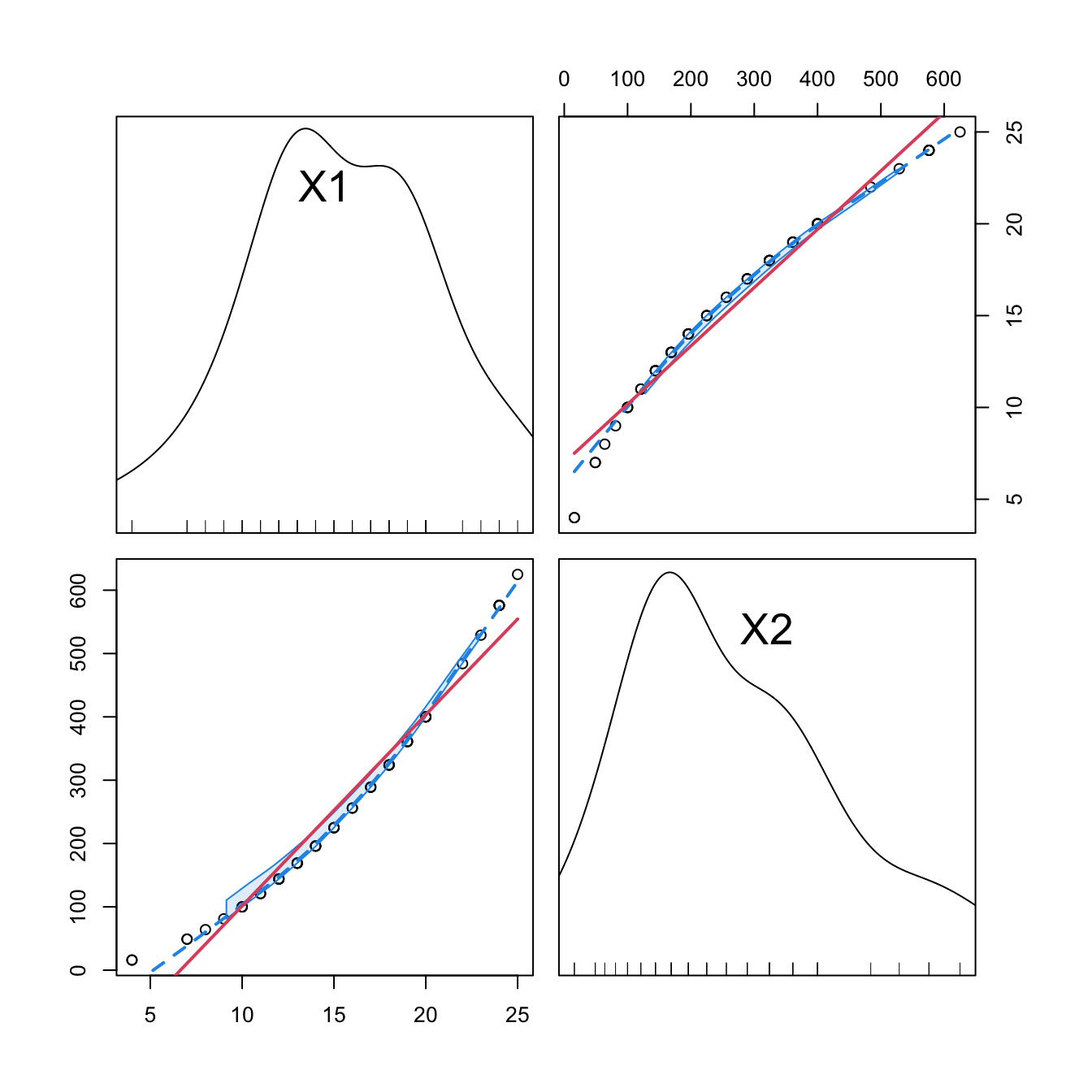

car::scatterplotMatrix(mod2$model[, -1], col = 1, regLine = list(col = 2),

smooth = list(col.smooth = 4, col.spread = 4))

car::scatterplotMatrix(mod2_raw$model[, -1],col = 1, regLine = list(col = 2),

smooth = list(col.smooth = 4, col.spread = 4))

cor(mod2$model[, -1])

## 1 2

## 1 1.000000e+00 2.148001e-18

## 2 2.148001e-18 1.000000e+00

cor(mod2_raw$model[, -1])

## 1 2

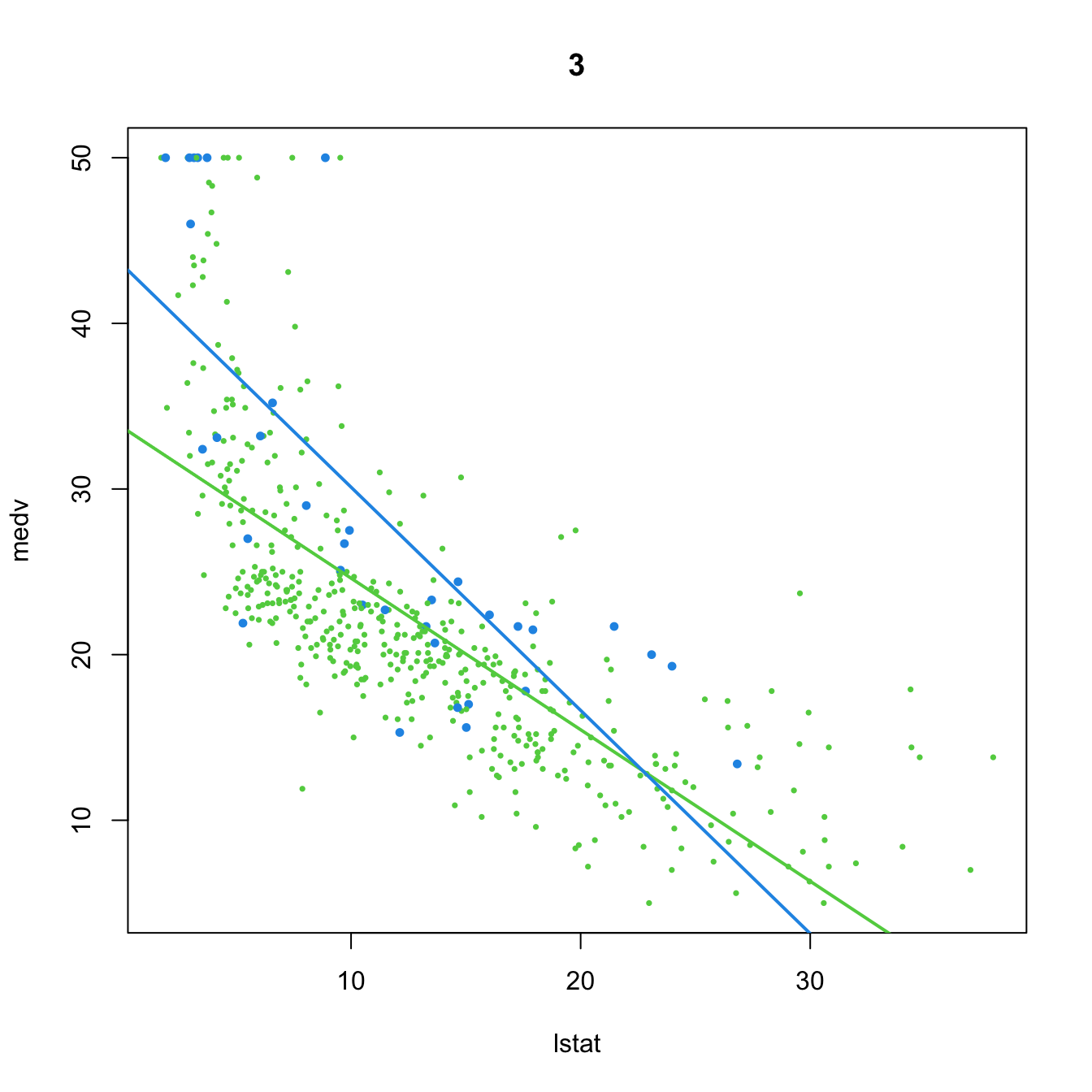

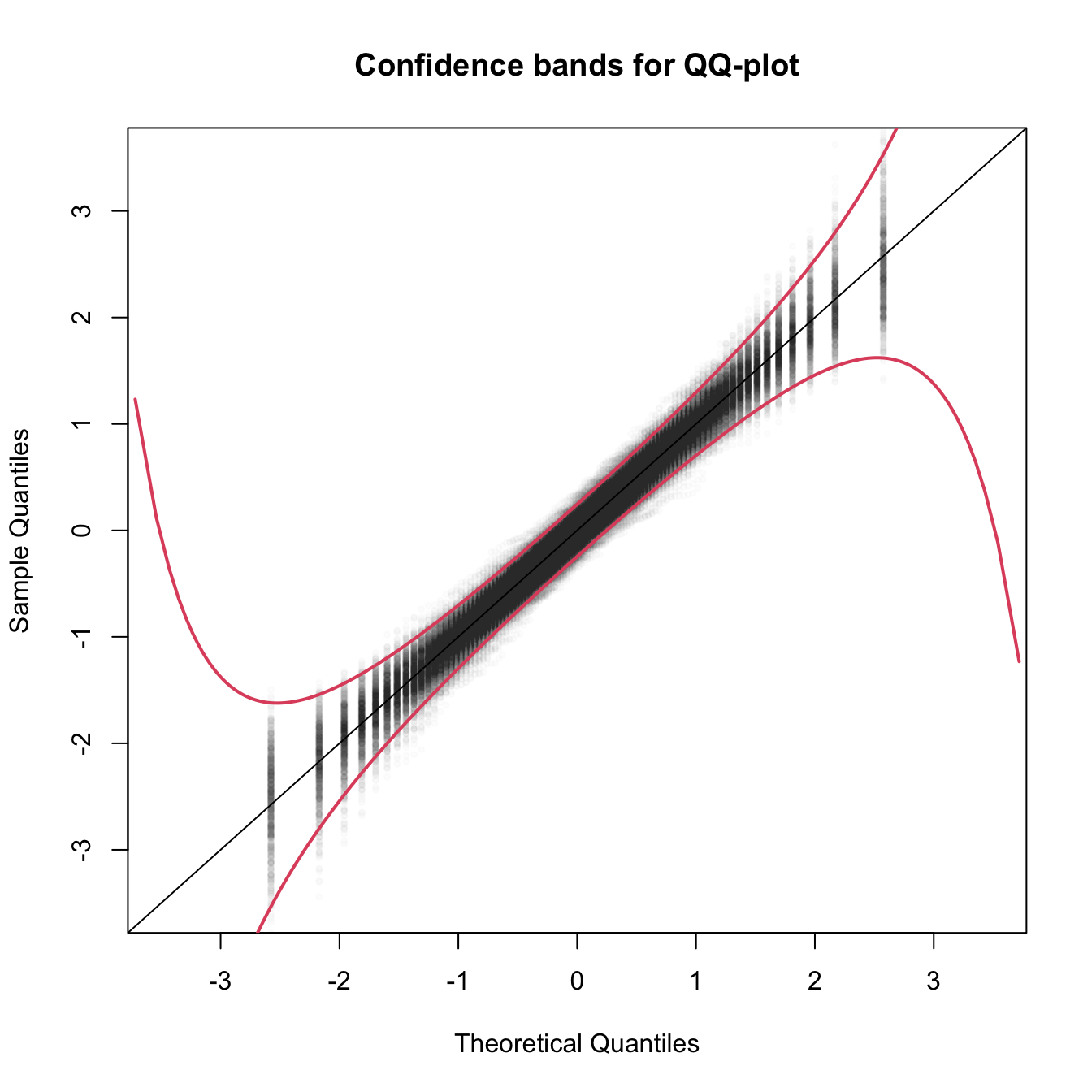

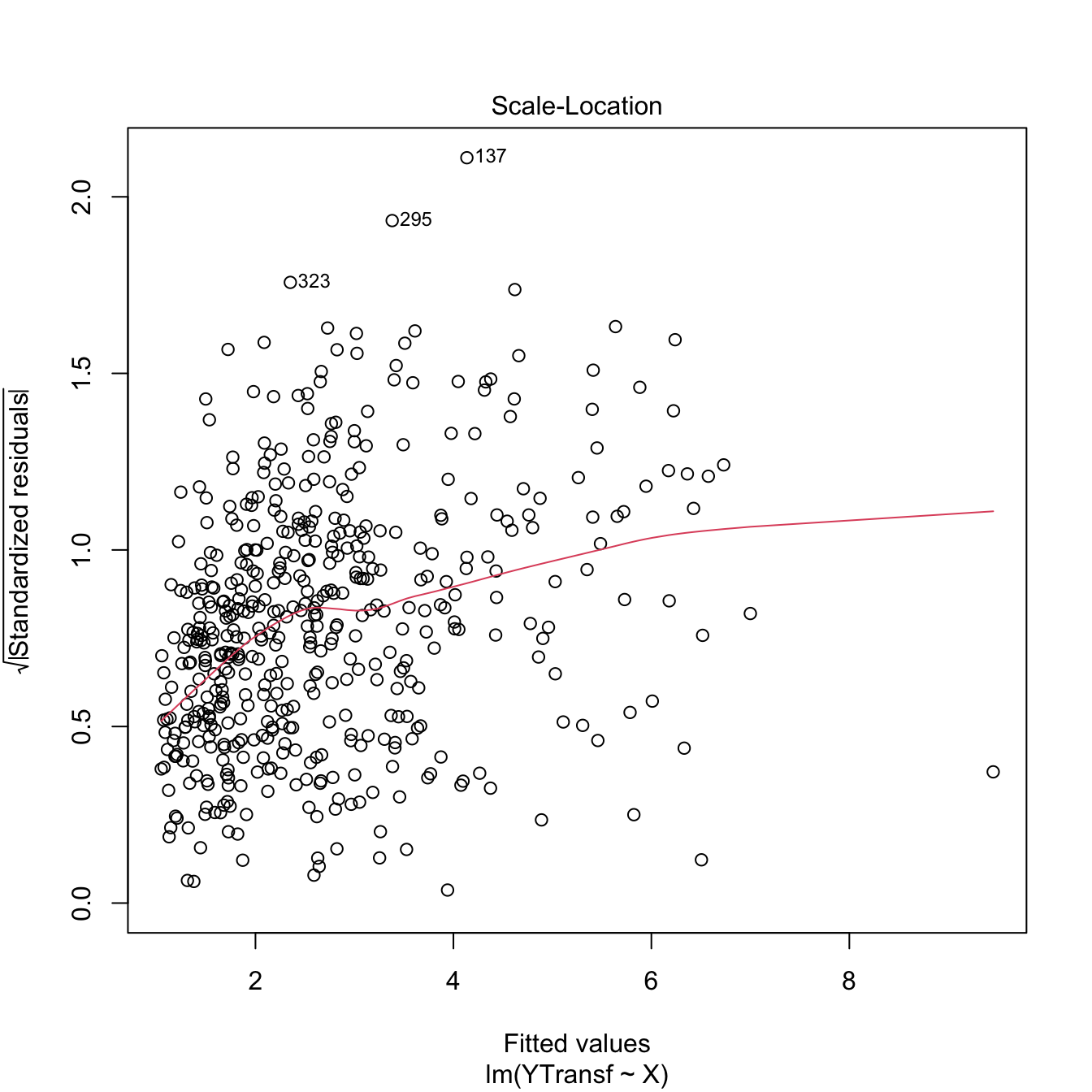

## 1 1.0000000 0.9794765